Choose the editor that survives a real handoff test, not the highest badge score. Compare ProWritingAid, Grammarly, and AutoCrit by checking current trust documents, export behavior, and account-recovery steps before rollout. Then run one draft-to-approval-to-export trial and keep records of policy dates, support escalation paths, and any limits that affect collaboration or formatting.

If you work across borders, choosing an editing tool is not just an app decision. You are deciding whether software like ProWritingAid, Grammarly, or AutoCrit fits cleanly into the rest of your operation. That includes client work, data handling, review disclosures, approvals, and how finished copy gets delivered.

| Lens | Checkpoint | If ignored |
|---|---|---|
| Compliance fit | Whether the tool and your use align with applicable rules; FTC disclosure and GDPR Article 25 can be practical checkpoints | A polished tool can still create compliance risk |
| Risk controls | Current security documentation or an independent controls report | You rely on vague trust-page language instead of evidence |
| Workflow friction | Effectiveness, efficiency, and satisfaction in the actual writing path | Time saved on edits gets lost to copy-paste, version confusion, or approval cleanup |
| Decision confidence | Whether claims match your use case, evidence, and tradeoffs | Feature claims drive the choice more than grounded fit |

This framework is for cross-border freelancers, consultants, and small teams that need more than feature screenshots. The goal is simple. You should be able to tell which writing or editing tools deserve a place in your stack, what you need to verify before rollout, and where a polished tool can still create operational risk. Use these four audit lenses throughout the article:

Compliance fit: Check whether the tool, and your use of it, align with the rules that apply to your business context. If your review or recommendation includes a material connection people would not expect, that disclosure can matter under the FTC endorsement guides revised effective July 26, 2023. If you handle client or personal data in EU-facing work, GDPR Article 25 can be a practical checkpoint for data protection by design and by default.

Risk controls: Look for safeguards that protect confidentiality, integrity, and availability. A real checkpoint here is whether the vendor provides current security documentation or an independent controls report, rather than vague trust-page language.

Workflow friction: Judge the tool by effectiveness, efficiency, and satisfaction in your actual writing path. A common failure mode is saving time on edits but adding extra time through copy-paste, version confusion, or approval cleanup.

Decision confidence: Good reviews help you choose between alternatives, not just admire features. That means matching tool claims to your use case, evidence, and tradeoffs.

We will audit the stack in this order: compliance fit, risk controls, workflow friction, and decision confidence.

Generic rankings optimize for fast, broad purchase decisions; you need a risk-aware decision for cross-border work where one editing tool can affect confidentiality, review integrity, and operational reliability.

| Tool | Team or sharing note | Environment or export note |
|---|---|---|
| Grammarly | Team analytics dashboards are available | Style guide is not supported on mobile; uploaded .docx can be downloaded as .docx |
| ProWritingAid | Live cursors support co-editing, but collaboration is tied to Web Editor | Integrates with Word, Google Docs, Scrivener, macOS, Windows, and Chrome; exports may not retain original formatting |
| AutoCrit | Supports sharing, but only ONE user can have edit access to a file at a time | Export/download options are documented; fits online self-editing for long-form drafts |

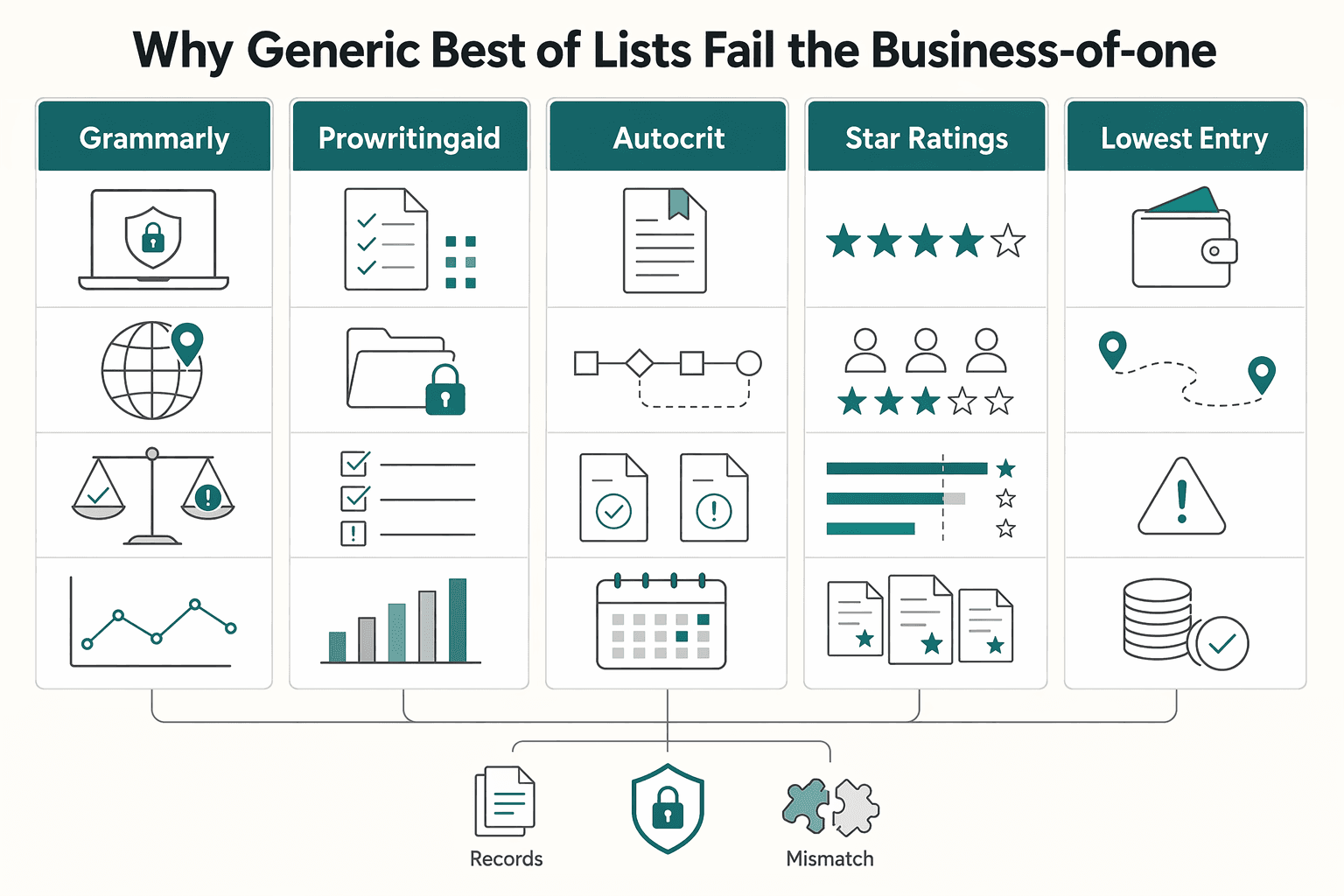

Mainstream lists are useful for discovery, but their scoring logic is usually too shallow for this context. Capterra's badge methodology includes ease-of-use and value recognitions, with eligibility tied to a threshold of 10 published review rating scores. G2 says its rankings use a proprietary algorithm based on user reviews plus external online data. Those signals can help you shortlist tools, but they do not replace workflow, risk, and compliance checks. If a review has a material connection to a vendor, FTC guidance says that relationship should be disclosed clearly because it can change how much weight you give the endorsement.

| Typical "Best Of" Criteria | Business-of-One Decision Criteria |
|---|---|
| Star ratings and badge wins | Evidence pack: privacy terms, security documentation, and current controls evidence such as SOC 2 Type 2 |
| Lowest entry price | Total operating cost, including export cleanup, rework, and approval friction |
| Feature count | Fit with your real writing workflow and client data-handling obligations |
| Popularity | Jurisdiction fit, including GDPR-era data handling and transfer checks |
| Easy onboarding | Exit risk: can you retrieve data in a structured, commonly used and machine-readable format? |

Where generic lists usually fail:

Most rankings target general convenience, not cross-border operators. For Grammarly, ProWritingAid, and AutoCrit, badge position matters less than fit for client-facing delivery, review cycles, and privacy expectations.

Surface scoring misses trust checks. If sensitive text is involved, verify concrete statements and controls. Grammarly states it does not sell or monetize uploaded content, and ProWritingAid states it does not use user writing to train its AI models.

A highly rated tool can still create weekly friction. ProWritingAid highlights broad integrations (Word, Google Docs, Scrivener, macOS, Windows, Chrome), but details matter: it also warns exports may not retain original formatting. AutoCrit supports sharing, but only ONE user can have edit access to a file at a time.

GDPR has applied since 25 May 2018, and cross-border transfers still need a lawful path (such as adequacy or other safeguards). Generic rankings rarely test where text is processed, how transfer mechanisms are handled, or whether disclosure obligations are met.

Carry these checks into the next audit sections:

Treat your editing tool as part of your compliance record chain, not just a writing app. It does not need to calculate taxes or file forms, but it must preserve the facts, labels, and exports those obligations rely on. If it fails the four tests below, treat it as a stack risk.

In product reviews, this is your filter: can the tool still hold up when your draft becomes onboarding data, invoicing support, retained records, and an export package for advisers or clients?

| Test | Minimum acceptable | Preferred | Red-flag |
|---|---|---|---|
| Residency evidence | Date-stamped records and exportable project history | Location/context fields plus retained versions for adviser review | Workspace-only access, weak history, unusable exports |
| Invoice handoff | Clean export of client/entity details and billing references | Structured fields that carry VAT-relevant details through handoff | Copy-paste workflow, billing facts trapped in comments/email |
| Cross-border tax forms | Space to store payer requests and submitted form copies | Request tracking, renewal reminders, exportable onboarding packet | No retention, no audit trail, unclear form handling |
| Reporting and retention | Downloadable files in common formats | Machine-readable export, clear retention/deletion path | Lock-in, unclear deletion process, weak archive support |

Residency evidence: Pass this test only if your tool preserves when the work happened and its surrounding context, not just the final text. Residency rules are jurisdiction-specific, and the frameworks differ (for example, IRS physical-presence analysis, New York statutory residency logic, and California temporary/transitory analysis). Required capability: exportable history with dates and enough context to support adviser review; the current threshold is pending legal or tax adviser verification. Warning signs: no version history, no usable export, no way to connect work to a time period or engagement. Verify before adopting or renewing: export a full project and confirm the package keeps date context and a usable record history.
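The export check can be made routine. Below is a minimal Python sketch, assuming you can map your editor's exported history onto a hypothetical `HistoryEntry` record with a version ID, a save date, and an engagement label; none of these names come from Grammarly, ProWritingAid, or AutoCrit. It only flags missing dates and missing context; it does not apply any residency test.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class HistoryEntry:
    """One row of an exported version history (hypothetical shape)."""
    version_id: str
    saved_on: Optional[date]  # None when the export drops the timestamp
    engagement: str           # client/project label; "" when missing


def residency_export_gaps(entries: List[HistoryEntry]) -> List[str]:
    """List the problems that would weaken adviser review of this export."""
    if not entries:
        return ["export contains no version history"]
    problems = []
    for entry in entries:
        if entry.saved_on is None:
            problems.append(f"{entry.version_id}: missing date")
        if not entry.engagement.strip():
            problems.append(f"{entry.version_id}: missing engagement context")
    return problems


history = [
    HistoryEntry("v1", date(2024, 3, 1), "Client A / report"),
    HistoryEntry("v2", None, ""),
]
print(residency_export_gaps(history))
# ['v2: missing date', 'v2: missing engagement context']
```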

Invoice handoff: Pass this test only if approved draft data moves into invoicing without rework. For EU B2B services, invoicing is generally compulsory, and reverse-charge cases require the invoice wording "Reverse charge." Required capability: clean handoff of the client's legal name, business location, VAT-relevant details, service description, and delivery date. Warning signs: key billing facts live only in comments, email, or manual notes. Verify before adopting or renewing: run a real export-to-invoice handoff and confirm the required wording and fields can be reproduced without guesswork.
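A quick way to run the handoff test is to check the exported record against the fields listed above. This sketch assumes hypothetical field names (`vat_id` stands in for "VAT-relevant details") and a free-text `notes` field for the reverse-charge wording; it checks presence only, not whether the values are legally correct.

```python
from typing import Dict, List

# Hypothetical field names; map them to whatever your editor export and
# invoicing tool actually call these values.
REQUIRED_FIELDS = (
    "client_legal_name",
    "business_location",
    "vat_id",               # stands in for "VAT-relevant details"
    "service_description",
    "delivery_date",
)


def invoice_handoff_gaps(invoice: Dict[str, str], reverse_charge: bool) -> List[str]:
    """Return the fields (and wording) missing from an exported invoice record."""
    gaps = [name for name in REQUIRED_FIELDS if not invoice.get(name, "").strip()]
    if reverse_charge and "Reverse charge" not in invoice.get("notes", ""):
        gaps.append('notes: "Reverse charge" wording')
    return gaps


record = {
    "client_legal_name": "Example Client GmbH",
    "business_location": "DE",
    "vat_id": "",
    "service_description": "Manuscript editing, chapters 1-4",
    "delivery_date": "2024-05-31",
    "notes": "",
}
print(invoice_handoff_gaps(record, reverse_charge=True))
# ['vat_id', 'notes: "Reverse charge" wording']
```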

Cross-border tax forms: Pass this test only if you can prove request-to-submission traceability for payer-requested forms such as Form W-8BEN and Form W-8BEN-E. Required capability: retained request records, submitted copies, and a link to the client/work record. Withholding consequences can apply if requested forms are not provided; the current threshold is pending legal or tax adviser verification. Warning signs: missing request logs, missing submitted PDFs, no renewal tracking. Verify before adopting or renewing: build a test onboarding packet and confirm you can export it intact.
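Traceability reduces to one question: does every request have a retained submitted copy linked to it? The sketch below assumes a hypothetical `FormRequest` record and a lookup of submitted PDFs keyed by client and form name; renewal tracking and withholding rules are out of scope.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class FormRequest:
    """A payer's request for a tax form (hypothetical record shape)."""
    client: str
    form: str          # e.g. "W-8BEN" (individuals) or "W-8BEN-E" (entities)
    requested_on: str  # ISO date of the request email


def untraced_requests(
    requests: List[FormRequest],
    submitted: Dict[Tuple[str, str], str],
) -> List[FormRequest]:
    """Requests with no retained submitted copy linked by (client, form)."""
    return [r for r in requests if (r.client, r.form) not in submitted]


requests = [
    FormRequest("Acme Ltd", "W-8BEN", "2024-01-10"),
    FormRequest("Beta Inc", "W-8BEN-E", "2024-02-01"),
]
submitted = {("Acme Ltd", "W-8BEN"): "records/acme/w-8ben-signed.pdf"}
for gap in untraced_requests(requests, submitted):
    print(f"No submitted copy on file: {gap.client} {gap.form} (requested {gap.requested_on})")
```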

Reporting and retention: Pass this test only if records can be retained and exported in formats you can use outside the platform. For foreign-account reporting, FBAR is filed on FinCEN Form 114, not with the IRS, and the current record-retention window is pending legal or tax adviser verification. Under GDPR Article 20 logic, portability means a structured, commonly used, machine-readable format. Required capability: practical export for accountant, client, or tool-exit use, plus clear retention/deletion handling. Warning signs: on-screen access without reliable archive export, or an unclear deletion/portability path. Verify before adopting or renewing: test the full round trip. Grammarly documents create/upload/download workflows, ProWritingAid documents .docx/.txt exports, and AutoCrit documents export/download options.
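For the round-trip test, a shallow automated check can catch a broken export before you open it. This sketch relies on the fact that a .docx file is a ZIP package; checking for `[Content_Types].xml` and `word/document.xml` is an assumption based on how Word normally lays out the package, and it says nothing about whether formatting survived.

```python
import zipfile
from pathlib import Path


def looks_like_docx(path: Path) -> bool:
    """Shallow structural check on an exported .docx file.

    A .docx is a ZIP package. Word normally stores [Content_Types].xml at the
    root and the main text in word/document.xml; this checks only that those
    parts exist, not that formatting or comments survived the export.
    """
    if not zipfile.is_zipfile(path):
        return False
    with zipfile.ZipFile(path) as package:
        names = set(package.namelist())
    return "[Content_Types].xml" in names and "word/document.xml" in names


def looks_like_utf8_text(path: Path) -> bool:
    """True if a .txt export decodes cleanly as UTF-8."""
    try:
        path.read_text(encoding="utf-8")
    except UnicodeDecodeError:
        return False
    return True
```

Run both checks over every file in an export folder before you archive it or hand it to an accountant; a failure tells you to re-export, not that the content is wrong.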

After a tool passes your compliance check, run a stress test before you buy or renew. Trust means you can recover access, verify data-handling claims, and reach real escalation when work is blocked.

Stability is not a feature list. It is what happens on a bad day: login trouble, policy ambiguity, stale docs, or support gaps near a deadline.

| Claim | What to verify | Risk if false |
|---|---|---|
| "Your data is secure" | Independent security attestation status pending vendor verification, dated policy docs, and clear control scope | You are relying on marketing instead of evidence |
| "We align with privacy rules" | Current privacy terms, scope boundaries, and handling expectations for sensitive data | You may use a tool that conflicts with client obligations |
| "You will always have access" | Export path, recovery steps, and continuity options documented before purchase | Account issues can stop delivery work |
| "Support is here when needed" | Published support windows, escalation path, and blocked-work handling process | You lose time with no reliable human fallback |

A tool fails this test if you cannot reliably reach your drafts during a login, billing, or role-permission issue. What to verify: exports work without special intervention, recovery steps are published, and there is a fallback when the primary login method fails. Evidence to request: current help docs, export instructions, and written recovery/escalation handling; confirm document date/version. No-go red flags: recovery depends fully on support, exports are partial/unclear, or the vendor cannot point to current policy material.

Treat "secure" as unproven until you see documented controls and scope. What to verify: independent assurance status pending vendor verification, how data handling is described, and whether control scope is reviewable. The HIPAA Security Rule is a useful rigor check because it specifies administrative, physical, and technical safeguards. Evidence to request: current assurance summary (if available), privacy terms, and scope documentation for data categories. No-go red flags: broad security claims without evidence, undated privacy terms, or regulation-alignment statements with no scope boundaries. If your workflow involves ePHI or similar sensitive data, do not assume suitability by default.

You need evidence the vendor manages compliance risk early, not only after failures. What to verify: policy currency, documentation continuity, and change communication quality. FDIC definitions illustrate the maturity gap: One (1) reflects strong compliance management, while Three (3) reflects deficiency; use that as an evaluation mindset, not a direct SaaS certification. Evidence to request: dated policy pages, trust/security docs, and product notices showing how material changes are announced. No-go red flags: unexplained policy shifts, weak archive/export guidance, or no durable documentation you can retain locally.

Support quality is measured when publishing, export, or access fails, not when routine questions are easy. What to verify: available channels, published windows, and clear handling for business-critical tickets. Public service windows can be constrained (for example, noon to 2 p.m. on Tuesdays and Wednesdays versus 8:30 a.m. to 5 p.m. on Monday, Thursday, and Friday), so schedule-fit matters. Evidence to request: current support-hours page, escalation instructions, and blocked-account/process guidance. No-go red flags: hidden hours, no escalation path, or self-serve-only support for access/export blockers.
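Schedule fit can be checked against your real deadlines. The sketch below encodes the example windows from the paragraph above as a hypothetical `SUPPORT_WINDOWS` table in local time; swap in the vendor's current published hours and time zone before relying on it.

```python
from datetime import datetime, time
from typing import Dict, Tuple

# Example windows from the paragraph above (weekday(): Monday == 0).
# Replace with the vendor's current published hours and time zone.
SUPPORT_WINDOWS: Dict[int, Tuple[time, time]] = {
    0: (time(8, 30), time(17, 0)),  # Monday
    1: (time(12, 0), time(14, 0)),  # Tuesday
    2: (time(12, 0), time(14, 0)),  # Wednesday
    3: (time(8, 30), time(17, 0)),  # Thursday
    4: (time(8, 30), time(17, 0)),  # Friday
}


def support_open(at: datetime, windows: Dict[int, Tuple[time, time]] = SUPPORT_WINDOWS) -> bool:
    """True if `at` (naive local time) falls inside a published support window."""
    window = windows.get(at.weekday())
    if window is None:  # no published hours that day
        return False
    start, end = window
    return start <= at.time() < end


# A Tuesday 4 p.m. blocker gets no same-day help under these windows.
print(support_open(datetime(2024, 6, 4, 16, 0)))  # False
```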

A tool passes this audit only if it reduces total workflow effort, not just where the effort happens. Use these four checks to measure real operating cost before you renew or switch.

| Check | Definition | How to test |
|---|---|---|
| Admin Tax | Recurring time loss from task-switching, reorientation, duplicate checks, and revision cleanup | (minutes lost per revision handoff + export/reformat time + duplicate tool-switching time) × monthly occurrences × current operational benchmark pending verification |
| Withdrawal Penalty | Practical cost of replacing a tool after it is embedded in your process | migration hours + template rebuild time + reviewer retraining + current fee range pending vendor verification |
| Single Source of Truth | One agreed place where everyone works from the same current version | Run a live handoff test and confirm review intent survives each step |
| Professional Signal | Visible proof that your editing process is consistent, controlled, and client-safe | Verify rule enforcement, review behavior, and export behavior in the exact environments your team and clients use |

Admin Tax is the recurring time loss from task-switching, reorientation, duplicate checks, and revision cleanup. Switching overhead is a documented risk, and observed teams have shown heavy app-toggling loads, so treat tool-hopping as a measurable cost, not a minor annoyance. Use this method: (minutes lost per revision handoff + export/reformat time + duplicate tool-switching time) × monthly occurrences × current operational benchmark pending verification. To verify, time one real document from first draft to approved final, including every jump between editor, comments, email/chat, and export.
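The Admin Tax method translates directly into arithmetic. In this sketch, `benchmark_per_minute` is an assumption you supply (for instance, your own cost of a working minute), because the benchmark itself is still pending verification; the inputs in the usage line are illustrative only.

```python
def admin_tax(
    handoff_minutes: float,
    export_reformat_minutes: float,
    switching_minutes: float,
    monthly_occurrences: int,
    benchmark_per_minute: float,
) -> float:
    """(handoff + export/reformat + switching minutes) x monthly occurrences x benchmark.

    benchmark_per_minute is an assumption you supply (for example, your own
    cost of one working minute); the article leaves this benchmark unverified.
    """
    minutes_per_handoff = handoff_minutes + export_reformat_minutes + switching_minutes
    return minutes_per_handoff * monthly_occurrences * benchmark_per_minute


# Illustrative inputs only: 10 + 15 + 5 minutes per handoff, 8 handoffs a month,
# and a hypothetical benchmark of 1.5 currency units per minute.
print(admin_tax(10, 15, 5, 8, 1.5))  # 360.0
```

Setting the benchmark to 1 returns raw minutes per month, which is often the easier number to compare across tools.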

Withdrawal Penalty is the practical cost of replacing a tool after it is embedded in your process. Include process disruption, retraining, file/template cleanup, and collaborator adjustment costs. Use this method: migration hours + template rebuild time + reviewer retraining + current fee range pending vendor verification. If a cheaper subscription creates a month of revision instability, it is not cheaper in practice.
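The same approach works for the Withdrawal Penalty. The original formula adds hours and fees together, so this sketch assumes a hypothetical `hourly_rate` to convert hours into money first, and compares the result with one year of subscription savings; both the rate and the one-year horizon are assumptions.

```python
def withdrawal_penalty(
    migration_hours: float,
    template_rebuild_hours: float,
    retraining_hours: float,
    hourly_rate: float,
    fees: float,
) -> float:
    """Hours-based switching effort converted to money, plus one-off fees."""
    hours = migration_hours + template_rebuild_hours + retraining_hours
    return hours * hourly_rate + fees


def cheaper_in_practice(annual_subscription_saving: float, penalty: float) -> bool:
    """Treat a switch as worthwhile only if one year's saving exceeds the penalty."""
    return annual_subscription_saving > penalty


penalty = withdrawal_penalty(6, 4, 2, hourly_rate=80, fees=120)
print(penalty)                            # 1080
print(cheaper_in_practice(600, penalty))  # False
```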

Single Source of Truth means one agreed place where everyone works from the same current version. If your workflow creates multiple "near-final" copies, friction and error risk rise quickly. Run a live handoff test: move one document through your normal review path and confirm review intent survives each step. For example, Google states that tracked changes from Microsoft Office become suggestions in Google Docs; if your path breaks that intent, count it as real friction.
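When you suspect several "near-final" copies, a content hash makes the divergence visible. This sketch assumes you have already extracted plain text from each copy (the file names are hypothetical); identical hashes only mean identical normalized text, not identical formatting or comments.

```python
import hashlib
from typing import Dict, List


def group_by_content(copies: Dict[str, str]) -> Dict[str, List[str]]:
    """Group copy names by a SHA-256 digest of whitespace-normalized text."""
    groups: Dict[str, List[str]] = {}
    for name, text in copies.items():
        normalized = " ".join(text.split())  # ignore spacing-only differences
        digest = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        groups.setdefault(digest, []).append(name)
    return groups


copies = {
    "final.docx": "The launch is on Monday.",
    "final (1).docx": "The launch is  on Monday.",
    "final-client-edits.docx": "The launch is on Tuesday.",
}
versions = group_by_content(copies)
if len(versions) > 1:
    print(f"{len(versions)} competing versions:", list(versions.values()))
```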

Professional Signal is visible proof that your editing process is consistent, controlled, and client-safe. Client-facing quality depends on where controls actually work. Grammarly states SOC 2 Type 2 validation for key trust criteria, offers style-guide guidance, and notes that its style guide is not supported on mobile. Your check is simple: verify rule enforcement, review behavior, and export behavior in the exact environments your team and clients use.

| Tool | Manual effort required | Hidden costs | Reporting visibility | Client-facing experience |
|---|---|---|---|---|
| Grammarly | Lower in desktop/browser workflows; verify mobile gaps for your process | Current fee range pending vendor verification; recheck cost impact when mobile style-guide coverage matters | Team analytics dashboards are available | Strong consistency signal where style-guide controls apply; uploaded .docx can be downloaded as .docx |
| ProWritingAid | Lower when your stack already matches supported integrations; higher when collaboration must happen outside Web Editor | Free-plan caps can slow long revision cycles (500-word report limit; 2 runs per report) | Verify before buying | Live cursors support co-editing, but collaboration is tied to Web Editor |
| AutoCrit | Fits online self-editing for long-form drafts; verify handoff/export burden if others edit elsewhere | Current fee range pending vendor verification; switching and reformat overhead may sit outside the app | Verify before buying | Strong as an author-side editing stage; less suited as a shared review hub unless your team already works there |

Use this pass/fail checklist before you renew:

Stop asking which app wins generic reviews. Before you adopt anything new, run it through one filter: does it fit your compliance duties, protect your cash position, reduce admin friction, and still make sense on total cost?

Your stack has to support the facts you may need to prove, not just help you send invoices faster. If your situation touches U.S. tax records, that can mean tracking the FEIE physical presence test at 330 full days, watching whether foreign accounts ever cross the $10,000 FBAR trigger, and using the right IRS form because W-8BEN is for individuals and W-8BEN-E is for entities. If you bill EU businesses, verify that your invoice can include the reverse-charge reference where required, then confirm the exact wording for [jurisdiction-specific requirement to verify].
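The three checks in the paragraph above are simple enough to sketch. This Python example assumes you have already reduced your travel calendar to qualifying full days in a foreign country and converted each account's maximum value to U.S. dollars, and it only encodes the individual-versus-entity split for W-8 forms. It is a record-keeping aid, not a determination of eligibility or filing obligations.

```python
from bisect import bisect_left
from datetime import date, timedelta
from typing import Iterable, List

FEIE_MIN_FULL_DAYS = 330
FBAR_AGGREGATE_THRESHOLD_USD = 10_000


def _same_date_next_year(d: date) -> date:
    try:
        return d.replace(year=d.year + 1)
    except ValueError:  # Feb 29 in a year followed by a non-leap year
        return date(d.year + 1, 3, 1)


def max_full_days_in_12_months(full_days_abroad: Iterable[date]) -> int:
    """Largest count of listed days inside any window [start, start + 12 months).

    The best window can always be shifted to begin on a listed day, so only
    those start dates are checked.
    """
    days: List[date] = sorted(set(full_days_abroad))
    best = 0
    for i, start in enumerate(days):
        end = bisect_left(days, _same_date_next_year(start))  # first index outside window
        best = max(best, end - i)
    return best


def fbar_threshold_crossed(max_values_usd: Iterable[float]) -> bool:
    """True if the sum of each foreign account's maximum value exceeds $10,000."""
    return sum(max_values_usd) > FBAR_AGGREGATE_THRESHOLD_USD


def w8_form_for(payee_is_individual: bool) -> str:
    """W-8BEN for individuals, W-8BEN-E for entities."""
    return "W-8BEN" if payee_is_individual else "W-8BEN-E"


trip = [date(2024, 1, 1) + timedelta(days=k) for k in range(330)]
print(max_full_days_in_12_months(trip) >= FEIE_MIN_FULL_DAYS)  # True
print(fbar_threshold_crossed([6_000, 4_500]))                 # True
print(w8_form_for(payee_is_individual=True))                  # W-8BEN
```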

A clean interface is not the same as balance protection. Check whether funds are actually held at an insured institution, how the $250,000 FDIC limit applies, and whether the provider can show current control assurance such as SOC 2 coverage for security, availability, and processing integrity. Red flag: treating SOC 2 as proof your money can never be frozen.
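To see how the limit applies across several balances, the sketch below sums balances per bank and ownership category before comparing them with $250,000, following the standard coverage rule of $250,000 per depositor, per insured bank, for each account ownership category. The bank names and category labels are hypothetical, and it assumes the money actually sits at an insured bank in your name.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

FDIC_STANDARD_LIMIT = 250_000  # per depositor, per insured bank, per ownership category


def uninsured_exposure(accounts: Iterable[Tuple[str, str, float]]) -> float:
    """Amount above the standard limit, after summing by (bank, ownership category).

    Assumes every balance is held in your name at an FDIC-insured bank; money
    held by a non-bank provider may not be covered at all.
    """
    totals: Dict[Tuple[str, str], float] = defaultdict(float)
    for bank, category, balance in accounts:
        totals[(bank, category)] += balance
    return sum(max(0.0, total - FDIC_STANDARD_LIMIT) for total in totals.values())


accounts = [
    ("Bank A", "single", 200_000),
    ("Bank A", "single", 100_000),  # same bank and category: counted together
    ("Bank B", "single", 50_000),
]
print(uninsured_exposure(accounts))  # 50000.0
```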

The right setup creates one evidence trail from contract to payment to bookkeeping. You want current terms saved, one successful test payment on record, and an evidence pack for key compliance records: original documents, storage location, capture date, and edits.

Price is only one line item. If a provider handles cross-border transfers, verify whether it discloses the exact exchange rate and amount received before payment where remittance rules apply, then count the support burden and rework cost too.
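Once a provider discloses a rate and a fee, you can recompute the quoted amount received and see how far the rate sits from a reference. This sketch assumes the fee is deducted before conversion (providers differ) and that you pick the reference rate yourself; all figures in the usage lines are illustrative.

```python
from decimal import ROUND_HALF_UP, Decimal

CENT = Decimal("0.01")


def amount_received(send_amount: Decimal, fee: Decimal, offered_rate: Decimal) -> Decimal:
    """Recipient amount, assuming the fee is deducted before conversion."""
    return ((send_amount - fee) * offered_rate).quantize(CENT, rounding=ROUND_HALF_UP)


def fx_markup_percent(offered_rate: Decimal, reference_rate: Decimal) -> Decimal:
    """How far the offered rate sits below a reference rate you chose, in percent."""
    markup = (reference_rate - offered_rate) / reference_rate * 100
    return markup.quantize(CENT, rounding=ROUND_HALF_UP)


quote = amount_received(Decimal("1000"), Decimal("5"), Decimal("0.92"))
print(quote)                                                # 915.40
print(fx_markup_percent(Decimal("0.92"), Decimal("0.93")))  # 1.08
```

If your recomputed figure does not match the disclosed amount received, ask the provider which fees or rounding rules you are missing before routing client money through it.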

This week, audit your current stack, flag the weakest link, and fix one issue at the operating level:

Choose a payment path you can explain end to end, including who can move money, who can pause it, and who helps if something goes wrong. This guide does not set hard rules for specific cross-border rails in 2026, so decide by verifying the provider's current liability terms, restriction terms, and human support path. Then save the latest terms, your onboarding approval, and one successful test payment record before routing a large client payment through it.

Start with agent access. The January 2026 CBA paper describes agentic payment tools as AI tools that can perform payment-related tasks without direct human instruction. It says existing consumer protection rules have uncertain application there. Your check is practical: read the current dispute, credential sharing, and account restriction language, then confirm whether you can disable agent permissions and require human approval for each payment action.

The best stack preserves one source of truth for both money and claims. If you collect reviews or reuse them in marketing, the FTC distinguishes a consumer review from a testimonial. Its Consumer Reviews and Testimonials Rule has been in effect since October 21, 2024, and knowing violations can lead courts to impose civil penalties. Keep an evidence pack for every reused quote: original text, where it appeared, capture date, and any edits made before publication, because FTC staff guidance itself says it does not create a safe harbor.
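An evidence pack is easiest to audit when every reused quote lives in the same record shape. The sketch below uses a hypothetical `QuoteEvidence` dataclass holding the four items named above and flags any that are missing, including edits made without a note.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class QuoteEvidence:
    """One reused review or testimonial (hypothetical record shape)."""
    original_text: str
    source_location: str            # where it first appeared, e.g. a review URL
    capture_date: Optional[date]
    published_text: str
    edit_notes: List[str] = field(default_factory=list)

    def gaps(self) -> List[str]:
        """Missing pieces of the evidence pack."""
        missing = []
        if not self.original_text.strip():
            missing.append("original_text")
        if not self.source_location.strip():
            missing.append("source_location")
        if self.capture_date is None:
            missing.append("capture_date")
        if self.published_text != self.original_text and not self.edit_notes:
            missing.append("edit_notes (published text differs from original)")
        return missing


quote = QuoteEvidence(
    original_text="Great editor, slow support.",
    source_location="https://example.com/reviews/123",
    capture_date=None,
    published_text="Great editor.",
)
print(quote.gaps())
# ['capture_date', 'edit_notes (published text differs from original)']
```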

The hidden cost is often not the headline fee. It can include loss of control, extra compliance work, and weak support when money or reputation gets stuck. Exact tradeoffs vary by provider and contract terms.

| Platform model | Control of client relationship | Total workflow fit | Compliance burden | Support quality to verify |
|---|---|---|---|---|
| Direct payment provider | Higher | Often needs separate invoicing and bookkeeping tools | You own document retention and current tax checks | Verify live escalation path and restriction handling |
| Intermediary platform | Medium | Can centralize contracts and payments | Shared burden, but confirm what the platform still pushes back to you | Verify who owns the dispute and payout timeline |
| Marketplace | Lower | Good for acquisition, weaker as your long-term operating hub | Platform terms may govern reviews, testimonials, and payout behavior | Verify account hold process and human review access |

This is a tax-status documentation question, not a guessing game. This guide does not set 2026 form rules or residency tests, so decide only after checking the current IRS instructions and the client's exact onboarding request. Then confirm you are using the latest form revision and keep the submitted copy with the request email in your records.

Your invoice wording and tax treatment must match the current facts of the deal. This guide does not prescribe reverse-charge rules or thresholds, so base your decision on verified current requirements for your jurisdiction and the client's status. Then confirm the exact invoice language and required evidence before sending, rather than fixing it after a client rejection.

A former tech COO turned 'Business-of-One' consultant, Marcus is obsessed with efficiency. He writes about optimizing workflows, leveraging technology, and building resilient systems for solo entrepreneurs.

Educational content only. Not legal, tax, or financial advice.