Use a role-based stack tied to your delivery phases. The best usability testing tools are the ones that help you validate a risky assumption before pricing, test key flows during build, and present clear proof at handoff. In practice, this article maps Maze to execution checks, Lookback to live qualitative observation, and Optimal Workshop to IA and navigation questions. Keep every client review grounded in one evidence format so decisions stay comparable and easier to approve.

You are not choosing among the best usability testing tools to chase feature lists. You are choosing a toolset that helps you control delivery risk, keep clients aligned, and make signoff easier. That is the decision that matters.

A roundup with 12 picks or 16 ranked options can help you scan the market, but it is not a decision. What helps is a clear-eyed read on each option's strengths, weaknesses, and best use cases, followed by a quick verification pass. Check for real screenshots, direct links, and a recent update date. If a "2026" guide was last refreshed in late 2025, treat it as a market scan, not proof.

The risks you are managing are usually operational, not abstract UX theory. Scope can creep when a client asks you to "just test one more flow" after work is already moving. Feedback loops can turn subjective when stakeholders argue about taste instead of user behavior. Approval friction shows up when work is delivered but decision-makers still want clearer proof behind the recommendation.

| Phase | What you need to prove | Evidence type | Why it matters |
|---|---|---|---|
| Proposal | The problem is real and worth paying to solve | Validation signals | Helps anchor the project around user need, not opinion |
| Execution | The design is working or failing in observable ways | Task performance signals | Gives you something firmer than stakeholder preference |
| Delivery | The outcome is credible and easy to defend internally | Stakeholder proof assets | Supports signoff, handoff, and smoother approvals |

One red flag matters more than a polished dashboard. Results are often context-sensitive, and loose tagging or poor matching can create false positives in reporting. If a tool cannot produce evidence you can verify, explain, and reuse in client conversations, it is probably not helping your business. If you need a parallel evaluation method, see How to conduct a 'Heuristic Evaluation' of a website.

Your toolkit should work as a risk-management system, not a feature collection. Keep it lean so each tool category supports one client decision in proposal, build, or handoff, with evidence stakeholders can quickly understand.

| Filter | Article guidance |
|---|---|
| Participant access | If clients cannot reliably source users, prioritize tools that simplify recruitment |
| Evidence format | Decide early whether you need shareable reports, raw observations, or diary-style qualitative material |
| Analysis effort | Some platforms combine recruitment with analysis/reporting; others give deeper observation but require more synthesis from you |
| Stakeholder readability | Check exported artifacts first; if a client cannot follow the output quickly, you will spend extra time translating results |

Use moderated qualitative work when your core question is why users struggle. This is usually most useful in proposal and early discovery, when you need to hear users think aloud, observe hesitation, and clarify the problem before pricing the solution. Thinking-aloud remains a strong first method because it is practical and flexible, but it does not cover every research need on its own.

Use unmoderated studies when the question shifts from explanation to pattern checking. In build, that helps you test prototypes, live sites, or mobile flows faster without scheduling live sessions for every iteration. At handoff, bring qualitative evidence back in when you need stakeholder-ready proof through real moments of confusion or success.

Before you commit to a stack, run those four filters against the exact client decisions you expect to support.

Then run a small sample study on the exact thing you plan to test and review the handoff artifact you would use in a client deck or approval pack. If the output is hard to verify, scan, or reuse, the tool may still be weak for delivery even if it is good for research.

| Tool category | Best-fit phase | Output artifact | Decision it supports | Common misuse to avoid |
|---|---|---|---|---|
| End-to-end remote testing platform | Build, sometimes proposal | Shareable report with analysis output | Whether the current direction is clear enough to continue | Selecting for feature breadth, then discovering reports are too dense for stakeholder decisions |
| Observation-heavy qualitative tool | Proposal and handoff | Live interview observations or diary-style qualitative material | Why users behave a certain way and which moments matter most | Using it when you actually need fast pattern checks across repeated tasks |
| Navigation/IA-focused tool | Proposal and structure work | IA/navigation study output | Whether labels, hierarchy, and findability are working | Expecting it to answer broader product questions outside navigation structure |

Representative options are useful for shortlisting, not final selection. Lookback is a better fit when live, observation-heavy interviews or diary-style qual are central. Optimal Workshop is a clearer fit when the key question is navigation or IA. If you are comparing broader platforms from current roundups, verify current feature fit and pricing before locking your stack. You might also find this useful: The best tools for transcribing 'User Interviews'.

Before you price delivery, test one high-risk assumption and turn what you observe into scope priorities. That keeps your proposal anchored in user behavior, not opinion, and helps you lead the project as a business decision.

| Proposal style | Decision quality | Revision risk | Client trust signal |

|---|---|---|---|

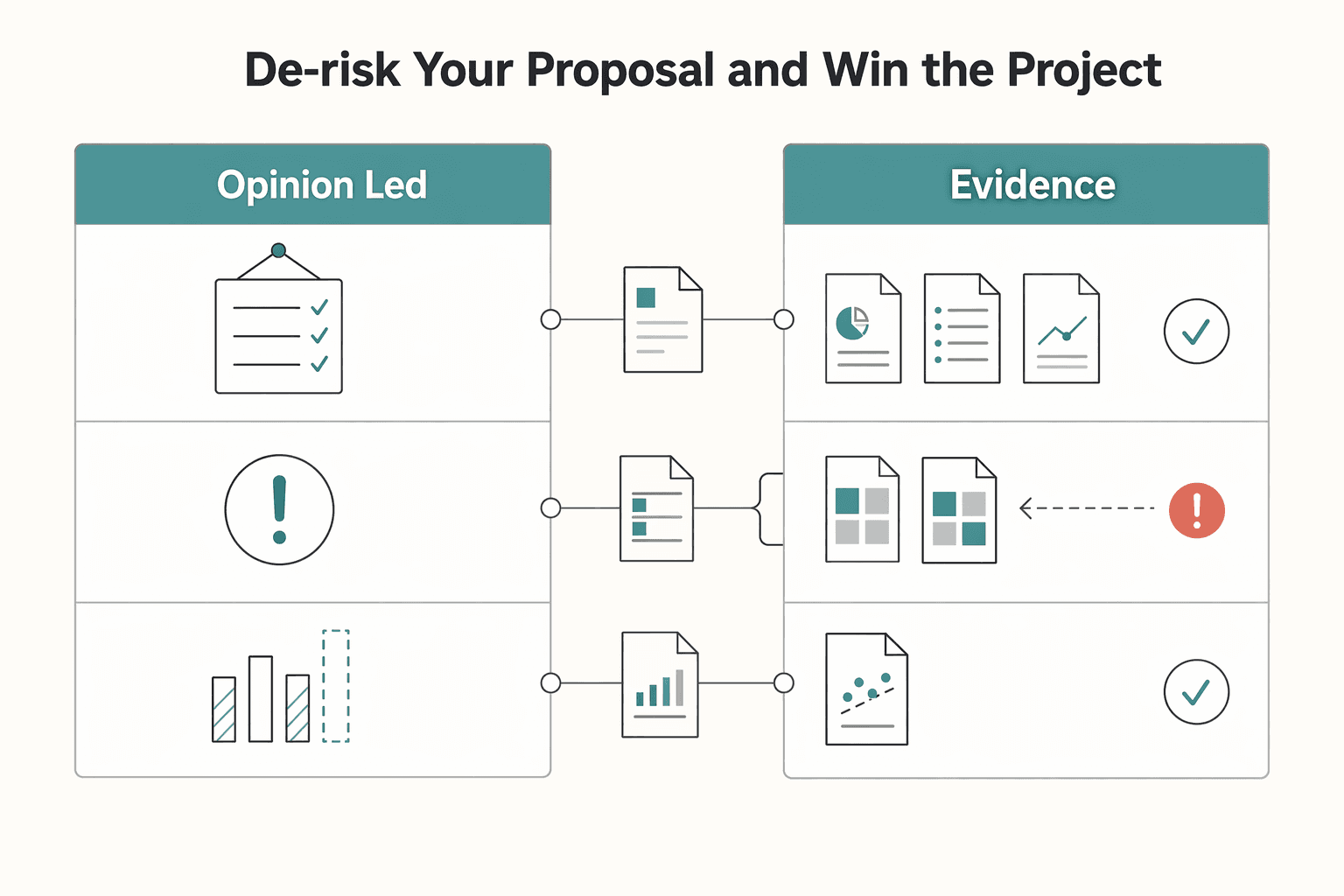

| Opinion-led proposal | Based on your judgment and stakeholder preferences | Higher, because direction can be reopened by opinion | Portfolio, credentials, taste |

| Evidence-led proposal | Based on observed task success or failure | Lower, because recommendations tie to user behavior | Short evidence brief with findings, impact, and next-step logic |

In discovery, isolate the single assumption most likely to derail the project if it is wrong. Common candidates are task clarity, findability, or whether a key screen supports the business decision it needs to support in real use. If you cannot state that assumption in one sentence, do not price full scope yet.

Use a behavior-focused qualitative usability check so you can see what users do, not just what they say. Keep the checkpoint concrete: can users complete the proposed task, and does the observed path match the real business use case? If the result is unclear, narrow the recommendation or add a discovery line item instead of writing around uncertainty.

Use one repeatable evidence format for each key finding: Observation (what users did), Business impact (how that affects the goal), and Recommended direction (what to change next). If you need a metric, mark it as current benchmark pending source-record verification.
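
As a minimal sketch of that three-part format, the record below keeps each finding in one consistent shape. The `Finding` class, its field names, and the sample text are hypothetical and only illustrate the structure; the pending label is the wording used above.

```python
from dataclasses import dataclass
from typing import Optional

# Label taken from the guidance above for any metric not yet verified.
PENDING_LABEL = "current benchmark pending source-record verification"


@dataclass
class Finding:
    """One key finding in the Observation / Business impact / Recommended direction format."""
    observation: str            # what users did
    business_impact: str        # how that affects the goal
    recommended_direction: str  # what to change next
    metric: Optional[str] = None   # optional supporting number
    metric_verified: bool = False  # set True only after checking source records

    def to_brief(self) -> str:
        lines = [
            f"Observation: {self.observation}",
            f"Business impact: {self.business_impact}",
            f"Recommended direction: {self.recommended_direction}",
        ]
        if self.metric is not None:
            # Never print an unverified number as if it were confirmed.
            shown = self.metric if self.metric_verified else PENDING_LABEL
            lines.append(f"Metric: {shown}")
        return "\n".join(lines)


# Hypothetical example content, not data from a real study.
finding = Finding(
    observation="Participants opened Settings before locating Export.",
    business_impact="Slower reporting workflow for the client's core users.",
    recommended_direction="Surface Export on the report screen and retest.",
    metric="task completion rate",
)
print(finding.to_brief())
```

Keeping the verified flag separate from the metric itself means an unconfirmed number cannot slip into a client brief by accident.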

Include the tested assumption, method, task prompt, observed behavior, evidence summaries, and resulting scope recommendation. End with a clear approval rule for later phases: decisions are reviewed against user evidence, not personal preference. This keeps expectations clear now and gives you something to point back to if revision requests expand later.

If you need to price this discovery work cleanly, keep it as a separate diagnostic step rather than burying it in production scope. How to Price a UI/UX Audit for a SaaS Company is a useful companion for that move.

During execution, replace opinion-led reviews with evidence-led reviews or scope will drift. Keep stakeholder participation, but anchor every decision to observed task behavior, a precise problem statement, and the business goal.

Use one repeatable review cadence so decisions stay comparable and defensible. Before each checkpoint, test the exact flow you are asking the client to approve. For rough work, use a paper prototype with a think-aloud protocol; for interactive work, keep task prompt, facilitation style, and analysis plan stable. Run a pilot first to catch brittle scripts and flaky tech before full scheduling.

| Review moment | Path | Input artifact | Decision owner | Approval criterion | Follow-up action |
|---|---|---|---|---|---|
| Early concept check | Subjective | Static screens or verbal walkthrough | Loudest stakeholder in the room | "Looks right" | Revise visuals and reopen discussion |
| Early concept check | Evidence-led | Paper or clickable prototype, task prompt, pilot notes | You facilitate, client sponsor signs off | Users can attempt the task and the main risk is named | Refine concept or narrow scope before build |
| Mid-project flow review | Subjective | Latest mockups | Mixed stakeholder group | Preference and internal opinion | More revision rounds |
| Mid-project flow review | Evidence-led | Tested prototype, note template, observer debrief | Client decision owner with your recommendation | Findings trace through severity, frequency, impact, task, and business goal | Implement changes and schedule next research iteration |
| Late change request | Subjective | Screenshot in email or meeting comment | Late stakeholder | "I don't like it" | Unplanned change enters scope |
| Late change request | Evidence-led | New request plus prior findings and retest plan | Sponsor or approver named in contract | Evidence shows material user risk or business risk | Retest, reprioritize, or issue a formal scope change |

When reporting, keep unverified measures clearly marked: current success-rate delta pending research-record verification, and current time-on-task comparison pending research-record verification. Then interpret the pattern. A smaller shift on a severe failure in a key task can matter more than a larger shift on a cosmetic issue.
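
The two measures named above can be computed with very little code. The sketch below assumes you have per-participant pass/fail outcomes and completion times for two test rounds; the function names and all sample values are hypothetical placeholders, not results from a real study.

```python
from statistics import median


def success_rate(outcomes: list[bool]) -> float:
    """Share of participants who completed the task."""
    if not outcomes:
        raise ValueError("need at least one session outcome")
    return sum(outcomes) / len(outcomes)


def success_rate_delta(before: list[bool], after: list[bool]) -> float:
    """Change in task success rate between two rounds (positive means improvement)."""
    return success_rate(after) - success_rate(before)


def median_time_delta(before_seconds: list[float], after_seconds: list[float]) -> float:
    """Change in median time-on-task in seconds (negative means faster)."""
    return median(after_seconds) - median(before_seconds)


# Hypothetical round data for one task, five participants per round.
round_1_success = [True, False, False, True, False]
round_2_success = [True, True, False, True, True]
round_1_times = [95, 120, 80, 150, 110]
round_2_times = [70, 90, 65, 100, 85]

print(f"Success-rate delta: {success_rate_delta(round_1_success, round_2_success):+.0%}")
print(f"Median time-on-task delta: {median_time_delta(round_1_times, round_2_times):+.0f}s")
```

With samples this small, read the output as a pattern signal to discuss alongside the observed behavior rather than as a statistically robust result, and keep it marked as pending until the session records are checked.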

If you have no fresh user evidence, you are collecting opinions, not approving work.

Document where users hesitated, failed, or took unexpected routes, and map each issue to severity, frequency, impact, and affected task.
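
One way to make that mapping usable in a review is to sort issues by severity first and frequency second, so a severe failure outranks a widespread cosmetic one. This is a sketch only: the `Issue` fields mirror the four attributes above, while the four-level severity scale and the sample issues are assumptions for illustration.

```python
from dataclasses import dataclass

# Hypothetical severity scale; substitute whatever scale you agreed with the client.
SEVERITY_RANK = {"cosmetic": 0, "minor": 1, "major": 2, "blocker": 3}


@dataclass
class Issue:
    task: str         # affected task
    description: str  # where users hesitated, failed, or detoured
    severity: str     # key into SEVERITY_RANK
    frequency: int    # number of participants who hit the issue
    impact: str       # business consequence in plain language


def prioritize(issues: list[Issue]) -> list[Issue]:
    """Order issues by severity, then by how many participants were affected."""
    return sorted(
        issues,
        key=lambda issue: (SEVERITY_RANK[issue.severity], issue.frequency),
        reverse=True,
    )


# Hypothetical findings from a single checkpoint.
issues = [
    Issue("Checkout", "Button label truncated", "cosmetic", 4, "Minor polish"),
    Issue("Checkout", "Could not find promo code field", "blocker", 2, "Abandoned purchase"),
    Issue("Onboarding", "Skipped setup tooltip", "minor", 3, "Later support questions"),
]

for issue in prioritize(issues):
    print(f"{issue.severity:<8} x{issue.frequency}  {issue.task}: {issue.description}")
```

Sorting on severity before frequency is a design choice, not a rule; if your client weighs reach more heavily, swap the order of the sort key.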

Keep a short log per checkpoint: artifact version, tested task, top findings, business impact, recommendation, and what was accepted or deferred.

Use a weekly or per-sprint rhythm when delivery is recurring. End each checkpoint with written sign-off: what was reviewed, what was approved, what risk remains, and what gets tested next.

Treat recordings and late requests as scope-control points, not admin tasks. If sessions are recorded or personal data is collected, keep consent and handling notes that state what was recorded, why, whether clips can be shared with the client, what identifying details appear, and who can access materials.

| Trigger | Required response |
|---|---|
| Target user group, task prompt, or success condition changes | Retest before approval |
| A request affects a key path tied to the business goal | Reprioritize the backlog before design updates |
| A late stakeholder asks for a materially different direction | Use formal scope-change handling unless it can be validated within the current plan |
| Recordings, clips, or notes are reused for a new purpose | Update consent and handling notes before sharing |

Your final handoff should make approval easier by tying delivery to validated results, not just files. Use the same evidence chain each time: agreed business goal, observed user behavior, and your recommendation.

| Handoff item | What to include |
|---|---|
| Summary | A brief with the agreed goal, tested task, top finding, and recommendation |
| Selected clips | Short, representative clips showing success moments or previously recurring friction now reduced |
| Metric snapshot | A compact view of current measures, including any clearly marked pending values |
| Next-step actions | A short action split such as implement now, defer, or retest |

This matters more than a polished handoff. Maze frames research value as tracking impact, calculating returns, and presenting research as a driver of business growth. Instead of ending with "here is the Figma file," end with a short result narrative: what you tested, what users did, and what should happen next. If a metric is still pending, state it clearly as current outcome delta pending research-record verification.

Keep your reporting structure explicit. A planning document helps align goals, methodology, and metrics before testing so stakeholders are working from shared study parameters. If you used a moderated-study template, name that limitation: moderated methods are not automatically transferable to unmoderated or mixed-method studies without adaptation.

| Delivery style | Narrative | Evidence type | Decision confidence | Invoice readiness |
|---|---|---|---|---|
| Artifact-led | "Here are the final screens and components." | Design files, style guide, mockups | Lower, because approval rests on opinion | Weaker, because payment is detached from outcomes |
| Findings-led | "Here are the issues we found." | Notes, raw findings, issue lists | Medium, because problems are visible but value can still feel incomplete | Better, but still open to "what changed?" |
| Outcome-led | "Here was the goal, here is the observed behavior, and here is the recommendation." | Selected clips, metric snapshot, summary, next-step actions | Higher, because evidence is tied to a business question | Stronger, because the invoice arrives with a documented result narrative |

Choose clips by criteria, not drama. Prioritize representative success moments, recurring friction that was removed, and examples tied to stakeholder concerns. Label each clip with the task, user type, and observed change. Do not rely on AI auto-highlights without human review: TestGuild reports that 67% trust AI-generated tests only with human review, and 75% say ambiguous requirements are the main bottleneck. If requirements were unclear, say that directly and narrow your recommendation instead of overstating certainty.

Use that four-part package to keep payment conversations tied to validated results.

If you want a deeper dive, read Value-Based Pricing: A Freelancer's Guide.

Keep the positioning clear: you run a business, and usability testing is part of how you control risk. The point is not to collect more UX activity. It is to reduce predictable problems in client work, especially subjective feedback and unclear decisions.

A usability test is valuable because real users interact with a prototype before production, which helps you spot overlooked UX issues while there is still time to act on them. That gives you something better than opinion when a client says, "I just do not like it." If a study cannot produce evidence that changes a decision, it is probably extra work rather than protection for your project.

Keep one tool category for collecting decision evidence and another for organizing feedback into proof a client can review quickly. That distinction matters because ad hoc tracking can become chaotic and unusable once notes, clips, comments, and revisions start stacking up. Your checkpoint is the evidence pack itself: task outcome, supporting clip or verbatim, implication, and next action. If recruiting is part of your offer, standardize incentives too, and use an incentive calculator as a repeatable checkpoint. Guidance built from a large session dataset is a stronger control than guessing low and paying for it with time and no-shows.

Put a visible research line in your next proposal, marking any unconfirmed market number as current benchmark pending source-record verification. Carry the same structure into delivery and handoff: goal, evidence, implication, next action. Even in 2026, faster tools do not replace judgment or communication discipline.

That is the real shift: you stop behaving like hired labor and start operating like a small business that documents decisions, communicates clearly, and leaves fewer avoidable surprises behind. This pairs well with our guide on The Best Analytics Tools for Your Freelance Website.

A role-based stack can be more useful than trying to pick a single winner. For example, usability-testing platforms can support remote studies on prototypes, live sites, and mobile experiences; Optimal Workshop is positioned for IA and navigation questions, while Lookback is better suited when live, observation-heavy qualitative work is the core. Treat roundup counts as a warning, not a verdict: one source frames 11 tools, while another frames 18 for 2025, so list size depends on scope. For 2026 planning, use NN/g’s comparison table of 11 popular unmoderated tools (features noted as of September 2024) as a checkpoint, then verify current capabilities directly before you buy.

Use a consistent structure: goal, evidence, implication, next action. Pair quantitative and qualitative evidence from the study so the client can see both what happened and why it matters. If your evidence is one-sided, decisions usually slow down.

Not always. Tools and methods are not interchangeable, so avoid forcing one method across every project phase. Use unmoderated studies when you need repeatable task evidence, and use live observation when you need follow-up questions and deeper context. If the study goal is still fuzzy, start smaller and clarify the question before you scale session volume.

Budget by scenario, not by a flat percentage, because the right amount depends on scope, decision risk, and the cost of being wrong. A quick prototype check, a redesign of a critical flow, and a prelaunch signoff study can each get separate estimates for tool access, participant recruiting, session volume, analysis time, and evidence packaging. If you need a numeric benchmark, verify the current market rate from source records before use. Keep the research line item visible in your proposal so it stays tied to risk reduction and decision quality.
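
A scenario estimate is just a sum of the line items listed above, which makes it easy to keep each scenario separate and visible. In the sketch below, the `ScenarioEstimate` class and every number are hypothetical placeholders; replace them with rates verified from your own source records before quoting.

```python
from dataclasses import dataclass


@dataclass
class ScenarioEstimate:
    """Research budget for one scenario, split into the line items a client will see."""
    name: str
    tool_access: float                 # flat tool cost for the study period
    recruiting_per_participant: float  # incentive plus sourcing cost per session
    sessions: int                      # planned session volume
    analysis_hours: float
    packaging_hours: float             # time to build the evidence pack
    hourly_rate: float

    def total(self) -> float:
        recruiting = self.recruiting_per_participant * self.sessions
        labor = (self.analysis_hours + self.packaging_hours) * self.hourly_rate
        return self.tool_access + recruiting + labor


# Placeholder figures only; none of these are market benchmarks.
scenarios = [
    ScenarioEstimate("Quick prototype check", 100, 50, 5, 4, 2, 100),
    ScenarioEstimate("Critical flow redesign", 300, 50, 10, 12, 6, 100),
    ScenarioEstimate("Prelaunch signoff study", 300, 50, 8, 8, 8, 100),
]

for scenario in scenarios:
    print(f"{scenario.name}: {scenario.total():,.0f}")
```

Presenting the three totals side by side lets the client choose a level of risk reduction instead of negotiating a single percentage.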

Run a basic compliance check before fieldwork: informed consent, data handling, retention, participant rights, and client contract alignment. Your consent language can clearly state what is recorded, why it is recorded, where it is stored, who can access it, and how participants can raise concerns. Then confirm your client contract does not promise broader reuse, sharing, or retention than participants agreed to. Legal requirements vary by jurisdiction, so verify exact thresholds with qualified legal guidance before launch.

A career software developer and AI consultant, Kenji writes about the cutting edge of technology for freelancers. He explores new tools, in-demand skills, and the future of independent work in tech.


Educational content only. Not legal, tax, or financial advice.
