Start by screening Plaid alternatives on connection method, then reliability, then workflow ownership. Verify OAuth or other user-permissioned access and FDX-aligned documentation for each required institution, not just a coverage headline. Next, test auth-change and disconnected-account behavior with your real bank mix. Close with one payment-to-ledger walkthrough that includes an exception case, so you can see whether reconciliation work actually drops or your team still handles triage, approvals, and evidence exports.

When you evaluate Plaid alternatives, treat the choice as a risk and operations decision first. If OAuth is missing where an institution requires it, account connections can fail before your product experience even starts. If reliability is inconsistent, the workflows you depend on, like balance checks and transaction pulls, can break.

Use this filter before you go deeper.

| Evaluate it like a tool | Evaluate it like a partner |
|---|---|
| Counts institutions | Verifies connection method by institution, including OAuth support |
| Leads with coverage claims | Shares status history, incident patterns, and refresh behavior |
| Says "we provide access" | Explains permissions, account selection, and token handoff |
| Matches feature checklists | Proves support for your required jobs, for example balances and transaction history |

The rest of this article follows three practical pillars:

Confirm how access is granted. OAuth lets users share data without handing over credentials directly to a third party, and permissioned or tokenized connection models are a core control signal.

Prioritize stable connections at the institutions your users actually depend on. In practice, that means checking uptime, error patterns, and institution-level support instead of relying on top-line coverage claims.

Check the real user flow and your production constraints: institution auth, account selection, token handoff, and operational limits like rate limiting. Include compliance readiness too, since U.S. personal financial data rights rules emphasize secure, reliable sharing and authorized third-party obligations.

Start with how the connection actually works. Choose a provider that uses user-permissioned, documented API access wherever possible, not credential-based retrieval as the default. When you compare providers, this choice shapes your day-to-day risk, your control over access, and connection reliability when institutions change authentication behavior. If you cannot tell which connection method is used for your priority institutions, treat that as a decision blocker.
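Applying that blocker rule across a long institution list is easier if each vendor answer is recorded in a structured form. The minimal Python sketch below assumes a hypothetical `InstitutionAnswer` record for each priority institution. Three situations count as blockers: an unknown method, a missing answer, or an answer with no written source. Credential-based retrieval is raised as a risk flag rather than an automatic fail. The names and categories are illustrative and are not any provider's API.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class ConnectionMethod(Enum):
    PERMISSIONED_API = "permissioned_api"  # e.g. OAuth or FDX-aligned access
    CREDENTIAL_BASED = "credential_based"  # retrieval with user-provided login credentials
    UNKNOWN = "unknown"                    # vendor could not say


@dataclass
class InstitutionAnswer:
    institution: str
    method: ConnectionMethod
    written_source: Optional[str] = None  # hypothetical: doc link or saved support reply


def review_connection_model(answers, priority):
    """Return (blockers, risk_flags) for the institutions in `priority`."""
    blockers, risk_flags = [], []
    by_name = {a.institution: a for a in answers}
    for name in sorted(priority):
        answer = by_name.get(name)
        if answer is None:
            blockers.append(f"{name}: no answer from vendor")
            continue
        if answer.method is ConnectionMethod.UNKNOWN:
            blockers.append(f"{name}: connection method unknown")
        elif answer.written_source is None:
            blockers.append(f"{name}: no written confirmation")
        if answer.method is ConnectionMethod.CREDENTIAL_BASED:
            risk_flags.append(f"{name}: credential-based retrieval")
    return blockers, risk_flags
```

Any entry in `blockers` means you cannot yet tell how a priority institution connects. Under the rule above, that is enough to pause the evaluation.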

In the FDIC materials, the core concept is user-permissioned financial data sharing among providers, recipients, and access platforms. The clearest U.S. technical signal is the FDX API, described as a common, interoperable, royalty-free standard for secure access to permissioned consumer and business financial data.

That matters because FDX states that users can be authenticated without sharing or storing login credentials with third parties. That is the baseline to look for. FDX also reports that many of the largest U.S. financial services organizations have begun implementing the standard. That still does not mean every institution supports the same method.

This risk is not theoretical. The same FDIC material defines screen scraping as retrieving account information with user-provided login credentials. Yale's analysis also highlights potential harms in open-banking data-broker practices, including loss of funds, theft, identity fraud, and operational failure.

For your team, that can show up as operational disruptions and harder incident analysis when feeds change unexpectedly. And if wallets are part of your flow, apply the same scrutiny. The Yale article notes wallet balances discussed in that context are not FDIC-insured.

| Decision criterion | Permissioned API access | Screen scraping |
|---|---|---|
| Credentials | User authentication can occur without sharing or storing login credentials with third parties | Relies on user-provided login credentials to retrieve account data |
| Access basis | User-permissioned sharing tied to documented access rules | Access is derived from the customer login itself |
| Connection stability | Standards-based method is usually a stronger trust signal | More exposed to operational failure when credential-based retrieval breaks |
| Permission granularity | Check how consent details are documented by institution | Check what data can be retrieved once credentials are provided |
| Ending access | Confirm whether a documented way to end access exists | Confirm how access is terminated after credentials have been used |
| Incident transparency | Check written method docs and clear failure explanations | Check how credential handling and failures are disclosed |
| Support coverage check | Check your exact banks and fintech tools institution by institution | Check the same list, especially where access depends on credentials |

Keep this simple and require written answers.

| Check | What to verify |
|---|---|
| Docs | Explicit language on user-permissioned sharing, FDX API alignment, and whether authentication avoids sharing or storing bank credentials with third parties |
| Dashboard or demo | The consent and connected-account flow, not just a "connected" status |
| Support response | Which required institutions and tools use permissioned API access versus credential-based retrieval |
| Failure and exit handling | What changes when institution authentication shifts, expected failure states, and whether there is a documented way to end access |

Save the support reply, key docs, and consent-flow screenshots in your vendor review file. Once the trust model is clear, move to Pillar 2 and test whether the platform turns connected data into something you can actually use.

Once data access is sound, the next question is whether the platform helps you make and defend decisions. If you still need spreadsheets to explain risk, rules, and follow-up actions, you may be looking at a data viewer rather than an intelligence layer.

Use this quick check.

| Output that matters | Basic aggregator | Intelligence layer to verify |
|---|---|---|
| Risk flags | Displays account activity for manual review | Should show why an exception was flagged, plus the rule context, date range, and affected accounts |
| Evidence readiness | Exports data with limited decision context | Should preserve timestamps, source-to-output traceability, and rule history |
| Forecasting usefulness | Primarily historical display | Should make assumptions and cash-timing logic visible enough to review |
| Actionability | You decide next steps manually | Should tie each flag to a concrete next step, owner, or review point |

Do not judge this by UI polish. Ask the vendor to show how it handles cross-account activity, multi-currency records, and duplicate-looking events. Then ask how each item is labeled, time-stamped, and reconciled. If they cannot explain the transformation path clearly, assume you will still be doing core intelligence work outside the platform.
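When you raise the duplicate-looking-events question, it helps to know what a reviewable answer could look like. The sketch below uses one simple heuristic, chosen for illustration rather than taken from any vendor: pair transactions that have the same amount and currency and were posted within a short window, and attach a plain-language reason to each pair. It compares currencies but does no FX conversion. Multi-currency duplicates would need a separate rule. The `Txn` fields and the two-day window are assumptions.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal


@dataclass(frozen=True)
class Txn:
    txn_id: str
    account: str
    amount: Decimal
    currency: str
    posted: date


def duplicate_candidates(txns, window_days=2):
    """Return (id_a, id_b, reason) for pairs that look like duplicates."""
    flagged = []
    ordered = sorted(txns, key=lambda t: t.posted)
    for i, a in enumerate(ordered):
        for b in ordered[i + 1:]:
            gap = (b.posted - a.posted).days
            if gap > window_days:
                break  # list is date-sorted, so later items are further apart
            if a.amount == b.amount and a.currency == b.currency:
                scope = "same account" if a.account == b.account else "cross-account"
                flagged.append((a.txn_id, b.txn_id,
                                f"{scope}, {a.amount} {a.currency}, {gap} day(s) apart"))
    return flagged
```

The reason string is the part to compare with the vendor's output. A flag with no stated scope, amount, or timing gives a reviewer nothing to check.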

One 2026 alternatives framework adds a useful lens: review data lineage depth and open API architecture as part of the evaluation.

For compliance workflows, treat explainability as a requirement. Ask what triggered the flag, not just whether a flag exists. Ask for the included accounts, lookback window, and rule logic in plain language. If a filing or residency threshold is involved, verify the live threshold from official, vendor, contract, compliance, or source records before use.

| Alert check | What to ask |
|---|---|
| Trigger | What triggered the flag |
| Included accounts | Which accounts are included |
| Lookback window | What lookback window was used |
| Rule logic | The rule logic in plain language |
| Threshold | The current threshold, verified against official or vendor source records before use |
| AI score explainability | If a score cannot be explained, treat it cautiously for filing, reporting, or money-movement decisions |

This is also where AI governance starts to matter.
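You can apply the alert checks in the table mechanically to each sample alert the vendor shows you. This minimal sketch assumes a hypothetical `Alert` record with one field per check. It lists what the alert cannot answer, and it requires a score explanation only when a score is present. The threshold row is left out on purpose, because that value has to be verified against source records rather than read off the alert.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Alert:
    trigger: str = ""
    included_accounts: list = field(default_factory=list)
    lookback_days: Optional[int] = None
    rule_logic: str = ""                # plain-language rule description
    score: Optional[float] = None       # e.g. an AI risk score, if the vendor shows one
    score_explanation: str = ""


def unexplained_parts(alert):
    """List the alert checks this alert cannot answer."""
    missing = []
    if not alert.trigger:
        missing.append("trigger")
    if not alert.included_accounts:
        missing.append("included accounts")
    if alert.lookback_days is None:
        missing.append("lookback window")
    if not alert.rule_logic:
        missing.append("rule logic")
    if alert.score is not None and not alert.score_explanation:
        missing.append("score explanation")
    return missing
```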

Before you trust any output, ask for one full replay: raw input, transformed record, applied rule version, and alert timestamp. If a teammate or advisor cannot follow that chain quickly, the system is not ready for high-stakes use.

Save one replay artifact in your vendor file. When rules or mappings change later, this is what lets you explain prior decisions without guesswork.
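Much of the replay check comes down to one question: was the rule version recorded on the alert actually the one active at the alert timestamp? The sketch below assumes a hypothetical rule history stored as `(effective_from, version)` pairs sorted by date. It uses the standard library's `bisect` to find the active version, and it also confirms that the raw and transformed records are present.

```python
from bisect import bisect_right
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Replay:
    raw_input: dict      # record as received from the institution
    transformed: dict    # record after the platform's mapping
    rule_version: str    # version stamped on the alert
    alert_at: datetime


def version_active_at(history, when):
    """history: (effective_from, version) pairs sorted by effective_from."""
    starts = [start for start, _ in history]
    idx = bisect_right(starts, when) - 1
    return history[idx][1] if idx >= 0 else None


def replay_problems(replay, history):
    problems = []
    if not replay.raw_input:
        problems.append("missing raw input")
    if not replay.transformed:
        problems.append("missing transformed record")
    active = version_active_at(history, replay.alert_at)
    if active != replay.rule_version:
        problems.append(f"alert cites {replay.rule_version}, but {active} was active")
    return problems
```

A mismatch does not prove the alert was wrong. It does mean you cannot explain it from the saved artifact alone, which is the gap this check is meant to catch.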

A short pilot tells you more than a polished demo. A grounded checkpoint sequence is: audit, define requirements, parallel run, adoption measurement. Use it to test output quality before full rollout.

In the parallel run, compare platform outputs against your current review process across multiple cycles. Some 2026 comparisons report faster time-to-value for cloud-native approaches, while legacy deployments can take 12-18 months to realize value. If setup is long and the logic is still unclear, pause before scaling. If this pillar holds up, the next question is whether the platform actually fits how your business runs day to day.
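One way to make "compare across multiple cycles" concrete for the parallel run is to diff the items each side flagged. The sketch assumes that both the platform and your current process can export a set of flagged item IDs per cycle. The agreement figure is a simple Jaccard overlap, chosen for illustration rather than as a standard pilot metric. The disagreement lists matter more than the score, because each entry is a case to review by hand.

```python
def compare_cycle(platform_flags, current_flags):
    """Compare one cycle's flagged item IDs from the platform and your current process."""
    union = platform_flags | current_flags
    agreed = platform_flags & current_flags
    return {
        "agreed": len(agreed),
        "platform_only": sorted(platform_flags - current_flags),  # new catch or noise?
        "current_only": sorted(current_flags - platform_flags),   # what the platform missed
        "agreement": len(agreed) / len(union) if union else 1.0,  # Jaccard overlap
    }


def parallel_run(cycles):
    """cycles: dict of cycle label -> (platform_flags, current_flags)."""
    return {label: compare_cycle(p, c) for label, (p, c) in cycles.items()}
```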

This pillar comes down to operational fit. If the platform does not reduce manual admin, cut tool handoffs, and improve month-end confidence, treat it as not yet your system.

Judge fit by tracing one full chain: invoice created, payment detected, match confidence shown, exception handled, ledger updated. If the vendor cannot walk that sequence clearly, manual reconciliation is likely to remain.

Use a real example in the demo: one recent invoice, one payment, and one messy case. Then check who handles each step, what triggers each transition, and which event actually updates the ledger. Count manual touches before and after the pilot. That count is the practical metric.
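You can score the invoice-to-ledger chain with a short log of the demo walkthrough. This Python sketch assumes you record each step as you watch it: the stage name, who or what performed it, and the trigger the vendor named. The stage names follow the chain described in this section and are otherwise hypothetical labels. The output lists stages the vendor never showed, transitions with no stated trigger, and the manual-touch count to compare before and after the pilot.

```python
from dataclasses import dataclass

# Stage names follow the invoice-to-ledger chain; they are illustrative labels.
CHAIN = ["invoice_created", "payment_detected", "match_confidence_shown",
         "exception_handled", "ledger_updated"]


@dataclass
class Step:
    stage: str
    actor: str    # "system", or the person/role who acted
    trigger: str  # what the vendor said caused this transition ("" if unstated)


def score_walkthrough(steps):
    shown = {s.stage for s in steps}
    return {
        "missing_stages": [stage for stage in CHAIN if stage not in shown],
        "untriggered": [s.stage for s in steps if not s.trigger],
        "manual_touches": sum(1 for s in steps if s.actor != "system"),
    }
```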

Do not judge a report by its label. Evaluate it by its inputs, packaging, and traceability. Ask the vendor to show exactly which records are included or excluded, how date ranges and currency handling are documented, and how derived numbers map back to raw records.

| Evidence item | What to confirm |
|---|---|
| Included or excluded records | Exactly which records are included or excluded |
| Date ranges and currency handling | How date ranges and currency handling are documented |
| Derived numbers | How derived numbers map back to raw records |
| Included accounts | Included accounts are explicit |
| Disconnected or missing accounts | Disconnected or missing accounts are disclosed |
| Unresolved exceptions | Unresolved exceptions are visible in the final packet |

Confirm each of these before relying on any output for third-party review.
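You can run these evidence checks against a sample packet before relying on it. The sketch assumes a hypothetical packet exported as a plain dictionary, in which each derived line lists its source record IDs. The field names are illustrative. The code re-adds each derived number from the raw records. It then compares accounts and open exceptions against lists you maintain independently, since a packet cannot be trusted to disclose its own gaps.

```python
from decimal import Decimal


def packet_problems(packet, raw_records, expected_accounts, open_exceptions):
    """Check a hypothetical evidence packet against independently known facts."""
    problems = []
    raw_by_id = {r["id"]: r for r in raw_records}

    # 1. Every derived number must map back to raw records and re-add correctly.
    for line in packet["derived"]:
        untraceable = [i for i in line["source_ids"] if i not in raw_by_id]
        if untraceable:
            problems.append(f"{line['label']}: untraceable ids {untraceable}")
            continue
        total = sum((raw_by_id[i]["amount"] for i in line["source_ids"]), Decimal("0"))
        if total != line["value"]:
            problems.append(f"{line['label']}: reported {line['value']}, sources sum to {total}")

    # 2. Every expected account must be either included or disclosed as missing.
    covered = set(packet["included_accounts"]) | set(packet["disclosed_missing"])
    for acct in sorted(expected_accounts - covered):
        problems.append(f"{acct}: neither included nor disclosed")

    # 3. Every unresolved exception must be visible in the packet.
    for exc in sorted(open_exceptions - set(packet["unresolved_exceptions"])):
        problems.append(f"{exc}: open exception missing from packet")
    return problems
```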

Automation only helps if it fails safely. Happy-path demos are not enough. Plaid's June 06, 2023 ConfigDB engineering write-up describes both sides of this tradeoff: runtime updates without deployments, and misconfiguration risk when Git-like history and atomic reverts are missing.

Use that lesson directly in evaluation. Require a configuration validation checkpoint, visible change history, and a clear rollback path for bad rule or mapping changes.
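A small in-memory store shows what those three controls mean in practice. This is a hypothetical sketch, not Plaid's ConfigDB or any vendor's API. A validator runs before any change is applied, and every change is appended to a history with a note. A rollback re-applies the previous configuration as a new, logged entry, so the bad change stays visible. The `amount_tolerance` key and its 0 to 0.05 range are invented for the example.

```python
from copy import deepcopy


def validate(cfg):
    """Hypothetical validator: returns a list of error messages (empty means valid)."""
    errors = []
    tol = cfg.get("amount_tolerance")
    if not isinstance(tol, (int, float)) or not 0 <= tol <= 0.05:
        errors.append("amount_tolerance must be a number from 0 to 0.05")
    return errors


class RuleConfigStore:
    def __init__(self, initial, validator):
        errors = validator(initial)
        if errors:
            raise ValueError(f"initial config invalid: {errors}")
        self._validator = validator
        self._history = [(deepcopy(initial), "initial")]  # (config, change note)

    @property
    def current(self):
        return deepcopy(self._history[-1][0])

    def apply(self, new_config, note):
        errors = self._validator(new_config)
        if errors:
            # Validation checkpoint: a rejected change never becomes current.
            raise ValueError(f"rejected {note!r}: {errors}")
        self._history.append((deepcopy(new_config), note))

    def rollback(self):
        """Re-apply the previous config as a new, logged entry."""
        if len(self._history) < 2:
            raise RuntimeError("nothing to roll back")
        previous = self._history[-2][0]
        bad_note = self._history[-1][1]
        self._history.append((deepcopy(previous), f"rollback of {bad_note!r}"))

    def change_log(self):
        return [note for _, note in self._history]
```

This sketch is deliberately simple. Calling `rollback` twice in a row would re-apply the bad change, so a real system would need to track which entries have already been reverted.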

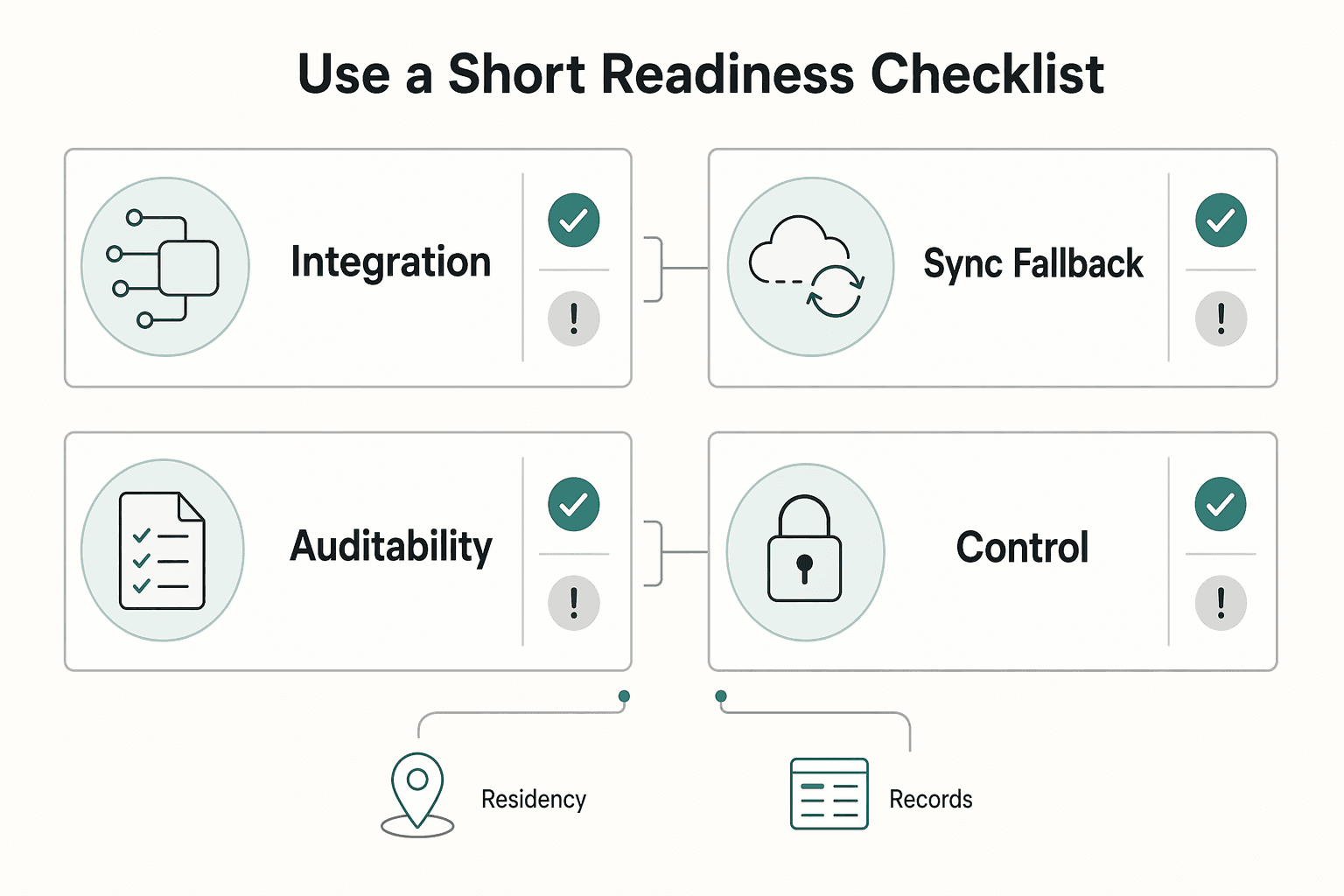

Use this as a blunt test. If these controls are missing, you are still stitching tools together.

| Checkpoint | What to verify | Red flag |
|---|---|---|
| Integration depth | One end-to-end flow from invoice to ledger, including an exception case | Detection, matching, and posting happen in separate systems |
| Sync fallback | Clear status, queue behavior, and manual review path when sync breaks | Silent failures or undefined fallback behavior |
| Auditability | Source timestamp, transformed record, rule or mapping version, approval trail | Output is visible, but the production path is not |
| Owner controls | Permissioned overrides, approvals, and resend actions with logs | Overrides exist but are not controlled or logged |
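In a pilot, the sync-fallback row comes down to behavior like the sketch below: a bounded number of retries, then a visible status and a manual review item instead of a silent gap. It is a minimal sketch assuming a hypothetical `fetch` callable for one account. A production client would add backoff between attempts and would catch the client library's specific error types rather than `Exception`.

```python
from dataclasses import dataclass


@dataclass
class SyncOutcome:
    account: str
    status: str        # "synced" or "needs_review"
    attempts: int
    last_error: str = ""


def sync_with_fallback(account, fetch, review_queue, max_attempts=3):
    """Try `fetch(account)` up to max_attempts times; never fail silently."""
    last_error = ""
    for attempt in range(1, max_attempts + 1):
        try:
            fetch(account)
            return SyncOutcome(account, "synced", attempt)
        except Exception as exc:  # sketch only: catch your client's specific errors
            last_error = f"{type(exc).__name__}: {exc}"
    outcome = SyncOutcome(account, "needs_review", max_attempts, last_error)
    review_queue.append(outcome)  # explicit, owned follow-up item
    return outcome
```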

One market signal supports this framing: by May 04, 2022, Stripe had added Plaid-like account aggregation technology. That suggests data access is becoming expected, but it does not guarantee end-to-end operational fit. If you still have to hand-stitch invoicing, exceptions, approvals, and ledger updates, you have a connector, not a system.

Use this section as a shortlist filter: run each provider through the same three pillars instead of a feature checklist. The practical rule is simple: separate what is verified from what still depends on your own diligence. Technology choices can create both advantages and vulnerabilities, so unclear vendor answers are not neutral. They push risk and operating work back to you.

If your shortlist includes MX, Finicity, and Teller, treat them as diligence candidates rather than verified options in this evidence pack.

Assume you still own categorization checks, exception handling, approvals, and audit evidence unless a live walkthrough shows otherwise. In review, ask for one live institution test, one disconnected-account scenario, and one export that traces back to source records.

Regional strength can help when your accounts and workflows stay in one market. The risk for cross-border operators is consistency. What works well in one country can still break your month-end process when accounts span multiple jurisdictions.

For cross-border use, do not rely on a coverage claim alone. Require country-by-country institution validation and a sample evidence pack that clearly shows included accounts, missing accounts, and consistent exception labels.

Plaid should be evaluated as more than a connector. In Acquired's May 26, 2025 episode, Plaid is described as nearly being acquired by Visa in a deal discussed at around $5 billion. It then continued as a standalone company while expanding into fraud analytics, alternative credit, and bank payments.

Breadth can reduce how many vendors you stitch together, but it can also hide where responsibility still sits with your team. Validate with an end-to-end workflow walkthrough, not a product map.

| Provider | Connection model | Geographic fit | Data enrichment depth | Workflow/compliance support | Best-fit use case |
|---|---|---|---|---|---|
| MX | Not verified in this evidence pack | Must be verified against your required countries and institutions | Not established here | Not established here | Not established here; define after diligence |
| Finicity | Not verified in this evidence pack | Must be verified against your required countries and institutions | Not established here | Not established here | Not established here; define after diligence |
| Teller | Not verified in this evidence pack | Must be verified against your required countries and institutions | Not established here | Not established here | Not established here; define after diligence |
| Plaid | Exact connection mechanics are not verified here; broader scope is partly evidenced (fraud analytics, alternative credit, bank payments) | Must be verified against your required countries and institutions | Some expansion is evidenced; practical depth is not established here | Not established here | You are evaluating broader platform potential, not only account connectivity |

If a provider is strongest in data access only, pair it before purchase with reconciliation logic, an exception-review queue, approval and change logs, and an exportable evidence pack for third-party review. Without those pieces, you are buying components, not an operating layer.

Use this as your final test: if your team still owns issue triage when integrations fail, you are selecting a tool, not a partner. With Plaid alternatives, decide on operating reality: coverage fit, integration and support model, and continuity risk.

Pass/fail question: Can the vendor prove your exact institutions work in the countries where you operate, before you sign?

A practical filter is to compare providers on coverage, infrastructure, and features, not brand familiarity. For UK and Europe, validate support against your real account mix, even when a provider reports scale such as close to 2,000 banks and financial institutions across 19 countries. Run a live institution test and ask how edge cases are handled. A large institution-count headline is not enough if your cross-border accounts are not reliably covered.

Pass/fail question: Does the implementation model match your product and team capacity?

Some providers are plug-and-play. Others support fully customizable web or mobile integration, including a white-label API or white-label infrastructure if brand control matters. Then check support depth: is onboarding hands-on, and what ongoing support exists after launch? If support is mostly self-serve, more triage work may stay with your team.

Pass/fail question: Will this reduce daily operational cleanup, or add more exceptions for your team?

If bank payments matter, confirm support for one-off and recurring open banking flows, including refunds and variable recurring payments. Do not assume that implies card support. One published comparison explicitly flags that bank-focused connectivity can still lack support for traditional card payment processing. Another published snapshot (as of 22 January 2025) stated that Plaid was not onboarding new customers and was not facilitating new payment transactions or new funds, so re-check current onboarding and transaction status before procurement.

| Decision checklist | What to verify |
|---|---|
| Cross-border account coverage | Your required institutions, countries, and account types pass a live test |
| Support and escalation signals | Clear coverage disclosures, documented handling for delays, and a real escalation path |
| Automation depth | The platform supports the bank and payment flows you actually run, without pushing reconciliation back to your team |

Choose the option that most clearly lowers manual operations burden and improves day-to-day operating reliability.

A Plaid alternative can be safe, but treat safety as something to verify in each product, not something to assume. In Open Banking, the app is often an account-information or data-access layer rather than your full banking provider. Start by reviewing the product documentation, and if you are switching, check for a migration guide from Plaid. If core details are unclear, treat that as a risk signal and pause.

Run two checks right away: documented product scope and migration evidence. First, verify whether the product is primarily account-information access or full payment processing, because those are different problems. Second, if you are moving from Plaid, check each alternative’s documentation for a migration guide. If security claims are broad but documentation is thin, treat those claims as unverified.

An aggregator supports compliance only indirectly. It is usually a data layer, and data access alone is not the same as full compliance work. You still need a platform or internal process that turns raw records into reviewable compliance outputs. Any legal trigger or threshold must be verified from official, vendor, contract, compliance, or source records before use.

A bigger connection count usually does not make a provider the better choice. The headline number alone does not tell you whether a provider fits your needs. First verify product scope: some tools are primarily data-access layers, while payment processing needs can include cards or hybrid flows. Then check the documentation quality, including migration guidance if you are switching from Plaid.

Ethan covers payment processing, merchant accounts, and dispute-proof workflows that protect revenue without creating compliance risk.

Educational content only. Not legal, tax, or financial advice.
