Choose the best ABM software in sequence: start with a CRM you will maintain daily, then add a data tool, and only then consider engagement add-ons. For most solo operators, HubSpot or folk plus LinkedIn Sales Navigator or Apollo covers the core motion before heavier platforms like Terminus, 6sense, or Demandbase make sense. The deciding rule is operational: buy when manual follow-up, record quality, or reporting gaps are slowing target-account progress.

If you start by buying tools, you can end up automating a fuzzy strategy. Account-based marketing works the other way around: pick specific accounts first, then build personalized outreach around them.

A practical place to begin is a small 1:1 ABM motion you can run by hand. It forces clear choices about who matters, why they fit, and what you will actually say.

A simple check helps here: if you cannot explain your target account list and next action in a plain spreadsheet, software is unlikely to fix the problem. ABM can also feel awkward if you are used to broad inbound activity, so keep the scope tight and practical.

| Approach | What you do first | What usually happens |
|---|---|---|
| Strategy first | Define ICP, pick target accounts, research each one, tailor outreach | Clearer account focus, more relevant messages, easier tool selection later |
| Tool first | Buy a platform, import lists, start sequences | More activity, but often weak targeting and generic outreach |
| Mixed but disciplined | Use light tools only to support a manual process | Faster execution without losing account judgment |

Start with a process you can inspect account by account. That keeps your targeting honest and makes it obvious where a tool might help later.

| Step | What to do | Grounded check |
|---|---|---|
| Define your ICP | Write down the kind of account you actually want and use quantitative and qualitative inputs to validate it | Rule out near-fits before you waste research time |
| Select priority accounts | Choose target accounts before outreach and rank them by deal size and strategic potential | Each account should have a written reason it made the list |
| Build an account dossier | Create a short dossier with strategic insights you can verify from reports, job posts, and news | Keep the data current |
| Map stakeholders and plan value-led outreach | Build a working view of who feels the pain, who influences the decision, and who signs off | Match outreach to each role |
| Set one clear next action per account | End research with a single next step | If there is no obvious next move, research is probably still too vague |

Write down the kind of account you actually want, not just the logos you admire. Use both quantitative and qualitative inputs to validate it. A good ICP also helps you rule out near-fits before you waste research time.

Choose target accounts before outreach starts, and rank them by deal size and strategic potential, not company size alone. A smaller company with urgent need and a clear path to budget may beat a famous enterprise that never prioritizes your category. Each account should have a written reason it made the list.

For every target, create a short account dossier. Include strategic insights you can verify from reports, job posts, and news. This is where personalization becomes concrete instead of cosmetic. Keep the data current, because stale account data quickly turns into bad outreach.

You do not need a perfect org chart, but you do need a working view of who likely feels the pain, who influences the decision, and who signs off. Plan outreach that matches each role. A practical insight, a relevant example, or a note tied to one active initiative is stronger than a generic "can I get 15 minutes?" message. The point is to match value to context, not send the same note to everyone.

End research with a single next step: send a tailored note, comment on a relevant announcement, ask for a warm intro, or share a short idea linked to a current signal. If an account has no obvious next move, your research is probably still too vague. One next action keeps momentum and stops research from turning into procrastination.

That is the point of this section: get the strategy working by hand first. Once your ICP, account dossiers, and next actions are clear, the search for ABM tools becomes much easier and much more grounded.

If you want a deeper dive, read A Guide to Account-Based Marketing (ABM) for SaaS.

Build your stack by workflow, not by brand. In a people-data market that spans 45+ platforms and budgets from fifty dollars a month to fifty thousand dollars a year, focus on covering three functions with the fewest moving parts: identify, engage, and measure.

| Function | Lightweight tool options | When to use each | Setup effort | Key risk to watch |
|---|---|---|---|---|
| Identify | LinkedIn Sales Navigator, Apollo, basic research sheet | Use a people-data tool when manual research is too slow and verification is slipping | Moderate if you add a new data source; lower if you keep one shared record | Bad data spreads fast when records lack source links and last-verified dates |
| Engage | HubSpot, Notion, disciplined inbox + task list | Use a CRM when follow-ups are missed; use a flexible workspace when account dossiers drive your process | Moderate if multiple systems must stay synced | Two sources of truth lead to dropped handoffs and duplicate outreach |
| Measure | HubSpot pipeline fields, Notion scorecard, weekly review sheet | Use the lightest setup that shows account progression, not just activity count | Low to moderate, depending on review discipline | Vanity metrics can hide stalled accounts and weak pipeline quality |

Keep a minimum account record before outreach: account, stakeholder, current signal, source URL, country, and last-verified date. Hand off to outreach only when the record includes one clear reason the account fits and one next action. Keep country visible, because compliance frameworks can vary widely across markets.
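The minimum record and handoff rule above can be sketched as a small data structure. This is only an illustration, not a prescribed schema: the class name `AccountRecord`, the helper names, and the example values are all assumptions, and the same check could live in a spreadsheet or a CRM required-field rule.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class AccountRecord:
    # Minimum fields named in the text (hypothetical field names).
    account: str
    stakeholder: str
    current_signal: str
    source_url: str
    country: str
    last_verified: Optional[date]
    # Handoff fields: one reason the account fits and one next action.
    fit_reason: str = ""
    next_action: str = ""


def missing_fields(record: AccountRecord) -> list[str]:
    """Return the names of fields that are unset or blank."""
    missing = []
    for name, value in vars(record).items():
        if value is None or (isinstance(value, str) and not value.strip()):
            missing.append(name)
    return missing


def is_outreach_ready(record: AccountRecord) -> bool:
    """Ready only when every field, including fit reason and next action, is filled."""
    return not missing_fields(record)


# Example with made-up values: research is done, but the handoff fields are empty.
draft = AccountRecord(
    account="Example Co",
    stakeholder="Head of Operations",
    current_signal="Hiring for a RevOps lead",
    source_url="https://example.com/careers",
    country="DE",
    last_verified=date(2024, 1, 15),
)
print(missing_fields(draft))     # ['fit_reason', 'next_action']
print(is_outreach_ready(draft))  # False
```

Listing the missing fields, rather than returning only a yes or no, makes it obvious what research is still outstanding before handoff.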

Pick the system you will update daily, then make it your single working record. Store touch history, next step, and do-not-contact status in one place so research and outreach do not drift apart. If you use AI agents for research or enrichment, require a human review before first outreach.

Track movement, not motion. Use a simple scorecard with progression signals such as new stakeholder identified, meaningful reply, meeting booked, proposal sent, next step scheduled, stalled 14+ days, and active pipeline value. Start tracking now, but treat any benchmark as provisional until you have verified it against CRM or platform records. Every week, de-duplicate records, re-verify stale contacts, and pause accounts with no movement and no fresh signal.
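As a rough sketch of the weekly review, the "stalled 14+ days" signal and the pause rule could be checked like this. The dictionary keys (`name`, `last_progress`, `fresh_signal`) and the sample accounts are hypothetical; only the 14-day threshold comes from the scorecard above.

```python
from datetime import date

STALL_THRESHOLD_DAYS = 14  # "stalled 14+ days" from the scorecard


def is_stalled(last_progress: date, today: date) -> bool:
    """True when no progression signal has been logged for 14 or more days."""
    return (today - last_progress).days >= STALL_THRESHOLD_DAYS


def accounts_to_pause(accounts: list[dict], today: date) -> list[str]:
    """Names of accounts with no movement and no fresh signal."""
    return [
        a["name"]
        for a in accounts
        if is_stalled(a["last_progress"], today) and not a["fresh_signal"]
    ]


# Made-up review data for illustration only.
today = date(2024, 3, 15)
accounts = [
    {"name": "Acme", "last_progress": date(2024, 3, 10), "fresh_signal": False},
    {"name": "Globex", "last_progress": date(2024, 2, 20), "fresh_signal": False},
    {"name": "Initech", "last_progress": date(2024, 2, 20), "fresh_signal": True},
]
print(accounts_to_pause(accounts, today))  # ['Globex']
```

A stalled account with a fresh signal stays active here, which mirrors the rule that you pause only when both movement and signal are missing.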

Even the best ABM tool will not make outreach compliant by default. Your advantage is operational: send fewer, better-researched messages, and keep enough record-level evidence to explain why each contact was relevant, respectful, and easy to stop.

ABM's precision targeting model gives you room to review records properly before outreach. Use that advantage.

Before first contact, confirm these fields in the live record:

If any field is missing, pause outreach for review. Keep the evidence with the account record: source link, capture date, reason for fit, trigger event, message version sent, reply status, and jurisdiction requirement status marked pending until source records confirm it.

If you use landing pages or tracking, log the consent artifact too. A consent-preference cookie can store user choices. In the source examples, consent-storage cookies are listed with a 1-year lifetime and `_GRECAPTCHA` with a 6-month lifetime.

Treat each data source as evidence to verify, not automatic permission to contact.

| Data source | Acceptable use | Risk flag | Required safeguard |
|---|---|---|---|
| Public professional profile | Research role, responsibilities, and account fit | Public visibility is not unlimited reuse | Save source URL, note role relevance, and mark jurisdiction requirements pending source verification |
| Company-published material | Tailor outreach to published initiatives and announcements | Weak signals can be overinterpreted | Store exact page and capture date |
| Website visitor or cookie signal | Support site experience and attribution checks you can explain | "Accept All" may include consent to share personal information with partners | Keep consent-preference record, purpose labels, and timestamp; verify outreach basis separately |
| Purchased or brokered contact data | Use only after provenance and permissions review | Stale records and unclear permission path | Do not enroll until source, date, and jurisdiction review are documented |
| Automated enrichment output | Use as a lead for verification | Errors can spread quickly into sequences | Require human check before first contact and keep original source link |

If a record only has an email and title, it is not outreach-ready.

A consistent structure makes review faster and mistakes easier to catch.

| Message element | What it should do |
|---|---|
| Disclosure line | Say who you are and how you found them in plain language |
| Relevance proof | Reference one specific signal tied to their role or account |
| Value-first opening | Lead with a useful observation or resource before any ask |
| Permission-respecting close | Include a clear, low-friction opt-out |
| Suppression handling | Mark do-not-contact, timestamp it, and stop follow-ups across your channels |

For cross-border campaigns, map jurisdiction before sequence logic. Then choose your consent or legitimate-interest path only after current verification for that jurisdiction, and record that decision in the account. If you cannot show source, relevance, jurisdiction check, and opt-out handling, pause scaling and review first.
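One way to picture the suppression-handling row is a single shared list that every channel consults before sending. The sketch below is a simplified in-memory version with a hypothetical `SuppressionList` class; in practice this would usually be a do-not-contact field in your CRM rather than custom code.

```python
from datetime import datetime, timezone
from typing import Optional


class SuppressionList:
    """One shared do-not-contact list that every channel checks before sending."""

    def __init__(self) -> None:
        self._opted_out: dict[str, datetime] = {}

    @staticmethod
    def _key(email: str) -> str:
        # Normalize so "Jane@X.com " and "jane@x.com" count as the same contact.
        return email.strip().lower()

    def mark_do_not_contact(self, email: str, when: Optional[datetime] = None) -> None:
        # Keep the first opt-out timestamp; repeat requests do not overwrite it.
        stamp = when or datetime.now(timezone.utc)
        self._opted_out.setdefault(self._key(email), stamp)

    def can_contact(self, email: str) -> bool:
        return self._key(email) not in self._opted_out

    def opted_out_at(self, email: str) -> Optional[datetime]:
        return self._opted_out.get(self._key(email))


# Example with a made-up address.
suppressions = SuppressionList()
suppressions.mark_do_not_contact("Jane.Doe@example.com ")
print(suppressions.can_contact("jane.doe@example.com"))  # False
print(suppressions.can_contact("sam@example.com"))       # True
```

Keeping the timestamp alongside the flag gives you the record-level evidence the section asks for when you need to show when a request was honored.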

Pay for tools when your numbers show a clear return, not when a platform demo looks sophisticated. For a business-of-one, the decision is simple: will a paid setup recover meaningful time or improve progress across your defined Target Account List (TAL) enough to justify total investment?

Enterprise spend ranges can be large ($35,000 to over $1 million annually, and higher in some large enterprises), but that context is not your budget target. Your question is narrower: can your current workflow handle your TAL without avoidable execution drag?

Start with client value, then compare it to full investment.

Use a simple worksheet:

Keep the comparison honest: ROI includes implementation effort and time-to-impact, not only subscription cost. ABM programs can take a meaningful ramp period (often 3-6 months) before significant impact appears. If useful, tighten your value assumptions first in Value-Based Pricing: A Freelancer's Guide.

Time is your scarcest asset, so baseline it first.

Track one normal week across:

Then verify current monthly hours spent from time, CRM, or platform records. Mark which hours are truly recoverable (repeat admin work) versus strategically necessary (high-quality research and personalization). Count only the hours you can reallocate to revenue-driving work without lowering quality or compliance discipline.
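To make the comparison concrete, here is a minimal first-year sketch that weighs recoverable hours against total investment. Everything in it is an assumption for illustration: the function name, the choice to count zero benefit during the ramp period, valuing implementation time at the same hourly rate, and all the example numbers. Substitute figures you have verified from your own records.

```python
def first_year_net_value(
    recoverable_hours_per_month: float,
    hourly_value: float,
    monthly_subscription: float,
    implementation_hours: float,
    ramp_months: int = 3,
) -> float:
    """Value of recovered time minus total cost over the first 12 months.

    Assumes no time is recovered during the ramp period and that
    implementation hours cost the same as billable hours.
    """
    productive_months = max(0, 12 - ramp_months)
    value_recovered = recoverable_hours_per_month * hourly_value * productive_months
    total_cost = monthly_subscription * 12 + implementation_hours * hourly_value
    return value_recovered - total_cost


# Hypothetical inputs: 6 recoverable hours a month at 100 per hour,
# an 80-per-month subscription, and 10 hours of setup.
print(first_year_net_value(6, 100, 80, 10, ramp_months=3))  # 3440
print(first_year_net_value(6, 100, 80, 10, ramp_months=6))  # 1640
```

Running the same inputs with a longer ramp shows how much time-to-impact alone can shrink the return, which is why subscription price is not the whole comparison.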

Pick one outcome metric tied to your TAL so you can test impact instead of guessing.

A practical option:

(Engaged/Won Target Accounts / Total Target Accounts) x 100

Use a baseline first, then compare after the tool has had enough time in-market. If penetration stays low, treat that as a diagnostic signal for messaging, channel choice, or TAL quality, not automatic proof that you need more software.
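As a worked example of the penetration metric, the calculation might look like the sketch below. The function name and the sample counts are hypothetical; what counts as "engaged" is a definition you need to fix before taking the baseline.

```python
def account_penetration(engaged_or_won: int, total_target_accounts: int) -> float:
    """Percentage of the target account list that is engaged or won."""
    if total_target_accounts <= 0:
        raise ValueError("total_target_accounts must be positive")
    return 100 * engaged_or_won / total_target_accounts


# Made-up numbers: a 40-account list, measured before and after a tool trial.
baseline = account_penetration(4, 40)
after_trial = account_penetration(6, 40)
print(baseline)                # 10.0
print(after_trial)             # 15.0
print(after_trial - baseline)  # 5.0
```

The comparison is only meaningful if the list and the definition of "engaged" stay the same across both measurements.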

Use operational signals, not hype, to decide whether to stay manual, go hybrid, or adopt a fuller paid stack:

If these patterns persist, evaluate tooling carefully against known implementation risks: wrong platform choice can consume 6-12 months, poor integration can reduce account matching performance, and weak analytics can block proof of impact.

| Path | Trigger condition | Expected benefit | Primary risk |
|---|---|---|---|
| Stay manual | TAL remains manageable with reliable follow-up and clear records | Lowest spend and strongest direct account familiarity | You become the execution bottleneck |
| Hybrid path | Research quality is strong, but tracking/reminders/reporting are slipping | Recovers admin capacity without full platform overhead | Tool sprawl and duplicate records |
| Adopt paid stack | Repeated leakage, inconsistent execution, and limited visibility across a growing TAL | More consistent coordination and measurable account progress | Paying for implementation mistakes, not just software |

If you cannot name the exact tasks a tool will remove and where that recovered capacity will go, wait. If you can map both clearly, the investment decision becomes operational, not aspirational.

For a solo operator, the best ABM software is usually the next tool that removes a proven bottleneck. Buy in sequence: contact management first, then data quality, then selective engagement add-ons. Map your process manually first, then automate, and keep measurement tied to CRM outcomes (pipeline, SQLs, opportunities, revenue), not vanity metrics.

| Stage | Use when | Gate before moving on |
|---|---|---|
| Starter CRM | Follow-up discipline is weak and records, stages, and follow-ups are scattered across inboxes, docs, and memory | Fix CRM workflow first |
| Data and intelligence | CRM workflow is stable but contact quality is weak | Add one data tool only after CRM hygiene is working |
| Engagement specialists | List quality and messaging are stable | Use selectively for a stable target-account list and message set |
| Enterprise suites | You are considering an enterprise suite | Require clear ownership, integration capacity, and a 90-day pilot that finance will accept |

Use this order before you buy:

1. Starter CRM: start here if your records, stages, and follow-ups are scattered across inboxes, docs, and memory.
2. Data and intelligence: add one data tool only after your CRM hygiene is working, so you can tell whether data quality is the real constraint.
3. Engagement specialists: use these selectively for a stable target-account list and message set, so outreach feels human, not synthetic.
4. Enterprise suites: treat these as criteria-based choices, not automatic upgrades. Without mature process ownership and integration discipline, complexity can outrun value.

| Tool | Best-fit use case | Core strength to verify | Implementation burden (solo) | Likely mismatch risk |
|---|---|---|---|---|
| HubSpot | Starter CRM when you need one operating record for accounts and follow-ups | Whether your pipeline stages and follow-up cadence are easy to maintain consistently | Scope to essentials first (fields, stages, reminders) | Tool is purchased, but work stays in disconnected systems |
| folk | Starter CRM when you want a lighter structure than spreadsheets | Whether your core relationship workflow stays clean without heavy setup | Depends on import quality, dedupe, and stage clarity | Reporting expectations grow faster than your setup |
| Apollo | First data/intelligence test after CRM discipline is in place | Whether contact/account coverage improves your named-account workflow | Requires QA rules and clean CRM handoff | Data volume increases, but targeting discipline does not |
| ZoomInfo | Alternative/second data test against the same account sample | Whether data quality is materially better for your target list | Requires side-by-side evaluation criteria | Evaluation overhead grows without a defined gap to solve |
| Terminus | Selective engagement once account lists and exclusions are stable | Whether audience execution maps to your verified TAM and CRM measurement | Requires list hygiene and regular optimization | Targeting complexity outpaces your process maturity |
| Sendoso | Selective human-touch programs for a small, high-value list | Whether it strengthens real human-to-human engagement in your motion | Requires operational rules and CRM logging discipline | Tactics feel performative if core messaging is still weak |
| 6sense | Enterprise-suite evaluation when cross-functional ABM operations are already mature | Whether orchestration and measurement into CRM improve core business outcomes | Validate ownership, integrations, and weekly operating load in pilot | Complexity and integration load exceed team capacity |
| Demandbase | Enterprise-suite evaluation for broad account-program coordination | Whether account-stage measurement in CRM is reliable enough to guide decisions | Validate ownership, integrations, and weekly operating load in pilot | Budget and implementation drag exceed practical return |

A sensible minimum stack for most solo teams:

Do not buy yet if:

ABM only holds up when outreach and operations run as one workflow in one record. If they split across disconnected tools or habits, you get the known failure pattern: fragmented buyer experience, dropped context, and lost deals.

Start with a quick ABM-fit check before adding process. This model is usually stronger when your cycle is long (>180 days), deal value is higher (>$30k ACV), your market is focused (<1000 target companies), and decisions involve multiple stakeholders (3+). If that is not your current reality, keep the system lighter and avoid enterprise-style overhead.
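The fit check above can be written down as four yes/no signals. The thresholds come from the paragraph; the function name, the signal labels, and the sample inputs are hypothetical, and how many signals you require before committing to ABM is a judgment call the sketch does not make for you.

```python
def abm_fit_signals(
    cycle_days: int,
    acv_usd: float,
    target_companies: int,
    stakeholders: int,
) -> dict[str, bool]:
    """Evaluate each ABM-fit threshold separately so gaps stay visible."""
    return {
        "long_cycle": cycle_days > 180,
        "high_acv": acv_usd > 30_000,
        "focused_market": target_companies < 1000,
        "multi_stakeholder": stakeholders >= 3,
    }


# Made-up profile: short cycle, but otherwise a typical ABM shape.
signals = abm_fit_signals(cycle_days=90, acv_usd=45_000, target_companies=300, stakeholders=4)
print(signals["long_cycle"])  # False
print(sum(signals.values()))  # 3
```

Returning each signal separately, instead of a single pass or fail, shows which part of your current reality argues for keeping the system lighter.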

Then run one account through this flow, with a named owner at each step:

1. Store your rationale in the account record: why the company fits, where contact data came from, likely stakeholders, and which buying signal would trigger outreach.
2. Keep replies, intent cues, and account notes in the same CRM record. Validate matching before you scale, because weak integration is linked to 30-40% lower account match rates.
3. Before drafting a proposal, lock a short commercial brief: scope summary, stakeholder notes, assumptions, and next action.
4. Put trust controls in place at agreement time: [data handling note], [scope sign-off], and [approval path].
5. Treat billing as part of delivery, not an admin afterthought. The same account record should show delivery start, invoice status, [payment terms], and follow-up owner.

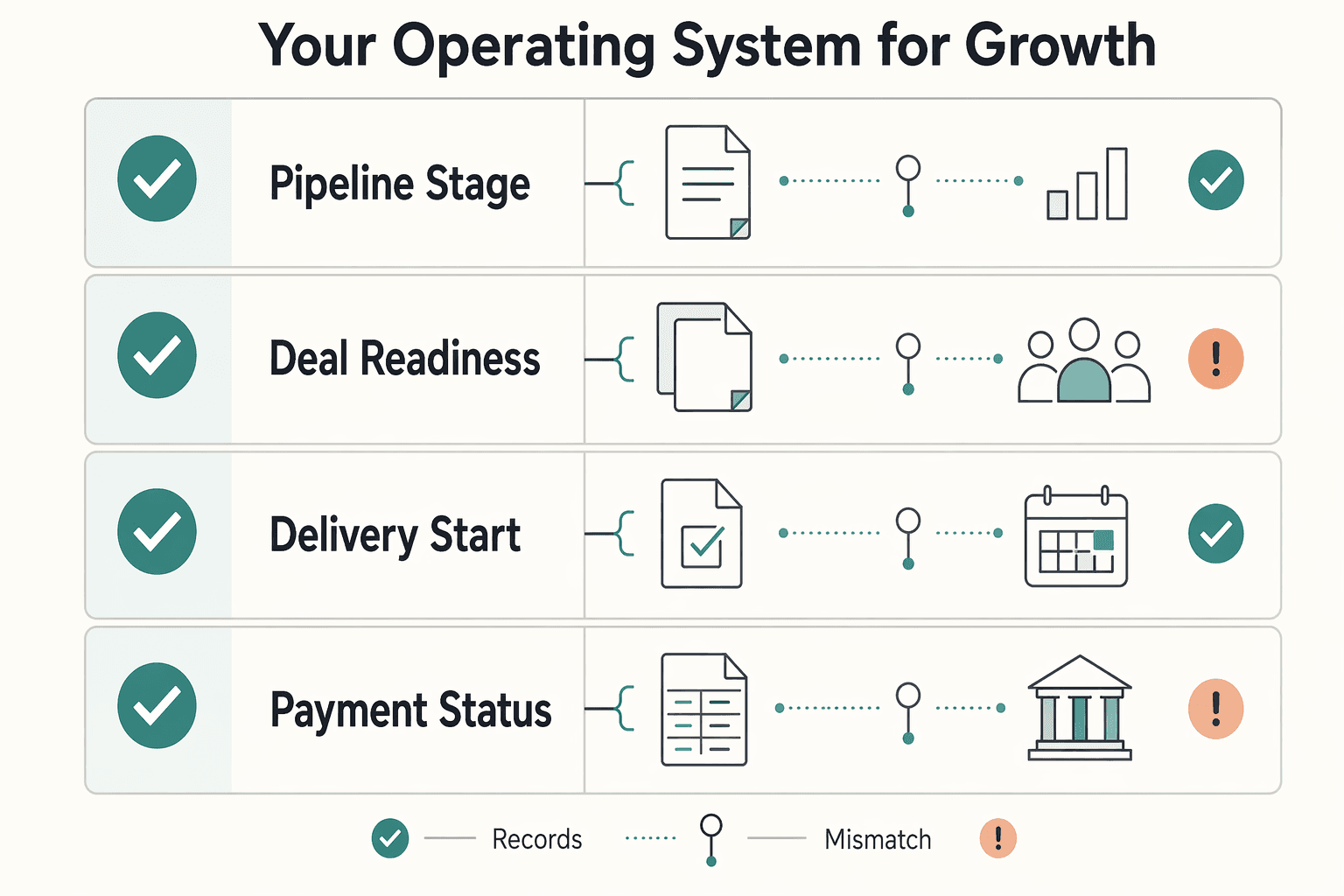

| Signal | What to check | Red flag |
|---|---|---|
| Pipeline stage | Target, active, proposal, signed, live, paid | Stage changes, owner is unclear |
| Deal readiness | Buying signals plus stakeholder confirmation | One contact responds, account is not aligned |
| Delivery start | Signed scope and kickoff date | Work starts before approvals |
| Payment status | Sent, viewed, paid, overdue | Invoice lives outside the account record |

Quick implementation checklist:

Usually, it is the next tool that fixes your real bottleneck, not the most complete platform on paper. Start with a CRM or contact hub you will actually keep clean, add a data layer when your target account list is stable, and add engagement tools last. A lightweight stack can work when one person owns follow-up and list hygiene, with the tradeoff of more manual stitching between tools.

Yes. ABM is account-centric, not lead-centric, so you can run it with a small stack when your target-account volume is manageable. Choose an all-in-one platform only when you truly need data, intent, orchestration, and analytics in one place, and you have the capacity to manage the setup.

Pick the lighter option when your team size is small, your account list is tight, and your main need is disciplined tracking plus better contact coverage. Move up only after you verify three things: team size, target-account volume, and integration requirements across your current stack. That check matters because the wrong platform can waste 6 to 12 months of implementation time.

Do not trust feature-list demos on their own. Test the same sample of target accounts in each tool, confirm how records match into your CRM, and assign ownership for fields, syncs, exclusions, and weekly cleanup before you sign. Data quality is foundational here, and one source reports poor integration can be associated with 30 to 40% lower account match rates.

This article does not cover jurisdiction-specific legal rules, so do not treat any checklist here as legal advice. Operationally, keep a simple evidence pack for each contact source: where the contact came from, why the account is on your list, and how suppression requests are handled. If you cannot document the source or intended use of a contact record, pause and verify before sending.

Use a verification rule, not a promise. Buy when manual work is clearly slowing account coverage or follow-up, and you can state what improvement you expect to test over the next 3 to 6 months. Compare total cost, not just license price, against account-level engagement and revenue impact, then validate those inputs before you commit.

Track account-level engagement and revenue impact, not just activity totals. In practice, that means watching whether target accounts move from first response to meeting, from meeting to opportunity, and from opportunity to revenue inside your CRM. If clicks go up but accounts do not progress, it may indicate issues with message quality, list quality, or follow-up discipline rather than a lack of software.

A former tech COO turned 'Business-of-One' consultant, Marcus is obsessed with efficiency. He writes about optimizing workflows, leveraging technology, and building resilient systems for solo entrepreneurs.


Educational content only. Not legal, tax, or financial advice.
