Yes, an AI co-pilot for global compliance can reduce manual risk for a solo operator if it watches your records and flags decision points before filing, invoicing, or travel. The article grounds this in concrete checkpoints like IRS residency tests, FEIE day counting, FBAR monitoring tied to FinCEN Form 114, reverse-charge invoice handling, and Schengen stay limits. You still approve final actions and escalate ambiguous cases.

If you run a cross-border business on your own, you are managing growth and compliance at the same time. In practice, that means keeping four risk areas aligned with your actual records:

| Area | Rule or threshold | Detail |
|---|---|---|
| U.S. substantial presence test | 31 days in the current year | Plus a 183-day calculation across a 3-year period |
| FEIE physical presence test | 330 full days | In a 12-month period |
| California state residency | Temporary-or-transitory standard | State rules are not uniform |
| Virginia state residency | Maintaining an abode | And spending more than 183 days in the year |
| FBAR | Aggregate foreign accounts exceed $10,000 at any point in the year | Filed on FinCEN Form 114 |
| EU invoicing | Invoices required for most B2B supplies | Some cross-border B2B services use reverse charge instead of supplier-charged VAT |
| Schengen short stays | 90 days | In any 180-day period |

In plain terms, you are juggling tax residency tracking, cross-border reporting obligations, invoicing compliance, and travel-status limits.

This gets complicated quickly. U.S. tax residency is not a universal one-year 183-day rule. The IRS substantial presence test uses 31 days in the current year plus a 183-day calculation across a 3-year period. The FEIE physical presence test uses 330 full days in a 12-month period. State rules are not uniform either. California uses a temporary-or-transitory standard, while Virginia includes maintaining an abode and spending more than 183 days in the year. Before you rely on any day count, reconcile calendar data with supporting records.
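To make the substantial presence arithmetic concrete, here is a minimal sketch of the weighted day count. The one-third and one-sixth weights come from the IRS definition of the test rather than from this article, and the function name and inputs are hypothetical. It ignores exempt days and exceptions, so treat it as a sanity check, not a residency determination.

```python
from fractions import Fraction


def meets_substantial_presence(days_current: int, days_prior_1: int, days_prior_2: int) -> bool:
    """Weighted day-count check only; exempt days and exceptions are NOT modeled."""
    # First prong: at least 31 days of presence in the current year.
    if days_current < 31:
        return False
    # Second prong: all current-year days, plus 1/3 of the first preceding
    # year, plus 1/6 of the second preceding year, must reach 183.
    # Fractions avoid floating-point rounding right at the boundary.
    weighted = days_current + Fraction(days_prior_1, 3) + Fraction(days_prior_2, 6)
    return weighted >= 183
```

For example, 120 days in each of the three years gives a weighted total of 180 and does not meet the test, while 122 days in each year gives exactly 183 and does.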

The same pattern shows up elsewhere. FBAR can be triggered if foreign accounts exceed an aggregate $10,000 at any point in the year, and it is filed on FinCEN Form 114. EU rules require invoices for most B2B supplies, and some cross-border B2B services use reverse charge instead of supplier-charged VAT. Schengen short stays are capped at 90 days in any 180-day period.
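The Schengen limit is a rolling window, which is easy to miscount by hand. The sketch below shows one way to check a proposed trip against prior stays. It assumes stays are `(entry, exit)` date pairs and that both the entry day and the exit day count as days of stay. All names are illustrative, and the result should still be verified against the official EU short-stay calculator.

```python
from datetime import date, timedelta


def schengen_days_used(stays, on_day):
    """Days of presence in the 180-day window ending on on_day (inclusive)."""
    window_start = on_day - timedelta(days=179)
    used = set()  # a set prevents double counting if records overlap
    for entry, exit_ in stays:
        day = max(entry, window_start)
        last = min(exit_, on_day)
        while day <= last:
            used.add(day)
            day += timedelta(days=1)
    return len(used)


def itinerary_is_within_limit(past_stays, planned_entry, planned_exit, limit=90):
    """Check every day of the planned trip, since the window moves daily."""
    stays = list(past_stays) + [(planned_entry, planned_exit)]
    day = planned_entry
    while day <= planned_exit:
        if schengen_days_used(stays, day) > limit:
            return False
        day += timedelta(days=1)
    return True
```

Checking each day of the planned trip, rather than only the entry date, matters because a trip can start inside the limit and cross it partway through.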

Treat an AI co-pilot for this work as a personal decision-support and monitoring layer, not a replacement for legal or tax advice. It should watch thresholds, flag changes, and help you sanity-check decisions before you file, invoice, or book travel. You still remain responsible for what gets submitted.

This use case is often underserved because many tools are built for company buyers or narrow tasks, not for one person carrying end-to-end accountability. This piece focuses on that gap, defines what a useful co-pilot should do, lays out the operating model, and gives you a practical way to judge whether a tool is actually trustworthy.

We covered this in detail in Cayman Islands LLC for Global Solopreneurs Who Want Fewer Compliance Surprises.

Many compliance AI products are built for enterprise governance, not for one person managing cross-border personal liability. They are designed to control employee behavior, document internal controls, and reduce company-level exposure. Your problem is different. You need help deciding what to do before you file, invoice, or travel.

That helps explain why this category often routes you into enterprise tooling. The market is shaped by shadow AI concerns, governance controls, and whether usage appears in an AI inventory. Those are valid company needs, but they do not automatically produce personal, action-ready guidance.

The dominant framing is organization-first: compliance, governance, and liability inside a business. A recurring concern is that employees can use consumer AI tools outside formal controls, creating governance blind spots. One cited survey summary reports only 40% of companies had an official LLM subscription, while employees in over 90% of surveyed companies reported regular use of personal AI tools.

In practice, that pushes vendors toward visibility, registration, policy enforcement, and auditability. If a product emphasizes shadow AI detection and inventory tracking, it is probably solving an enterprise buyer problem first.

You can usually spot the mismatch early. If the workflow is centered on inventory tracking, formal registration controls, and audit records, that is a strong signal. Those features are not inherently bad, but they often point to enterprise workflow design rather than personal decision support.

| Enterprise objective | Business-of-one consequence |
|---|---|
| Keep AI usage visible in an AI inventory and reduce shadow AI | Your personal triggers can still stay manual. |
| Maintain continuous visibility, validation, and remediation for exposure control | You may get monitoring depth without clear personal next-step guidance. |
| Prepare for formal governance review or regulated AI registration | You may still need to translate outputs into your own filing, invoicing, or travel decisions. |
| Reduce enterprise data leakage from employee prompts | You can still expose sensitive personal documents if data boundaries are unclear. |

One practical red flag is data handling. Enterprise risk writing repeatedly warns that external AI use can become third-party data transfer, and weak controls can lead to leaks quickly. For a solo operator, that can mean uploading sensitive personal documents without clear control over downstream use.

Before you buy, test the basics against your actual workflow:

| Check | Confirm | Detail |
|---|---|---|
| Rule coverage by jurisdiction | What regions are actually supported | What remains manual |
| Alert explainability | Plain-language reasoning | Assumptions and trigger conditions |
| Data provenance | Where outputs came from | Which inputs were used and how corrections are handled |
| Export readiness | Clear review record | Not just chat text |

If a tool cannot map your personal triggers to practical alerts, explainable reasoning, and basic what-if planning, it may not be a practical personal co-pilot. You might also find this useful: The 'Compliance-First' Philosophy: A New Approach to FinTech for Freelancers.

A personal AI co-pilot is not just a chat interface. It is decision support for your own cross-border risk. It should continuously monitor your inputs, apply rule-aware checks, and flag issues before you travel, invoice, move money, or file.

That matters because risk is often created between filing dates. When obligations span jurisdictions and change quickly, periodic manual checks can miss the moment when a decision starts to matter. A credible tool should work as an always-on checker, not a year-end helper.

Use one practical test: does it track the facts that create obligations, then clearly tell you what changed and what decision needs attention now? If not, your process is still mostly manual. At minimum, a practical capability stack can include:

| Capability | What it does | Detail |
|---|---|---|
| Trigger tracking | Tracks real thresholds and timelines | FBAR at $10,000 aggregate foreign account value during the year; FEIE at 330 full days in 12 consecutive months; Schengen short-stay limits of 90 days within any 180-day period |
| Scenario planning | Tests actions before committing | Add travel, change invoice location, or move funds to see what moves closer to a trigger and where manual review is still needed |
| Single source of record | Stores inputs and history together | Inputs, assumptions, timestamps, and output history instead of splitting context across inboxes, notes, and spreadsheets |
| Advisor-ready reporting | Exports a clean package | Travel history, threshold timelines, supporting inputs, exceptions, and alert logic |
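As one illustration of trigger tracking, the sketch below counts FEIE full days across candidate 12-month windows. It assumes you already hold a list of dates that qualify as full days abroad. Deciding which days qualify is the hard part and is not modeled here. The function names and the conservative handling of a February 29 start are assumptions made for this example.

```python
from datetime import date, timedelta


def _one_year_later(d):
    """Same calendar date next year; Feb 29 falls back to Feb 28 (assumption)."""
    try:
        return d.replace(year=d.year + 1)
    except ValueError:
        return d.replace(year=d.year + 1, day=28)


def best_full_day_count(full_days_abroad):
    """Highest number of qualifying days found in any 12-month window."""
    days = sorted(set(full_days_abroad))
    best = 0
    # The best window can always start on a qualifying day, so only
    # those start dates need to be tried.
    for i, start in enumerate(days):
        end = _one_year_later(start) - timedelta(days=1)
        count = sum(1 for d in days[i:] if d <= end)
        best = max(best, count)
    return best


def meets_physical_presence(full_days_abroad, required=330):
    return best_full_day_count(full_days_abroad) >= required
```

A tracker built this way can also report how far the best window is from 330, which is the number that matters when you are deciding whether to add a trip.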

The trust signal is not polished language. It is whether you can inspect where each output came from. That usually means visible source inputs, clear assumptions, and explicit handling for missing data.

Auditability matters most. Article 12 of the EU AI Act, for high-risk systems, emphasizes logging for traceability, and Article 14 emphasizes human monitoring and override. Even when a tool sits outside that legal scope, those are still good product controls. Show the rule used, log the trigger event, and let you correct or disregard an output.
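One way to picture those controls is an alert record that carries its own rule, inputs, assumptions, and log. This is a hypothetical structure for illustration, not the schema of any specific product, and every field name is an assumption.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Alert:
    rule: str                 # the rule that was applied
    trigger_event: str        # what changed to raise the alert
    source_inputs: list[str]  # records the check relied on
    assumptions: list[str]    # e.g. how days were counted
    missing_data: list[str] = field(default_factory=list)
    created_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))
    override_reason: Optional[str] = None
    log: list[str] = field(default_factory=list)

    def __post_init__(self):
        # Log the trigger event as soon as the alert exists.
        self.log.append(f"{self.created_at.isoformat()} triggered: {self.trigger_event}")

    def override(self, reason: str) -> None:
        """Let the human disregard the output, but only with a stated reason."""
        if not reason.strip():
            raise ValueError("an override needs a stated reason")
        self.override_reason = reason
        self.log.append(f"{datetime.now(timezone.utc).isoformat()} overridden: {reason}")

    @property
    def needs_review(self) -> bool:
        # Missing data is surfaced explicitly instead of hidden.
        return bool(self.missing_data)
```

The design choice worth noting is that an override appends to the log instead of replacing the alert, so the original trigger and the human decision both survive for a later handoff.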

If confidence is low, the right behavior is escalation, not false certainty. For example, when a Schengen warning appears, you should be able to verify the date logic against the official EU short-stay calculator before travel.

| Decision moment | Manual workflow | Co-pilot workflow |
|---|---|---|
| Before booking travel | Rebuild day counts across calendars, emails, and notes | Checks prior stays automatically, flags risk early, and tests a proposed itinerary |
| During the year | Review thresholds occasionally, often after the fact | Monitors triggers continuously and alerts as you approach or cross a threshold |
| At filing time | Assemble records from multiple places under time pressure | Exports an advisor-ready package with dates, inputs, assumptions, and alert history |
| Error exposure | High if one trip, account, or invoice is missing | Can be reduced with connected sources, visible data gaps, and logged corrections |
| Decision speed | Slow because each question starts from scratch | Can be faster because prior facts and rules are already mapped |

Use this buying rule: if a tool cannot show source inputs, support overrides, and route low-confidence cases to a human advisor, it is not acting like an early guardian.

For a step-by-step walkthrough, see Beyond Spreadsheets: The Rise of the AI-Powered 'Virtual CFO' for Global Professionals.

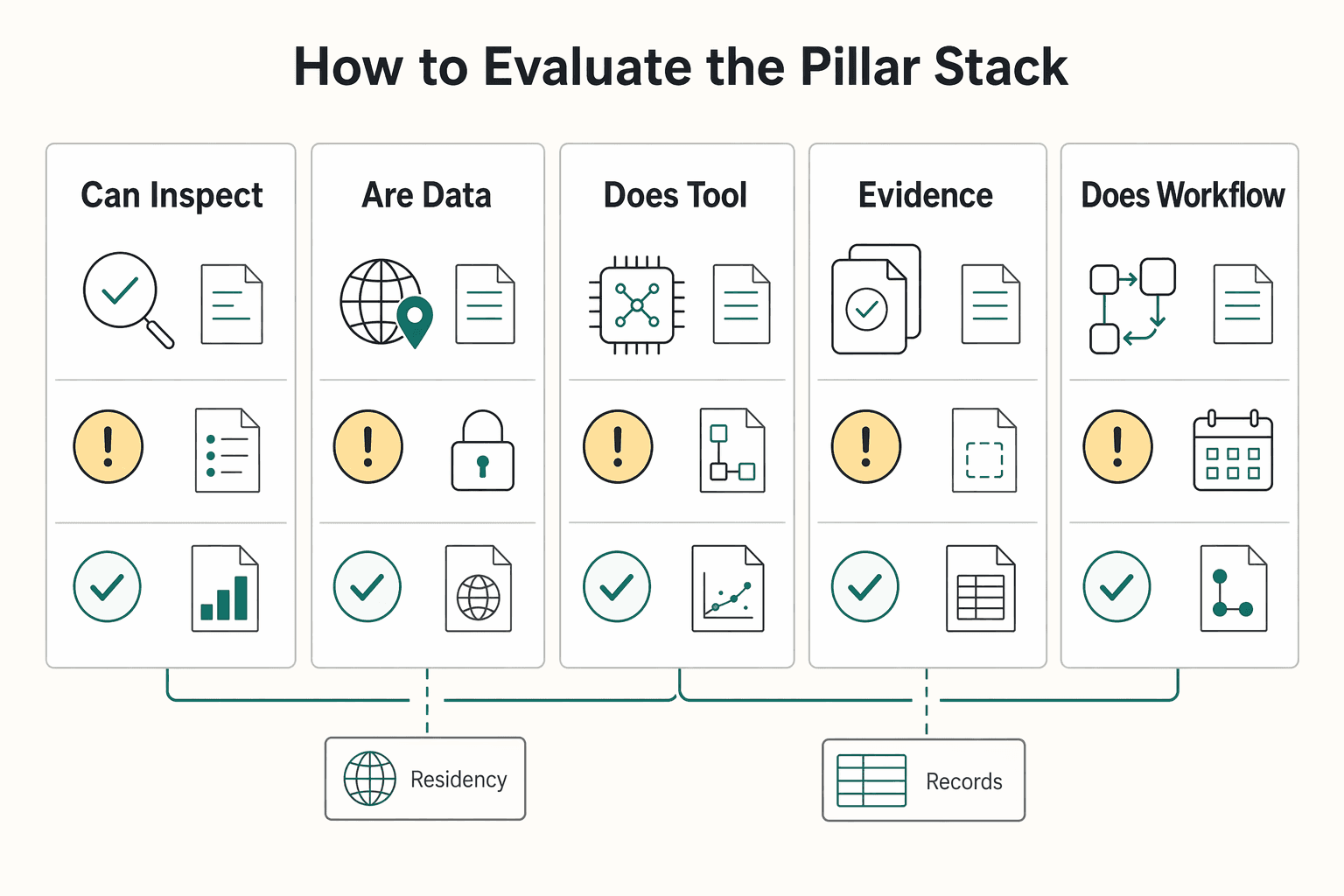

Use one standard: does it help you make the next decision with traceable inputs, clear limits, and a defined escalation path when confidence is low? Many AI frameworks describe three pillars as experience, agentic capabilities, and governance. For a business-of-one, those controls still need to hold across tax and residency, money and invoices, and travel workflows. Treat this as an operating framework, not a substitute for legal or tax advice. The pillars only work if the same controls run through all of them: visible inputs, controlled data flow, and explicit exception handling.

The tax and residency pillar should help you decide before you act, not after filing season. You need tracking and forecasting that show what changed, which rule set was applied, and where human review is still required.

A useful decision flow is straightforward:

The key trust check is inspectability. You should be able to open an alert and see source inputs, timestamps, assumptions, and any override. If you cannot, you are relying on a black box.

Security matters here too. AI security is not just perimeter security. You should know where your records can flow, who can access them, and whether controls comparable to sensitivity labels and DLP boundaries are enforced in AI workflows.

The money and invoices pillar should answer one practical question: can you issue this invoice now and explain how it was prepared later? Strong tools preserve an evidence trail, handle exceptions clearly, and avoid pushing sensitive buyer data into uncontrolled AI prompts.

Use this comparison when evaluating options:

| Check | Fragile setup | Stronger setup |
|---|---|---|
| Jurisdiction coverage | Generic invoice output with unclear scope | States supported invoice scenarios and flags unsupported scenarios |
| Evidence trail | No durable record of buyer-data checks | Logs validation results, timestamps, invoice version, and source inputs |
| Exception handling | Vague warnings or silent failure | Explicit exception state, missing-data flags, and required review path |
| Accountant handoff readiness | Invoice file only | Export includes assumptions, buyer details used, overrides, and alert history |

Also test two failure modes directly. One is hidden data flows from ad hoc AI usage, often described as shadow AI. The other is document-based prompt injection that can override intended safeguards. No tool can guarantee perfect prevention; if a tool cannot explain how it reduces these risks or what it does when extraction confidence is low, treat that as an operational gap.

The travel pillar should protect future options before you book. Every time you plan a trip, you should be able to review three outputs: remaining allowance from your current records, scenario simulation for the proposed itinerary, and conflict warnings when one destination choice affects another.

The alert logic should be simple and useful:

Reliability matters more than interface polish. You should be able to verify trip history and see the date basis used for calculations. If one record is missing or duplicated, the plan can look safe when it is not.
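A basic record check can catch some of these problems before any day count runs. The sketch below assumes trips are stored as `(entry, exit)` date pairs and flags duplicates, overlaps, and inverted dates. It cannot detect a trip that was never recorded, so reconciling against bookings and stamps is still a manual step. The function name is illustrative.

```python
from datetime import date


def find_record_problems(trips):
    """Return human-readable problems found in a list of (entry, exit) pairs."""
    problems = []
    seen = set()
    for entry, exit_ in trips:
        if exit_ < entry:
            problems.append(f"exit before entry: {entry} to {exit_}")
        if (entry, exit_) in seen:
            problems.append(f"duplicate record: {entry} to {exit_}")
        seen.add((entry, exit_))

    # Overlap check: walk trips in entry order and remember the latest
    # exit seen so far. A same-day exit and re-entry is not flagged.
    latest_exit = None
    for entry, exit_ in sorted(seen):
        if latest_exit is not None and entry < latest_exit:
            problems.append(f"overlapping record starting {entry}")
        if latest_exit is None or exit_ > latest_exit:
            latest_exit = exit_
    return problems
```

An empty result only means the records are internally consistent, not that they are complete.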

When you compare tools, stay close to the operating details:

If a product is weak on explainability or handoff evidence, a polished interface does not reduce your real compliance risk. Related: How to use AI Tools to Supercharge Your Freelance Business.

Before committing to any co-pilot, pressure-test your process with a simple workflow in the tax residency tracker.

You are still the decision-maker. A useful co-pilot can reduce compliance workload by handling repetitive monitoring and rule checks, while you keep context, tradeoff decisions, and final approval.

Compliance failures can come from small misses, not just dramatic mistakes: a travel-day count that drifted, an invoice assumption that was wrong, or an obligation noticed too late. A good tool watches the facts that move underneath your business and flags risk early. That includes Schengen travel approaching 90 days in any 180-day period, foreign account balances crossing the FBAR $10,000 aggregate threshold, or invoice cases where VAT liability may sit with the customer in a reverse-charge scenario.

The tool is strongest at three jobs: monitoring, normalization, and flagging. It can consolidate inputs, keep date logic current, and surface threshold changes for review.

It is weaker where context is missing or the rule itself is ambiguous. If travel records are incomplete, buyer details are missing, or account data is partial, outputs can look clean while risk is understated. The right promise is earlier flags and clearer tracking, not guaranteed outcomes.

For tax, AI output should not be treated as final advice without review. The IRS Taxpayer Advocate warns against relying solely on AI-generated tax advice and reminds taxpayers that they remain responsible for what is reported.

Before you act on any alert, check the source inputs, timestamps, assumptions, and overrides. Also check whether low-confidence or missing data is clearly flagged, and whether the decision affects travel, money movement, or a filed position enough to require advisor input.

Practical checks should stay simple. If FBAR exposure is flagged above $10,000, verify balances and filing timing, including the April 15 due date and automatic extension to October 15. If a Schengen warning appears, verify the trip history used in the rolling 180-day lookback. If reverse-charge risk is flagged, confirm transaction facts before approving invoice treatment.
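The FBAR check can be sketched as a simple aggregate. This version assumes you supply each account's peak value for the year, already converted to USD, and it sums those peaks. That is a conservative shortcut that can overstate the true same-day aggregate. Conversion rates, account scope, and date shifts for weekends or holidays are not modeled, and the names are hypothetical.

```python
from datetime import date

FBAR_THRESHOLD_USD = 10_000


def fbar_check(peak_usd_by_account, calendar_year):
    """Flag possible FBAR exposure from per-account peak values (in USD)."""
    # Summing per-account peaks is an assumption of this sketch: the peaks
    # may fall on different days, so this errs toward flagging.
    aggregate = sum(peak_usd_by_account.values())
    return {
        "aggregate_peak_usd": aggregate,
        # The threshold is "exceeds", so exactly 10,000 is not flagged.
        "flag": aggregate > FBAR_THRESHOLD_USD,
        "due": date(calendar_year + 1, 4, 15),
        "extended_due": date(calendar_year + 1, 10, 15),
    }
```

A flag here is a prompt to verify balances and account scope against statements, not a filing conclusion.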

If a vendor references explanatory summaries of the EU AI Act, treat that as guidance only, not binding legal text. Verify the legal text or get advice when exposure is material.

Define responsibilities before you rely on automation. NIST calls for explicit human-AI role definitions, and Article 14 of the EU AI Act emphasizes human ability to monitor, interpret, and override outputs for high-risk AI systems.

| Area | Tool strength | Your role | Override or escalate when |
|---|---|---|---|
| Travel-day tracking | Continuously counts days and checks rolling limits, including 90 days in any 180-day period | Confirm trip records, planned dates, and counting basis | Override for incorrect or incomplete dates; escalate if plans affect residency or immigration options |
| Invoice rule checks | Flags missing buyer data and possible reverse-charge cases | Confirm transaction facts and approve invoice treatment | Override if client or supply facts are wrong; escalate when VAT treatment is unclear |
| Obligation alerts | Tracks thresholds and deadlines, including FBAR exposure above $10,000 and April 15 or October 15 timing | Decide whether to file, gather records, or pause for review | Override if accounts are missing or misclassified; escalate when filing position is uncertain |
| Edge-case interpretation | Surfaces patterns and potential issues | Apply business context and risk tolerance | Escalate to qualified tax or legal professionals for complex or ambiguous cases |

Use one operating rule: the tool should never be the last unreviewed step before filing, invoicing, or booking. If you cannot inspect, override, and hand off with a clean evidence trail, you have shifted uncertainty rather than reduced it.

This pairs well with our guide on A Guide to 'Duty of Care' for a Company with Global Employees.

The practical shift is straightforward: move from reactive, after-the-fact cleanup to earlier checks and clear stop points before you file, invoice, move money, or book travel.

That is the value of a compliance co-pilot when you use it with discipline. Treat time savings conservatively. The immediate benefit is better operating control when manual compliance and risk processes no longer keep pace.

| Before | After |
|---|---|
| Issues are found after a draft, message, or internal output is already moving. | Checks happen earlier, so problems can be caught before release. |
| Compliance context can be scattered across notes, inboxes, and files. | Inputs and checks can be centralized, which can make decisions faster when records are complete. |
| Limits are assumed rather than tested. | Limits are explicit, and security outcomes depend on implementation quality. |
| Human review is late or inconsistent. | Human review is explicit at the decision point, especially for sensitive financial, customer, vendor, pricing, or operational data. |

A useful rule is simple: automate first-pass checks, keep final approval with you. A practical checkpoint pattern is to run those checks before you file, invoice, or book travel, then review the supporting records yourself.

For any output, use the same guardrails. AI can improve speed and efficiency, but it can also raise security anxiety when sensitive business data is involved. Keep source scope tight, confirm what the tool actually reviewed, and require signoff for consequential decisions.

If you want a deeper dive, read Value-Based Pricing: A Freelancer's Guide. If you want to verify coverage, policy gates, and audit-trail fit for your setup, review the Gruv docs.

It can help if you configure rule-based workflows and provide complete travel records, but the sources reviewed for this article do not confirm a built-in tax-residency or visa-window calculator. Use outputs as decision support, not a final determination. You still need to review trip records, date assumptions, and overrides before acting. If stamps, bookings, or calendar data are missing, the result can look precise and still be wrong.

It can support rule-based checks, but fixed filing-trigger coverage is not confirmed in the sources reviewed for this article. Microsoft describes AI approvals as approve-or-reject steps against predefined criteria, with people overseeing important decisions. You still need to verify account scope, statement dates, classifications, and conversion logic. If source records are incomplete, any result can understate exposure.

Yes, especially when your checks can be expressed as approval rules. Microsoft describes AI approvals as automated approve-or-reject steps that can also process unstructured inputs, including attached PDFs or images. That means you can configure screening for missing fields before final review. You still own final invoice treatment and any tax position. If intake facts are wrong, the tool can return a clean decision on top of bad inputs.

Choose based on governance and data access needs, not hype. The cited Microsoft material does not prove one option is always better than spreadsheets or enterprise regtech, but it does show concrete tradeoffs: Copilot Studio lite favors quick, simple builds, while the full version supports deeper control. Also, Microsoft 365 Copilot Chat is web-grounded, but without a Microsoft 365 Copilot license it cannot access shared enterprise data, individual data, or external data indexed via Microsoft Graph connectors. In that case, you may need to upload files directly and verify coverage each cycle.

Start with the knowledge sources, because Microsoft states that responses depend on the internal and external sources configured during setup. Then verify timestamps, uploaded files, and data completeness before using the output for a real decision. As a recency check, confirm current product documentation. The Copilot Studio FAQ excerpt is marked last updated 9/4/2025. If your policy or source set is outdated, treat the answer as a draft, not a decision.

Hand off when the case is critical, exceptional, or difficult to reverse. Microsoft’s AI approvals guidance says people should oversee and finalize important decisions, so you should escalate when a result is material. Use the tool to collect records, summarize facts, and surface conflicts first. Escalate immediately if records are missing, sources conflict, or the rule requires legal or tax interpretation.

A career software developer and AI consultant, Kenji writes about the cutting edge of technology for freelancers. He explores new tools, in-demand skills, and the future of independent work in tech.

With a Ph.D. in Economics and over 15 years of experience in cross-border tax advisory, Alistair specializes in demystifying cross-border tax law for independent professionals. He focuses on risk mitigation and long-term financial planning.

Educational content only. Not legal, tax, or financial advice.
