Build your SOW as an execution file before setting dates. Put signed client authority, approved and excluded assets, operational contacts, and pending pre-start checks into one authorization packet. Pair it with written ROE instructions, and require a documented, dated change approval whenever a target, method, environment, or deadline shifts. Then map invoicing and final reporting to observable events so readiness delays and scope changes are recorded instead of handled informally.

A solid SOW is not just a polished document. It is the control point that tells your team whether this exact test can start, what it can touch, who can make live decisions, and how changes get approved. If one reviewer cannot verify approval, in-scope targets, exclusions, and the change path in one place, do not start testing.

That framing matters because many engagement problems do not begin with a technical mistake. They begin with a file that looks complete until someone needs to act quickly. A tester sees a hostname that might be covered. A client asks for one more environment. Access is not ready at kickoff, but the calendar slot is already blocked. A report draft uses broader language than the signed scope. In each case, the issue is not writing quality. It is whether the SOW package gives the team a usable operating answer.

A good SOW therefore has to do two jobs at once. It has to read clearly enough for commercial and client review, and it has to function as an execution control for the delivery team. If it only satisfies the first job, the team ends up improvising under pressure. If it only satisfies the second, the client may not understand what was approved. The durable version is the one that lets a reviewer, scheduler, tester, and report owner all reach the same answer from the same record.

The practical test is simple: can a new reviewer open the engagement file and, without reconstructing intent from scattered messages, determine what is approved, what is excluded, what assumptions must hold, who can decide live issues, and what happens if anything changes? If not, the document set is still a draft in operational terms, even if everyone informally thinks the project is ready.

Use the Penetration Testing Execution Standard (PTES) as guidance for pre-engagement flow, not as contract authority. In the available excerpt, PTES is presented as testing guidance and explicitly references pre-engagement interactions, a Scoping Meeting, and Scope Creep. The practical takeaway is simple: finish scoping decisions before the engagement is treated as live.

That does not mean every business detail has to be elegant. It means the decisions that control delivery need to be settled before the team is asked to execute. If the engagement is "starting" while key approvals still sit in open threads, or while basic scope questions are being answered verbally, your team is already carrying risk that should have been removed upstream.

That baseline matters because the rest of the file should let your team move quickly without guessing.

A useful way to think about pre-start is that this is the last point where ambiguity is cheap. Before kickoff, you can still return a draft for clarification without disrupting delivery. After kickoff, the same ambiguity turns into a live call with schedule pressure attached. The discipline here is not bureaucratic. It is operational. You want the file to absorb uncertainty before people are on the clock.

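In practice, "finish scoping decisions" means more than getting general agreement that testing will happen. It means the record is mature enough to answer each of these questions directly: what is approved, what is excluded, which assumptions must hold, who can decide live issues, and what happens if anything changes.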

If any of those answers depends on memory, context, or a certain person being available to explain what everyone "meant," then the scoping stage is not actually finished.

This is also the point to separate momentum from readiness. A team may have calendar holds, internal staffing plans, and a client expecting a start date. None of that converts an incomplete record into an approved one. If the file does not prove the approval and operating rules, the right move is to pause the start decision, not to hope the missing detail can be sorted out after testing begins.


Start by building one engagement-specific record that shows this exact test is cleared to begin.

Keep the current approval record, approved target list, exclusions, live decision contact, and any unresolved client dependencies together. The goal is not paperwork for its own sake. It is to make sure a reviewer can confirm, in one pass, what is approved and who has authority if something changes.

Go or no-go rule: if a reviewer cannot confirm the approved targets and the decision-maker in one pass, pause start and scheduling.
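As a rough illustration of the go or no-go rule, the packet can be modeled as one record with a single review function. This is a minimal sketch, not a prescribed format: the `AuthorizationPacket` class, its field names, and the example hostnames are all hypothetical, and a real packet would hold signed documents rather than strings.

```python
from dataclasses import dataclass, field


@dataclass
class AuthorizationPacket:
    """Hypothetical single record for one engagement (illustrative fields only)."""
    approval_record: str          # reference to the current signed approval
    approved_targets: list[str]
    exclusions: list[str]
    live_decision_contact: str
    open_dependencies: list[str] = field(default_factory=list)


def one_pass_review(packet: AuthorizationPacket) -> list[str]:
    """Return the reasons to pause start and scheduling; an empty list means go."""
    holds = []
    if not packet.approval_record.strip():
        holds.append("no approval record tied to this engagement")
    if not packet.approved_targets:
        holds.append("no approved targets listed")
    if not packet.live_decision_contact.strip():
        holds.append("no live decision contact named")
    # A name that appears on both lists is an inconsistency, not a permission.
    overlap = set(packet.approved_targets) & set(packet.exclusions)
    if overlap:
        holds.append(f"listed as both approved and excluded: {sorted(overlap)}")
    return holds


packet = AuthorizationPacket(
    approval_record="signed-approval-v3",
    approved_targets=["app.example.test", "api.example.test"],
    exclusions=["api.example.test"],
    live_decision_contact="",
    open_dependencies=["VPN access not yet confirmed"],
)
for reason in one_pass_review(packet):
    print("HOLD:", reason)
```

Open dependencies are carried in the record but do not produce a hold in this sketch, on the assumption that they need to be visible rather than automatically blocking.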

The key phrase here is "one engagement-specific record." Do not make the team reconstruct approval from a proposal attachment, a calendar invite, a few message fragments, and a separate note someone saved locally. If the evidence exists but is spread across too many places, it will fail exactly when you need speed and clarity.

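A strong authorization packet usually works because it is assembled for use, not just storage. The reviewer should be able to open it and answer the core questions in a fixed sequence: who approved this engagement, which targets are approved, what is excluded, who makes live decisions, and which client dependencies are still open.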

If those answers appear only after cross-referencing several versions or interpreting shorthand, the packet is not ready.

One practical way to keep the packet useful is to organize it around proof rather than convenience. That means the contents should exist because they support a start decision, not because they are traditional attachments. The packet should let a stranger verify the engagement without needing background explanation from the original drafter.

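At minimum, the packet should pull together the current approval record, the approved target list, the exclusions, the live decision contact, and any unresolved client dependencies, and make their relationship obvious.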

The emphasis on "current" matters. A record that was accurate two versions ago is not enough if later edits changed targets, timing, or assumptions. The packet has to reflect the state of the engagement you are actually about to run, not a prior working draft that happened to be approved earlier.

It also helps to make the packet internally coherent. Target names should be written the same way each time. Environments should not be shortened in one place and expanded in another if that creates doubt about whether they match. If an exclusion exists, it should not appear elsewhere as if it were merely deferred or optional. Tiny inconsistencies create large problems later because people read them as permission when time is tight.

When you assemble the packet, watch for four common failure modes.

First, approval exists but is not tied to this exact engagement. This often shows up when someone points to a general approval of "the test" without a record that clearly matches the current scope, environment, or timing. If the file cannot tie the approval to the exact work being scheduled, treat that as incomplete.

Second, target approval exists at a higher level than execution requires. A broad statement that a system is approved may not answer whether a specific asset, environment, or activity is approved. If the delivery team needs a more exact answer than the packet provides, the packet is not yet practical.

Third, live authority is assumed rather than recorded. Teams often think they know who to call if something changes. That is not enough. The packet should name the live decision path so the tester does not have to guess whether the right contact is commercial, technical, or procurement-side.

Fourth, unresolved client dependencies are hidden instead of surfaced. An unresolved dependency does not necessarily block the engagement forever, but it does need to be visible. If access, contact readiness, or another client-side condition is still open, the packet should show that clearly so nobody mistakes an assumption for a settled fact.

If you want the packet to survive handoff between teams, write it so it can support three moments: scheduling, kickoff, and audit after the fact. At scheduling, it tells you whether to commit the date. At kickoff, it tells the team how to proceed. After the engagement, it tells you what was actually approved and whether execution stayed inside that boundary.

That is why one-pass review matters so much. A one-pass review is not about speed for its own sake. It is about removing interpretation from the start decision. If one reviewer says "go" and another says "I think so," the packet still needs work.

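When you review the packet, use direct questions. Is the approval tied to this exact engagement? Are targets approved at the level of detail execution requires? Is live authority recorded rather than assumed? Are unresolved client dependencies visible?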

If any answer is no, pause start and scheduling until the packet becomes self-explanatory.


The test team needs scope language it can act on under time pressure. Use these working definitions:

| Category | Meaning | If issue appears |
|---|---|---|
| In scope | Only assets, environments, and activities explicitly approved for this engagement | Proceed only within the explicit approval |
| Out of scope | Anything explicitly excluded or not clearly approved | Treat it as out of scope until it is clarified in writing |
| Assumptions | Client-side conditions required for timing or coverage, such as access or contact availability | Pause, record it, and decide whether timing, coverage, or commercial terms need to change |

The operating rule is straightforward: if an item is unclear, treat it as out of scope until it is clarified in writing. If an assumption fails, pause, record it, and decide whether timing, coverage, or commercial terms need to change.
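The operating rule above amounts to a deny-by-default check. The sketch below is one way to express it, assuming approval is recorded as exact (target, activity) pairs; the `classify` function, the `ScopeStatus` enum, and the example names are hypothetical and not part of any standard.

```python
from enum import Enum


class ScopeStatus(Enum):
    IN_SCOPE = "in scope"
    OUT_OF_SCOPE = "out of scope"


def classify(target: str, activity: str,
             approved: set[tuple[str, str]]) -> ScopeStatus:
    """Only an exact (target, activity) pair in the approved set is in scope.

    Anything else, including items that merely look related, defaults to
    out of scope until it is clarified in writing.
    """
    if (target, activity) in approved:
        return ScopeStatus.IN_SCOPE
    return ScopeStatus.OUT_OF_SCOPE


# Illustrative approval list; real entries come from the signed scope.
approved = {("app.example.test", "authenticated web testing")}

print(classify("app.example.test", "authenticated web testing", approved).value)
print(classify("app.example.test", "denial-of-service testing", approved).value)
print(classify("staging.example.test", "authenticated web testing", approved).value)
```

Matching on the pair rather than the target alone reflects that scope covers activities as well as assets: the same asset can be approved for one form of work and not for another.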

This is where many avoidable problems start. Vague scope forces live judgment calls that should have been settled before kickoff.

The point of scope drafting is not to sound complete. It is to give the delivery team an execution boundary they can trust. That means scope should answer the practical question the tester will face in the moment: Can I touch this, or not?

That answer gets harder when scope is written at the wrong level of detail. If it is too loose, the team has to interpret it. If it is too fragmented, the file becomes hard to read and maintain. The right balance matches how the work will actually be performed. You do not need decorative language. You need language that removes doubt.

A useful discipline is to separate three different things that are often blended together: what is approved, what is excluded, and what is assumed.

Those are not interchangeable. An exclusion is not the same as a failed assumption. A dependency issue is not the same as a permanent limit on scope. If the draft mixes them together, the team can easily misread a timing problem as permission to improvise or misread a narrow exclusion as a temporary blocker.

For example, the working definitions above already give the right pattern for assumptions: they are client-side conditions required for timing or coverage, such as access or contact availability. That means assumptions should be written so that failure has a predictable consequence. If access is not ready, what happens? If the live contact is unavailable, what happens? The file does not need ornate language, but it does need an answer that the team can follow without debate.

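Scope language also needs to work under pressure. Imagine the most common live questions: a tester finds a hostname that might be covered, or the client asks for one more environment mid-test.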

In every case, the SOW should push the team toward the same rule: if it is not clearly approved, it is out of scope until clarified in writing.

That rule matters because it stops good intentions from becoming unauthorized work. Most scope drift does not begin with misconduct. It begins with a plausible assumption made in the name of efficiency. "We are already here." "This looks related." "The client seems comfortable." None of those are substitutes for explicit approval.

When you write the scope, aim for language that lets the reader distinguish between named approval and contextual guesswork. You want the boundary to be visible. If an item is approved because it is explicitly named, say it that way. If something is excluded, make that visible too. If something depends on client readiness, write that as an assumption rather than letting it hide inside the schedule.

The phrase "activities explicitly approved for this engagement" also deserves attention. Scope is not only about targets. It is also about what the team is permitted to do with them. If the activity itself is not clearly approved, broad target language will not save you. The same asset can be in scope for one form of work and not for another. If the delivery team would need to infer the method from surrounding context, the scope language is not yet complete enough for live use.

A practical drafting method is to review scope from the point of view of the person least able to ask follow-up questions in real time. That person needs clear yes-or-no guidance. If the language would force them to stop and ask what someone intended, you have not finished translating the commercial agreement into operational instructions.

This is also where assumptions need more discipline than they usually get. Many teams list assumptions as a soft appendix, but they are often the hinge on which timing and coverage actually turn. If an assumption is material enough that its failure would change the engagement, write it in a way that makes the failure visible and practical. The working definitions above already give the right response pattern: pause, record it, and decide whether timing, coverage, or commercial terms need to change.

That sequence matters: pause first, record the failure second, and decide third.

If you skip the recording step, later teams will argue about what happened. If you skip the decision step, the engagement can continue on assumptions that are no longer true.
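One way to keep the recording and decision steps from being skipped is to make them explicit in whatever log the team uses. The sketch below assumes a simple in-memory log; `AssumptionFailure`, `record_failed_assumption`, and `can_resume` are hypothetical names for illustration only.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AssumptionFailure:
    """Hypothetical log entry for a failed client-side assumption."""
    assumption: str
    recorded_at: datetime
    decision: Optional[str] = None  # for example "reschedule" or "reduce coverage"


def record_failed_assumption(assumption: str,
                             log: list[AssumptionFailure]) -> AssumptionFailure:
    """Record the failure with a UTC timestamp; work stays paused for now."""
    entry = AssumptionFailure(assumption, datetime.now(timezone.utc))
    log.append(entry)
    return entry


def can_resume(entry: AssumptionFailure) -> bool:
    """Work resumes only after a decision has been written into the entry."""
    return entry.decision is not None


log: list[AssumptionFailure] = []
entry = record_failed_assumption("VPN access ready at kickoff", log)
print(can_resume(entry))   # no decision recorded yet
entry.decision = "reschedule"
print(can_resume(entry))
```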

The phrase "clarified in writing" matters for the same reason. Verbal comfort is not a control. Written clarification creates a stable point the team can refer back to. It also supports reporting later, because the report owner can map what happened against the same record that governed live work.

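When you review scope quality, ask a narrower set of questions than people usually do. Can a tester tell from the file alone whether a given asset can be touched? Is each approved activity named rather than implied? Does each material assumption state what happens if it fails?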

If the answer to any of those is no, the scope still needs work. The danger is not only misunderstanding at kickoff. It is that the team will create its own unwritten rule set during delivery, and that unwritten rule set will usually be broader and harder to defend than the signed one.


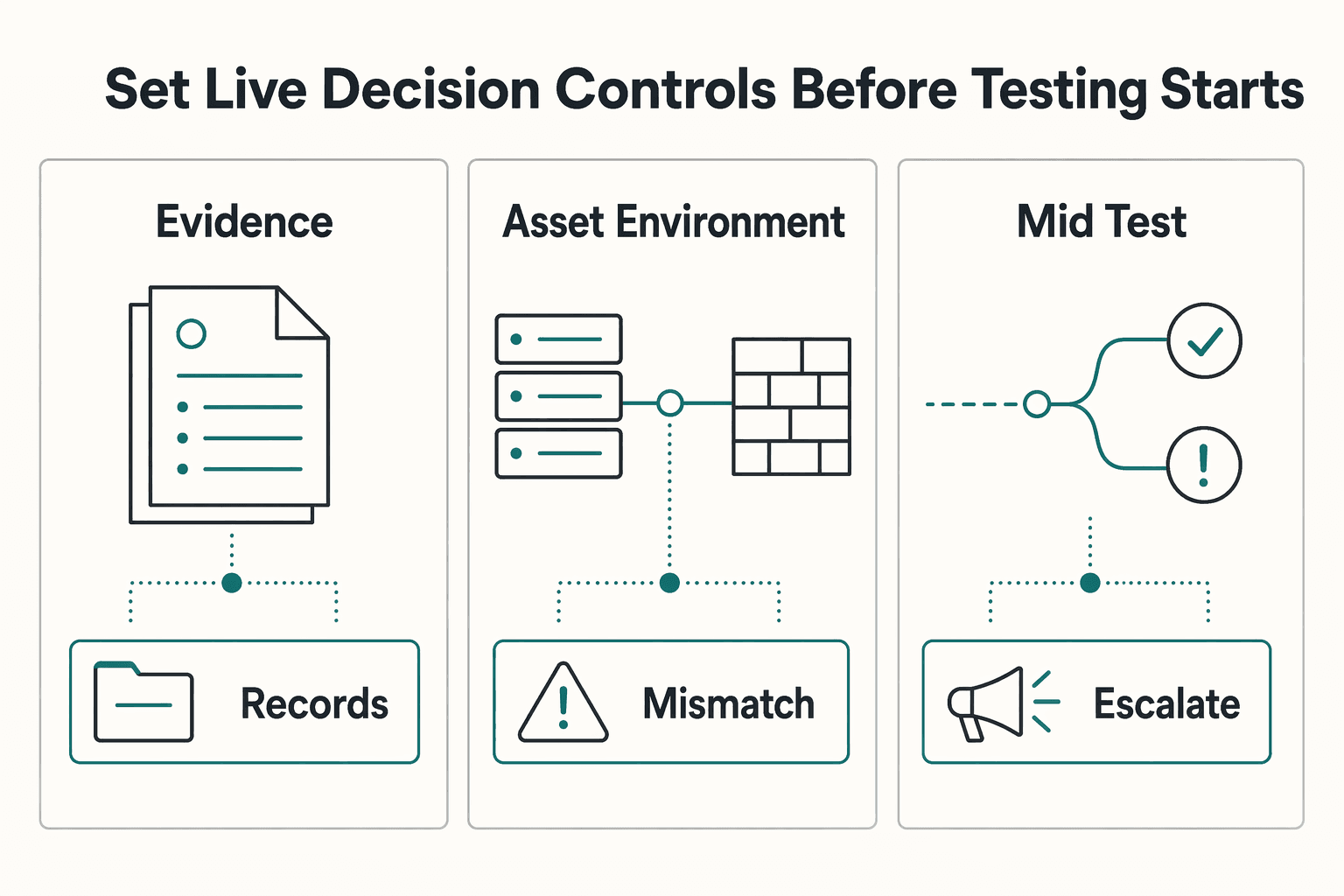

Before testing begins, decide how live decisions will actually be handled.

Use amendment as the working term for a dated written change to scope, timing, environment, method, or commercial terms.

The rule here should be strict: review change requests, but do not perform expanded work until the amendment is signed.

| Trigger | Required proof | Next action | Record to retain |
|---|---|---|---|
| Approval authority is unclear before kickoff | Current written approval tied to this engagement | Pause start | Approval record in the engagement file |
| Asset or environment is not clearly named | Approved scope list that explicitly includes it | Decline and escalate for clarification | Scope question log and client response |
| Client dependency fails (for example, access/contact readiness) | Evidence of failed assumption | Pause or reschedule per recorded assumptions | Timestamped dependency note and client notification |
| Mid-test request changes targets, methods, timing, or environment | Dated written amendment | Stop expanded work until signed | Signed amendment and version history |
| Procurement-bound request appears to exceed existing buying approval | Buyer confirmation on routing/coverage | Escalate instead of assuming coverage | Buyer instruction and updated engagement record |

Treat informal "while you are in there" requests as potential scope creep and run them through the same change control.
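The amendment rule can be read as a gate with exactly one passing condition: a signature dated on or before the day the expanded work is performed. The following is a minimal sketch under that assumption; the `Amendment` class and `may_perform_expanded_work` function are hypothetical, and the dates are illustrative.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Amendment:
    """Hypothetical dated written change to scope, timing, environment, or method."""
    description: str
    requested_on: date
    signed_on: Optional[date] = None  # stays None until the amendment is signed


def may_perform_expanded_work(amendment: Amendment, today: date) -> bool:
    """Reviewing a request is always allowed; performing it requires a signature
    dated on or before the day the work is done."""
    return amendment.signed_on is not None and amendment.signed_on <= today


request = Amendment("add staging environment", requested_on=date(2024, 1, 10))
print(may_perform_expanded_work(request, date(2024, 1, 12)))  # unsigned request

request.signed_on = date(2024, 1, 11)
print(may_perform_expanded_work(request, date(2024, 1, 12)))  # signed the day before
```

An informal "while you are in there" request would enter this flow as an unsigned `Amendment`, which is why it cannot authorize work on its own.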

The important thing about this step is that it turns the SOW from a static description into a living control system. Testing rarely goes exactly to plan. The value of the ROE is not that it predicts every event. It is that it tells the team how to handle the events that matter without improvising authority.

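A good ROE answers process questions the SOW alone often leaves open: who pauses the work, who receives an escalation, what proof is required before work continues, and what record is kept.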

Those answers should be available before testing starts, not developed during the first live escalation. If the team is waiting until a problem appears to decide who owns it, they are already behind.

It helps to think about live decision controls as protection against three kinds of drift.

Execution drift happens when the team keeps working while waiting for clarification, on the assumption that the issue is minor.

Authority drift happens when someone without recorded authority gives practical instructions the team feels pressure to follow.

Record drift happens when a live decision is made but the file is not updated in a way that later reviewers can verify.

The ROE should prevent all three.

Take the first row in the table: approval authority is unclear before kickoff. The action is to pause start. That matters because kickoff pressure often creates a temptation to let uncertainty ride for a few hours. But unclear authority does not improve once the team is live. It gets worse, because every next decision now depends on a missing answer from the first one.

The second row shows the same logic with scope naming. If an asset or environment is not clearly named, decline and escalate for clarification. The word "decline" is important. The team should not merely flag the ambiguity while continuing to work around it. They should actively refuse to expand into an unclear item until approval is explicit. That refusal protects both sides. It stops unauthorized testing, and it stops the client from later discovering that "we thought it was included" turned into actual work without a signed record.

The client dependency row is equally important because failed assumptions often create silent scope distortion. If access or contact readiness fails, teams can be tempted to use the available time on nearby work or to compress later steps to stay on schedule. The right move is the one in the table: pause or reschedule per recorded assumptions, and retain a timestamped note plus client notification. That gives you a clean record of why the original plan changed and whether the impact was timing, coverage, or both.

The amendment rule should stay uncompromising. Review the request, yes. Discuss it, yes. Prepare the change language, yes. But do not perform expanded work until the amendment is signed. This line is what keeps helpful responsiveness from turning into uncontrolled delivery. Once the work happens first and the paperwork follows later, the organization has effectively trained itself to treat signature as an afterthought.

The row on procurement-bound requests highlights a different but related issue: not every request that sounds operational is purely operational. If the request appears to exceed existing buying approval, escalate instead of assuming coverage. The delivery team should not be put in the position of interpreting commercial authority from context. That is exactly the kind of question the control system should route away from the test team and toward the right reviewer.

The phrase "while you are in there" deserves special caution because it captures how scope creep often sounds in real life. It is casual, efficient, and framed as a small favor. The danger is that it bypasses the question your file is supposed to answer: was this approved for this engagement? The right response is not to debate whether the ask is reasonable. The right response is to route it through the same change control as any other expansion.

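To make these controls work, record the sequence of the decision, not just the outcome. A usable file should show what triggered the decision, what proof was required, what action was taken next, and what record was retained.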

That structure mirrors the table and gives later reviewers confidence that the team did not merely arrive at a defensible result by luck. They followed a repeatable process.

Another useful discipline is to align the ROE with how the delivery team actually communicates. If the team will escalate through a defined internal channel and then await a written client answer, the ROE should reflect that path clearly enough that nobody invents a shortcut under pressure. If the client has a live decision contact, the team should know when that contact can clarify an issue and when the matter must be routed into amendment instead.

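The underlying idea is simple: not every question requires a signed change, but every question requires a known path. The team should be able to distinguish between a clarification the live decision contact can answer in writing and a change that must be routed into amendment.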

If those categories are blurred, the team will either over-escalate ordinary clarifications or under-control actual scope changes. Both create friction. Clear live controls prevent that by giving each type of issue a defined route.


At this stage, the main job is consistency. Keep one traceable chain from approval through closeout.

| Record | Role in the file | Must stay aligned with |
|---|---|---|
| Authorization packet | Says what could start | Approved targets, exclusions, and approval |
| ROE | Says how live questions would be handled | Pauses, escalation path, and live controls |
| Change records | Say what moved | Signed amendments and version chain |
| Billing notes | Say what observable event supports the commercial trigger | Billing trigger and delivery record |
| Report | Says what was actually delivered and what affected delivery | Approved scope, exclusions, pauses, and signed changes |

The same target names, exclusions, assumption failures, pauses, and signed amendments should match across the authorization packet, ROE, change records, billing trigger notes, and final report language. If those records drift apart, you lose the ability to show what was approved, what changed, and what actually happened.

Closeout rule: if something was excluded, delayed by dependency failure, or changed by amendment, say that plainly in the report using the same wording as the signed records.

This is where document quality becomes operational credibility. A team can manage a live issue correctly and still create a weak engagement record if the downstream documents tell the story differently. The goal is not to make every line identical. The goal is to preserve a traceable chain from the original approval to the final report.

Think of the engagement file as one narrative told through several records. The authorization packet says what could start. The ROE says how live questions would be handled. The change records say what moved. Billing notes say what observable event supports the commercial trigger. The report says what was actually delivered and what affected delivery. Those records do not have to repeat each other word for word, but they do need to align.

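Misalignment usually appears in small ways first: a target named differently in the report than in the packet, an exclusion described as if it were merely deferred, a pause missing from the report, or a billing note that points to an event the delivery record cannot verify.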

Each of those gaps weakens your ability to show a clean engagement path. Later, when someone asks what was approved, what changed, or why coverage differed from the initial plan, you do not want the answer to depend on stitching together competing versions of the truth.

The easiest way to avoid that problem is to reconcile records deliberately before closeout. Review them in sequence and compare the operational anchors: target names, exclusions, assumption failures, pauses, and signed amendments.

If any item is described in different terms across those records, decide which wording matches the signed file and normalize the rest. Consistency is especially important for exclusions and changes. Those are the exact points most likely to be questioned later.

Closeout language should be tied to the signed record. If something was excluded, delayed by dependency failure, or changed by amendment, the report should say that plainly using the same wording as the signed records. That discipline does two things. First, it protects the team from overstating what was performed. Second, it helps the client understand the engagement as it actually occurred, not as originally imagined before constraints appeared.

The billing connection matters for similar reasons. Billing should map to a defined, observable engagement event, and the note supporting it should align with the rest of the file. If billing depends on a description of delivery that the report or change log cannot support, you have created an avoidable dispute point. The fix is not to rewrite history at closeout. The fix is to make sure the billing trigger, change record, and report all describe the same engagement reality.

This step is also where version discipline pays off. If amendments were signed, the file should make it easy to see which state governed at which point in time. You do not want later reviewers to wonder whether the report follows the original scope or the amended one. A clear version chain prevents that confusion and makes closeout much easier to defend.

One practical review method is to compare the key nouns and events across documents. Are the target names the same? Are exclusions described the same way? Does the report mention the same assumption failure that triggered the pause record? Does the billing note point to an event the delivery record can actually verify? These are simple checks, but they catch most drift before signoff.
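The noun-and-event comparison can be partly mechanized if each record's key names are extracted into a set. The sketch below assumes that extraction has already been done by hand; `find_drift` and the record labels are hypothetical, and exact string comparison is a deliberate choice so that inconsistent naming shows up as drift.

```python
def find_drift(records: dict[str, set[str]]) -> dict[str, set[str]]:
    """For each record, return the names it lacks relative to all records combined.

    Records that contain every name are omitted from the result.
    """
    all_names: set[str] = set().union(*records.values())
    drift = {}
    for label, names in records.items():
        missing = all_names - names
        if missing:
            drift[label] = missing
    return drift


# Illustrative target names as they appear in each record.
targets = {
    "authorization packet": {"app.example.test", "api.example.test"},
    "ROE": {"app.example.test", "api.example.test"},
    "report": {"app.example.test", "API gateway"},
}

for label, missing in find_drift(targets).items():
    print(f"{label} is missing: {sorted(missing)}")
```

Here the report's "API gateway" does not match "api.example.test", so all three records are flagged. A person still has to decide which wording matches the signed file; the check only shows where the wording diverges.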

Do not underestimate the importance of plain reporting language here. When something changed, say so directly. When coverage was limited by a failed dependency, say so directly. When an item remained excluded, say so directly. Trying to smooth over those facts usually creates more confusion than candor does. Clear closeout language is not a confession of weakness. It is proof that the team stayed inside the approved controls.


Before signoff, run your draft through the SOW generator to verify that scope boundaries, change-control triggers, and evidence fields are clear enough for live decisions.

Before you sign or schedule, use this as the hard gate. Every control below should be a clear go. If any row is a hold, stop and fix the file first.

The checklist works best when you use it literally. Do not treat it as a soft review aid or a reminder list someone can satisfy mentally. The point is to convert broad confidence into specific proof. Every row should force the file to answer a narrow, verifiable question. If it cannot, the engagement is not ready.

This is where you confirm the file can stand on its own. Each check should tie to one file-level proof point, not a chain of assumptions.

| Check | Go | Hold | Required evidence | Failure action |
|---|---|---|---|---|
| Authorization | You can confirm who approved this exact engagement and who can make live decisions | Approval authority is unclear, outdated, or split across threads | Approval record | Pause kickoff |
| Scope and cross-border readiness | Named targets are explicit, and jurisdiction, data-handling, and client-side approval conditions are documented before start | Targets are vague, or jurisdiction/handling approvals are assumed instead of documented | Scoped asset list | Return for redline |
| Rules of engagement | Operating instructions are signed and usable by the delivery team | The team would need to improvise pause, escalation, or test behavior decisions | Signed ROE | Return for redline |
| Change control | Any scope movement can be checked against a current signed change record | Expanded work would rely on informal approval | Amendment log | Route through amendment |
| Billing | Billing timing maps to a defined, observable engagement event | Billing depends on an event that cannot be verified later | Billing trigger record | Defer billing event |
| Reporting | Draft reporting structure maps to approved scope, exclusions, pauses, and signed changes | You cannot show how final reporting will mirror the signed record | Draft report mapping | Return for redline |

Do not accept unresolved links as evidence. One provided source currently shows "Page Not Found," and it also warns that older links or bookmarks may no longer point to the same place. If any approval, policy, or client record is link-based, reopen it before signoff. If the link fails, relocate it through homepage, search, or department paths, then update the engagement file.

The phrase "verify records, not interpretations" is the discipline that keeps this checklist honest. A reviewer should be able to point to one file-level proof point for each row. If passing a check requires a story about what several scattered records probably mean together, the row is not green yet.

Take the Authorization row. The standard is not that someone on the team feels comfortable. The standard is that you can confirm who approved this exact engagement and who can make live decisions. If approval authority is unclear, outdated, or split across threads, the failure action is to pause kickoff. That may feel strict, but it is far cheaper than discovering mid-test that the approval chain does not match the work underway.

The Scope and cross-border readiness row deserves equally careful reading. It is not enough that targets are mostly understood. Named targets must be explicit, and jurisdiction, data-handling, and client-side approval conditions must be documented before start. The operative contrast in the table is between documented and assumed. If those conditions are assumed instead of documented, return for redline. The reason is simple: assumptions about handling and approval conditions often stay invisible until a delivery or reporting issue makes them visible at the worst time.

For the Rules of engagement row, ask whether the operating instructions are not only signed but usable by the delivery team. A signed document that still leaves the team improvising pause, escalation, or test behavior decisions is not really a control. The proof point is the signed ROE, but the practical standard is whether the team could apply it under time pressure without inventing steps.

The Change control row is one of the clearest. Any scope movement should be checkable against a current signed change record. If expanded work would rely on informal approval, route it through amendment. This row often catches issues that feel harmless because everyone agrees in principle. Agreement in principle is not the same thing as a current signed change record, and this checklist is designed to preserve that distinction.

The Billing row protects you against a different kind of weak file. Billing timing should map to a defined, observable engagement event. If the billing event cannot be verified later, defer it. That protects the commercial record from becoming dependent on memory or on informal descriptions of what "counted" as completion.

The Reporting row closes the loop. Before signoff, you should be able to show how the final reporting structure will mirror approved scope, exclusions, pauses, and signed changes. If you cannot map the report to the signed record now, you are likely to feel that mismatch more sharply at closeout when delivery details are fresh, pressure is higher, and drafting shortcuts are more tempting.

A strong way to use this checklist is to insist that each row be reviewable by someone who was not part of the original drafting. If that reviewer can independently confirm the proof point, the file is probably in good shape. If they keep asking what the authors intended, the file still relies too much on interpretation.

The warning on unresolved links is more than a housekeeping note. Link-based evidence is fragile by nature. If an approval, policy, or client record is accessible only through a path that now fails, you should not accept a claim that it existed at the time. Reopen it before signoff. If the link fails, relocate it through homepage, search, or department paths, then update the engagement file. The key principle is that the file should contain evidence that can still be reached and verified when needed.

This matters because broken links create a false sense of completeness. The file appears to contain support, but in practice the support is gone or inaccessible. That is exactly the kind of hidden weakness a pre-signoff review is supposed to catch. A link that no longer resolves is functionally similar to a missing attachment until you recover and update it.
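A pre-signoff pass over link-based evidence can be partly automated. The following is a minimal sketch using only the Python standard library; the function names and the `evidence` mapping are hypothetical, and it assumes that a non-error HTTP response to a `HEAD` request is good enough as a first signal. Some servers reject `HEAD`, and a resolving link still needs a human to confirm it shows the right record.

```python
import urllib.request


def link_resolves(url, timeout=10.0):
    """Return True if the URL answers a HEAD request with a non-error status."""
    try:
        request = urllib.request.Request(url, method="HEAD")
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return 200 <= response.status < 400
    except (OSError, ValueError):
        # OSError covers URLError, HTTPError, and timeouts;
        # ValueError covers malformed URLs.
        return False


def unresolved_evidence(evidence, check=link_resolves):
    """Return the labels of evidence items whose link fails the check."""
    return [label for label, url in evidence.items() if not check(url)]


# Usage (performs real network requests):
# unresolved_evidence({"client approval": "https://example.com/approval"})
```

Passing the checker in as `check` keeps the function testable offline and lets you swap in a stricter check later.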

One final discipline here: do not let a row pass because another row feels strong. Good scope does not compensate for weak approval. A strong ROE does not compensate for an unverifiable billing trigger. The checklist is a hard gate precisely because each control protects a different failure mode.
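The "no row compensates for another" rule amounts to a logical AND across rows rather than a score. A minimal sketch, with a hypothetical `ChecklistRow` type and example rows taken from the row names discussed above:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChecklistRow:
    name: str
    proof_verified: bool  # an independent reviewer confirmed the proof point


def failing_rows(rows):
    """Return the names of rows whose proof point is not verified."""
    return [row.name for row in rows if not row.proof_verified]


def gate_is_open(rows):
    """Hard gate: every row must pass on its own; an empty checklist fails."""
    return len(rows) > 0 and not failing_rows(rows)


# Hypothetical review state: three strong rows do not offset one weak row.
rows = [
    ChecklistRow("Rules of engagement", True),
    ChecklistRow("Change control", True),
    ChecklistRow("Billing", False),
    ChecklistRow("Reporting", True),
]
print(gate_is_open(rows), failing_rows(rows))
```

There is deliberately no weighting or percentage here: a single unverified row keeps the gate closed.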

Use tools at the end, not as a substitute for control. Once the checklist is fully green, use the SOW generator and freelance contract generator as final validation aids. They can stress-test wording, but they do not replace signed controls or a traceable engagement record.

That order matters. If you bring tools in too early, people can mistake polished output for completed control work. A generator can help surface wording gaps, awkward phrasing, or internal inconsistencies in how the engagement is described. It cannot create approval where none exists, decide authority that was never recorded, or convert an informal client ask into a signed amendment.

The right use case is after the hard gate is already met. At that point, the file should already contain the proof points discussed above. Then a tool becomes useful as a validation aid: it can help you inspect whether the written draft still mirrors the approved record cleanly and whether any section drifts away from the signed controls.

Used this way, tools are especially helpful for a final pass on consistency. You can compare the generated phrasing against your signed scope, exclusions, assumptions, and change path, and ask whether each section still says what the signed record says.

Those are worthwhile checks. But they only matter because the underlying controls are already in place.

The same caution applies to the freelance contract generator. It may help refine phrasing around engagement mechanics, but it does not replace the need for a signed ROE, a current approval record, or a traceable amendment path. Treat these tools as quality control on wording, not as authority.

A helpful mental model is this: tools can improve the expression of a decision, but they cannot supply the decision itself. They can make a draft clearer. They cannot tell you whether a target was actually approved, whether a dependency failure was properly recorded, or whether billing maps to a verifiable event. Those are file and process questions, not drafting questions.

So use the tools late and deliberately. First get the file fully green. Then use them to stress-test language, tighten structure, and catch drift before signoff. If the output from a tool conflicts with the signed record, the signed record wins. Update the language to reflect the approved controls, not the other way around.

That keeps the SOW in its proper role: not just a good-looking document, but a dependable mandate for the exact work your team is authorized to perform.

We covered this in detail in How to Structure an SOW for a Retainer-Based Consulting Engagement.

Once your checklist is complete, convert the approved scope into client-ready terms with the freelance contract generator.

Set numeric anchors before kickoff so the team can evaluate requests quickly: a 5-business-day pre-start review, a 24-hour escalation response target, and a written 48-hour amendment turnaround target for urgent scope decisions.
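These anchors are easier to apply when they are turned into concrete dates. The sketch below is one possible reading, not a prescribed method: it assumes the 5-business-day review opens five weekdays before kickoff, ignores public holidays, and uses hypothetical function names and example dates.

```python
from datetime import date, datetime, timedelta


def subtract_business_days(day, count):
    """Step back `count` weekdays (Monday to Friday); holidays are ignored."""
    current = day
    while count > 0:
        current -= timedelta(days=1)
        if current.weekday() < 5:  # 0-4 are Monday to Friday
            count -= 1
    return current


def response_due(raised_at, hours):
    """Return the target time for a response, `hours` after the request."""
    return raised_at + timedelta(hours=hours)


kickoff = date(2025, 3, 17)              # hypothetical kickoff (a Monday)
raised = datetime(2025, 3, 18, 9, 30)    # hypothetical request timestamp

review_opens = subtract_business_days(kickoff, 5)
escalation_due = response_due(raised, 24)
amendment_due = response_due(raised, 48)
print(review_opens, escalation_due, amendment_due)
```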

Use a 3-gate sequence: Gate 1 confirms signed authority, Gate 2 confirms technical readiness, and Gate 3 confirms reporting and billing triggers. If any gate is red, pause scheduling and run a targeted revision through the SOW generator before restart.

Define practical guardrails in writing, such as changes above 15% effort, more than 2 new environments, or a USD $5,000 equivalent commercial shift requiring amendment review. The exact numbers can vary, but explicit thresholds prevent informal expansion.
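Written thresholds are simple enough to express as a check. This is a sketch under stated assumptions: the `ChangeRequest` fields and function name are hypothetical, the default limits are the example figures above, and treating a shift of exactly USD 5,000 as triggering review is a choice your own SOW should make explicit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChangeRequest:
    effort_change_pct: float     # change in effort relative to the signed baseline
    new_environments: int        # environments added by the request
    commercial_shift_usd: float  # commercial impact in USD equivalent


def amendment_review_reasons(change, effort_limit_pct=15.0,
                             environment_limit=2, commercial_limit_usd=5000.0):
    """Return which guardrails a change request crosses (empty list = none)."""
    reasons = []
    if abs(change.effort_change_pct) > effort_limit_pct:
        reasons.append("effort")
    if change.new_environments > environment_limit:
        reasons.append("environments")
    # Assumption: a shift equal to the limit also triggers review.
    if abs(change.commercial_shift_usd) >= commercial_limit_usd:
        reasons.append("commercial")
    return reasons


print(amendment_review_reasons(ChangeRequest(10.0, 3, 1200.0)))
```

An empty result only means no numeric guardrail was crossed. Any change to an approved baseline still needs a signed change record.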

Before kickoff, require 100% completion of the approval, scope, ROE, and escalation fields. If any other section is below 95% complete, route a short corrective pass and align language using the retainer SOW guide and mobile SOW guide.

Use these FAQ answers as operating checks during drafting and live delivery handoffs.

At minimum, keep a signed engagement approval, explicit target and exclusion list, signed ROE, and a named live decision authority. If any item is missing, hold kickoff and route fixes through your SOW workflow.

Treat a newly discovered asset as out of scope until it is explicitly added in writing. Record the discovery, pause expansion, and escalate for written confirmation rather than relying on verbal assurance.

A clarification becomes an amendment when any approved baseline shifts: targets, methods, timing, environments, or commercial terms. If the change affects delivery boundaries, do not proceed until the amendment is signed.
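The distinction can be sketched as a comparison against the signed baseline. The field names and example values below are hypothetical, and the check assumes each baseline item is stored as a directly comparable value:

```python
BASELINE_FIELDS = ("targets", "methods", "timing", "environments", "commercial_terms")


def changed_baseline_fields(signed, proposed):
    """Return the baseline fields where the proposal differs from the signed record."""
    return [f for f in BASELINE_FIELDS if signed.get(f) != proposed.get(f)]


def needs_amendment(signed, proposed):
    """Any shift in an approved baseline field means amendment, not clarification."""
    return bool(changed_baseline_fields(signed, proposed))


# Hypothetical signed baseline and a client request to add production.
signed = {
    "targets": ["app.example.com"],
    "methods": ["external scan"],
    "timing": "2025-03-17 to 2025-03-21",
    "environments": ["staging"],
    "commercial_terms": "fixed fee",
}
proposed = dict(signed, environments=["staging", "production"])
print(needs_amendment(signed, proposed), changed_baseline_fields(signed, proposed))
```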

For billing, use one observable trigger tied to a documented delivery event, then mirror that same trigger in the billing note, change log, and closeout report. This avoids disputes when pauses or amendments occur.
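A rough consistency check for the mirrored trigger is a text search across the three records. This is only a sketch: the function name, record names, and sample wording are hypothetical, and a substring match will miss paraphrases, so it supplements a manual read rather than replacing it.

```python
def records_missing_trigger(trigger, records):
    """Return the names of records that do not contain the trigger wording."""
    needle = trigger.casefold()
    return [name for name, text in records.items() if needle not in text.casefold()]


# Hypothetical trigger wording and record excerpts.
trigger = "delivery of the final report"
records = {
    "billing note": "Final invoice issued on delivery of the final report.",
    "change log": "Amendment 1: billing remains tied to Delivery of the Final Report.",
    "closeout report": "Invoice raised once testing was finished.",
}
print(records_missing_trigger(trigger, records))
```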

Update the delivery log each time a dependency fails, a scope question appears, or a client decision changes the run plan. For active engagements, a practical cadence is a daily update during execution plus an immediate update on any major event.

Use terminology from the NIST Cybersecurity Framework and CISA service guidance for consistency, but keep the signed SOW and ROE as the binding source. For drafting consistency across teams, this also pairs well with the SaaS SOW guide.

Oliver covers corporate structure decisions for independents—liability, taxes (at a high level), and how to stay compliant as you scale.

Priya specializes in international contract law for independent contractors. She ensures that the legal advice provided is accurate, actionable, and up-to-date with current regulations.

Educational content only. Not legal, tax, or financial advice.
