To structure a limitation of liability clause for OpenAI API client work, align your client contract with the provider terms you actually use, state human review and client approval duties, and avoid promises your stack, process, or insurance cannot support. OpenAI's terms limit OpenAI's obligations to you, not your client's claims against you. Your clause works best when it matches verified provider terms, documented review steps, and enforceable technical controls.

When you deliver AI-assisted work to a client, OpenAI's terms and your client promises do not line up automatically. In a dispute, OpenAI's obligations stop at its own contract terms, while your client may still pursue you under your SOW, proposal, or MSA.

That mismatch is the liability gap. It is not about unusual terms. It is about scope. OpenAI's terms primarily define OpenAI's obligations, give you limited protection in specific areas, and do not rewrite what you promised your client.

For API and client delivery work, start with the business stack, not the consumer terms. The OpenAI Services Agreement (effective January 1, 2026) and Service Terms (updated January 9, 2026) are the key documents. The Service Terms say they control if there is a conflict.

| Record | Article detail | What to save |

|---|---|---|

| OpenAI Services Agreement | Effective January 1, 2026 | Save the current version. |

| Service Terms | Updated January 9, 2026; controls if there is a conflict | Save the current version. |

| Order Form or account plan record | If applicable | Keep it in your file with dated copies or screenshots. |

Do not assume the consumer $100 figure applies to API or business use. Before you draft anything client-facing, save the current OpenAI Services Agreement, the current Service Terms, and your Order Form or account plan record, if applicable. Keep dated copies or screenshots in your file so you can show which version you relied on.

Once you know which terms apply, read them like a risk map. Focus on liability limits, warranty disclaimers, and user-responsibility language. Until you verify the live contract text, mark the cap wording as unresolved and confirm it against the Services Agreement and any Order Form before use.

OpenAI's terms also put output evaluation on the user and warn against treating output as a sole factual source. In practice, you remain the final reviewer for anything you send under your name. Beta Services raise the risk further because they are offered "as-is" and excluded from indemnification obligations.

| Provider term | What it protects | What still sits with you |

|---|---|---|

| Services Agreement liability limits | Limits what OpenAI may owe under its own contract (confirm live cap text). | Potential client exposure above that amount, unless your contract limits it. |

| Warranty disclaimers and Beta "as-is" terms | Limits OpenAI warranty commitments, especially for beta features. | Your delivery quality, validation process, and promises about suitability or performance. |

| Output review responsibility | Confirms the user must evaluate output before reliance or sharing. | Errors in delivered work and avoidable misuse. |

| API IP indemnity | May apply to certain third-party IP claims tied to output use or distribution. | Non-IP claims and excluded IP scenarios. |

The API indemnity can help, but it is narrow. It is framed around certain third-party IP claims tied to output use or distribution, not all output-related harm. The exclusions matter. They include cases where infringement risk was known or should have been known, or where output was modified, transformed, or combined with non-OpenAI products or services. API use also has to follow applicable documentation.

Keep ownership and indemnity separate. OpenAI saying it will not claim copyright over API-generated content does not make the broader legal risk disappear.

The practical takeaway is simple: provider terms may give you a limited backstop, but they do not automatically cover your client-facing exposure. That is why Layer 1 matters first. Your client contract should match provider reality instead of promising more than your stack can support. Related: A Deep Dive into the 'Limitation of Liability' Clause for Freelance Software Developers.

Before you sign, your contract should do one clear job: align what you promise with what your provider terms, review process, and insurance can actually support.

Do not draft from memory or marketing copy. Pull the live terms and account records that govern your use, save dated copies, and draft from that file.

In the clause itself, state plainly that you use third-party AI tools in delivery, that you perform human review before delivery, and that the client is responsible for final approval and use in its business context. Track these unresolved items until you verify the live provider text:

For confidentiality terms, avoid overpromising. A stricter posture can create tradeoffs across cost, usability, auditability, and security, and zero-retention setups can reduce continuity in repeat review work. Promise only protections you can verify for your actual account.

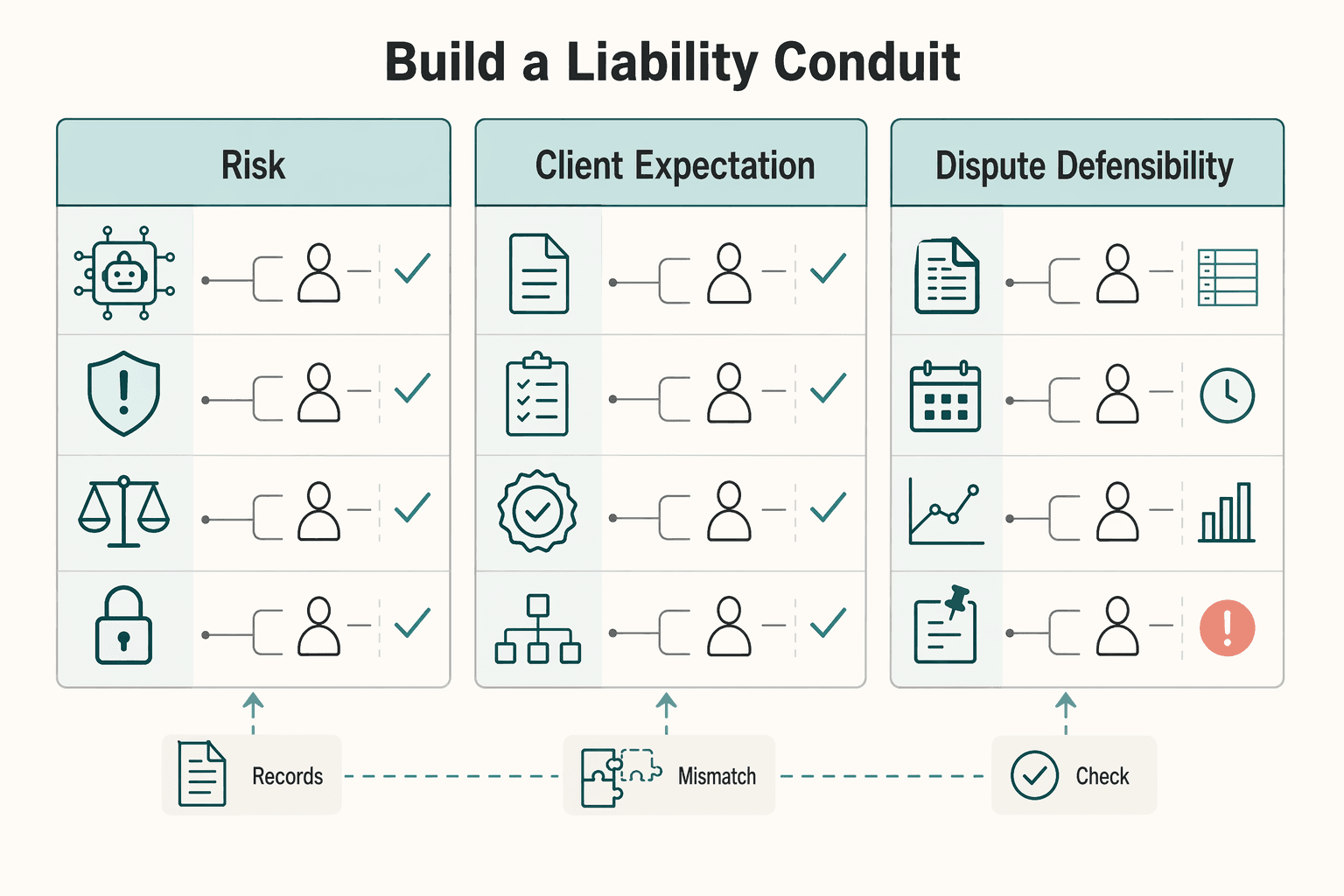

A generic cap is meant to limit the amount at issue, but it can still leave AI-specific responsibility blurry. What you want instead is a liability conduit that shows who is responsible for what before a dispute starts.

| Decision point | Generic limitation clause | AI-linked limitation clause |

|---|---|---|

| Risk transfer | Usually caps liability generally, without mapping AI-specific failure paths. | Can connect AI-related exposure to any verified provider limits, your review role, and client-side decisions. |

| Client expectation clarity | Client may assume AI-assisted output is warranted like fully manual work. | Clarifies where AI is used, what you review, and what remains client approval or use risk. |

| Dispute defensibility | Can leave a weaker record of warning, allocation, and process. | Can create a stronger record when boundaries, review steps, and approvals are explicit. |

Use this drafting checklist, and map key actions to named decision-makers, including prompting, review, and approval. That accountability trail helps later if you need to defend how the work was handled.

The contract sets the boundary, but delivery is where misunderstandings happen. Add a short deliverable notice that the work was AI-assisted, reviewed, and still requires client-side validation before publication or implementation. That keeps expectations aligned when the output gets forwarded internally or externally.

Do not assume professional liability or E&O coverage automatically applies to AI-assisted output. Review the policy wording first. Then ask your broker or carrier written questions about AI-assisted output, exclusions, endorsements, and cross-border scope. Confirm the endorsement name and effective date if one is required.

| Check | Article instruction |

|---|---|

| Policy wording | Review it first. |

| AI-assisted output | Ask your broker or carrier a written question about AI-assisted output. |

| Exclusions | Ask for written confirmation about exclusions. |

| Endorsements | Ask about endorsements in writing, and confirm the endorsement name and effective date if one is required. |

| Cross-border scope | Ask for written confirmation about cross-border scope. |

Keep the policy, endorsements, and written confirmations with your contract file. If you cannot get clear written confirmation, narrow the scope before you sign.

You might also find this useful: A Guide to Liability Clauses for Freelance AI/ML Engineers.

Before you finalize your liability clause, build a first-pass draft you can redline with counsel using the Freelance Contract Generator.

Once the contract sets the boundary, your process should show you stayed inside it. Use the same approach on every project: mandatory human review, zero-trust input handling, and an audit trail you can produce on demand.

Review should be mandatory for any output a client could rely on, publish, or deploy. OpenAI's safety guidance recommends human review before outputs are used in practice, with extra rigor for high-stakes use and code generation.

Assign one named signer per deliverable, even if that signer is you. "Ready to ship" should mean:

Avoid vague notes like "lightly edited." They are hard to defend later.

A simple classification rule does most of the work here. Align it with GDPR data minimization (Art. 5(1)(c)): send only what is necessary for the task. Use three classes:

At minimum, avoid sending direct identifiers when possible. Hash identity fields such as username or email where feasible. If context is still needed, consider redacting first or using synthetic test data. Do not treat "not used for training by default" as a reason to share sensitive data freely.

| Risk point | Required safeguard | Evidence to retain |

|---|---|---|

| Output could drive real decisions | Named reviewer signs off before delivery | Reviewer name, date, decision note |

| High-stakes or code output | Enhanced review against source material | Verification notes, source record used in review |

| Identifying or restricted input | Minimize, redact, hash, or replace with synthetic data | Data classification note, redacted or synthetic version |

| Potentially unsafe output | Run moderation where relevant and handle exceptions | Moderation result, exception log |

| Retention representations to client | Verify current provider terms and account setup before promising anything | Saved policy snapshot, account or config record |

If your contract says you review, minimize, or control retention, your file should prove it. Capture four things in each project file: prompt or context class, review outcome, exception handling, and retention practice.

For retention language, verify it before it goes into client-facing documents. OpenAI states abuse-monitoring logs may include prompts, responses, and metadata, typically retained up to 30 days unless legal retention applies. Zero Data Retention is not automatic and requires prior approval. As of current public statements, OpenAI also notes limited historical April-September 2025 data is being securely stored for legal obligations.

Your contract and review process define the boundary, but your application has to enforce it on every request before anything reaches a client or end user. If your limitation-of-liability clause for OpenAI API work narrows legal exposure, these controls make that position credible. A 2025 legal analysis argues the Services Agreement does not automatically shield downstream providers or modifiers.

Centralize control first. Route every model call through an AI gateway, or an equivalent single control layer. That way, authentication, strict input validation, token-based rate limiting, request and response filtering, cost controls, and audit logging are enforced in one place.

On input, validate and redact sensitive data before prompt assembly. Remove direct identifiers when they are not needed, separate trusted instructions from user-supplied text, and treat prompt injection as an active attack path. Because prompt injection has no single fix, use layered defenses: context isolation, least-privilege model or tool access, and output validation before display or execution. If you allow tools or agents, assume compromise and apply execution isolation, network and filesystem restrictions, and human approval for sensitive actions.

Before release, require a clear block-or-escalate decision when a request includes restricted data, attempts instruction override, or asks for a sensitive action outside allowed scope.

RAG helps only when the underlying sources are trusted and validated. Treat your knowledge base like production content. Define who can add or edit sources, validate RAG data sources, and run checks so stale documents do not become default truth. Where you control model or dependency versions, pin versions and verify checksums to reduce silent behavior drift.

That same discipline should carry through to the answer itself. Validate outputs against retrieved material before any answer is shown. If retrieval support is weak, return a limited answer that states the gap, or escalate to human review.

| Control | Risk reduced | Residual risk | Who owns the decision |

|---|---|---|---|

| Input validation and redaction | PII leakage and oversharing to third-party models | Misclassification and missed sensitive fields | You |

| Prompt-injection defenses and tool restrictions | Instruction hijacking and unsafe actions | No single control eliminates new attack variants | You |

| Output filtering and block-or-escalate rules | Harmful, unsafe, or policy-breaking responses | False negatives and context-specific harm | You |

| RAG source validation plus model/dependency pinning | Unsupported or weakly grounded answers | Low-quality or stale sources can still pass weak checks | You; client also owns source quality where they supply content |

| Logging, alerts, and traceability | Slow detection and weak incident evidence | Logging does not prevent the underlying error | You |

Logs matter most when something goes wrong, not when a demo goes right. For each material interaction, keep enough metadata to reconstruct decisions, such as request ID, timestamp, model version, retrieval source IDs, moderation/filter outcome, and reviewer or approver when required. Minimize or hash identifiers where feasible.

| Metadata | Article instruction |

|---|---|

| Request ID | Keep it for each material interaction. |

| Timestamp | Keep it for each material interaction. |

| Model version | Keep it for each material interaction. |

| Retrieval source IDs | Keep it for each material interaction. |

| Moderation or filter outcome | Keep it for each material interaction. |

| Reviewer or approver | Keep it when required. |

Set alerts for misuse and failure patterns such as repeated blocked prompts, sudden token spikes, unusual tool-call patterns, or bursts of API errors. Then define a written response flow. Contain the affected feature when needed, preserve relevant logs, review the prompt plus retrieved context, notify the client when your contract requires it, and document remediation. In your policy or project file, keep the current retention window pending official verification before use.

For OpenAI API work, a limitation-of-liability clause helps only when your contract terms, review records, and technical controls work together. On its own, the clause is weak if your process or safeguards cannot be shown.

Align the contract first. This can reduce exposure risk by defining what you are responsible for and what you are not. Keep your limitation clause, AI-use scope, approval language, and exclusions matched to the actual service you deliver. Treat provider cap wording and retention-window language as unresolved until the live terms are confirmed. Do not assume provider terms automatically pass through to your client contract.

Document the review. This gives you evidence that delivery decisions were controlled, not ad hoc. Keep a clear approval trail showing who reviewed output, what was checked, what changed, and who approved release, especially for higher-risk content. Documented review is a practical risk control.

Verify the safeguards. Check that moderation or filtering and escalation controls are active in the shipped path before release. Alleged cases where high-risk content was flagged but no intervention followed are a reminder that detection alone is not enough.

Apply this now. Before your next delivery, align contract terms to the service, verify live-term language, confirm review steps and keep approval records, and test safeguards in production-like conditions. If one layer is missing, your liability position is weaker even when the other two are strong.

For a step-by-step walkthrough, see How to Write a Limitation of Liability Clause for a Freelance Contract. After you set your contract, process, and technical controls, map them into an operational workflow with the Gruv Docs.

Start with the OpenAI Services Agreement, because it is the business or developer contract baseline for API use. As of this update, that page shows Effective January 1, 2026 and Updated December 1, 2025, and the Service Terms page shows Updated January 9, 2026. Then check your signed Order Form and any amendments. Your client contract still needs its own liability and AI-use terms.

Do not assume the individual Terms of Use liability formula applies to API client work. Use the Services Agreement plus your Order Form, and confirm the live cap structure before putting provider-cap wording into client contracts. That cap limits OpenAI's exposure to you, not your client's claims against you. You limit client-facing exposure only if your own contract says so.

No. The Service Terms include API indemnity for certain third-party IP claims tied to your use or distribution of output, but it is not blanket protection. Review the exclusions carefully, including cases where infringement risk was known or should have been known, or where output was modified, transformed, or combined with non-OpenAI products or services. Beta Services are as-is and excluded from indemnification. Your contract still needs to allocate the claim types and responsibilities that remain with you.

API data is not used to train or improve models by default. That does not mean zero storage in all cases, so verify retention language against current terms before using it in client-facing documents. Zero Data Retention and Modified Abuse Monitoring require prior approval and added requirements. Even with stricter settings, verify endpoint behavior because some endpoints may still store application state.

Treat yourself as responsible for the final output you deliver. OpenAI states output may be inaccurate and should not be your sole source of truth or a substitute for professional advice. Make human review and approval mandatory before delivery. Keep records of review and escalation decisions.

Start with the three layers in order: contract limits first, review and data-handling discipline second, and technical controls third. In the contract layer, leave liability-cap wording unresolved until provider terms are confirmed. In the process layer, require human sign-off for high-stakes output. In the technical layer, route calls through controlled paths, respect rate limits, and flag Beta use before release.

An international business lawyer by trade, Elena breaks down the complexities of freelance contracts, corporate structures, and international liability. Her goal is to empower freelancers with the legal knowledge to operate confidently.

Priya is an attorney specializing in international contract law for independent contractors. She ensures that the legal advice provided is accurate, actionable, and up-to-date with current regulations.

Includes 3 external sources outside the trusted-domain allowlist.

Educational content only. Not legal, tax, or financial advice.

Choose your track before you collect documents. That first decision determines what your file needs to prove and which label should appear everywhere: `Freiberufler` for liberal-profession services, or `Selbständiger/Gewerbetreibender` for business and trade activity.

Start by setting the structure, not just a number. Liability terms allocate risk, so your first move is to define how risk is organized before you negotiate the cap amount. Use these terms consistently from round one:

Start with one written freelance contract, then negotiate liability-related terms from that same text. That keeps payment, scope, and risk terms tied to the same deal. If you skip that step, both sides are more exposed to misunderstandings and avoidable disputes.