Start by treating white-label pricing decisions as a risk-and-margin exercise, not a quote comparison. Pick the model that fits your delivery pattern, then calculate ROI using revenue impact, total delivery cost, and reclaimed focus value. Before approval, verify process maturity, define scope and acceptance in a SOW, and lock accountability in an SLA with clear escalation. Use a short pilot to confirm oversight load and revision behavior under real conditions.

Choosing a white-label partner is a control decision first and a buying decision second. If that partner works behind your brand, delivery reliability, client trust, and margin stability can matter more than the cheapest line item.

Frame the choice this way: are you buying the lowest acceptable output, or choosing a partner that helps reduce operational risk?

| Factor | Low-price option | Operational partner |
|---|---|---|
| Oversight load | Can be higher when process is unclear | Can be lower when process is clear |
| Quality consistency | Can be more variable | Can be more consistent |
| Client-facing risk | Higher if delivery slips | Lower if process is stable |
| Profit predictability | Can be less predictable | Can be more predictable |

Step 1. Screen process maturity before you discuss rates. In a white-label delivery model, your agency stays client-facing while the partner executes behind the scenes. That means you need evidence of a structured delivery process, not just a polished portfolio. They should be able to explain, in order, how they capture requirements, define scope, set timelines, collect brand inputs, complete the work, and return it for your review and QA before branded delivery. If they cannot walk you through that sequence, treat it as a risk signal and plan for more oversight from your team.

Step 2. Test communication discipline and scope control under stress. Risk often shows up after volume increases, not during a sales demo. One partner-evaluation source noted that hidden issues may surface once support and billing complexity rise, sometimes around the first 30 customers. That is not a universal threshold, but it is a useful reminder to ask what happens when blockers, revisions, and change requests pile up. You want a defined communication cadence and a clear method for documenting out-of-scope work.

Step 3. Confirm accountability terms, then move to price. Ask for the NDA and confidentiality terms early, because those documents help protect the client relationship you own. Start with qualifying questions. How do you intake scope? Who performs QA before handoff? How are change requests approved? What does your NDA cover? Once those answers are clear, move to pricing. How are revisions, rush work, and ongoing support billed?

For the legal side, see How to Structure a White Label Service Agreement. If you want a quick next step on pricing white-label service work, try the free invoice generator.

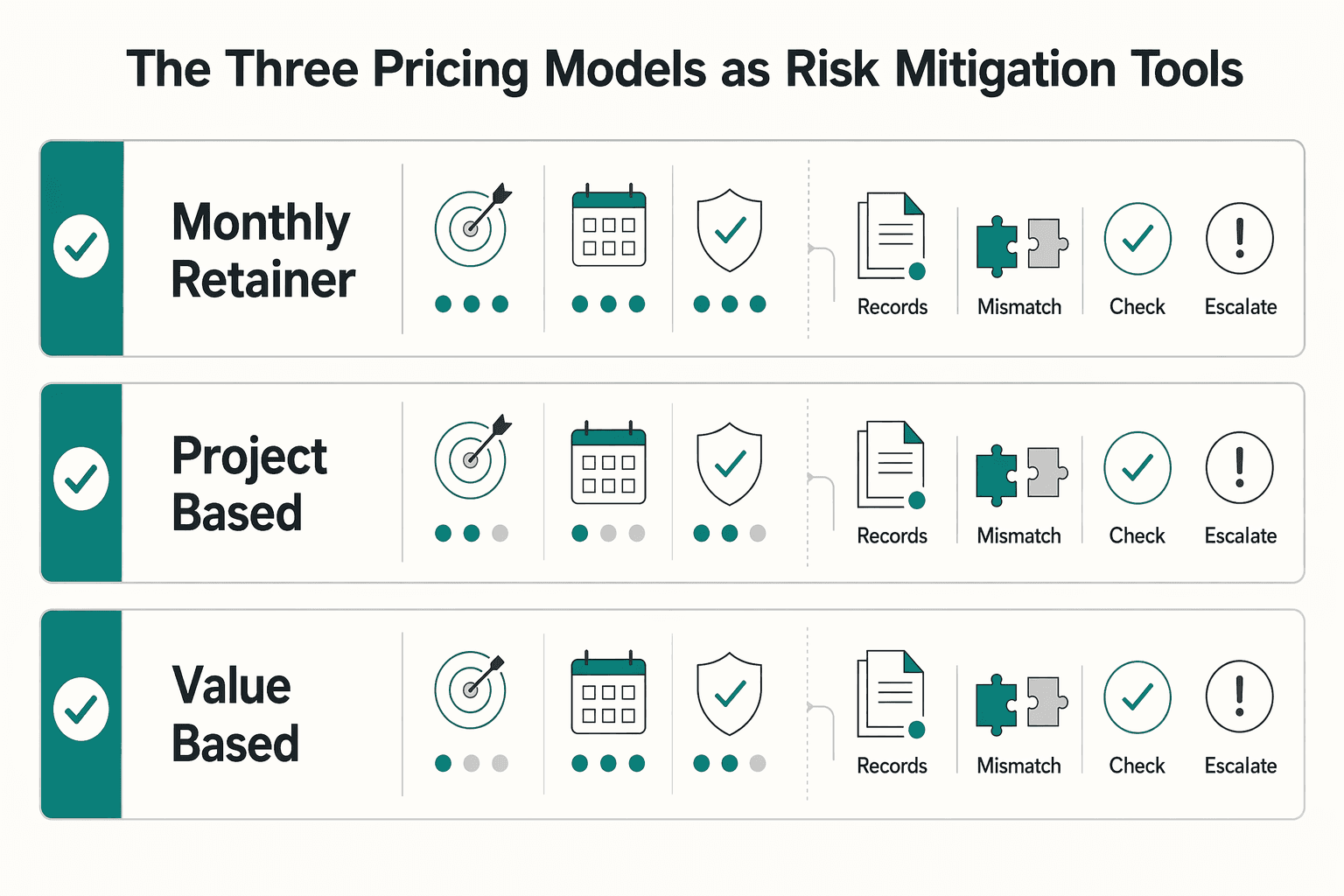

Choose your pricing model by risk fit first, then negotiate the rate. The right option matches scope clarity, change frequency, and accountability, while keeping cash-flow pressure manageable.

| Pricing model | Best fit | Cash-flow impact | Common failure mode | Required control |
|---|---|---|---|---|
| Monthly retainer | Ongoing work with a repeatable delivery pattern | Predictable monthly outflow | Scope creep, priority conflicts, duplicated work | SLA with clear service boundaries and escalation |
| Project-based | Defined deliverables with a clear finish line | Concentrated spend for a fixed scope | Ambiguous ownership, rework, approval disputes | SOW with deliverables, exclusions, checkpoints, and acceptance criteria |
| Value-based | Outcome-linked work with reliable measurement and attribution | Payment timing can vary by review period | Metric disputes, weak attribution, unclear baselines | Performance terms defining metric, data source, window, exclusions, and fallback rules |

If you cannot explain in one sentence why one row fits better than the other two, pause negotiations and tighten scope first.
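To make the one-sentence fit check concrete, here is a minimal Python sketch that maps a few yes/no answers onto the three rows of the pricing table. The `EngagementProfile` fields and the priority order (value-based, then project, then retainer) are assumptions for illustration, not a rule from any standard; adjust them to your own delivery pattern.

```python
from dataclasses import dataclass


@dataclass
class EngagementProfile:
    # Hypothetical yes/no answers mapped to the "Best fit" column of the pricing table.
    repeatable_delivery: bool  # the same kind of work recurs on a predictable pattern
    testable_scope: bool       # deliverables and a finish line can be written down before kickoff
    measurable_outcome: bool   # an outcome metric exists with a trusted data source and baseline
    agreed_attribution: bool   # both sides accept how results will be attributed


def suggest_pricing_model(p: EngagementProfile) -> str:
    """Return a starting-point pricing model, or a prompt to tighten scope first."""
    # Value-based terms only when measurement AND attribution are both clean.
    if p.measurable_outcome and p.agreed_attribution:
        return "value-based"
    # Project pricing when scope is testable before kickoff.
    if p.testable_scope:
        return "project-based"
    # Retainer only when the work is truly repeatable, not just "ongoing".
    if p.repeatable_delivery:
        return "monthly retainer"
    # No row fits cleanly: pause negotiations and tighten scope.
    return "tighten scope first"
```

For example, a profile with repeatable delivery but no testable scope and no agreed metric returns `"monthly retainer"`, while a profile where every answer is no returns the prompt to tighten scope.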

Use a retainer when work is truly repeatable, not just "ongoing." The SLA should define what is included, response or turnaround expectations, review limits, escalation steps, and out-of-scope handling. If "quick requests" keep showing up with no clear intake or approval path, expect the retainer to drift.

Use project pricing when scope is testable before kickoff. Your SOW should name deliverables, dependencies, timeline, handoff points, approvals, and acceptance standards in plain language. If inputs are missing or approval ownership is unclear before work starts, the project is not ready.

Use value-based terms only when measurement is clean and both sides can trust attribution. Performance terms should lock the baseline before kickoff and define what happens when data is missing, delayed, or disputed. If you are already disagreeing on metrics before execution begins, stop there.

Before finalizing rates, run a short fit check and record it in a risk register: key risks, owner, monitoring signal, and exception path. Keep controls proportionate to the engagement instead of forcing one template onto every job. Then move to ROI: does this model still hold up after oversight load, rework risk, and payment timing are included? We covered the pricing side in more detail in How to Price a Podcast Production Service.
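A risk register does not need special tooling. The Python sketch below assumes a register is just a list of entries with the four fields named above (key risk, owner, monitoring signal, exception path) and flags any entry that is still missing one of them. `RiskEntry` and `register_gaps` are hypothetical names, and the example entries are illustrative only.

```python
from dataclasses import dataclass, fields


@dataclass
class RiskEntry:
    risk: str
    owner: str
    monitoring_signal: str
    exception_path: str

    def missing_fields(self) -> list[str]:
        # A field counts as missing if it is empty or whitespace only.
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


def register_gaps(register: list[RiskEntry]) -> dict[str, list[str]]:
    """Map each incomplete entry (by risk name) to the fields it still needs."""
    gaps = {}
    for entry in register:
        missing = entry.missing_fields()
        if missing:
            gaps[entry.risk or "<unnamed risk>"] = missing
    return gaps


# Illustrative register: the second entry has no monitoring signal or exception path yet.
register = [
    RiskEntry("Scope drift on retainer", "Account lead",
              "Unlogged change requests per week", "Escalate to ops lead and pause new asks"),
    RiskEntry("Late client assets", "PM", "", " "),
]
```

Calling `register_gaps(register)` here reports only the "Late client assets" entry, which keeps the fit check proportionate: complete entries stay out of the way.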

Choose the option that improves profit and capacity after overhead and delivery risk, not the one with the lowest quote. Compare every path using the same three inputs: revenue impact, delivery cost, and reclaimed focus value.

| Cost source | Examples | Track with |
|---|---|---|
| Handoff friction | Brief rewrites, missing assets, unclear ownership, extra kickoff calls | Owner, estimated monthly hours, and evidence such as calendar records, PM comments, and revision history |
| Revision cycles | Avoidable change rounds, quality fixes, missed standards | Owner, estimated monthly hours, and evidence such as calendar records, PM comments, and revision history |
| Follow-ups | Status chasing, deadline nudges, blocked-item clarification | Owner, estimated monthly hours, and evidence such as calendar records, PM comments, and revision history |
| Rework | Error correction, file rebuilds, duplicate reporting, client-facing cleanup | Owner, estimated monthly hours, and evidence such as calendar records, PM comments, and revision history |

Step 1. Set your baseline loss before reviewing quotes. Start with your current inefficiency cost, because that is your real benchmark. In ROI terms, this is your baseline loss: time loss, errors, duplicate work, and slower delivery in your current workflow. Pull recent timesheets, ticket history, revision logs, follow-up threads, and manual reporting effort. If work depends on scattered spreadsheets, treat that as a warning signal because duplication and input errors can distort your estimate.
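If you want to keep the baseline arithmetic explicit, a small function is enough. This sketch assumes every loss category can be expressed as monthly hours and priced at a single loaded internal hourly cost; the category names and figures are illustrative placeholders, not benchmarks.

```python
def baseline_loss(monthly_hours_lost: dict[str, float], internal_hourly_cost: float) -> float:
    """Monthly cost of current inefficiency: total hours lost x loaded internal cost per hour."""
    if internal_hourly_cost < 0 or any(h < 0 for h in monthly_hours_lost.values()):
        raise ValueError("hours and hourly cost must be non-negative")
    return sum(monthly_hours_lost.values()) * internal_hourly_cost


# Illustrative inputs only; pull real figures from timesheets, tickets, and revision logs.
current_workflow = {
    "time loss": 10,
    "error fixes": 6,
    "duplicate work": 4,
    "slower delivery (waiting, re-sequencing)": 5,
}
monthly_baseline = baseline_loss(current_workflow, internal_hourly_cost=60)
```

With these placeholder numbers the baseline is 25 hours, or 1,500 per month at the assumed hourly cost; that figure becomes the benchmark each quote has to beat.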

Use the same structure for every option:

- Absolute profit = Revenue impact + Reclaimed focus value - Delivery cost
- ROI % = Absolute profit / Delivery cost x 100
- Net hours saved = Execution hours avoided - Oversight hours introduced

Verification point: if two reviewers cannot land on similar estimates for delays, approvals, and rework, your baseline is too weak for a decision.
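The profit, ROI, and net-hours formulas translate directly into code. This Python sketch implements them and adds a hypothetical `estimates_agree` helper for the verification point; the 15% relative tolerance is an assumption, so pick a threshold your team actually accepts.

```python
def absolute_profit(revenue_impact: float, reclaimed_focus_value: float, delivery_cost: float) -> float:
    return revenue_impact + reclaimed_focus_value - delivery_cost


def roi_percent(profit: float, delivery_cost: float) -> float:
    if delivery_cost <= 0:
        raise ValueError("delivery cost must be positive to compute ROI %")
    return profit / delivery_cost * 100


def net_hours_saved(execution_hours_avoided: float, oversight_hours_introduced: float) -> float:
    return execution_hours_avoided - oversight_hours_introduced


def estimates_agree(reviewer_a: float, reviewer_b: float, tolerance: float = 0.15) -> bool:
    """Hypothetical verification check: two estimates differ by at most `tolerance` (relative)."""
    larger = max(abs(reviewer_a), abs(reviewer_b))
    if larger == 0:
        return True
    return abs(reviewer_a - reviewer_b) / larger <= tolerance
```

With illustrative inputs, `absolute_profit(12000, 1800, 9000)` gives 4800 and `roi_percent(4800, 9000)` gives roughly 53.3. If two reviewers estimate 20 and 30 hours of rework, `estimates_agree` returns `False`, which is your signal to tighten the baseline before comparing quotes.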

Step 2. Include full delivery cost, including micromanagement tax. Do not treat the partner fee as the full investment. Delivery cost should include quoted fees, internal PM time, QA time, client communication time, usage-based charges, and any required tools.

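Account for the micromanagement tax explicitly before approval, using the four cost sources from the cost-source table: handoff friction, revision cycles, follow-ups, and rework.

For each line, assign an owner, estimated monthly hours, and evidence (calendar records, PM comments, revision history).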
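To keep the partner fee from standing in for the whole investment, the sketch below adds internal hours to the quoted and usage-based costs. It assumes one loaded hourly cost for your team and treats the four cost sources as dictionary keys; PM, QA, or client-communication hours could be added as extra keys. All names and numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DeliveryCostInputs:
    partner_fees: float               # quoted fees for the period
    usage_charges: float              # usage-based charges, if any
    tool_costs: float                 # tools you must add for this engagement
    internal_hours: dict[str, float]  # monthly hours by cost source (the micromanagement tax)
    internal_hourly_cost: float       # assumed loaded cost of one hour of your team's time


def micromanagement_tax(c: DeliveryCostInputs) -> float:
    return sum(c.internal_hours.values()) * c.internal_hourly_cost


def full_delivery_cost(c: DeliveryCostInputs) -> float:
    # The partner fee is only one line; internal time is part of the investment.
    return c.partner_fees + c.usage_charges + c.tool_costs + micromanagement_tax(c)


example = DeliveryCostInputs(
    partner_fees=6000,
    usage_charges=300,
    tool_costs=200,
    internal_hours={"handoff friction": 6, "revision cycles": 10, "follow-ups": 5, "rework": 4},
    internal_hourly_cost=60,
)
```

In this example a 6,000 quote becomes an 8,000 delivery cost once 25 internal hours are priced in, which is the number that belongs in the ROI comparison.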

Step 3. Compare ratio, cash, and capacity side by side. A higher ROI percentage can still be the worse choice if it produces less profit or frees fewer hours.

| Option | Revenue impact | Delivery cost | Reclaimed focus value | Absolute profit | ROI % | Capacity recovered |
|---|---|---|---|---|---|---|
| Keep in-house | Insert current revenue preserved or added | Insert internal labor cost + tool cost | 0 | Revenue impact - Delivery cost | Absolute profit / Delivery cost x 100 | 0 hrs |
| Partner A | Insert estimated project revenue after ramp timing | Insert quote + internal oversight + tool/usage costs | Insert net hours saved x verified billable rate | Revenue impact + Reclaimed focus value - Delivery cost | Absolute profit / Delivery cost x 100 | Insert net hours saved |
| Partner B | Insert estimated project revenue after ramp timing | Insert quote + internal oversight + tool/usage costs | Insert net hours saved x verified billable rate | Revenue impact + Reclaimed focus value - Delivery cost | Absolute profit / Delivery cost x 100 | Insert net hours saved |
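The Step 3 comparison can be sketched in Python by ranking the same options three ways. The option names echo the table; the figures are invented solely to show how a high ROI percentage can coexist with lower absolute profit and fewer recovered hours.

```python
from dataclasses import dataclass


@dataclass
class Option:
    name: str
    revenue_impact: float
    delivery_cost: float
    reclaimed_focus_value: float
    net_hours_saved: float

    @property
    def profit(self) -> float:
        return self.revenue_impact + self.reclaimed_focus_value - self.delivery_cost

    @property
    def roi_percent(self) -> float:
        # Assumes delivery_cost > 0 for every option being compared.
        return self.profit / self.delivery_cost * 100


def rank(options: list[Option]) -> dict[str, list[str]]:
    """Rank option names three ways so a ratio winner cannot hide a profit or capacity loser."""
    return {
        "by_roi": [o.name for o in sorted(options, key=lambda o: o.roi_percent, reverse=True)],
        "by_profit": [o.name for o in sorted(options, key=lambda o: o.profit, reverse=True)],
        "by_capacity": [o.name for o in sorted(options, key=lambda o: o.net_hours_saved, reverse=True)],
    }


# Illustrative figures only.
options = [
    Option("Keep in-house", revenue_impact=10_000, delivery_cost=8_000, reclaimed_focus_value=0, net_hours_saved=0),
    Option("Partner A", revenue_impact=4_000, delivery_cost=2_000, reclaimed_focus_value=500, net_hours_saved=10),
    Option("Partner B", revenue_impact=12_000, delivery_cost=9_000, reclaimed_focus_value=1_800, net_hours_saved=30),
]
```

Here Partner A leads on ROI % (125%) but trails Partner B on both absolute profit and recovered hours, which is exactly the trap Step 3 describes.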

Include timeline assumptions in revenue impact. If one partner starts faster or reduces reporting and approval lag sooner, that changes return.

Step 4. Risk-adjust base ROI before deciding. After base ROI, add confidence bands for delivery reliability and failure impact. Score each option High/Medium/Low for reliability, based on references, sample work, response behavior, and a small live test, and Low/Medium/High for failure impact, based on margin loss, client trust loss, or both.

Then stress-test the leading option with a downside case: later-than-planned revenue and one extra revision cycle. If it only works in the optimistic case, it is not your best option.
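The downside case can be expressed as a small transformation of the base scenario. This sketch models "later-than-planned revenue" as a fraction of revenue that slips outside your evaluation window and the extra revision cycle as a flat added cost. Both simplifications are assumptions, and the reliability and failure-impact scores stay a separate, judgment-based input.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    revenue_impact: float
    reclaimed_focus_value: float
    delivery_cost: float

    @property
    def profit(self) -> float:
        return self.revenue_impact + self.reclaimed_focus_value - self.delivery_cost


def downside_case(base: Scenario, revenue_shortfall: float, extra_revision_cost: float) -> Scenario:
    """Late revenue (share slipping outside the window) plus one extra revision cycle."""
    if not 0 <= revenue_shortfall <= 1:
        raise ValueError("revenue_shortfall must be a fraction between 0 and 1")
    return Scenario(
        revenue_impact=base.revenue_impact * (1 - revenue_shortfall),
        reclaimed_focus_value=base.reclaimed_focus_value,
        delivery_cost=base.delivery_cost + extra_revision_cost,
    )


def survives_downside(base: Scenario, revenue_shortfall: float, extra_revision_cost: float) -> bool:
    # The option only "works" if it still makes a profit in the downside case.
    return downside_case(base, revenue_shortfall, extra_revision_cost).profit > 0


base = Scenario(revenue_impact=12_000, reclaimed_focus_value=1_800, delivery_cost=9_000)
```

With these illustrative numbers, a 25% revenue slip plus a 1,200 revision cycle still leaves 600 profit, while a 40% slip pushes the same option negative.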

Final checkpoint: run parallel testing and validation before trusting projected ROI. A small paid pilot or limited first sprint helps confirm whether projected gains hold in real delivery conditions.

If you want a deeper dive, read How to Calculate Your Billable Rate as a Freelancer.

Your low quote is only a win if delivery stays clear, manageable, and billable. If coordination load or scope ambiguity rises, your margin can erode and payment timing can slip.

| Danger | Early Warning Sign | Immediate Mitigation Action |
|---|---|---|
| Delivery mismatch | They pitch "custom" outcomes but describe a mostly standard process | Confirm what is fixed, what is configurable, and what inputs you must provide before kickoff |
| Micromanagement load | You are rewriting briefs, chasing updates, or fixing files before work even starts | Run a small paid test and track your oversight time alongside their output |
| Contract ambiguity | The agreement is thin, unlabeled, or unclear on core responsibilities | Mark up the draft and require clear sections for ownership, confidentiality, acceptance, rework, and disputes |
| Scope drift | Extra requests are approved in chat without a formal change path | Put every change in writing and tie scope changes to delivery, acceptance, and billing |

1) Delivery mismatch: what fails, how it shows up, what it costs. The first thing that breaks is the fit between what your client expects and what your partner is actually built to deliver. You usually see it early through vague discovery, recycled examples, or unclear answers on customization. If you ignore it, you absorb extra revision cycles and cleanup work that reduce the value of the original quote.

2) Micromanagement load: what fails, how it shows up, what it costs. Here the problem is operating flow, not necessarily technical skill. Early signs include unclear ownership, repeated status chasing, missing inputs, and handoffs that come back half-finished. If that pattern continues, your reclaimed capacity turns into oversight time, your margin tightens, and approvals can slow enough to delay invoicing.

3) Contract ambiguity: what fails, how it shows up, what it costs. The problem here is whether day-to-day expectations are enforceable. You see it early when terms covering IP ownership, confidentiality, acceptance criteria, rework responsibility, and the dispute process are buried, unlabeled, or missing. A practical review checkpoint is clause identification itself: every obligation should sit in a clearly labeled clause, the kind of discipline reflected in DFARS 252.103 and Subpart 252.2. Unclear structure now becomes expensive disagreement later.

4) Scope drift: what fails, how it shows up, what it costs. Here the breakdown is scope control across delivery and billing. It usually appears as "quick extras," informal revisions, or unpriced asks handled outside an approval path. If you let that continue, scope creep and micromanagement combine to erode margin and push payment further out.

Use these signals as your bridge into due diligence: verify how the partner works before you commit, not after issues show up. For a step-by-step walkthrough, see How to Price a 'Productized' Consulting Service.

Do the diligence before you sign, not after the first delivery miss. If a partner cannot show how they operate, protect data, and hand work back cleanly, treat that as a fail regardless of price.

| Step | Ask for or test | Pass if | Fail if |
|---|---|---|---|
| Process proof | Anonymized project plan, sample communication log, client reporting template, and a live workflow demo | They can show repeatable execution and explain how they prevent branding leaks and client data mixing | They stay at feature-list level, rely on screenshots, or cannot explain how they prevent branding leaks and client data mixing |
| SLA language | Translate each promise into the event, owner, channel, measurement point, and what happens when targets are missed | Each promise is enforceable | Promises stay vague instead of naming the event, owner, channel, measurement point, and what happens when targets are missed |
| Contract control | Acceptance criteria, revision ownership and rework responsibility, subcontractor disclosure, data handling obligations, termination handoff requirements, IP ownership, and confidentiality | The draft is explicit on those terms | Key terms are buried, unlabeled, or missing |
| Communication stress test | One email with specific questions, one change-request scenario, and one deadline check | They answer each item directly, name the day-to-day contact and escalation contact, and confirm where approvals and status updates live | Ownership is vague, answers are partial, or scope control drifts into ad hoc messages |
| Off ramp | Notice terms, handoff responsibilities, return or deletion of client data, transfer of in-flight files, credential rotation, and final reporting exports | The exit path is documented before kickoff | They cannot explain how you recover access, assets, and continuity on exit |

Step 1. Ask for process proof, not portfolio highlights. Pass only if they can show repeatable execution, not just polished outcomes. Request an anonymized project plan, a sample communication log, a client reporting template, and a live workflow demo.

In that demo, ask them to create a client workspace or sub-account, connect mailboxes when relevant, run a delivery cycle, and export branded reporting. Fail if they stay at feature-list level, rely on screenshots, or cannot explain how they prevent branding leaks and client data mixing.

Step 2. Translate vague SLA language into terms you can enforce. Pass only when each promise names the event, owner, channel, measurement point, and what happens when targets are missed.

| Vague wording | Ask them to define it as | Pass if | Fail if |
|---|---|---|---|
| "Timely responses" | Response target by issue level, covered channels, business hours, and escalation contact, with the target confirmed in the contract | You can point to a measurable target and a named escalation route | "We usually reply fast" |
| "Regular updates" | Status cadence, format, owner, delivery day, and blockers section, with the cadence confirmed in the contract | Updates are scheduled and attributable | Updates depend on memory |
| "High quality delivery" | QA steps, reviewer role, acceptance checklist, rework trigger | QA and acceptance are visible, separate steps | Quality is only a promise |
| "Secure handling" | Access rules, storage location, subcontractor access, and deletion or return on exit, with controls confirmed in their written policy | They can explain their security posture in plain terms | Security is answered with a generic checklist only |
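Step 2's pass rule lends itself to a simple completeness check. The Python sketch below treats each SLA promise as a record with the five elements the step names (event, owner, channel, measurement point, consequence of a miss) and reports which are missing. Field names such as `measurement_point` and `miss_consequence` are hypothetical labels, and a fully specified record is only a prompt to check the contract wording, not proof that the term is enforceable.

```python
from dataclasses import dataclass
from typing import Optional

# The five elements every promise must name before it can pass Step 2.
REQUIRED_ELEMENTS = ("event", "owner", "channel", "measurement_point", "miss_consequence")


@dataclass
class SlaPromise:
    wording: str
    event: Optional[str] = None
    owner: Optional[str] = None
    channel: Optional[str] = None
    measurement_point: Optional[str] = None
    miss_consequence: Optional[str] = None

    def gaps(self) -> list[str]:
        # An element is a gap if it is None, empty, or whitespace only.
        return [name for name in REQUIRED_ELEMENTS if not (getattr(self, name) or "").strip()]

    def fully_specified(self) -> bool:
        return not self.gaps()


vague = SlaPromise("Timely responses")
defined = SlaPromise(
    "Timely responses",
    event="Priority-1 issue reported",
    owner="Partner delivery lead",
    channel="Shared support inbox",
    measurement_point="Timestamp of first human reply",
    miss_consequence="Escalate to the named escalation contact; remedy per contract",
)
```

Running every promise from the draft SLA through `gaps()` gives you a concrete markup list to send back instead of a general request for "clearer terms."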

Step 3. Mark up the contract for control, not just coverage. Pass only if the draft is explicit on acceptance criteria, revision ownership and rework responsibility, subcontractor disclosure, data handling obligations, termination handoff requirements, IP ownership, and confidentiality.

Third-party vendors add cybersecurity and data-protection risk. If the terms are unclear, your downstream exposure is larger when the vendor has a breach. If this partner is critical to your delivery, use an independent reviewer or counsel to reduce blind spots.

Step 4. Run a communication stress test before onboarding. Treat this as a pre-onboarding protocol, not a casual chat. Send one email containing specific questions, one change-request scenario, and one deadline check; then document response time, completeness, and whether scope stays in written approvals.

Pass if they answer each item directly, name the day-to-day contact, name the escalation contact, and confirm where approvals and status updates live. Fail if ownership is vague, answers are partial, or scope control drifts into ad hoc messages.
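You can log the stress test in a structured way so the pass/fail call is not left to memory. This sketch assumes three items (questions email, change-request scenario, deadline check) and encodes the pass criteria from Step 4; response time is recorded for comparison across partners, but no pass threshold is assumed for it. The class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class StressTestResult:
    items_sent: int
    items_answered_directly: int
    day_to_day_contact_named: bool
    escalation_contact_named: bool
    approvals_location_confirmed: bool
    scope_kept_in_writing: bool
    response_hours: float  # logged for comparison only; no threshold assumed here

    def passes(self) -> bool:
        # Every item answered directly, both contacts named, approvals location
        # confirmed, and scope stayed in written approvals.
        return (
            self.items_answered_directly == self.items_sent
            and self.day_to_day_contact_named
            and self.escalation_contact_named
            and self.approvals_location_confirmed
            and self.scope_kept_in_writing
        )


good = StressTestResult(3, 3, True, True, True, True, response_hours=6.5)
partial = StressTestResult(3, 2, True, False, True, False, response_hours=30.0)
```

Keeping the same record for every candidate makes it easy to compare partners side by side, including how long each took to respond.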

Step 5. Define the off ramp before work starts. Pass only if the exit path is documented before kickoff. Confirm notice terms, handoff responsibilities, return or deletion of client data, transfer of in-flight files, credential rotation, and final reporting exports.

Fail if they cannot explain how you recover access, assets, and continuity on exit. That is dependency risk, not operational reliability.

This pairs well with our guide on How to price a 'Branding Package' for a new business.

If you take one rule from this guide, make it this: do not treat partner selection like a lowest-bid purchase. The number matters, but the decision is really about risk, cash flow, and whether the partner can deliver under your name without creating cleanup cost.

A useful reality check comes from outside agency work. An independent live pricing experiment with 437 shoppers across four cities found that displayed prices for the same grocery item could differ at the same store and time, in some cases showing as many as five prices. That does not prove anything about agency partnerships, but it is a useful reminder that headline pricing and real value are not the same thing. If pricing transparency can break down that easily, you should verify what sits behind every quote.

Before you sign, run one final screen against five items: pricing model fit, clear scope boundaries, revision and approval flow, expected oversight load from your team, and escalation paths when delivery slips. Then compare options against those controls, not just the fee. Choose the partner that protects profitability and service delivery, not the one with the lowest headline price.

You might also find this useful: How to Price a Bookkeeping Service for Small Businesses.

Start with a work breakdown so you estimate the real pieces of delivery instead of using one blended guess. Write down your assumptions for volume, revision rounds, turnaround time, tools, and the hours you will still spend on oversight. Validate the input data before you lock the estimate, then compare partner cost plus your internal review time against the revenue you can sell or the capacity you free up. Pressure-test the result with low, base, and high demand scenarios, plus a risk-and-uncertainty check.
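The low, base, and high cases can be run from one set of unit assumptions. This sketch assumes a per-deliverable price, a per-deliverable partner cost, a fixed number of internal review hours per deliverable, and a fixed monthly overhead. Every figure is a placeholder, and a real estimate should also carry failure costs such as rework, client credits, and delayed billing.

```python
from dataclasses import dataclass


@dataclass
class UnitAssumptions:
    price_per_unit: float         # what you bill the client per deliverable
    partner_cost_per_unit: float  # partner quote per deliverable
    review_hours_per_unit: float  # your oversight and QA hours per deliverable
    internal_hourly_cost: float   # loaded cost of those hours
    fixed_monthly_cost: float     # tools and other costs that do not scale with volume


def monthly_margin(units: int, a: UnitAssumptions) -> float:
    per_unit = a.price_per_unit - a.partner_cost_per_unit - a.review_hours_per_unit * a.internal_hourly_cost
    return units * per_unit - a.fixed_monthly_cost


def scenario_margins(volumes: dict[str, int], a: UnitAssumptions) -> dict[str, float]:
    return {name: monthly_margin(units, a) for name, units in volumes.items()}


assumptions = UnitAssumptions(
    price_per_unit=1500,
    partner_cost_per_unit=900,
    review_hours_per_unit=3,
    internal_hourly_cost=60,
    fixed_monthly_cost=1000,
)
margins = scenario_margins({"low": 2, "base": 5, "high": 10}, assumptions)
```

With these placeholders the low case loses money even though each deliverable looks profitable on its own, which is the kind of result the low/base/high check is meant to surface.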

Add the hours you will spend briefing, reviewing, chasing updates, and fixing avoidable errors. Then add failure costs such as rework, client credits, delayed billing, and the opportunity cost of sales time lost while you manage delivery problems. A useful checkpoint is to ask upfront for an anonymized reporting sample, a QA checklist, and one example of how revisions are handled. If a provider cannot show those, treat the quote as incomplete.

Do not rely on a blanket claim that one model is always more profitable. Build an estimate for your own demand pattern, management bandwidth, and risk tolerance, then test it against low, base, and high cases.

| Criteria | White-label partner | In-house hire |
|---|---|---|
| Cost structure | Model fees, internal review time, and likely rework in your forecast | Model salary, tools, taxes, management time, and utilization in your forecast |
| Control | Define review points, approvals, and acceptance criteria before work starts | Define internal QA ownership, approval paths, and backup coverage |
| Speed to add capacity | Verify realistic start dates and throughput commitments | Estimate recruiting, onboarding, and ramp time before full output |
| Risk ownership | Map handoffs and acceptance points so accountability is explicit | Map escalation paths and contingency plans for internal delivery gaps |
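To put the two cost structures on the same footing, you can convert each to a cost per productive hour. This sketch assumes in-house cost is salary, taxes and benefits, tools, and management time spread over utilized hours, and that partner rework can be approximated as a percentage uplift on the partner rate. Both models and all sample inputs are illustrative assumptions.

```python
def in_house_cost_per_productive_hour(
    annual_salary: float,
    annual_taxes_and_benefits: float,
    annual_tools: float,
    annual_management_hours: float,
    manager_hourly_cost: float,
    annual_paid_hours: float,
    utilization: float,
) -> float:
    """Fully loaded in-house cost divided by the hours that actually produce client work."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    total = (annual_salary + annual_taxes_and_benefits + annual_tools
             + annual_management_hours * manager_hourly_cost)
    return total / (annual_paid_hours * utilization)


def partner_cost_per_productive_hour(
    partner_hourly_rate: float,
    rework_share: float,
    review_hours_per_partner_hour: float,
    internal_hourly_cost: float,
) -> float:
    """Partner rate inflated by expected rework, plus your internal review time per hour delivered."""
    if rework_share < 0:
        raise ValueError("rework_share must be non-negative")
    return partner_hourly_rate * (1 + rework_share) + review_hours_per_partner_hour * internal_hourly_cost


# Placeholder inputs only.
in_house = in_house_cost_per_productive_hour(60_000, 12_000, 3_000, 100, 80, 1_800, 0.7)
partner = partner_cost_per_productive_hour(55, 0.10, 0.2, 60)
```

With these placeholder inputs the in-house hire lands near 65.9 per productive hour and the partner near 72.5. The number covers only the cost-structure row; control, speed to add capacity, and risk ownership still need their own assessment.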

Do not assume those labels mean the same thing from one provider to another. Ask four direct questions: who appears to the client, who owns the relationship, who handles support, and whether any third-party branding appears in reports, portals, or email. If those answers are fuzzy, the naming does not matter because your delivery risk is still high.

A practical approach is to keep scope, acceptance criteria, revision limits, and out-of-scope items in writing. Then agree on response expectations, reporting cadence, and a clear escalation contact, and decide where approvals and change requests will live before kickoff. A simple check helps here: send one test change request before launch and confirm who approves it, who executes it, and what happens if the deadline slips.

Build review points into the service instead of relying on end-of-project fixes. Agree on a sample deliverable, a reviewer on each side, and a sign-off point before anything reaches your client. During the first live cycle, check exported reports, sender names, shared folders, and client-facing dashboards so brand leakage or mixed client data does not slip through.

Recheck your assumptions, your billable-rate math, and your exit path one last time. Make sure the quote you approved matches the actual scope, governance terms, and reporting you will use day to day. If you want a tighter pricing baseline, review our billable rate guide. Then use our white-label partnership structuring guide to lock down roles, communication, and off-ramp terms before you commit.

Chloé is a communications expert who coaches freelancers on the art of client management. She writes about negotiation, project management, and building long-term, high-value client relationships.

With a Ph.D. in Economics and over 15 years of experience in cross-border tax advisory, Alistair specializes in demystifying cross-border tax law for independent professionals. He focuses on risk mitigation and long-term financial planning.

Educational content only. Not legal, tax, or financial advice.
