Use a staged scope to price data science work whose value depends on model performance. Start in the CRISP-DM Business Understanding phase, confirm the decision owner and baseline source, and sell a paid validation phase if either is unclear. Quote larger work only after a written go or no-go review, then tie invoices to acceptance evidence such as a feasibility memo, recommendation set, or experiment design instead of open-ended ROI claims.

To price a data science project well, do not rely on hourly billing alone. Many buyers want a total spend they can budget for, and you need protection against extra effort that never turns into better pay. The real job is to turn technical effort into a priced business decision, not just a day rate.

That does not mean pretending uncertainty is gone. It means matching the fee to what is known, what still needs to be verified, and what the client is actually buying: analysis, recommendations, a decision-support tool, or a staged path to deployment.

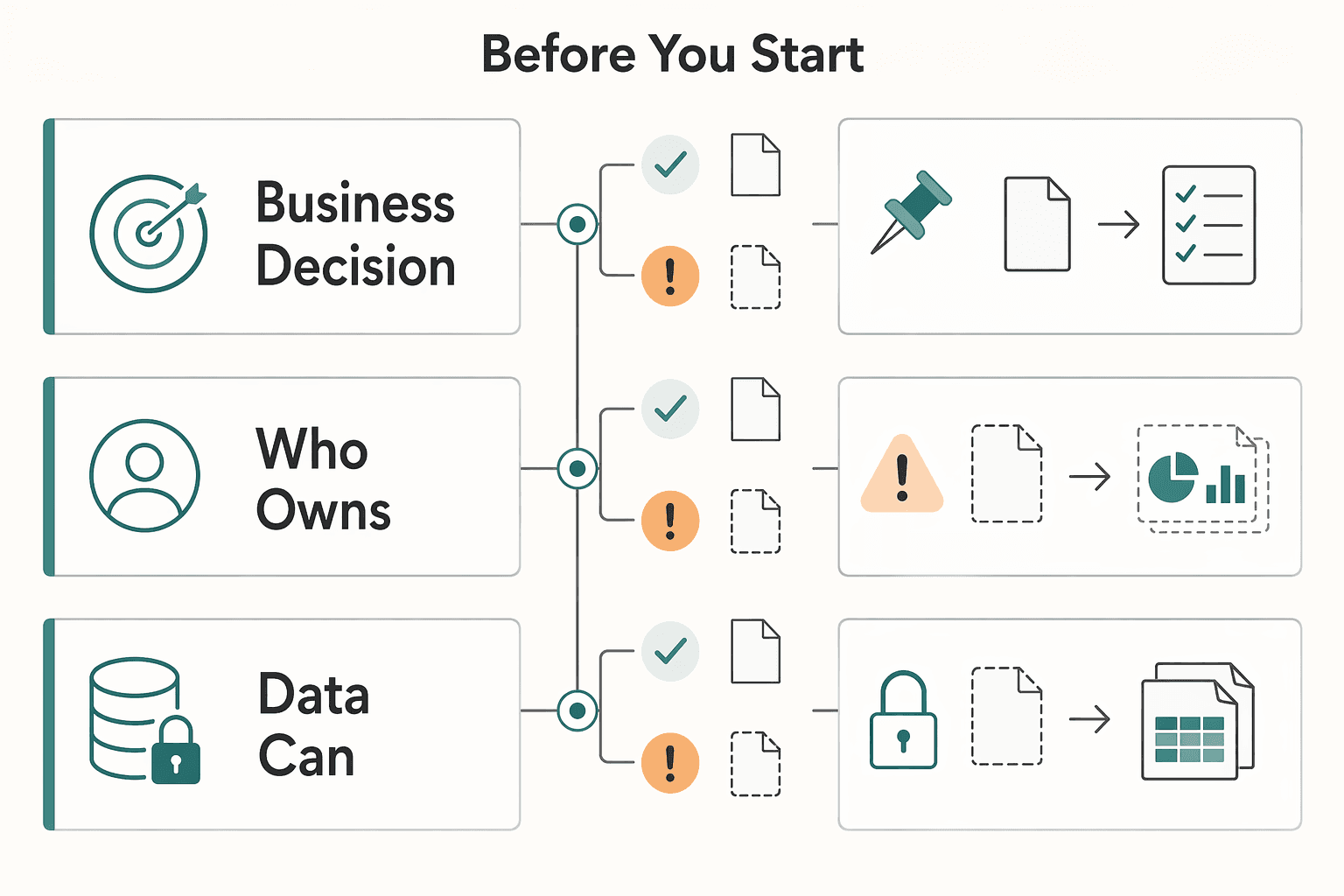

Do not quote a full deployment if the client cannot answer three basic questions: what business decision will change, who owns the KPI, and what data you can actually access. If any of those are vague, sell a smaller discovery phase first.

| Basic question | Where it shows up in scoping | If the answer is vague |
|---|---|---|
| What business decision will change? | Start with the decision, not the model | Sell a smaller discovery phase first |
| Who owns the KPI? | The outcome statement names the business owner | Sell a smaller discovery phase first |
| What data can you actually access? | The scoping pack records data-access status | Sell a smaller discovery phase first |

That smaller phase should not be framed as a placeholder. It should produce a concrete scoping pack: confirmed decision owner, baseline source, data-access status, major assumptions, main risks, and a written go or no-go review. If the client wants a firm number before any of that exists, your safer move is to narrow the promise, not guess harder.

Step 1. Define the business outcome in one sentence. Start with the decision, not the model. "Improve pricing" is too broad. "Help the pricing team make daily price recommendations using current sales and product data" is specific enough to scope. In one online retail case, the useful outcome was a daily pricing decision-support tool, not a model score on its own.

A good outcome sentence should be usable in the proposal, the kickoff, and the acceptance review without changing meaning. If you keep having to translate the project from technical language into business language, the scope is still loose. The sentence should make it obvious what the client will do differently if the work succeeds.

Checkpoint: your outcome statement should name the decision, business owner, review cadence, and current baseline source. If the client cannot point to where the baseline lives, you are not ready to estimate value.
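If you track scoping notes in code or export them from a spreadsheet, this checkpoint can be expressed as a small record that will not report the scope as ready while any field is blank. This is a minimal sketch; the class name `OutcomeStatement`, its field names, and the example values are hypothetical, not part of any standard or tool.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class OutcomeStatement:
    decision: str                   # the business decision that will change
    business_owner: str             # who owns the KPI
    review_cadence: str             # e.g. "daily"
    baseline_source: Optional[str]  # where the current baseline lives, if known

    def missing_fields(self) -> List[str]:
        """Checkpoint fields that are still blank."""
        names = ("decision", "business_owner", "review_cadence", "baseline_source")
        return [n for n in names if not (getattr(self, n) or "").strip()]

    def ready_to_estimate_value(self) -> bool:
        """Only estimate value once every checkpoint field is filled in."""
        return not self.missing_fields()


stmt = OutcomeStatement(
    decision="Daily price recommendations using current sales and product data",
    business_owner="Head of Pricing",  # hypothetical owner
    review_cadence="daily",
    baseline_source=None,              # the client has not said where the baseline lives
)
print(stmt.missing_fields())  # ['baseline_source']
```

A blank `baseline_source` is the signal to stay in discovery rather than estimate value.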

Also watch for cadence mismatch early. If the decision is supposed to happen daily but the key data arrives late or is refreshed on a different rhythm, that is not a minor delivery detail. It changes what you can credibly scope, how you validate the work, and whether the client is buying analysis, recommendations, or something operational.
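One rough way to spot a cadence mismatch before scoping is to compare the worst-case age of the data at decision time with the decision interval. The sketch below assumes a deliberately strict rule of thumb (worst-case staleness is one refresh interval plus the arrival lag, and it must not exceed one decision cycle). The function name and the threshold are illustrative assumptions; agree the real tolerance with the client.

```python
from datetime import timedelta


def cadence_supports_decision(
    decision_interval: timedelta,
    refresh_interval: timedelta,
    arrival_lag: timedelta,
) -> bool:
    """Hypothetical rule of thumb for spotting cadence mismatch.

    Just before a refresh lands, the newest data can be up to one full
    refresh interval plus the arrival lag old. If that worst case is longer
    than the decision interval, some decisions rely on data older than the
    previous decision cycle.
    """
    worst_case_staleness = refresh_interval + arrival_lag
    return worst_case_staleness <= decision_interval


# Daily pricing decisions fed by a weekly extract that lands a day late.
print(cadence_supports_decision(timedelta(days=1), timedelta(days=7), timedelta(days=1)))  # False
# Daily decisions fed by a daily refresh with no lag.
print(cadence_supports_decision(timedelta(days=1), timedelta(days=1), timedelta(0)))  # True
```

A `False` result does not end the project by itself; it suggests the client may be buying periodic analysis rather than a daily operational tool.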

Step 2. Map the model metric to a business KPI and then to a payment checkpoint. A technical metric matters only if it changes something the business already tracks. For pricing work, that can mean moving from forecast quality to revenue, profitability, or reduced lost sales. In the retailer case, the work had to estimate lost sales, forecast demand for new products, and account for cross-product price effects. That is the reminder here: a clean accuracy number on one item can miss the real pricing problem.

Use a simple chain like this in your proposal: forecast error improvement -> fewer bad pricing decisions -> measurable revenue or profitability effect -> payment tied to delivery and acceptance of the agreed analysis, recommendation set, or experiment design.

That last link matters. You do not want your fee hanging on a vague future outcome that depends on people, timing, approvals, and execution choices outside your control. Tie business logic to the work, then tie payment to deliverables and acceptance events the client can actually approve.

If you can test pricing changes, say so. Price elasticity testing and A/B testing give you a better bridge between model performance and business impact than a slide full of offline metrics.
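When a price test is possible, the bridge from test result to business impact can start with a simple elasticity estimate. This sketch applies the standard midpoint (arc) elasticity formula to a single product with one control price and one test price. It assumes the two arms were comparable (for example, randomized) and ignores cross-product price effects, so treat the number as one input to the value range, not a finding. The function name and the test figures are hypothetical.

```python
def arc_price_elasticity(
    control_price: float,
    control_units: float,
    test_price: float,
    test_units: float,
) -> float:
    """Midpoint (arc) price elasticity between a control arm and a test arm.

    Percentage changes are measured against the average of the two values,
    so the result does not depend on which arm is treated as the starting point.
    """
    if test_price == control_price:
        raise ValueError("control and test prices must differ")
    pct_change_units = (test_units - control_units) / ((test_units + control_units) / 2)
    pct_change_price = (test_price - control_price) / ((test_price + control_price) / 2)
    return pct_change_units / pct_change_price


# Hypothetical test: raising the price from 10.00 to 11.00 cut units from 1,000 to 900.
elasticity = arc_price_elasticity(10.0, 1000.0, 11.0, 900.0)
print(round(elasticity, 2))  # -1.11
```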

It also helps to state what will count as evidence at each stage. In an early phase, evidence may be a feasibility memo, data review, baseline check, and recommendation on whether testing is justified. In a later phase, evidence may be a recommendation set, experiment design, or accepted deployment deliverable. When those proof points are named up front, it becomes easier to defend the fee and harder for the project to drift into endless "just one more analysis."

Step 3. Estimate a value range, not a promise. Build three cases: conservative, base, and upside. Use the client's own numbers for volume, average order value, profitability, or current pricing error cost. If a client wants certainty you cannot support, slow the sale down and move to a discovery phase.
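A minimal sketch of the three-case range, assuming the value driver is a percentage uplift on revenue converted to gross profit. The field names and the uplift percentages are hypothetical placeholders: order volume, average order value, and margin should come from the client, while the uplifts are exactly the kind of unverified assumption a validation phase should test.

```python
from dataclasses import dataclass
from typing import Dict, Mapping


@dataclass(frozen=True)
class ClientInputs:
    annual_orders: int          # client-owned volume figure
    average_order_value: float  # client-owned, in the client's currency
    gross_margin: float         # client-owned, e.g. 0.30 for 30%


# Hypothetical uplift assumptions, not verified numbers.
DEFAULT_UPLIFTS: Dict[str, float] = {"conservative": 0.005, "base": 0.01, "upside": 0.02}


def value_range(inputs: ClientInputs, uplifts: Mapping[str, float] = DEFAULT_UPLIFTS) -> Dict[str, float]:
    """Annual gross-profit effect for each case: a range to discuss, not a promise."""
    annual_revenue = inputs.annual_orders * inputs.average_order_value
    return {
        case: round(annual_revenue * uplift * inputs.gross_margin, 2)
        for case, uplift in uplifts.items()
    }


print(value_range(ClientInputs(annual_orders=100_000, average_order_value=50.0, gross_margin=0.30)))
# {'conservative': 7500.0, 'base': 15000.0, 'upside': 30000.0}
```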

The discipline here is not just building three scenarios. It is separating verified inputs from assumptions. Put the client-owned inputs in one place, label any unverified assumption clearly, and show which parts of the value logic still need to be tested. That keeps the commercial conversation honest and gives you a cleaner path to say, "This phase is priced around validating the assumptions that drive the larger estimate."
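The split between verified inputs and assumptions can be kept as a small log. This sketch is illustrative: the `ValueInput` fields and the example entries are hypothetical, and in practice the same log can live in a spreadsheet appendix to the proposal.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class ValueInput:
    name: str
    value: float
    source: str     # where the number came from
    verified: bool  # True only once checked against client-owned data


def split_log(entries: List[ValueInput]) -> Tuple[List[ValueInput], List[ValueInput]]:
    """Return (verified inputs, assumptions still to be tested)."""
    verified = [e for e in entries if e.verified]
    to_test = [e for e in entries if not e.verified]
    return verified, to_test


log = [
    ValueInput("annual_orders", 100_000, "client order export", verified=True),
    ValueInput("average_order_value", 50.0, "client finance report", verified=True),
    ValueInput("base_case_uplift", 0.01, "consultant assumption", verified=False),
]
verified, to_test = split_log(log)
for entry in to_test:
    print(f"To validate in this phase: {entry.name} ({entry.source})")
```

The printed list doubles as the scope of the validation phase.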

A common risk is an offline model that looks strong while business value stays unclear once operational constraints or cross-product effects show up. In pricing work, cross-product effects are not a detail. They can break a neat single-product estimate.

Another common mistake is letting the client treat the upside case as the promised result. The fix is simple: anchor the proposal around the range, the assumptions log, and the validation steps. If the client later wants the larger implementation, you already have a shared record of what was known, what was uncertain, and why the pricing structure was chosen.

Once the outcome and value logic are clear enough to test, the next decision is how much uncertainty you and the client are each taking on.

| Pre-sprint checkpoint | Required to exit phase |
|---|---|
| Data access | Have data access |
| Baseline KPI | Have a baseline KPI |
| Feasible method | Have a feasible method |
| Go or no-go review | Have a signed go or no-go review |
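These exit criteria can be written as an explicit gate. In this sketch a signed no-go ends the engagement cleanly, and a larger quote is unlocked only when all four checkpoints are met with a signed "go". The class and function names are hypothetical; this illustrates the logic rather than any particular tool.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PreSprintStatus:
    data_access: bool = False
    baseline_kpi: bool = False
    feasible_method: bool = False
    review_decision: Optional[str] = None  # "go" or "no-go" once signed; None before


def blocking_items(status: PreSprintStatus) -> List[str]:
    """Checkpoints that still block moving past the pre-sprint."""
    gaps = [
        name
        for name in ("data_access", "baseline_kpi", "feasible_method")
        if not getattr(status, name)
    ]
    if status.review_decision is None:
        gaps.append("signed_go_or_no_go_review")
    return gaps


def next_step(status: PreSprintStatus) -> str:
    if status.review_decision == "no-go":
        return "stop"  # a signed no-go is a valid, paid outcome
    if blocking_items(status):
        return "continue pre-sprint"
    return "quote next phase"


status = PreSprintStatus(data_access=True, baseline_kpi=True, feasible_method=False)
print(blocking_items(status))  # ['feasible_method', 'signed_go_or_no_go_review']
print(next_step(status))       # continue pre-sprint
```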

Step 4. Pick the fee model based on what is known today. You do not need one pricing model forever. It is often better to combine them.

| Pricing structure | Use it when | Main risk |
|---|---|---|
| Hourly or T&M | The task is genuinely open-ended and the client accepts spend uncertainty | Hard to budget, easy to question efficiency |
| Fixed-scope | Deliverables are clear, dependencies are known, and acceptance is definable | You absorb scope drift if terms are loose |
| Value-based | The business KPI is measurable and both sides accept assumption-based pricing | Disputes if outcome attribution is weak |
| Hybrid | You need proof first, then a larger build if the evidence is good | Confusion if discovery exit criteria are not written down |
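The "Use it when" column can be read as a set of conditions. This sketch, with hypothetical field names, returns every structure whose conditions currently hold. More than one result is normal, since the structures can be combined.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class EngagementFacts:
    open_ended: bool = False
    client_accepts_spend_uncertainty: bool = False
    deliverables_clear: bool = False
    dependencies_known: bool = False
    acceptance_definable: bool = False
    kpi_measurable: bool = False
    assumption_pricing_accepted: bool = False
    proof_needed_first: bool = False


def eligible_structures(f: EngagementFacts) -> List[str]:
    """Map the table's 'Use it when' conditions to candidate fee structures."""
    options = []
    if f.open_ended and f.client_accepts_spend_uncertainty:
        options.append("hourly or T&M")
    if f.deliverables_clear and f.dependencies_known and f.acceptance_definable:
        options.append("fixed-scope")
    if f.kpi_measurable and f.assumption_pricing_accepted:
        options.append("value-based")
    if f.proof_needed_first:
        options.append("hybrid")
    return options


print(eligible_structures(EngagementFacts(proof_needed_first=True, kpi_measurable=True)))
# ['hybrid']
```

The KPI being measurable is not enough for value-based pricing on its own here; both sides also have to accept assumption-based pricing.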

For most strategic pricing engagements, the practical path is a hybrid. Start with an X-week pre-sprint for EDA and feasibility. Exit that phase only if you have data access, a baseline KPI, a feasible method, and a signed go or no-go review. If it passes, quote the deployment as fixed-scope, value-linked, or a blend. Put an X-month time box on the main effort so spend does not drift indefinitely.

Treat that pre-sprint as a real commercial stage, not an informal warm-up. Write down what the client will get at the end of it. Include the baseline check, the confirmed scope, the data findings, the main assumptions, the recommended next phase, and the conditions required to continue. If the answer is no-go, that result is still valuable. It tells both sides not to spend more money pretending the project is ready when it is not.

Write change-order triggers into the proposal. Typical triggers are new data sources, added integrations, extra experiments, support for more business units, or additional review work that was not in the original scope.

This is also where many consultants underprice without realizing it. They quote the model build and forget the approval cycles, data clarification, stakeholder review, experiment planning, and revision rounds around the actual recommendation. If those steps are necessary for the client to use the work, scope, schedule, and price them instead of absorbing them silently.
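To make change-order triggers checkable rather than arguable, one option is to record baseline quantities in the statement of work and compare each new request against them. The categories and counts below are hypothetical examples based on the triggers listed earlier; anything missing from the baseline is treated as out of scope.

```python
from typing import Dict

# Hypothetical baseline quantities written into the statement of work.
SOW_BASELINE: Dict[str, int] = {
    "data_sources": 3,
    "integrations": 1,
    "experiments": 2,
    "business_units": 1,
    "review_rounds": 2,
}


def change_order_items(requested: Dict[str, int], baseline: Dict[str, int] = SOW_BASELINE) -> Dict[str, int]:
    """Return how far each requested item exceeds the agreed baseline.

    Items missing from the baseline count as fully out of scope.
    """
    overages = {}
    for item, count in requested.items():
        allowed = baseline.get(item, 0)
        if count > allowed:
            overages[item] = count - allowed
    return overages


requested = {"data_sources": 4, "experiments": 2, "review_rounds": 3, "dashboards": 1}
print(change_order_items(requested))
# {'data_sources': 1, 'review_rounds': 1, 'dashboards': 1}
```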

A good fee structure still fails if the proposal leaves ownership, approvals, or payment timing vague.

Step 5. Document assumptions, responsibilities, and payment protections. Use this checklist before you send a number:

| Proposal area | Include |
|---|---|
| Statement of work terms | Deliverables, acceptance checkpoints, out-of-scope items, change-order triggers, and the time box |
| Data-access dependencies | Exact datasets, owners, access method, refresh frequency, and what happens if data is late, incomplete, or unusable |
| Decision responsibilities | Who approves experiments, who owns production decisions, and who signs off on major updates |
| Payment terms | Payment tied to clearly defined project checkpoints rather than vague future outcomes; if an approval is delayed, define whether the timeline pauses |
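Checkpoint-based payment terms and the "does the timeline pause" question can be made concrete with a small schedule. This sketch assumes each milestone has a fee and an agreed approval date, that only approved milestones are invoiceable, and that each day of late approval extends the schedule by one day. The names, dates, and amounts are hypothetical, and overlapping delays are simply added together.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Milestone:
    name: str
    fee: float
    approval_due: date                 # date the client agreed to review by
    approved_on: Optional[date] = None


def invoiceable_total(milestones: List[Milestone]) -> float:
    """Fees for milestones the client has accepted."""
    return sum(m.fee for m in milestones if m.approved_on is not None)


def pause_days(milestones: List[Milestone], today: date) -> int:
    """Days the schedule pauses because approvals ran past their due dates."""
    total = 0
    for m in milestones:
        end = m.approved_on or today  # pending approvals accrue delay until today
        if end > m.approval_due:
            total += (end - m.approval_due).days
    return total


plan = [
    Milestone("Feasibility memo accepted", 8000.0, date(2024, 3, 1), date(2024, 3, 4)),
    Milestone("Recommendation set accepted", 12000.0, date(2024, 4, 1)),
]
print(invoiceable_total(plan))                   # 8000.0
print(pause_days(plan, today=date(2024, 4, 5)))  # 7
```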

Make these items easy to scan. A proposal usually works better when assumptions, dependencies, and approval points are visible rather than buried in paragraphs. If a stakeholder can read the document and still not tell who is responsible for data access or experiment approval, you have left room for delay.

It also helps to make acceptance criteria concrete enough that a non-technical approver can use them. "Model delivered" is vague. "Feasibility memo delivered," "recommendation set delivered," or "go or no-go review completed" is much easier to approve and work against. The more operational your acceptance language is, the less likely you are to end up with work completed but payment stalled because no one knows what counts as finished.

That last point matters more than most people expect. A strong proposal does not just justify your fee. It protects your cash flow when the project is useful but the client is slow, undecided, or still figuring out what they actually want.


The practical shift is simple: price the decision, not just the labor, and write down who owns the risk. That is how you price a data science project without making payment depend on vague promises.

Step 1. Tie model performance to a business KPI. Start in CRISP-DM's Business Understanding phase, where the job is to define business objectives and success criteria from the customer side. If the client cannot name the KPI owner, baseline, and decision that will change, do not jump to a full proposal. Your first verification point should be a written project plan with phase-level plans. That gives you a concrete approval point instead of a fuzzy "the model looks good."

Step 2. Match the pricing structure to uncertainty. If data access, data quality, or decision rules are still unclear, sell a paid validation phase before any larger fixed-scope or value-linked work. That protects your payment checkpoints because you can bill for verified work such as data review, assumptions testing, and planning. It can also reduce scope disputes, especially if you document which datasets are in scope, which are excluded, and any quality issues found during Data Understanding. Remember the common failure mode here: rush past early scoping, then get hit by garbage-in, garbage-out later.

Step 3. Put approval paths and risk ownership in writing. Name the approver for deliverables, the owner of data dependencies, and who signs off on testing, pricing changes, or governance questions. The stronger that path is, the less likely your work stalls between "analysis delivered" and "client approved."

Before you send a proposal, check for five items:

If those five items are solid, your pricing conversation usually gets easier because the client is no longer reacting to a number in isolation. They can see the decision being bought, the conditions behind the fee, and the points where both sides will verify progress.


Start the scoping call in the CRISP-DM Business Understanding phase and ask what decision will change, who owns the KPI, where the baseline lives, and how you will access the data. If any of that is unclear, do not quote the full engagement. Propose a paid validation phase first, with business success criteria, data quality verification, and a written project plan. Then choose the fee model: time and materials for open-ended unknowns, fixed scope when deliverables and acceptance are clear, and value-linked pricing only when assumptions and attribution rules are written into the proposal. Send a short written summary after the call; it helps you catch moving scope before you send a number. If you still need to set your internal floor, calculate it separately with How to Calculate Your Billable Rate as a Freelancer, then shape the client-facing fee around the value logic and written assumptions.

Include the business outcome, phase-by-phase deliverables, acceptance checkpoints, named approvers, data dependencies, risks and contingencies, and change-order triggers. Add a simple data appendix that lists which datasets are in scope, which are excluded, and why, because CRISP-DM treats those inclusion and exclusion decisions as a concrete project artifact. Also state what happens if data is late, unusable, or incomplete, and if the project is staged, say what must be true to move from discovery into a larger build and who has authority to approve that move.
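The data appendix can be generated from a simple list so that every exclusion carries a reason. The dataset names and owners, and the rule that an exclusion without a reason is an error, are illustrative assumptions for this sketch.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class DatasetEntry:
    name: str
    owner: str
    in_scope: bool
    reason: str  # why it is included or excluded


def render_data_appendix(entries: List[DatasetEntry]) -> str:
    """Render a plain-text appendix; refuse exclusions without a stated reason."""
    rows = ["Dataset | Owner | Status | Reason"]
    for e in entries:
        if not e.in_scope and not e.reason.strip():
            raise ValueError(f"Excluded dataset '{e.name}' needs a reason")
        status = "in scope" if e.in_scope else "excluded"
        rows.append(f"{e.name} | {e.owner} | {status} | {e.reason}")
    return "\n".join(rows)


appendix = render_data_appendix([
    DatasetEntry("daily_sales", "Sales ops", True, "Needed for the demand baseline"),
    DatasetEntry("loyalty_events", "CRM team", False, "Access not approved in this phase"),
])
print(appendix)
```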

Build a value hypothesis, not a promise. On the call, ask for the current baseline, the decision cadence, and the business owner who will validate whether a recommendation would actually change pricing behavior. In the proposal, show conservative, base, and upside cases, and mark any external benchmark or margin assumption as pending until you have verified it against current source evidence. Price the first phase around validating assumptions and data quality, not around a guaranteed return, and say clearly what the first phase will not prove.

The biggest risks are often not model error alone. They are unclear business objectives, poor data quality, incomplete data, and unmanaged changes to scope or pricing logic. Ask who owns the business objective, who can verify data quality, who approves tests, and who owns change-control once recommendations start affecting live decisions. If the client cannot name those stakeholders, keep the engagement at analysis or recommendation level and do not take deployment accountability.

Prediction focuses on estimating what is likely to happen. Optimization goes a step further and supports what the client should do with that information. In the retailer case, the work was not only about forecasting demand; it also had to support pricing decisions and account for cross-product price effects. Ask this directly on the scoping call: “Do you want a forecast, a recommendation, or a decision-support tool?” That answer changes your scope, validation method, sign-off path, and pricing, because a decision-support tool usually needs clearer approval paths than a model-only deliverable. If the client is unsure, that usually points back to discovery, so the first paid step should clarify the target state before you quote a larger fixed-scope build.
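The difference between a forecast and a recommendation can be shown in a few lines. This is a toy sketch: it assumes a hypothetical linear demand curve for a single product and a fixed unit cost, and it ignores the cross-product price effects the retailer case had to handle. The first function predicts; the second optimizes by choosing the candidate price with the highest forecast gross profit.

```python
from typing import Sequence


def forecast_units(price: float, intercept: float = 1000.0, slope: float = 40.0) -> float:
    """Prediction: hypothetical linear demand, units = intercept - slope * price."""
    return max(0.0, intercept - slope * price)


def recommend_price(candidates: Sequence[float], unit_cost: float) -> float:
    """Optimization: pick the candidate price with the highest forecast gross profit."""
    return max(candidates, key=lambda p: (p - unit_cost) * forecast_units(p))


prices = [15.0, 16.0, 17.5, 19.0, 20.0]
print(forecast_units(17.5))                      # 300.0
print(recommend_price(prices, unit_cost=10.0))   # 17.5
```

A forecast-only deliverable stops at `forecast_units`; a decision-support tool also owns the choice in `recommend_price`, which is why it needs a clearer sign-off path.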

A career software developer and AI consultant, Kenji writes about the cutting edge of technology for freelancers. He explores new tools, in-demand skills, and the future of independent work in tech.

With a Ph.D. in Economics and over 15 years of experience in cross-border tax advisory, Alistair specializes in demystifying cross-border tax law for independent professionals. He focuses on risk mitigation and long-term financial planning.


Educational content only. Not legal, tax, or financial advice.
