Start with a structured ARC cycle: lock your book details, send neutral honest-review requests, and track each reviewer by source and posting status on Amazon and Goodreads. For most first-time structured campaigns, a platform-first lane such as BookSirens or Booksprout reduces setup friction, while direct outreach can be layered in once follow-up is stable. The key is disciplined checkpoints, not mass outreach.

If you want to get book reviews without creating avoidable risk, treat review collection like a repeatable process, not a launch-week scramble. The practical foundation is a clean Advance Reader Copy (ARC) process, a clear channel plan across Amazon, Goodreads, and BookBub, and disciplined tracking from first contact to posted review.

Many review problems are operational. Authors send too many requests, use vague wording, mix channels, and lose track of who received what and where they planned to post. A better approach is smaller, documented batches with clear asks and channel-specific tracking from day one.

By the time you start recruiting, you should be able to verify three things. Your ARC package is the current version. Your outreach asks for an honest review. Your tracker can tell you who accepted, where they came from, and whether a review is live. That is what makes the process reusable instead of stressful.

Step 1. Lock the book details you will use everywhere. Before outreach starts, confirm the title and author name exactly as they will appear on every listing. If your subtitle, pen name, or edition details are still moving, ARC outreach gets messy fast because readers cannot reliably find or match the right listing later. Your first checkpoint is simple: the title, author name, cover, and ARC file version should be settled enough that every request points to the same book.

Step 2. Build your timing around publication date, not hope. Set your process by working backward from launch. Decide when ARCs go out, when you stop accepting new reviewers, and when reminders begin. Do not assume every review will land during launch week. Reviews of popular books are typically published close to publication dates, while scholarly review outlets can take months. That is fine if you want long-tail coverage, but not if you are counting on immediate social proof.

Step 3. Choose review sources by purpose. ARC readers are a practical way to collect reader reviews across Amazon, Goodreads, and BookBub. If you want help managing readers, BookSirens positions itself around reader vetting, reminder sending, and daily review tracking. It also markets a community of 51,000+ reviewers and influencers. That does not guarantee results, but it does show the kind of operational help some platforms are built to provide. Keep that separate from paid editorial review services such as Clarion or magazine consideration through Foreword. Those can be useful assets, but they are not the same as customer platform reviews.
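The backward scheduling described in Step 2 can be sketched in a few lines of Python. This is a minimal illustration, not a recommended timeline: the function name `arc_schedule` and the default offsets (42 days, 21 days, reminders starting on launch day) are assumptions chosen only to show the arithmetic, so replace them with your own dates.

```python
from datetime import date, timedelta

def arc_schedule(publication_date, send_days_before=42,
                 close_days_before=21, reminder_days_after_launch=0):
    """Work backward from the publication date to the dates named in Step 2.

    All offsets are hypothetical defaults, not recommendations.
    """
    return {
        "arcs_go_out": publication_date - timedelta(days=send_days_before),
        "stop_accepting_reviewers": publication_date - timedelta(days=close_days_before),
        "reminders_begin": publication_date + timedelta(days=reminder_days_after_launch),
        "publication": publication_date,
    }

# Example with a made-up publication date.
schedule = arc_schedule(date(2025, 9, 16))
for milestone, day in schedule.items():
    print(f"{milestone}: {day.isoformat()}")
```

Writing the dates down this way makes it obvious when a late change to the publication date should move every other checkpoint with it.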

Set the rules before outreach starts: define success by channel, use one honest-review script, and log every contact so the process is explainable.

Step 1. Separate your goals by channel. Split your plan into two lanes: Amazon for credibility signals, Goodreads for discovery and reader conversation. Write one clear success statement for each lane so you are not chasing the same outcome in both places.

Step 2. Lock in honest-review wording. Use the same plain-language ask in every email, DM, and ARC note: you are offering a review copy and requesting an honest review if the reader chooses to leave one. Keep the wording neutral and consistent so every message follows the same rules and is easy to explain later.

Step 3. Choose scope before launch pressure. Decide upfront whether this is a launch-only push or a rolling process that also includes backlist titles on Amazon and BookBub. A fixed scope keeps follow-up, ownership, and expectations consistent.

Step 4. Write a one-page policy note. Document what you will not do, who can contact reviewers, which channels you will use, and how outreach is logged. Keep the log audit-ready with simple fields such as date sent, sender, title, platform, and follow-up owner.
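If you keep the outreach log in a spreadsheet or CSV file, the fields from Step 4 map directly onto columns. The sketch below is one possible shape, assuming an in-memory list that you export to CSV. The names `LOG_FIELDS`, `log_contact`, and `export_log`, and the sample entry, are hypothetical.

```python
import csv
import io

# Column names taken from the fields listed in Step 4.
LOG_FIELDS = ["date_sent", "sender", "title", "platform", "follow_up_owner"]

def log_contact(log, **entry):
    """Append one contact to the log, rejecting entries with missing fields."""
    missing = [field for field in LOG_FIELDS if not entry.get(field)]
    if missing:
        raise ValueError(f"missing log fields: {', '.join(missing)}")
    log.append({field: entry[field] for field in LOG_FIELDS})

def export_log(log):
    """Return the whole log as CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=LOG_FIELDS)
    writer.writeheader()
    writer.writerows(log)
    return buffer.getvalue()

outreach_log = []
log_contact(outreach_log, date_sent="2025-08-05", sender="author",
            title="Example Title", platform="Goodreads", follow_up_owner="author")
print(export_log(outreach_log))
```

Rejecting incomplete entries at the moment of logging is a design choice: it is easier than repairing gaps when someone later asks who contacted a reviewer and when.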

Before you invite anyone, make your ARC workflow execution-ready: clean package, clear templates, reliable tracker, and launch timing with a fallback.

Step 1. Build a clean Advance Reader Copy package. Send a version that is final enough to review, with the manuscript file, cover, blurb, genre positioning, and concise content notes so readers on BookSirens or Booksprout know what they are accepting. The blurb carries real weight in ARC acceptance, so treat it as a decision asset, not filler. Run one checkpoint before outreach: review the exact file and metadata as a reader would, and fix any mismatch between promise and delivery.

Step 2. Write two separate review request templates. Use one template for Amazon and one for Goodreads, each with plain honest-review wording. Keep the ask simple: the reader received a free ARC and may leave an honest review if they choose. Remove any incentive, pressure, or outcome language, and keep saved copies of the exact templates you use.

Step 3. Set up your reviewer tracker before the first invite. Track BookSirens, Booksprout, and direct outreach in one place, with source channel, accept/decline, review status, and follow-up dates. A tracker is only useful if every accepted reviewer has one current status and one next action date. This prevents duplicate follow-ups and platform confusion.

Step 4. Lock launch logistics and fallback now. Set publication timing, ARC close date, and reminder timing before outreach starts. If Amazon or Goodreads review velocity is slower than expected, use your fallback plan rather than changing your standards mid-launch. A practical fallback is to keep building your ARC team while adding free asks through channels you already control.
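The tracker rule from Step 3 (one row per reviewer, and a next action date for everyone who accepted) can be checked mechanically. The sketch below assumes a simple row structure; the class `Reviewer`, the function `tracker_problems`, and the sample names are hypothetical and only illustrate the check.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass
class Reviewer:
    name: str
    source: str                       # e.g. "BookSirens", "Booksprout", "direct"
    accepted: bool                    # accept/decline
    review_status: str                # e.g. "pending", "posted", "silent"
    next_action: Optional[date] = None

def tracker_problems(rows):
    """List rows that break the rule: one row per person, and a next
    action date for every accepted reviewer."""
    problems = []
    seen = set()
    for row in rows:
        if row.name in seen:
            problems.append(f"{row.name}: appears in more than one row")
        seen.add(row.name)
        if row.accepted and row.next_action is None:
            problems.append(f"{row.name}: accepted but has no next action date")
    return problems

rows = [
    Reviewer("Ana", "BookSirens", True, "pending", date(2025, 9, 9)),
    Reviewer("Ben", "direct", True, "pending"),
    Reviewer("Ana", "Booksprout", True, "posted", date(2025, 9, 20)),
]
for problem in tracker_problems(rows):
    print(problem)
```

Running a check like this before each reminder batch is one way to catch duplicate follow-ups before they reach a reader.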


Pick the channel mix you can run consistently without losing control of follow-up. If you need faster setup and less admin, start platform-first with BookSirens or Booksprout. If reviewer fit and long-term relationships matter most, lead with direct outreach through bloggers, genre communities, and Reddit communities such as r/selfpublish.

A combined approach is often practical because each lane solves a different problem: platforms help with process speed, while direct outreach can improve fit.

| Model | Best when | Setup effort | Reviewer fit | Tracking visibility | Post-launch reusability |
|---|---|---|---|---|---|
| Platform-first | You need quick setup and process support | Lower than manual outreach | Depends on clear blurb, genre, and content notes | Easier to monitor in one place, then mirror to your tracker | Moderate if you keep strong reviewers in your ARC team |
| Outreach-first | You can recruit from niche communities and bloggers | Higher: outreach, qualification, follow-up, tracking | Often stronger when niche targeting is tight | Only as good as your tracker discipline | High if relationships continue into future Amazon and Goodreads launches |
| Hybrid | You want speed plus tighter fit | Highest at the start because you run two lanes | Often strongest overall if overlap is managed | Good only with clean source labels from day one | Highest when you build both repeatable platform flow and direct reviewer relationships |

Use a realistic operating window. A focused push toward an early milestone such as 25 reviews is often described as achievable in about 4 to 8 weeks, but results vary by genre and author.

Before you choose, score each channel against the same criteria.

| Criterion | What to verify |
|---|---|
| Setup effort | Listing setup, reviewer vetting, ARC delivery, reminders |
| Reviewer fit | Genre match and likely posting behavior on Amazon, Goodreads, or both |
| Tracking visibility | How easily you can see accepted, pending, posted, and dropped reviewers |
| Post-launch reusability | Whether the channel helps you build a reusable ARC team |

Use one verification rule: each channel must map to a distinct source label in your tracker (BookSirens, Booksprout, blogger, Reddit, r/selfpublish). If you cannot sort by source, you cannot tell which lane is actually producing reviews.
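Sorting by source is simple once every row carries a label. The sketch below counts posted reviews per source and refuses to run if any row is unlabeled. The function name `posted_by_source`, the `(source, status)` row shape, and the sample rows are assumptions for illustration.

```python
from collections import Counter

def posted_by_source(rows):
    """Count posted reviews per source label.

    rows is a list of (source, status) pairs. An empty source label is
    treated as an error, because unlabeled rows cannot be attributed.
    """
    if any(not source for source, _ in rows):
        raise ValueError("every tracker row needs a source label")
    return Counter(source for source, status in rows if status == "posted")

rows = [
    ("BookSirens", "posted"),
    ("BookSirens", "pending"),
    ("blogger", "posted"),
    ("BookSirens", "posted"),
]
print(posted_by_source(rows))
```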

The tradeoff is straightforward: platform convenience can reduce admin time; manual outreach can improve fit but adds coordination load.

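A practical starting rule: begin platform-first if you need structure and do not yet have a reliable reviewer pool, and lead with direct outreach only if you already have a strong reader network.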

Avoid expanding your mix into paid or incentivized review offers. Some vendors advertise packages starting at $449 and list higher tiers like $1,706, and incentivized reviews carry documented ethical and downside risk. Keep the system trackable and defensible.

Build for fit first: a reliable ARC team is a smaller group of genre-matched readers you can manage well. The goal of an ARC is preparation and early honest-review momentum, not sales.

Recruit for fit, not volume. Prioritize readers who already read your genre and actively post honest reviews on Amazon, Goodreads, or both. Better-fit readers are more likely to give useful feedback and publish thoughtful reviews.

Before you approve anyone, log two basics in your tracker: genre fit and posting behavior. If those are unclear, treat that as a risk and keep screening.

Set expectations at acceptance, not at the last minute. Explain what an ARC is, when you hope they can finish, and where they can leave an honest review. If they are not ready to post publicly, tell them what feedback is most helpful.

Keep the tone respectful and clear: ask for honesty, not positivity, and avoid pressure language.

Segment your list so follow-up stays organized. A simple split like new reviewers, proven reviewers, and non-responders is enough if each person has a clear status and next action in your tracker.

The point is operational clarity: do not manage everyone the same way or let accepted readers disappear into one generic "ARC sent" bucket.

Protect the relationship as carefully as the review count. ARC members are volunteers, so thank them when they join, thank them when they post, and update them if launch details change.

Respectful communication makes it more likely that your team stays willing to review on Amazon and Goodreads beyond a single launch.


Once your ARC team is segmented, switch from recruiting to execution. Treat launch as a phased workflow with clear checks at each stage.

| Phase | Operational focus | Verification checkpoint |
|---|---|---|
| Pre-launch | Confirm delivery and instructions for every accepted ARC reader | Click every link in your instructions and tracker |
| Launch window | Run reminders and check review activity every day | Keep statuses separate: posted, promised but not posted, and silent |
| Post-launch | Send a short thank-you, run a final reminder cycle, and transition the right readers into your ongoing ARC team | Confirm tracker status matches live platform visibility or direct reader confirmation |

Start pre-launch by confirming delivery and instructions for every accepted ARC reader. If you use BookSirens or Booksprout, verify each reader received the ARC and knows exactly where to post an honest review: Amazon, Goodreads, or both.

If your review pages are not live yet, BookSirens lets you add Amazon and Goodreads URLs later. Before reminders go out, click every link in your instructions and tracker so readers do not land on the wrong edition, a blank page, or a broken URL.

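Run one compliance pass on your message copy before you send anything: confirm that it asks for an honest review and contains no incentive, pressure, or outcome language.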

Run the launch window as daily monitoring, not wait-and-hope. Trigger your reminder sequence when the book is live, keep reminders friendly, and check review activity every day.

Use tracking visibility to keep statuses separate: posted, promised but not posted, and silent. This avoids mixing active reviewers with misses and keeps your next launch list clean.

Reconcile tracker status against live platform outcomes each day. Mark complete only when the review is visible or directly confirmed by the reader.
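The daily reconciliation rule can be written as a small function. This is a sketch under stated assumptions: the function name `reconcile` is hypothetical, and downgrading an unverified "posted" entry to "promised but not posted" is one reasonable reading of the rule, not a requirement from any platform.

```python
def reconcile(tracker_status, review_visible, reader_confirmed):
    """Return the status a tracker row should carry after a daily check.

    A row counts as posted only when the review is visible on the
    platform or the reader has confirmed it directly.
    """
    if review_visible or reader_confirmed:
        return "posted"
    if tracker_status == "posted":
        # Assumption: a "posted" entry with no evidence is downgraded.
        return "promised but not posted"
    return tracker_status

print(reconcile("promised but not posted", review_visible=True, reader_confirmed=False))
print(reconcile("posted", review_visible=False, reader_confirmed=False))
print(reconcile("silent", review_visible=False, reader_confirmed=False))
```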

In post-launch, keep follow-up light, then transition the right readers into your ongoing ARC team. Send a short thank-you, run a final reminder cycle, and record outcomes clearly without pressure.

Booksprout supports this cleanup directly: remove non-reviewers and invite helpful reviewers to future ARC campaigns. Promote based on behavior, not intent.

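Before closing the cycle, rerun the verification checkpoints from each phase: every link works, statuses are kept separate, and tracker status matches live platform visibility or direct reader confirmation.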

The operating rule is simple: be strict with tracking and gentle with people. Friendly reminders improve follow-through, and relationship-safe follow-up keeps your ARC team usable for the next release.


After launch, use your tracker as a decision record: track only what changes your next action.

Track three process metrics in your ARC sheet: acceptance rate by channel, completion rate by reviewer segment, and time-to-review by platform. Keep status labels separate (submitted, pending, live) so the counts stay usable.

Your checkpoint is simple: every metric should tie back to named tracker rows or direct reviewer confirmation, not memory.
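The three process metrics can be computed directly from tracker rows, which keeps them tied to named records. The sketch below assumes each row is a dictionary with the keys shown, and that time-to-review is measured from the date the ARC was sent to the date the review went live. The row layout, function names, and sample data are all hypothetical.

```python
from collections import defaultdict
from datetime import date
from statistics import median

# Hypothetical tracker rows; "live" is None until a review is visible.
rows = [
    {"channel": "BookSirens", "segment": "new", "platform": "Amazon",
     "accepted": True, "sent": date(2025, 8, 5), "live": date(2025, 9, 18)},
    {"channel": "BookSirens", "segment": "new", "platform": "Goodreads",
     "accepted": True, "sent": date(2025, 8, 5), "live": None},
    {"channel": "direct", "segment": "proven", "platform": "Amazon",
     "accepted": True, "sent": date(2025, 8, 10), "live": date(2025, 9, 17)},
    {"channel": "direct", "segment": "new", "platform": "Amazon",
     "accepted": False, "sent": date(2025, 8, 10), "live": None},
]

def rate_by(rows, group_key, hit):
    """Share of rows in each group for which hit(row) is true."""
    totals, hits = defaultdict(int), defaultdict(int)
    for row in rows:
        totals[row[group_key]] += 1
        if hit(row):
            hits[row[group_key]] += 1
    return {group: hits[group] / totals[group] for group in totals}

def median_days_to_review(rows):
    """Median days from ARC sent to review live, per platform."""
    days = defaultdict(list)
    for row in rows:
        if row["live"] is not None:
            days[row["platform"]].append((row["live"] - row["sent"]).days)
    return {platform: median(values) for platform, values in days.items()}

accepted_rows = [row for row in rows if row["accepted"]]
acceptance_by_channel = rate_by(rows, "channel", lambda r: r["accepted"])
completion_by_segment = rate_by(accepted_rows, "segment", lambda r: r["live"] is not None)
days_by_platform = median_days_to_review(rows)

print(acceptance_by_channel)
print(completion_by_segment)
print(days_by_platform)
```

Completion is calculated only over reviewers who accepted, so declined invitations do not drag the rate down. A platform with no live reviews is simply absent from the time-to-review result.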

Run one channel review each month for BookSirens, Booksprout, and direct outreach, then make one decision per channel: scale, pause, or redesign.

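Use the same questions each cycle: what was the acceptance rate for this channel, what share of accepted readers completed a review, and how long did reviews take to go live?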

End with one documented decision per channel so the next launch starts cleaner.

Interpret credibility outcomes and conversion outcomes separately so you do not overread short-term Amazon or Goodreads activity. Review count, review freshness, and platform presence are credibility signals. Clicks or sales are conversion signals and need different evidence.

If credibility improves but conversion does not, adjust the broader launch system before discarding the review process.

Document one change per cycle in your standard operating procedure (SOP). Keep it concrete: one instruction update, one tracker field change, one reminder timing change, or one ARC team rule.

Checkpoint: update the SOP from observed evidence, then use that update in the next launch. This is how the process improves without relying on memory.

If results stall, tighten the process before you add more volume: clean up qualification, clarify instructions, handle inactive participants consistently, and centralize records before you relaunch.

| Mistake | What it causes | Recovery |
|---|---|---|
| Treating distribution like a blast campaign | Outreach gets too broad | Pause and restart with a smaller, better-qualified cohort; use one clear tracker row per person with source, fit notes, acceptance date, and intended posting destination |
| Using vague asks that create posting confusion | Avoidable friction | Send a corrected message with explicit posting instructions and destination links; log the version and send date |
| Having no rule for non-reviewers | Completion data becomes unreliable | Define clear statuses and a simple removal-or-retry rule before the next cycle |
| Chasing volume without an audit trail | Decisions break down unless records are centralized | Keep one operating log with contact identity, channel, dates, follow-ups, and outcome |

If outreach gets too broad, pause and restart with a smaller, better-qualified cohort. Use one clear tracker row per person with source, fit notes, acceptance date, and intended posting destination so decisions stay evidence-based.

A generic "please leave a review" request often creates avoidable friction. Send a corrected message with explicit posting instructions and destination links, then log the version and send date so you can evaluate what changed.

If accepted participants can sit in limbo, your completion data becomes unreliable. Define clear statuses and a simple removal-or-retry rule before the next cycle so follow-up stays fair and consistent.

When outreach happens across multiple channels, decisions break down unless records are centralized. Keep one operating log with contact identity, channel, dates, follow-ups, and outcome so you can explain any result quickly and avoid duplicated outreach.

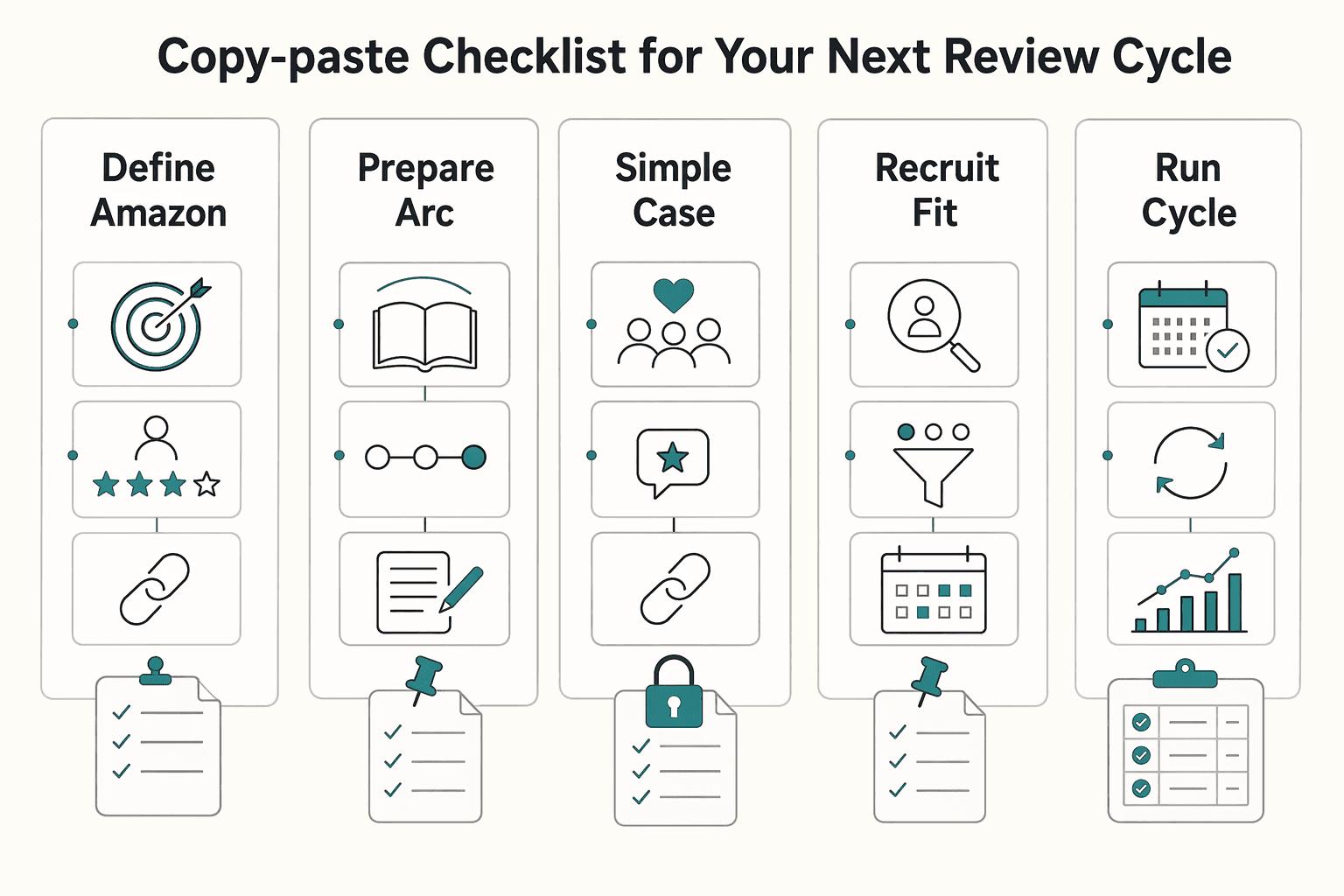

Use the same written checklist for every launch cycle. It will not guarantee review volume, but it gives you a repeatable process you can run, audit, and improve.

Set one clear goal for each platform before outreach. Keep your ask consistent: request an honest review and make posting instructions explicit for each platform. Checkpoint: your Amazon and Goodreads templates use the same honest-review language and clear destination links.

Your Advance Reader Copy (ARC) and outreach materials should be ready before you contact reviewers. Build a single tracker with source, accept/decline status, target platform, review status, and follow-up notes. Checkpoint: test the exact message flow yourself, including file access and every link.

Compare BookSirens, Booksprout, and direct outreach on reviewer fit, setup effort, and tracking visibility. No single strategy works for every author or book, so choose the model that best fits this title and your current capacity. Checkpoint: pick one primary channel; add hybrid only if your tracking remains clean.

Your ARC team is simply the list of people who sign up to receive an ARC copy. Prioritize fit over raw volume, and avoid requesting reviews outside a reviewer's target genres. Checkpoint: every accepted reviewer is tagged by source, platform target, and current status.

Pre-launch, confirm delivery and posting instructions. During launch, run reminders and status updates consistently. Post-launch, send a light final follow-up and close the cycle cleanly. Red flag: repeated nudges after silence usually hurt relationships more than a clear close.

Check outcomes by channel and segment, then document one process change for the next cycle. Iteration matters more than constantly adding new tactics.


The fastest legitimate route is a focused ARC push with a near-final manuscript and a clear follow-through plan. Speed usually comes from preparation, not volume.

Start with a platform first if you need structure and do not already have a reliable reviewer pool. Booksprout is documented as an affordable option with a built-in reviewer base, which can reduce setup friction. Start with direct outreach first if you already have a strong reader network, but expect more coordination and follow-through work.

Use hybrid when one channel is not covering both reach and fit. A platform can help fill the ARC list, while direct outreach can bring in readers from your own audience. If your process becomes hard to track, use one channel until your follow-through is consistent.

Set expectations at acceptance, then follow up lightly and consistently instead of pushing harder. Keep reminders limited, then close the cycle respectfully when a reader goes quiet. Relationship damage usually comes from repeated nudges, not from clear closure.

Your Advance Reader Copy checklist should include a manuscript that is in the final proofreading stage, or very close to it, plus a clear distribution plan, launch timing, and one tracker for outreach and statuses. The main goal is coordination and follow-through before launch.

Treat credibility and conversion as two different jobs. Credibility means visible, honest reviews on the right pages so a new reader sees proof that the book has been read. Conversion is whether those reviews actually help someone buy, so judge sales impact separately after the launch window has settled.

A former tech COO turned 'Business-of-One' consultant, Marcus is obsessed with efficiency. He writes about optimizing workflows, leveraging technology, and building resilient systems for solo entrepreneurs.


Educational content only. Not legal, tax, or financial advice.
