Assign one accountable owner and one backup reviewer, then run inclusion through concrete documents instead of broad messaging. Update the hiring rubric, interview scorecard, onboarding checklist, collaboration norms, and promotion criteria in that order. Use decision logs and monthly reviews to verify what changed, who owns the next action, and what gets checked again. Keep fairness controls when speed pressures rise, and shorten handoffs elsewhere rather than dropping structure.

Treat this as an operations guide, not a culture memo. The goal is to improve equality of opportunity in remote work through a few clear decisions people can actually follow. DE&I is broader than legal compliance, so the useful question is not whether you published a statement. It is whether people have fair access to information, input, and opportunity in daily work.

This guide is for small, growing teams that need simple actions with visible owners and checkpoints. You do not need a new committee or a thick policy pack. You do need one accountable owner, one backup reviewer, and a short list of documents that get updated when the team grows or when a process breaks.

Start with a basic but revealing check: can you name the owner, the backup, and the current version of your hiring rubric, onboarding checklist, collaboration norms, and promotion criteria? If any of those are missing, your inclusion work is still too abstract. The fastest failure mode is saying inclusion matters while leaving the real decision documents untouched.

By the end of month one, you should have a simple implementation sequence, a lightweight review cadence, and a copy-and-paste checklist you can use right away. The sequence is simple: define what success means for your team, gather the current artifacts, tighten the remote operating rules, then review what changed with evidence rather than impressions.

That order matters. Teams often jump to training or messaging before fixing the places where exclusion shows up early, such as inconsistent interview packets, unclear meeting norms, or promotion decisions that depend on manager visibility. If speed and fairness pull against each other, keep the control that protects fair access and shorten the process somewhere else.

Use a simple month-one verification point. At the end of the first review, you should be able to show three things: which documents changed, who owns the next action, and what you will check again next month. If you cannot show that paper trail, you probably added talk, not discipline.

Keep the scope on the four places that shape an inclusive remote workplace every week: hiring, onboarding, collaboration norms, and promotion decisions.

That focus is intentional. DE&I work is often associated with stronger creativity, productivity, and employee retention, but many institutions still lack inclusive processes and cultures. This guide stays close to the operating layer because that is where gaps tend to appear early.

If you already have company values written down, treat them as background, not proof. What matters is a simpler evidence pack: interview scorecards, onboarding check-ins, written meeting expectations, promotion criteria, and review notes with named owners. When those artifacts are clear, inclusion becomes easier to verify and much harder to turn into bureaucracy.


Define success before you change hiring, meeting, or promotion workflows. In a remote team, success should cover representation, belonging, and equitable access to opportunity in day-to-day decisions.

Treat Diversity, Equity, and Inclusion as connected but distinct:

| Term | Definition |
|---|---|
| Diversity | Who is on the team |
| Inclusion | Whether people can contribute and be heard |
| Equity | Whether access to information, opportunities, and decisions is fair across the team |

If your goal only tracks representation in leadership or hiring, it is incomplete. Add outcomes for belonging and for equitable access to opportunities.

Keep the definition short enough to use in regular reviews. A practical remote baseline covers representation, belonging, and equitable access to opportunity.

This aligns with common DEI measurement areas: representation, belonging, and equity outcomes. Validate progress with observable artifacts like meeting records, promotion decisions, onboarding check-ins, and retention patterns.

Use one rule on every policy change: if it increases speed but reduces equitable access to opportunities for cross-functional teams, revise it before rollout. This is especially important for processes that rely on informal context, fast verbal decisions, or manager visibility.

Include retention in your definition as a signal, not an afterthought. One cited figure suggests that Black employees were 14% more likely to leave when remote work was unavailable, which reinforces that access and flexibility can affect who stays.

Set ownership first, then baseline what already exists. Without clear ownership, DE&I work usually stalls across HR, managers, and founders.

Assign one accountable owner for DE&I operations and one backup reviewer so decisions do not stall. The owner maintains the working plan and timeline; the reviewer pressure-tests blind spots and can step in when needed.

Use a simple check: both names should appear on the review calendar and in the live tracker for open items. If ownership is shared but decision rights are unclear, updates often happen unevenly across hiring, promotion, and collaboration norms.

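Start with current operating documents, not new templates. Pull the materials that already shape day-to-day experience: the hiring rubric, interview scorecard, onboarding checklist, collaboration norms, and promotion criteria.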

Then verify each one is current, accessible to the right people, and actually used in practice.

Build your baseline from your own operating data, not external benchmarks. Create a snapshot of current hiring flow, participation patterns, promotion outcomes, and retention trends using existing counts, notes, and records.

If you run surveys or pulse checks, follow the results with structured conversations. Those discussions can surface barriers that quantitative data misses. Acting on feedback matters, since organizations that do not act on DEIB survey results have been linked to an average 23% drop in trust scores. Before rollout, test each initiative across employee groups to reduce unintended equity gaps.

Set one clear remote operating standard and make it easy to find. Remote work does not create inclusion on its own, so your first control is a shared rule for how people respond, meet, document, and make decisions across locations.

With owners and baseline artifacts in place, turn scattered habits into one visible source of truth. Keep it short enough to use and specific enough to remove guesswork.

Publish one page that covers four items for every team:

| Rule area | Include | Purpose |
|---|---|---|
| Response windows | Urgent vs non-urgent channels | Shared rule for how people respond across locations |
| Meeting etiquette | When cameras are optional | Shared rule for how people meet across locations |
| Documentation minimums | What gets captured after meetings | Shared rule for how people document across locations |
| Decision logs for cross-functional work | Decision, date, owner, brief rationale, and where final decisions live | Progress does not depend on who happened to join a call |

Define where each rule applies and who can approve exceptions. Include practical details teams can follow immediately: urgent vs non-urgent channels, when cameras are optional, what gets captured after meetings, and where final decisions live.

For cross-functional work, the decision log is critical. Each entry should include the decision, date, owner, and brief rationale so progress does not depend on who happened to join a call.
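If your decision log lives in a spreadsheet or a small script, the required fields can be checked automatically. The following is a minimal sketch, not a prescribed tool: the `DecisionLogEntry` class, its field names, and the sample values are all hypothetical and only mirror the fields listed above.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class DecisionLogEntry:
    """One row in a cross-functional decision log (field names are illustrative)."""
    decision: str
    decided_on: date
    owner: str
    rationale: str
    location: str  # link or path to where the final decision lives


def missing_fields(entry: DecisionLogEntry) -> list[str]:
    """Return the names of required text fields that are blank."""
    required = {
        "decision": entry.decision,
        "owner": entry.owner,
        "rationale": entry.rationale,
        "location": entry.location,
    }
    return [name for name, value in required.items() if not value.strip()]


# Illustrative entry with the rationale left out.
entry = DecisionLogEntry(
    decision="Move sprint planning to a written pre-read plus a short call",
    decided_on=date(2025, 3, 4),
    owner="Ops lead",
    rationale="",
    location="wiki/decisions/sprint-planning",
)
print(missing_fields(entry))  # ['rationale']
```

An entry that returns an empty list is complete enough for someone who missed the call to follow the decision.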

Use a simple verification check: a new hire can find this page from onboarding, and two managers describe the same standard when asked.

Make participation procedural, not optional. Rotate facilitators in recurring meetings, use explicit turn-taking for high-stakes topics, and collect written input before live calls.

These routines reduce common remote barriers such as accessibility gaps, unconscious bias, and limited face-to-face interaction. Written input also helps prevent influence from concentrating with the fastest speaker or the person closest to a manager.

Review meeting records after a few cycles. If the same people consistently present, summarize, or receive credit, adjust the routine. Managers should audit visibility patterns, not just attendance.

If a process depends on hallway context, manager proximity, or being online at the right moment, redesign it for async-first collaboration. Promotion discussions, project handoffs, and cross-functional approvals are common failure points.

Use one checkpoint: if a decision cannot be understood without side conversations, the process is not ready. Move key context into a written brief, comment thread, or decision log first, then use meetings to resolve open points.


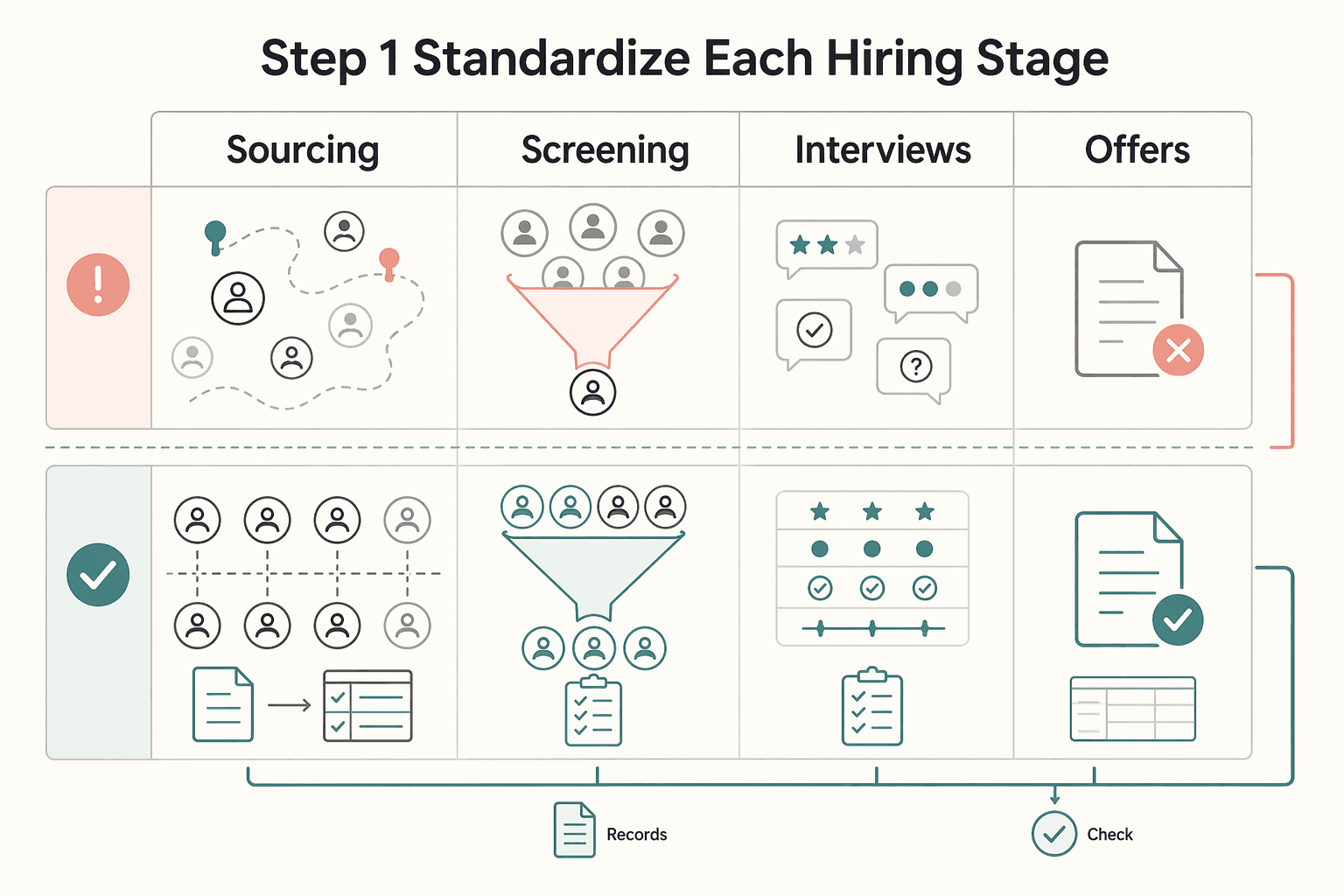

Redesign hiring and onboarding as controlled systems, not manager-specific routines. If either process depends on time zone access, informal context, or interviewer style, exclusion risk is already built in. Keep fairness controls in place, then recover speed elsewhere.

Bias awareness alone is not enough; the process itself has to reduce bias and discrimination. Set clear, objective, structured, transparent standards at each stage so decisions stay fair and explainable.

| Stage | Standard to set | Verification point |
|---|---|---|
| Sourcing | Use one job advert template, define must-have vs trainable criteria, and use a repeatable channel mix | Similar roles should not carry materially different wording or hidden requirements |
| Screening | Apply the same knockout criteria and record notes against those criteria for every candidate | A reviewer should be able to see why one candidate advanced and another did not |
| Interviews | Use a shared packet, fixed competencies, and one score scale across interviewers | Debrief notes should map to criteria, not personal impressions |
| Offers | Use one approval path with a short written rationale tied to agreed criteria | The decision should be explainable without relying on who advocated loudest |

Two controls are often missed: testing job advert wording and checking assessments for validity, reliability, and objectivity. Leaving sourcing or screening unstructured while standardizing interviews still creates an uneven shortlist before interviews begin.

Use one interview packet and one scorecard format for every candidate to prevent drift across interviewer style, time zone, or time slot. The packet should define what is being assessed, what evidence looks like, how to score it, and where notes go.

Include: role criteria, competencies assigned to that interviewer, 3 to 5 structured questions, defined rating levels, and evidence notes. Keep the debrief rule simple: everyone submits notes before score discussion. If a score has no written evidence, treat it as opinion rather than decision-grade input.
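The debrief rule can be applied mechanically before the score discussion starts. This is a rough sketch under stated assumptions: `ScoreEntry`, its fields, and the sample data are hypothetical, and your scorecard tool may store notes differently.

```python
from dataclasses import dataclass


@dataclass
class ScoreEntry:
    """One interviewer's score for one competency (illustrative structure)."""
    interviewer: str
    competency: str
    score: int          # on the team's shared rating scale
    evidence: str = ""  # written notes backing the score


def split_by_evidence(entries):
    """Separate decision-grade scores from opinion (scores with no written evidence)."""
    decision_grade = [e for e in entries if e.evidence.strip()]
    opinion = [e for e in entries if not e.evidence.strip()]
    return decision_grade, opinion


# Illustrative debrief input.
entries = [
    ScoreEntry("Interviewer A", "Written communication", 4,
               "Summarized the tradeoff clearly in the take-home brief"),
    ScoreEntry("Interviewer B", "Stakeholder management", 2),
]
decision_grade, opinion = split_by_evidence(entries)
print([e.interviewer for e in opinion])  # ['Interviewer B']
```

Scores that land in the opinion list go back to the interviewer for notes before they count toward the decision.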

Remote onboarding needs a sequence from day one because it sets early confidence, belonging, and readiness to contribute. Build week one in order: role clarity first, communication norms second, support channels third.

Start with priorities, decision rights, and what good looks like. Then confirm where requests go, expected response windows, meeting etiquette, and where decisions are documented. Next, make support paths explicit: manager, peer support, operations contact, and an async place for basic questions.

Run an inclusion check-in in the first two weeks: does the new hire know how to get help, how decisions are made, and who they can go to beyond their manager? If speed-to-hire conflicts with fairness controls, keep the controls and shorten cycle time elsewhere through scheduling and handoff discipline, not by dropping structured interviews, scorecards, or onboarding check-ins.

For remote collaboration, make written context the default and use live meetings to resolve decisions, not to introduce them. This helps people contribute without depending on time zone overlap, speaking speed, or inside context.

Before scheduling a meeting, check whether async methods can reach the same outcome. If a topic needs background, tradeoffs, or cross-functional input, start with a shared agenda document or decision draft and collect comments first.

When you do schedule a call, include the agenda link in the invite so people can prepare in advance. Send invites with enough lead time: 72 hours is a strong standard, and 24 hours is a minimum. A practical checkpoint is simple: attendees should be able to read one document and understand the decision context before the meeting starts.
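The lead-time standard is simple enough to check in a script or calendar automation. Below is a minimal sketch, assuming both timestamps use the same time zone; the function name and return labels are hypothetical.

```python
from datetime import datetime, timedelta

STRONG_LEAD = timedelta(hours=72)   # strong standard from the guidance above
MINIMUM_LEAD = timedelta(hours=24)  # minimum acceptable lead time


def invite_lead_status(sent_at: datetime, starts_at: datetime,
                       has_agenda_link: bool) -> str:
    """Classify an invite by agenda link and lead time.

    Assumes sent_at and starts_at are in the same time zone.
    """
    if not has_agenda_link:
        return "missing agenda link"
    lead = starts_at - sent_at
    if lead >= STRONG_LEAD:
        return "meets strong standard"
    if lead >= MINIMUM_LEAD:
        return "meets minimum"
    return "too short"


# Illustrative invite sent 48 hours before the meeting.
status = invite_lead_status(
    sent_at=datetime(2025, 3, 3, 9, 0),
    starts_at=datetime(2025, 3, 5, 9, 0),
    has_agenda_link=True,
)
print(status)  # meets minimum
```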

Recurring meetings should have a clear purpose, clear pre-reads, and a defined facilitation owner. If a meeting is no longer helping decisions move, pause it, tighten the agenda, and relaunch with better written preparation.

This keeps collaboration usable across functions and regions instead of rewarding only the people who can always join live with no preparation gap.

Time-zone fairness should be planned, not improvised. For cross-region collaboration, use overlap windows across regions where possible, and delegate facilitation to volunteers whose working hours align with participants.

Use the same inclusion standard for team-building activities: avoid relying on one fixed live slot, rotate time blocks where possible, and offer async ways to participate. The goal is meaningful connection without making after-hours attendance the price of belonging.


Measure DE&I monthly with a small scorecard tied to decisions, then assign owners to fix what the data shows. This is where inclusion moves from intent to accountable operating practice.

Put measurements in place to track progress and hold managers accountable for results. Many teams stall because they are not sure which metrics to collect, so keep your scorecard narrow, stable, and tied to actions you can take next month.

For a small or growing remote team, track four categories: representation in leadership, participation quality, promotion movement, and employee retention.

| Category | What to review monthly | Evidence to pull | Red flag to watch |
|---|---|---|---|
| Representation in leadership | Who holds leadership or lead roles by team and level | Current org chart, team roster, recent role changes | Leadership composition stays frozen while hiring changes elsewhere |
| Participation quality | Who speaks, comments, and gets visible follow-up work | Meeting notes, speaker initials, action-item owners, async comments | The same few people keep carrying visible decisions |
| Promotion movement | Who was considered, promoted, or deferred | Promotion criteria, promotion decision notes, manager justification | Promotions rely on manager narrative without written criteria or examples |
| Employee retention | Who left, who looks at risk, and where drop-off is happening | Retention trend notes, onboarding check-ins, exit or stay notes if you have them | Early exits cluster in one team, manager group, or hire cohort |

Keep each category anchored to a document you already use. If a scorecard item cannot point to an org chart, meeting log, promotion document, or retention note, it is too vague to guide action.

At month-end, require one sentence on the finding, one sentence on the risk, and one named owner for follow-up in each category.
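The month-end requirement can be enforced with a short completeness check before the review is filed. The following is one possible sketch: the dictionary layout, key names, and sample text are assumptions, while the four category names come from the scorecard above.

```python
CATEGORIES = (
    "Representation in leadership",
    "Participation quality",
    "Promotion movement",
    "Employee retention",
)
REQUIRED_KEYS = ("finding", "risk", "owner")


def incomplete_categories(review: dict[str, dict[str, str]]) -> list[str]:
    """Return categories missing a finding, a risk, or a named owner."""
    gaps = []
    for category in CATEGORIES:
        row = review.get(category, {})
        if any(not row.get(key, "").strip() for key in REQUIRED_KEYS):
            gaps.append(category)
    return gaps


# Illustrative month-end review: one owner is blank, one category is absent.
review = {
    "Representation in leadership": {
        "finding": "No change in lead roles this month.",
        "risk": "Lead roles may stay static as the team grows.",
        "owner": "Head of People",
    },
    "Participation quality": {
        "finding": "Three people owned most action items.",
        "risk": "Visible work is concentrating in one group.",
        "owner": "",
    },
    "Promotion movement": {
        "finding": "Two promotions, both with written examples.",
        "risk": "One deferral lacks a documented reason.",
        "owner": "Engineering manager",
    },
}
print(incomplete_categories(review))
# ['Participation quality', 'Employee retention']
```

A review is ready to file only when the function returns an empty list.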

Cross-check manager reports with team signals before you trust the summary. Compare manager-reported inclusion health with team-reported psychological safety and meeting participation logs.

If those sources disagree, treat the mismatch as a review trigger. Keep the evidence pack lightweight: manager summaries, team-reported psychological safety signals, and meeting participation logs.

Avoid relying on a single source. Use the categories together so you can see whether the same issue appears across more than one signal.

Each month, go deep on one failure mode and document corrective action. Keep scope tight enough to name the issue, assign an owner, and verify progress in the next review cycle.

Typical examples include onboarding drop-off, promotion opacity, uneven speaking access in decision meetings, or manager-only visibility of stretch work. Record three items in writing: what happened, what evidence supports it, and who owns the next action.

Do not move to a new failure mode until you check progress on the previous one. The scorecard should function as a dated record of decisions and follow-through.

In remote teams, inclusion usually breaks through operating drift, so the fix is to treat each pattern as a repeatable process problem with a named owner and a clear checkpoint.

| Mistake | Recovery | Check |
|---|---|---|
| Treating DE&I as a one-time announcement | Run a recurring monthly review, assign one owner per issue, and keep a dated action log | Each action item needs an owner, due date, and one linked evidence item |
| Over-indexing on hiring while ignoring promotion discipline | Publish written promotion criteria and audit decisions for consistency | If similar evidence against the same criteria leads to different outcomes, require a written explanation |
| Running generic remote team-building with no inclusion objective | Map each activity to one inclusion outcome and define how you will check it in the next recurring meetings | If you cannot state the intended outcome and the check in one sentence each, the activity is too vague |
| Citing outside commentary without operational translation | Turn each insight into one policy change and one measurable checkpoint | Document inclusion work in operational terms like promotion criteria, meeting access, onboarding clarity, and retention signals |

1. Mistake: treating DE&I as a one-time announcement. A kickoff message is not enough if follow-through has no owner. Recovery: run a recurring monthly review, assign one owner per issue, and keep a dated action log with what changed, who approved it, and when it will be checked again. Use a simple verification rule: each action item needs an owner, due date, and one linked evidence item, such as meeting notes, onboarding feedback, or a promotion document.

2. Mistake: over-indexing on hiring while ignoring promotion discipline. Hiring is visible, but promotion decisions are where quiet exclusion can harden when standards are unwritten. HR guidance also warns that people-process errors can erode productivity and culture, and that a bad hire or poor onboarding can snowball over time. Recovery: publish written promotion criteria and audit decisions for consistency. If similar evidence against the same criteria leads to different outcomes, require a written explanation.

3. Mistake: running generic remote team-building with no inclusion objective. Social activity alone does not guarantee belonging, especially in remote settings where informal social reinforcement is often missing. Recovery: map each activity to one inclusion outcome, such as psychological safety or cross-functional trust, and define how you will check it in the next recurring meetings. If you cannot state the intended outcome and the check in one sentence each, the activity is too vague.

4. Mistake: citing outside commentary without operational translation. External ideas are only useful when converted into operating behavior your team can execute and review. Recovery: turn each insight into one policy change and one measurable checkpoint. Keep this practical in sensitive contexts: Texas Attorney General Opinion No. KP-0505 (January 19, 2026) takes an explicit anti-DEI position, so document your inclusion work in operational terms like promotion criteria, meeting access, onboarding clarity, and retention signals.

Start with one documented month-one checklist with named owners, then keep only what improves inclusion and operational clarity.

Write a one-page definition of what should change in team behavior, decision access, and onboarding consistency. A practical check: two managers should read it and describe the same expected behaviors.

Name one accountable owner for DE&I operations and one person to review evidence monthly. This keeps the work from stalling between good intentions and actual follow-through.

Use one packet for each role: job criteria, interview questions, scorecard, and decision-notes template. Hold the same evaluation standard for every candidate, even when hiring speed is under pressure.

Cover week-one role clarity, communication norms, support channels, and one inclusion check-in. Track completion and capture whether the new hire knows how decisions are made, where to ask for help, and how to participate effectively.

Publish response windows, when to use written updates instead of meetings, meeting etiquette, and where decisions must be logged. If an important decision depends on side context or being online at the right moment, treat that as a process gap.

Review hiring flow, participation patterns, promotion movement, and retention signals once a month. Reported DE&I progress and employee experience can diverge, so use your logs to decide what to fix next.

Run one full monthly review cycle before scaling headcount. Document gaps, update your remote work standards, and then expand. That sequence is usually more effective than adding one-off DE&I activities.

Diversity is who is on the team and which viewpoints are present. Inclusion is whether those people can actually participate, influence decisions, and get fair access to hiring, onboarding, promotion, and high-visibility work. In remote teams, one practical check is whether key decisions still work for people outside the loudest time zone, manager circle, or meeting style.

No. Remote work does not guarantee better inclusion outcomes on its own. One cited figure is worth remembering: Black employees were reported as 14% more likely to leave a job if remote work was not available. That suggests work design can affect retention, but it does not mean remote work fixes inclusion by itself.

There is no single ranked list of "top risks" that fits every small remote team. A practical approach is to watch for patterns you can evidence: whether varied viewpoints are represented in decisions, whether biased behavior is addressed consistently, and whether DEI&B metrics are being tracked over time.

There is no universal 30-day implementation plan. For an early rollout, keep scope tight: assign clear ownership, define a regular review cadence, and start tracking a small set of DEI&B metrics consistently. Treat this as a starting framework, not a guaranteed timeline or outcome.

Use a small scorecard instead of a big program. Track a few DEI&B metrics you can verify consistently, then review them on a regular cadence. The checkpoint that matters is whether your tracking shows initiative progress over time, not whether you run a large process.

Use a simple rule: if a faster process repeatedly depends on manager proximity or excludes some viewpoints, revisit it. Written pre-reads, decision logs, and explicit turn-taking can add some up-front friction but make participation patterns easier to review. If a decision is urgent, move fast, then document who was consulted and what criteria were used so you can improve the process next time.

Leila writes about business setup and relocation workflows in the Gulf, with an emphasis on compliance, banking readiness, and operational sequencing.

Educational content only. Not legal, tax, or financial advice.
