Use a two-stage process: qualify first, then run discovery after signature. On the first call, confirm the problem statement, decision owners, timeline or budget constraints, and participant access before drafting a SOW. During interviews, ask for recent real incidents, not predictions, and decide in advance whether you will use notes, recording, or both. End with a readout that ties evidence to a named owner and a specific decision.

If you want a practical answer to how to conduct user interviews for client work, split the job in two. Use the first call to test fit and reduce scope risk. Then, once the work is signed, use deeper interviews to understand the problem well enough to recommend action without guessing.

Your pre-engagement call is not a miniature project. It is a decision point: proceed, clarify, or decline. Keep it focused on risk, decision ownership, and whether interviews are even the right method. Interviews are strong for understanding why people act, what they value, and how they explain their experience. They are weaker for exact task behavior, especially when recall is poor or the work is partly tacit.

Before you draft scope, make decision ownership explicit: who controls budget, who runs the work day to day, who approves decisions, and who will use the outcome. If those lines are unclear, treat your proposal as provisional.

| Risk area | Ask this | Signal strength | Next action |
|---|---|---|---|
| Scope stability | "What problem are you trying to reduce, and what would count as a useful outcome?" | Green: one clear problem and a usable outcome. Yellow: multiple problems, weak priority. Red: "We need everything looked at." | Green: proceed. Yellow: narrow the brief. Red: decline or sell a short scoping phase first. |
| Decision ownership clarity | "Who controls budget, who owns day-to-day execution, who approves decisions, and who will use the result?" | Green: named people and a clear path. Yellow: one or two responsibilities unclear. Red: no clear approver or conflicting owners. | Green: proceed. Yellow: ask for role confirmation in writing. Red: do not scope yet. |
| Time and cost realism | "What timeline and budget range are you working within?" | Green: constraints are stated. Yellow: timing or budget still tentative. Red: they want a fixed proposal without basic constraints. | Green: draft options. Yellow: hold assumptions visibly. Red: pause until estimates are testable. |
| Evidence access | "Who can we speak with, and how will interview data be captured?" | Green: access and note or recording plan are clear. Yellow: limited access. Red: no participant access or no capture plan. | Green: proceed. Yellow: reduce claims. Red: do not promise discovery outcomes. |

Use one simple checkpoint before you move on: can you state the research question, the decision owners, and the evidence source in one sentence each? If not, you are not ready to price confidently.
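If you log the four signals after each first call, the proceed, clarify, or decline decision can be made mechanical. The sketch below is one way to do that, not a prescribed method: the `Signal` enum, the area keys, and the roll-up rule (the weakest signal wins, and an unrated area counts as yellow) are assumptions for illustration. "decline" here stands in for the table's red actions, such as not scoping yet or selling a short scoping phase first.

```python
from enum import Enum


class Signal(Enum):
    GREEN = "green"
    YELLOW = "yellow"
    RED = "red"


# The four risk areas from the qualification table (key names are hypothetical).
RISK_AREAS = (
    "scope_stability",
    "decision_ownership",
    "time_and_cost",
    "evidence_access",
)


def triage(signals: dict[str, Signal]) -> str:
    """Return 'proceed', 'clarify', or 'decline' for a first call.

    Assumed roll-up rule: the weakest signal wins, and an area
    that was never rated is treated as yellow.
    """
    rated = [signals.get(area, Signal.YELLOW) for area in RISK_AREAS]
    if Signal.RED in rated:
        return "decline"
    if Signal.YELLOW in rated:
        return "clarify"
    return "proceed"


first_call = {
    "scope_stability": Signal.GREEN,
    "decision_ownership": Signal.YELLOW,  # approver not yet confirmed in writing
    "time_and_cost": Signal.GREEN,
    "evidence_access": Signal.GREEN,
}
print(triage(first_call))  # clarify
```

Treating an unrated area as yellow is deliberate: a question you never asked should slow the proposal down rather than pass silently.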

Once the agreement is in place, switch from fit-checking to learning. Ask for past behavior and specific incidents, not predictions. "Tell me about the last time this happened" usually produces better material than "What would users want?" With qualitative interview data, credibility comes from disciplined capture and cross-checking, not from sounding certain.

Two operator details matter early. First, decide how interview data will be captured before you start: recording, notes, or both. Second, plan at least one credibility check, for example triangulation or looking for disconfirming cases. If the client wants claims about actual task flow, pair interviews with observation or another complementary method. Otherwise, you risk the common failure mode where important steps never surface because participants skip what feels obvious to them.

Before moving to the SOW, make sure you have this evidence pack:

That handoff keeps the next section grounded in evidence instead of optimism.

Do not draft your SOW until you can translate the client's symptom into a verifiable problem, a clear outcome, and a clear change path.

| Draft step | Key prompt | What to note |
|---|---|---|
| Capture the symptom exactly first | "What happens today that shows this is a problem?" / "What observable baseline are we starting from?" | Write the client's words first; current KPI definition pending stakeholder verification |
| Test business impact before solution language | "What decision is blocked or slowed?" / "Who feels the cost?" / "What evidence source confirms that impact?" | If the answer is opinion only, label it as an assumption |
| Define the in-scope outcome as outputs | Tie work to concrete outputs | Example outputs: a strategy, prioritized initiatives, and documented use cases |
| Make scope boundaries explicit | Name deliverables, assumptions, dependencies, client responsibilities, out-of-scope items, and the approval owner | If a dependency is uncertain, state that directly |
| Set acceptance and change handling before writing final language | "How will each deliverable be accepted?" / "Who can approve changes?" | Use an explicit change-order process |

Capture the symptom exactly first. Write the client's words before you refine them. Then ask: "What happens today that shows this is a problem?" and "What observable baseline are we starting from?" Keep the KPI definition pending until the stakeholder verifies the current definition.

Test business impact before solution language. Ask: "What decision is blocked or slowed?" "Who feels the cost?" and "What evidence source confirms that impact?" If the answer is opinion only, label it as an assumption.

Define the in-scope outcome as outputs. In one SOW-backed county engagement, stakeholder interviews and structured planning were tied to concrete outputs: a strategy, prioritized initiatives, and documented use cases, within a 10-week plan across five structured phases. Use that structure as a drafting model.

Make scope boundaries explicit. Name deliverables, assumptions, dependencies, client responsibilities, out-of-scope items, and the approval owner. If a dependency, like participant access or document availability, is uncertain, state that directly.

Set acceptance and change handling before writing final language. Ask: "How will each deliverable be accepted?" and "Who can approve changes?" In the same county example, change orders were explicit and capped at $12,600 on a $126,000 contract. You do not need that exact cap, but you do need an explicit process.
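To make the county figures concrete, the short sketch below works out what share of the contract the cap represents and shows how a running check against a cap might look. Only the $12,600 and $126,000 figures come from the example; the function names, the individual change-order amounts, and the assumption that the cap applies to the cumulative total are hypothetical.

```python
from fractions import Fraction


def cap_share(cap: int, contract_value: int) -> Fraction:
    """Change-order cap as an exact fraction of the contract value."""
    if contract_value <= 0:
        raise ValueError("contract_value must be positive")
    return Fraction(cap, contract_value)


def within_cap(approved_changes: list[int], cap: int) -> bool:
    """True while cumulative approved change orders stay at or under the cap.

    Assumes the cap is cumulative; the source example does not say.
    """
    return sum(approved_changes) <= cap


share = cap_share(12_600, 126_000)
print(f"{float(share):.0%}")  # 10%

# Hypothetical change-order amounts, for illustration only.
print(within_cap([4_000, 5_500], cap=12_600))         # True
print(within_cap([4_000, 5_500, 3_500], cap=12_600))  # False
```

Expressing the cap as a share of the contract makes it easier to carry the same logic to an engagement of a different size, whatever percentage you and the client settle on.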

| Draft area | Weak | Strong |
|---|---|---|
| Problem statement | "Users are confused." | "Stakeholders report drop-off at the verified workflow step; baseline source pending analytics or support-log verification." |
| SOW language | "We will explore user needs and share ideas." | "Deliverables include interview summary, decision-ready findings, prioritized recommendations, assumptions, dependencies, client responsibilities, and approval owner." |
| Scope control | "Additional needs can be discussed." | "Out-of-scope items require a written change request and named approver." |

Ready-to-draft checklist:

If any item is missing, run one more clarification round before drafting. For synthesis after interviews, see A guide to 'Affinity Mapping' for synthesizing user research.

Price only after you can show what happens if nothing changes. Once the problem statement is solid enough for the SOW, test the cost of inaction. Then test who owns that pain and what evidence backs it up. That is what moves the fee conversation away from hours and toward consequences.

Before you start: bring the draft problem statement, the current baseline source if you have one, and room in your notes for two labels: verified and assumed. If a stakeholder gives you a strong opinion but no evidence trail, do not throw it away. Just do not price as if it were proven.

Step 1. Ask a short sequence around cost of inaction. Do not rely on one dramatic question. Ask three in order: what happens if this problem stays unsolved for the next planning cycle, who feels that cost first, and what evidence would confirm it. That sequence helps surface the consequence, the owner, and the proof source.

The point is to validate the user's problem, not your idea, and to keep the interview from turning into a sales pitch. A good checkpoint is simple: did you get a concrete story, a named consequence, and at least one follow-up that deepened the answer? If not, you probably have language for a proposal, but not enough for confident pricing.

Step 2. Map what you hear to business impact before you talk numbers. Interviews give you qualitative data: stories, feelings, motivations, and frustrations that explain why people behave the way they do. That depth becomes commercially useful when you translate it into a business risk the client already recognizes.

| Business risk | Evidence to collect in interview | Impact type | How it informs fee logic |

|---|---|---|---|

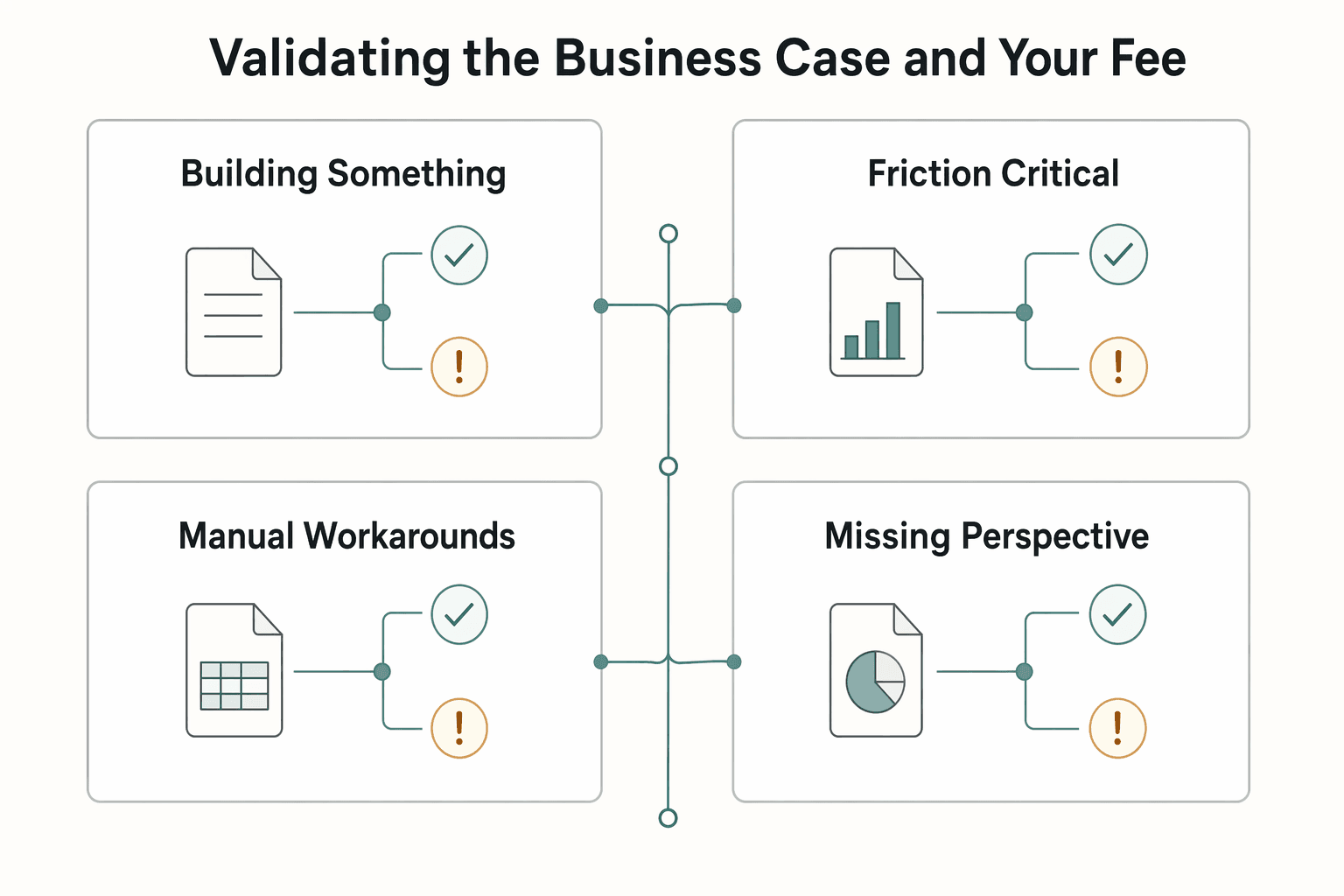

| Building something nobody wants | Stories showing weak demand, low perceived value, or a mismatch between the stated solution and the actual problem | Wasted build effort, strategic misfire | Supports pricing for early validation because changing direction early is cheaper and faster than rebuilding later |

| Friction in a critical task | Recent examples of where users struggle, what they tried, and why the current experience breaks down | Adoption or completion risk | Supports pricing for work that reduces decision risk around what to fix first |

| Manual workarounds or repeated pain | Verbatim descriptions of extra steps, frustration, and why people keep using a workaround | Operational drag, service burden | Supports pricing for diagnostic and prioritization work, not just deliverable production |

| Missing perspective from key user groups | Signs your participant pool is too narrow or excludes a meaningful segment | Discovery risk, biased decisions | Supports a narrower scope or a phased fee until participant diversity improves |

A common failure mode is treating a loud anecdote as a full business case. If the same issue appears only in one segment, say that. If you still need participant diversity to test whether the problem is broad or concentrated, keep that uncertainty visible in the pricing conversation.

Step 3. Match the value signal to the stakeholders in front of you. You may need to address different stakeholder concerns, even when one person brought you in. Keep the conversation anchored in evidence: what gets worse if the problem stays open, what is verified versus assumed, and what it will take to act on findings.

If one sponsor says "everyone agrees" but you cannot trace that claim to interview evidence or a current baseline, treat that as a red flag. Before you present pricing, make clear which stakeholder concerns are evidence-backed and which still need validation.

Step 4. Present pricing with a proof pack, not just a scope summary. Your fee is easier to defend when it sits next to evidence. Bring a short pack with interview quotes, the problem statement, the impact map, and the gaps that still need verification. In practice, this is the commercial side of learning how to price a UI/UX audit for a SaaS company. You are pricing the decision support and risk reduction, not only the time spent talking to users.

Before you present pricing, confirm you have:

This pairs well with our guide on A guide to writing a 'User Research' plan.

Treat compliance as part of how you run interviews, not legal theater. Your goal is simple: collect usable evidence while protecting participant trust before, during, and after every session. A practical anchor is the Belmont Report's three core principles: respect for persons, beneficence, and justice.

| Document | Use when | Notes |
|---|---|---|
| Participant consent notice | Whenever you collect participant data, especially if you plan to record | Keep it plain: purpose, recording, use of notes or recordings, access, and whether quotes may appear in anonymized reporting |
| Client confidentiality terms | When the client may share proprietary information or wants limits on raw-research distribution | Usually belongs in your MSA, SOW, or a separate NDA |
| Interviewer data-handling notice | When anyone besides you can access notes, transcripts, or recordings | Use as an internal one-pager; define who can access raw files, where they are stored, and why |

Before you start, prepare three separate documents instead of one catch-all form.

Step 1. Split the paperwork by audience. Use a participant consent notice whenever you collect participant data, especially if you plan to record. Keep it plain: why you are interviewing them, whether audio or video is captured, how notes or recordings will be used, who can access them, and whether quotes may appear in anonymized reporting.

Use client confidentiality terms when the client may share proprietary information, for example internal metrics or customer lists, or wants limits on raw-research distribution. This usually belongs in your MSA, SOW, or a separate NDA, not in the participant notice.

Use an interviewer data-handling notice as an internal one-pager when anyone besides you can access notes, transcripts, or recordings. If you cannot clearly state who can access raw files, where they are stored, and why, pause recording until that is defined.

Step 2. Check your workflow against common risk points.

| Area | Lower-risk practice | Risky practice |
|---|---|---|
| Consent capture | Plain-language notice shared in advance and reconfirmed at session start | Consent buried in scheduling text or assumed from attendance |
| Recording | Explicit confirmation before recording starts | Recording first and asking later |
| Storage access | Access limited to named people with a clear reason | Broad shared-folder access |
| Retention | Retention rule pending legal or policy verification | Keeping files indefinitely without a defined approach |
| Reporting anonymization | Direct identifiers removed; raw-quote exposure limited | Including names or traceable details in reports |

Step 3. Set a cross-border decision path before recruitment. When participants, clients, and storage may span jurisdictions, document this path first:

Cross-jurisdiction transfer decisions can change your risk profile quickly. If jurisdiction, transfer approach, or processing roles are still unclear, treat recording as pending until those items are verified.

Step 4. Use one repeatable pre-interview checklist. Run the same checklist every time:

Final operating reminder: strong paperwork does not fix weak interviewing. Poor execution wastes participant time and reduces insight reliability. Interviews are strong for understanding the "why," but for exact workflow detail, validate interview recall with observation or combined methods when needed.

Your readout should make a client decision easier, not just summarize interviews. Keep the flow simple: analyze first, then share findings, then ask for a clear decision.

| Visual | Decision need | Use when |
|---|---|---|
| Process map | Workflow complexity or breakdown points | The decision is about workflow complexity or breakdown points |
| Journey map | Stage-by-stage experience and handoffs | The decision depends on stage-by-stage experience and handoffs |
| Prioritization matrix | Funding sequence | The client agrees on problems but needs a funding sequence |

Step 1. Analyze before you recommend. Use a hard checkpoint between analysis and presentation. If you jump from transcripts to slides, you risk over-weighting single comments and missing contradictions. Treat every finding as traceable evidence: if you cannot show where it came from quickly, it is not ready for a client decision. Also keep reliability limits visible, since interview participants can misremember, omit details, or be less than fully candid.

Step 2. Build the readout with a decision-ready backbone.

| Backbone step | What belongs here | Evidence source | Confidence level | Owner | Decision required |
|---|---|---|---|---|---|
| Insight | A clear pattern from interview data | Notes, transcript excerpts, repeated task descriptions | High when repeated across interviews; medium/low when limited or conflicting | You (research lead) | Accept finding as stated, or request more validation |
| Implication | The business effect if the pattern continues | Insight evidence plus current operating context | Lower when impact is inferred; higher when corroborated by stakeholders or behavior | Product/ops/business lead | Prioritize now, defer, or discard |
| Recommendation | A specific next action with scope and dependencies | Insight + implication + known feasibility constraints | Working confidence until implementation checks are complete | Named implementation owner | Approve action, assign owner, or fund follow-up |

If owner and decision are both missing, it is commentary, not a recommendation.
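If you keep readout items in a structured list before building slides, that rule is easy to check automatically. The following is a minimal sketch under stated assumptions: the `ReadoutItem` fields mirror the backbone columns, but the class, the `classify` function, the sample items, and the "incomplete" label for items missing only one of the two fields are all hypothetical additions, not part of the rule itself.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReadoutItem:
    statement: str
    evidence_source: str
    owner: Optional[str] = None              # named person or role
    decision_required: Optional[str] = None  # e.g. "approve", "defer"


def classify(item: ReadoutItem) -> str:
    """Label a readout item by whether it carries an owner and a decision.

    'commentary' follows the rule in the text (both missing). 'incomplete'
    for the one-missing case is an assumption added for this sketch.
    """
    has_owner = bool(item.owner and item.owner.strip())
    has_decision = bool(item.decision_required and item.decision_required.strip())
    if has_owner and has_decision:
        return "recommendation"
    if not has_owner and not has_decision:
        return "commentary"
    return "incomplete"


# Hypothetical items, for illustration only.
items = [
    ReadoutItem("Approvals stall at handoff", "transcript excerpts",
                owner="Ops lead", decision_required="prioritize now or defer"),
    ReadoutItem("People dislike the dashboard", "single interview note"),
]
for item in items:
    print(classify(item), "-", item.statement)
```

Running a check like this before the readout flags items that still need a named owner or an explicit ask, while there is time to fix them.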

Step 3. Use participant voice as evidence, not decoration. A quote is usable when it describes a specific event, task, or consequence in the participant's own words. Pair each quote with behavioral context, for example the steps they described, so it stands on more than sentiment alone. De-identify before sharing: remove direct identifiers and any detail that could make the person obvious. If a verbatim quote still risks identification, paraphrase the point instead. If the quote came from a leading question, mark it as lower confidence.

Step 4. Make recommendations specific enough to approve.

| Dimension | Weak recommendation | Strong recommendation |
|---|---|---|
| Specificity | "Improve onboarding." | Names the exact change and where it applies |
| Feasibility | No delivery path | Includes a practical first step and scope |
| Dependency clarity | Dependencies implied or missing | Calls out dependencies upfront |
| Expected business effect | Generic benefit language | States expected effect and what to validate |

When baseline evidence is not ready, state the unresolved item plainly, for example: Baseline metric pending source verification.

Step 5. Choose visuals by the decision you need. Use a process map when the decision is about workflow complexity or breakdown points. Use a journey map when the decision depends on stage-by-stage experience and handoffs. Use a prioritization matrix when the client agrees on problems but needs a funding sequence.

Executive readout checklist (final pass)

This structure helps your current project land and supports the next cycle, since interview work is usually ongoing rather than one-time. We covered this in detail in A guide to 'User Journey Mapping'.

Strong interview work is not about running isolated conversations well. It is about running a repeatable sequence: better participant selection, clearer scope, cleaner evidence, and recommendations you can defend.

Pre-engagement diagnostic: qualify before you commit. Start with a pre-engagement diagnostic and define clear learning objectives before you commit to deliverables. Verify that the problem is specific enough to investigate. If goals stay vague, teams can end up answering different questions.

Stakeholder discovery: probe for recalled behavior. Ask about the last time something happened instead of what people think they would do. Your checkpoint is simple: are you hearing a concrete story, not a theory? If answers stay abstract, your findings will stay weak too.

Compliance execution: run the session cleanly. Before recording, request consent. Keep your session structure steady with introduction, core questions, and closing so participants know what is happening. If recruitment is loose or your target list lacks diversity, insight quality can drop because participant selection directly affects what you can credibly claim.

Insight-to-action reporting: turn insight into action. In reporting, connect evidence to decisions. Use clear patterns and recommendations that match what you actually heard in interviews, not what a stakeholder hoped to prove.

| Habit | Reactive approach | Strategic approach |
|---|---|---|
| Decision quality | Chases vague opinions | Uses clear learning objectives and recalled behavior |
| Risk exposure | Records or recruits casually | Checks consent and participant fit before relying on data |
| Deliverable defensibility | Presents themes without evidence | Ties recommendations to concrete stories from interviews |

On your next project, do three things:

Related: How to recruit participants for a 'User Research' study.

This guide does not verify the legal distinction, enforceability, or jurisdiction-specific requirements for consent forms vs NDAs. Treat document choice and wording as a legal decision: use approved templates and confirm final language with the client or legal counsel.

This guide does not provide validated compensation amounts, ranges, or fixed decision factors. Decide compensation from your current internal policy and a live market check before use; keep the range marked as pending until those sources are verified for this study.

Ask for a concrete story or example, because richer insights usually come from that level of response. If the answer stays abstract, wait about 5 seconds, then use a neutral probe such as “Would you give an example?” or “Would you explain further?”

Use the same opening and closing checkpoints every time. Start with welcome, topic overview, ground rules, then your first question. End by summarizing, asking whether you missed anything, and closing with thanks. A common failure mode is a weak opening or closing, so setting a clear, open environment early matters.

A former product manager at a major fintech company, Samuel has deep expertise in the global payments landscape. He analyzes financial tools and strategies to help freelancers maximize their earnings and minimize fees.

Educational content only. Not legal, tax, or financial advice.
