An effective client code review is a documented approval process tied to scope, acceptance criteria, and one written record. Define what "done" means before coding starts, map each change to an agreed requirement, and submit proof such as tests, screenshots, logs, or notes on known limitations. During review, separate defects from new requests, keep decisions in one thread, and merge only after explicit approval.

The review is not a debate about whether the code feels right. It is a structured delivery checkpoint in your process: you review against defined requirements, acceptance criteria, and a clear approval path, not shifting opinions. Handling risk ad hoc is unreliable, so review works best as part of the software development lifecycle, not as a last-minute conversation.

| Area | Subjective review | Criteria-based review |
|---|---|---|
| Decision basis | Preference, taste, loose expectations | Defined requirements and stated constraints |
| Evidence used | Comments and opinions | Test results, change list, issue log, review checklist |
| Outcome | More discussion, fuzzy next steps | Approve, request changes, or route new asks into separate scope decisions |

You do not need legal theater. You need clean operating rules. For scope control, bring the defined requirements into the review and label each request as "in scope," "defect," or "new request." That simple classification is your first defense against quiet scope expansion.
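A minimal Python sketch of that three-label classification, assuming you keep review requests as simple records. The names `ScopeStatus`, `ReviewRequest`, and `route` are hypothetical; the point is that every request gets exactly one label, and only new requests are diverted to change control before any work starts.

```python
from dataclasses import dataclass
from enum import Enum


class ScopeStatus(Enum):
    # The three labels from the review rules above.
    IN_SCOPE = "in scope"
    DEFECT = "defect"
    NEW_REQUEST = "new request"


@dataclass
class ReviewRequest:
    summary: str
    status: ScopeStatus


def route(request: ReviewRequest) -> str:
    """Return the next action for a labeled review request.

    In-scope items and defects are handled in the current revision;
    new requests go to change control before implementation.
    """
    if request.status is ScopeStatus.NEW_REQUEST:
        return "log change request; wait for approval"
    return "address in current revision"


# Hypothetical example requests.
requests = [
    ReviewRequest("Login error message shows wrong text", ScopeStatus.DEFECT),
    ReviewRequest("Add social sign-in", ScopeStatus.NEW_REQUEST),
]
for r in requests:
    print(f"{r.status.value:12} | {r.summary} -> {route(r)}")
```

Keeping the label as an enum rather than free text makes it harder for a request to slip through unclassified.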

| Outcome | Review action | Result |
|---|---|---|
| Scope control | Bring the defined requirements into the review and label each request as "in scope," "defect," or "new request." | First defense against quiet scope expansion |
| Risk control | Submit an evidence pack with the diff summary, tests run, known limitations, and a short checklist showing what was completed. | A reviewer can match each delivered item to a stated requirement without guessing |
| Authority positioning | Lead the review with your criteria, not just your code, and pull the conversation back to the checklist. | Scattered preference comments no longer pull the conversation off course |

For risk control, make approval traceable. Submit an evidence pack with the diff summary, tests run, known limitations, and a short checklist showing what was completed. The verification point is simple: a reviewer should be able to match each delivered item to a stated requirement without guessing.
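A minimal sketch of that verification point in Python, assuming requirement IDs such as "SOW 2.1" and a hand-maintained list of delivered items. All names and data here are hypothetical illustrations. The check flags delivered items with no stated requirement (candidates for a change request) and requirements with nothing delivered against them.

```python
# Hypothetical data: requirement IDs from the SOW and items claimed as delivered.
requirements = {
    "SOW 2.1": "Registered users can sign in with email and password",
    "SOW 2.2": "Invalid credentials show a defined error message",
}

delivered = [
    {"item": "Sign-in form and session handling", "requirement": "SOW 2.1"},
    {"item": "Error message for bad password", "requirement": "SOW 2.2"},
    {"item": "Dark mode toggle", "requirement": None},
]


def check_traceability(requirements, delivered):
    """Return (items with no known requirement, requirements with no delivered item)."""
    unmapped = [d["item"] for d in delivered if d["requirement"] not in requirements]
    covered = {d["requirement"] for d in delivered}
    uncovered = [req for req in requirements if req not in covered]
    return unmapped, uncovered


unmapped, uncovered = check_traceability(requirements, delivered)
print("Needs a requirement or change request:", unmapped)
print("Requirements without delivered work:", uncovered)
```

Both lists should be empty, or explained in the known-limitations note, before you ask for approval.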

For authority positioning, lead the review with your criteria, not just your code. A common failure mode is scattered preference comments that pull the conversation off course. Pull it back to the checklist. Where useful, sanity-check your process against a recognized review artifact such as the checklist or the Do's and Don'ts list in the OWASP Code Review Guide.

This is the operating frame for code review as an independent professional. It does not draft legal clauses or pick a platform for you. The next sections cover the operating work: what to define before coding, what to verify before submission, and how to document the decision after review.

Set review rules before coding starts if you want fewer disputes later. In practice, client review should begin in your Statement of Work (SOW), not in the pull request.

Code review is a socio-technical practice, so unclear wording quickly turns into human friction. Define the process so it is scoped, documented, repeatable, and auditable before anyone is reacting to code.

Use verifiable criteria, not vague requests. In your SOW, write each deliverable so a reviewer can confirm completion from behavior, outputs, or named evidence, not taste.

| Vague request | Verifiable acceptance criteria |
|---|---|
| "Add user login" | "Registered users can sign in with email and password, receive a visible success state after authentication, and see a defined error message for invalid credentials. Session behavior and logout behavior are described in the deliverable notes." |
| "Improve checkout" | "Checkout supports guest purchase, applies shipping and tax rules already listed in scope, and sends the user to the agreed confirmation page after successful payment. Failed payment behavior is documented and testable." |
| "Clean up API security" | "The specified endpoints require authentication, reject unauthorized requests with the agreed response behavior, and log the events defined in the requirements. Any excluded endpoints are named explicitly in the SOW." |

Before work starts, run one check on every line item: "How would the client verify this?" If the answer is fuzzy, rewrite it.

Name the review process in the SOW so approval, revisions, and payment stay in one written record. A short checklist is enough:

| Contract item | Sample wording | Purpose |
|---|---|---|
| Feedback channel | All review comments, approvals, and change requests must be submitted in the agreed system of record. | Keep approval, revisions, and payment in one written record |
| Response window | Client review feedback is due within the response window named in the SOW. | Set the review timing |
| Included review scope | Fee includes the agreed number of review rounds covering defects and in-scope acceptance issues only. | Limit included review scope |
| Deemed acceptance wording | If no feedback is received through the agreed system of record within the response window named in the SOW, the deliverable will be treated as accepted, subject to local law and contract review. | Define acceptance if no feedback is received |

Treat timing and clause text as items to confirm, not universal defaults. For legal wording, use qualified counsel where needed.

The key lever is one system of record. It can be a GitHub pull request plus linked issues, or another agreed channel, but pick one and name it in the SOW. The goal is a consistent trail so decisions do not depend on chat history or memory.

Before submitting a milestone, confirm acceptance criteria, review comments, approvals, and scope changes are traceable in that one record. If feedback comes through Slack, calls, or voice notes, log it in the record before you act.

During review, separate defects and clarifications from new work. Use this script:

| Step | Example wording | Purpose |
|---|---|---|
| Acknowledge the request | "That makes sense and I can see why you want it." | Show the client the request was heard |
| Map it to scope status | "As written, this is outside the current SOW" or "This fits the existing acceptance criteria." | Separate out-of-scope work from in-scope work |
| Route it properly | "I'll log it as a change request with impact on timeline and fee for approval before implementation." | Send new work into change control before implementation |

Concrete artifacts matter. When your trail shows the original scope, the request, and the approved change, decisions stay clear. When evidence is scattered, that clarity disappears.

Before you open a pull request, show that the work matches scope and produces observable outcomes. This keeps review focused on substance instead of rubber-stamping or style-only debates.

Read the linked ticket, user story, or bug report first, then map it to the matching SOW acceptance item. In your PR description, add a short traceability block like SOW 2.1 -> sign-in success state, SOW 2.1 -> invalid credential message, SOW 2.1 -> logout behavior. Your checkpoint: every meaningful change should map to an in-scope requirement, a defect fix, or an approved change request.
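If you write the traceability block as one `SOW x.y -> change` line per item, it stays machine-checkable. A minimal Python sketch, assuming exactly that line format; only SOW lines are parsed here, so defect fixes or approved change requests would need their own prefixes, and `parse_traceability` is a hypothetical helper.

```python
import re

# Matches lines such as "SOW 2.1 -> sign-in success state".
TRACE_LINE = re.compile(r"^(SOW\s+\d+(?:\.\d+)*)\s*->\s*(.+)$")


def parse_traceability(pr_description: str) -> dict[str, list[str]]:
    """Group traced changes by SOW item; lines that do not match are ignored."""
    trace: dict[str, list[str]] = {}
    for line in pr_description.splitlines():
        match = TRACE_LINE.match(line.strip())
        if match:
            sow_item, change = match.groups()
            trace.setdefault(sow_item, []).append(change.strip())
    return trace


description = """Fixes sign-in flow.

SOW 2.1 -> sign-in success state
SOW 2.1 -> invalid credential message
SOW 2.1 -> logout behavior
"""
print(parse_traceability(description))
```

An empty result is a useful warning sign: the PR description has no traceability block at all.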

Do not claim speed, savings, or risk reduction unless you can show evidence. Replace vague notes with outcome language tied to proof, and when a metric still needs confirmation, say that the metric is pending verification.

| Technical change | Business impact | Proof artifact |
|---|---|---|
| Refactored a slow query | Performance effect on the affected screen is pending verification. | Before/after query output, timing log, or profiler capture |
| Added form input validation | Fewer bad submissions reach downstream processing | Test output for valid and invalid inputs, screenshot of error state |
| Extracted a shared component | Easier reuse in later approved work and lower handoff risk | Screenshot of both use cases, test output, component notes in PR |

In the PR, call out dependency changes, config updates, migration steps, and test intent in plain language. Use a simple check: could another developer understand setup changes and key entry points from the PR notes plus the diff alone? This is where hidden upgrade or config details usually cause avoidable handoff problems.

Technical debt, security risk, and maintainability issues often slip in under shipping pressure, so record the safeguards you actually implemented and attach evidence. Include happy-path evidence and at least one bad-input or failure-path check where relevant. If evidence is missing, mark it as follow-up work instead of presenting it as complete.

After scope validation, your main job is to make decisions easy to verify later. A clear written trail protects scope, timeline, and handoff quality better than ad hoc replies.

Step 1. Keep every review decision in one written trail. Use one system of record for the full review cycle (for example, the same PR thread, ticket, or client portal from start to closeout). If feedback happens in a call or chat, post a short written recap the same day: request, scope status, key risk, and next step.

Use this checkpoint: if someone returns in two weeks, they can see the request, your assessment, the decision, and current status without asking for a retell. Strong records keep reopenings and scope drift down; weak records do the opposite.
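The same-day recap from Step 1 is easier to keep consistent with a small template. This Python sketch assumes the four fields named there (request, scope status, key risk, next step); the class name and example values are hypothetical, and the rendered text is meant to be pasted into whichever system of record you agreed on.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class ReviewRecap:
    request: str
    scope_status: str  # e.g. "in scope", "defect", "new request"
    key_risk: str
    next_step: str
    recap_date: date

    def to_comment(self) -> str:
        """Render the recap as a plain-text comment for the system of record."""
        return (
            f"Recap ({self.recap_date.isoformat()})\n"
            f"Request: {self.request}\n"
            f"Scope status: {self.scope_status}\n"
            f"Key risk: {self.key_risk}\n"
            f"Next step: {self.next_step}"
        )


# Hypothetical recap after a call.
recap = ReviewRecap(
    request="Show order history on the account page",
    scope_status="new request",
    key_risk="Adds a new query path not covered by current tests",
    next_step="Log as change request with timeline and fee impact",
    recap_date=date.today(),
)
print(recap.to_comment())
```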

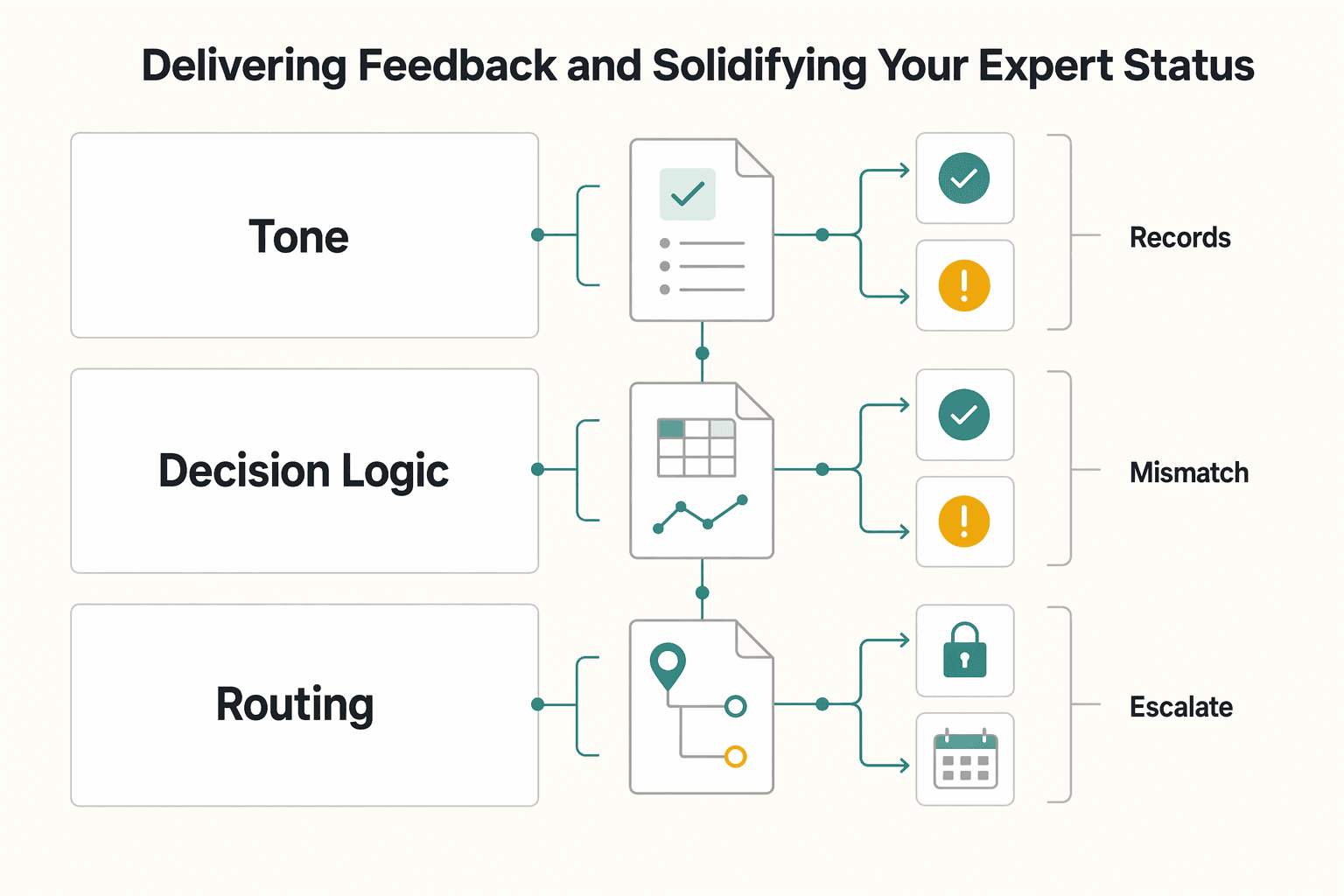

Step 2. Use a repeatable response structure instead of improvising. Keep your replies calm and decision-focused with a three-part pattern: acknowledge the request, evaluate scope/risk/effort, and recommend the next action.

| Aspect | Unstructured reply | Structured professional reply |
|---|---|---|
| Tone | Reactive or vague | Calm, specific, decision-focused |
| Decision logic | Opinion first | Scope, effort, risk, and next step are explicit |
| Routing | Informal changes | Clear path for in-scope, out-of-scope, or higher-risk items |

The OWASP Code Review Guide treats code review as part of the SDLC and provides concrete review artifacts, such as a Do's and Don'ts list (p. 192) and a checklist (p. 196). Your written responses should follow the same discipline.

Step 3. Frame pushback as risk communication. When you need to push back, avoid style debates. Name the risk category, explain likely impact, recommend a safer option, and record the decision.

If feedback conflicts with controlling guidance or an approved standard, escalate to the responsible approver and log that escalation in the same record.

Step 4. Send a formal closeout note when review ends. Close the loop in one final update so status is unambiguous.

Your final check is simple: a third party should be able to see what was delivered, what was approved, and what happens next from that one note.

If you want clearer scope control and a cleaner approval handoff, run each review cycle as a documented approval process, not a courtesy pass. Prepare the pull request well, make feedback intent explicit, and do not merge on vague promises.

Before you request review, make the PR carry the core context. In the description, state what was broken, how you fixed it, and how you know it works. Keep the change readable, ideally under 400 lines; very large PRs (for example, 2,000-line changes) are more likely to get skimmed and reopened.
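To check the size guideline before opening the PR, you can total added and deleted lines from `git diff --numstat`, which prints one tab-separated line per file: added count, deleted count, path (binary files show `-` for both counts). This Python sketch assumes your base branch is named `main`; the 400-line threshold comes from the guideline above and is a heuristic, not a hard rule.

```python
import subprocess

MAX_CHANGED_LINES = 400  # heuristic threshold from the guideline above


def changed_lines(numstat: str) -> int:
    """Sum added + deleted lines from `git diff --numstat` output.

    Binary files report "-" instead of counts and are skipped.
    """
    total = 0
    for line in numstat.splitlines():
        parts = line.split("\t")
        if len(parts) < 3 or parts[0] == "-":
            continue
        total += int(parts[0]) + int(parts[1])
    return total


def pr_size_from_git(base: str = "main") -> int:
    """Count changed lines between `base` and HEAD (run inside a git repo)."""
    out = subprocess.run(
        ["git", "diff", "--numstat", f"{base}...HEAD"],
        capture_output=True, text=True, check=True,
    ).stdout
    return changed_lines(out)


# Sample numstat output, so the example runs without a repository.
sample = "120\t30\tsrc/auth.py\n-\t-\tassets/logo.png\n45\t5\ttests/test_auth.py\n"
size = changed_lines(sample)
print(size, "changed lines;", "OK" if size <= MAX_CHANGED_LINES else "consider splitting")
```

Keeping the parsing separate from the `git` call lets you test the counting logic on saved output.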

Review architecture and logic before style nits, and let automation handle formatting where possible. Include the proof that matters for this change, such as tests, screenshots, logs, or output. If feedback introduces new behavior, log it as a scope change instead of quietly folding it into the same revision.

Keep decisions in one GitHub PR thread and label comment intent clearly. Tags like issue(blocking) and suggestion reduce clarification loops when written feedback loses context. The handoff point is explicit final approval before merge, followed by a short closeout note: what was approved, what was merged, and what remains out of scope.
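The comment tags above appear to follow the Conventional Comments style (`label (decoration): subject`). A minimal Python sketch of a merge gate built on them, assuming comments are exported as plain strings with a resolved flag and that explicit approval is recorded separately. `Comment`, `is_blocking`, and `ready_to_merge` are hypothetical names, not part of any GitHub API.

```python
import re
from dataclasses import dataclass

# Matches a leading label such as "issue (blocking):" or "suggestion:".
LABEL = re.compile(r"^\s*(\w+)\s*(?:\(([^)]*)\))?\s*:")


@dataclass
class Comment:
    body: str
    resolved: bool = False


def is_blocking(comment: Comment) -> bool:
    """True if the comment's label carries a "blocking" decoration."""
    match = LABEL.match(comment.body)
    if not match:
        return False
    decorations = (match.group(2) or "").split(",")
    return "blocking" in (d.strip() for d in decorations)


def ready_to_merge(comments: list[Comment], explicit_approval: bool) -> bool:
    """Merge only with explicit approval and no open blocking issues."""
    open_blockers = [c for c in comments if is_blocking(c) and not c.resolved]
    return explicit_approval and not open_blockers


thread = [
    Comment("issue (blocking): session is not cleared on logout"),
    Comment("suggestion: rename helper for clarity"),
]
print(ready_to_merge(thread, explicit_approval=True))  # False: open blocker
thread[0].resolved = True
print(ready_to_merge(thread, explicit_approval=True))  # True
```

Non-blocking suggestions never hold up the merge here; only unresolved blocking issues or a missing approval do.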

| Review habit | Observable action | Likely business impact |
|---|---|---|
| Reactive | Comments are split across chat, email, and the PR | Decisions get lost and approval is harder to prove |
| Reactive | Feedback is subjective and unlabeled | More back-and-forth and slower revisions |
| Shield-based | PR states problem, fix, and proof | Clearer acceptance record |
| Shield-based | Merge happens only after explicit final approval | Cleaner completion handoff |

On your next cycle, open one small PR, use the three-part description, respond in-thread with clear labels, and wait for explicit approval before merge. That is code review with business discipline, not just technical care.

Set it up in writing before the first pull request or merge request. Document the review channel, the primary reviewer, the expected response window, and what counts as approval in one system of record.

Start with correctness, tests, and functional changes, then review maintainability and fit within the larger codebase. Summarize your checklist, what changed, and which tests you ran in the PR note or submission summary.

Acknowledge the comment, evaluate whether it affects correctness, maintainability, or scope, and recommend the next step in writing. Keep the decision in the PR thread, and route net-new behavior into a follow-up changelist instead of hiding it in comments.

The main risks are missed issues, avoidable delays, rework, and unclear completion. Reviews that skip correctness, tests, functionality, or maintainability context are more likely to create defects, and unclear approval can leave the milestone ambiguous.

Compare every comment against the current changelist and agreed requirements before implementing it. Document accepted revisions in the PR note, and route net-new work into a follow-up changelist or change request.

Decide how review time is handled before work starts and state it clearly in writing for the engagement. If review expands beyond the original scope, record it as follow-up work before implementation.

Use a tool that keeps the full review trail in one place, usually a pull request or merge request. Keep comments, revisions, and final approval in that same thread so the review stays traceable and completion is clear.

A career software developer and AI consultant, Kenji writes about the cutting edge of technology for freelancers. He explores new tools, in-demand skills, and the future of independent work in tech.

Educational content only. Not legal, tax, or financial advice.
