Start by assigning metric ownership, locking KPI definitions, and naming one reporting record before you automate anything. Build in Looker Studio for presentation, use native sources for simpler GA4 or Google Ads setups, and add Supermetrics when recurring non-Google channels are required. Blend data for ROAS or CAC only after source definitions reconcile. Tie the dashboard to your SOW and review agenda, then deliver on schedule only after checks on date range, connector health, and headline totals.

If clients rarely engage with your reports, your value story feels fuzzy, and month-end reporting keeps eating into paid work, the fix is usually not more charts. It is tighter operating discipline. The Client Reporting Flywheel is a practical model for turning reporting into three linked outcomes: proof of value, clearer scope control, and better growth planning.

| Part | How the report is used | Main outcome |
|---|---|---|
| Part 1 | Tie reporting activity to business outcomes | Prove value |
| Part 2 | Use the report as a shared record of what is being measured and discussed | Manage scope |
| Part 3 | Use trends, gaps, and recurring questions to surface growth opportunities worth proposing | Plan growth |

For consistency, I'll use Looker Studio (formerly Google Data Studio) throughout this article. In this setup, treat the dashboard as the presentation layer and your data pipeline as the delivery layer. Your actual reporting process still owns metric definitions, review steps, and client decisions. That separation matters because a tool-first rollout can amplify a broken process instead of fixing it.

Start with accountability, not automation. Before you build or refresh a dashboard, decide who owns each metric, which source is authoritative, who reviews the report before it goes out, and what question the report is supposed to answer.

Use a simple checkpoint. Can you trace every number on the page back to a named source and a named owner? If more than one function touches the data, get the relevant people into one short working session. Depending on your setup, that may include legal, HR, marketing, operations, and IT or security. If nobody agrees on what a metric means, automation just repeats the disagreement faster.
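To make that checkpoint repeatable, you could keep a small metric registry and flag anything that lacks a named source or a named owner. This is a minimal Python sketch under that assumption. The `MetricRecord` class, the `untraceable` helper, and the sample rows are hypothetical illustrations, not Looker Studio features.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class MetricRecord:
    """One row in a hypothetical metric-ownership registry."""
    name: str
    source: Optional[str]  # authoritative system for this number
    owner: Optional[str]   # person accountable for its definition


def untraceable(metrics: List[MetricRecord]) -> List[str]:
    """Return the names of metrics missing a named source or a named owner."""
    return [m.name for m in metrics if not m.source or not m.owner]


# Sample registry; every value here is made up for illustration.
registry = [
    MetricRecord("Revenue", "Shopify", "Dana"),
    MetricRecord("Sessions", "GA4", None),
    MetricRecord("Cost per lead", None, "Sam"),
]

print(untraceable(registry))  # ['Sessions', 'Cost per lead']
```

If the list is not empty, settle ownership for those metrics before you build charts on them.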

Measure the reporting process, not just the client's performance data. TrustArc's 2024 benchmark found that organizations actively measuring program effectiveness score 31 percentage points higher in its index. You do not need to map that result directly onto agency reporting, but the lesson holds. If you never measure whether reporting is working, you will not know what to fix.

For client reporting, keep the checks simple. Track whether the report went out on time, whether the core metrics reconciled cleanly, whether the client had a clear next action, and whether clarification emails dropped over time. A common failure mode is the silent dashboard. The data is there, but no decision is attached to it.
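A lightweight way to track those four process checks is to log one record per reporting cycle and summarize them. The sketch below assumes you record the checks yourself; the `ReportCycle` fields and the sample history are hypothetical, and "dropping" is a deliberately crude first-versus-latest comparison.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ReportCycle:
    """Process checks for one reporting cycle (hypothetical record format)."""
    period: str
    sent_on_time: bool
    metrics_reconciled: bool
    has_next_action: bool
    clarification_emails: int


def process_health(cycles: List[ReportCycle]) -> Dict[str, object]:
    """Summarize the four process checks across a series of cycles."""
    n = len(cycles)
    first, latest = cycles[0], cycles[-1]
    return {
        "on_time_rate": sum(c.sent_on_time for c in cycles) / n,
        "reconciled_rate": sum(c.metrics_reconciled for c in cycles) / n,
        "next_action_rate": sum(c.has_next_action for c in cycles) / n,
        # Crude trend: did the latest cycle need fewer emails than the first?
        "clarifications_dropping": latest.clarification_emails < first.clarification_emails,
        # Cycles with data but no decision attached: the "silent dashboard".
        "silent_dashboards": [c.period for c in cycles if not c.has_next_action],
    }


history = [
    ReportCycle("Jan", True, False, False, 6),
    ReportCycle("Feb", True, True, True, 4),
    ReportCycle("Mar", False, True, True, 2),
    ReportCycle("Apr", True, True, True, 1),
]
print(process_health(history))
```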

Use the flywheel in the order you will use it in real work. First, prove value with reporting that ties activity to business outcomes. Second, use that same report as a shared record of what is being measured and discussed, so scope is easier to manage without turning every disagreement into a subjective debate. Third, use trends, gaps, and recurring questions to surface growth opportunities worth proposing.

That sequence sets up the rest of the article. In Part 1, you will decide what belongs in a report that proves value. In Part 2, you will make the report dependable enough to protect your time. In Part 3, you will turn reporting from a recap into planning input. If your current report cannot show one outcome, one boundary, and one next recommendation, fix the structure before you add more automation.


Build the report's opening in executive order: outcome first, explanation second. If you lead with activity metrics, you force a debate about clicks and impressions instead of a decision about business impact.

| Business model | Prioritize | Secondary context only |
|---|---|---|
| Ecommerce | revenue and efficiency views (for example, ROAS when media efficiency is the goal) | clicks, sessions, CTR, engagement rate, and organic impressions |
| Lead generation | qualified leads, cost per lead, or downstream value when available | clicks, sessions, CTR, engagement rate, and organic impressions |
| Subscription | trial-to-paid movement and acquisition efficiency | clicks, sessions, CTR, engagement rate, and organic impressions |

Step 1. Choose outcome KPIs by business model, then add diagnostics. Start with the result the client is paying you to improve, then map supporting metrics behind it.

Use a quick opening-page test: can the client answer, in about 30 seconds, what happened, what it cost, and what to do next?

Step 2. Lock definitions before you build calculated fields. A calculated field only encodes the definition you give it, so settle definitions first. Keep ROAS and ROI as separate scorecards unless the client has explicitly approved one blended business formula.
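A small worked example shows why the two scorecards should stay separate. It assumes ROAS means attributed revenue divided by ad spend, and ROI means revenue minus total cost, divided by total cost, where total cost is ad spend plus fees. Many clients calculate ROI on gross profit instead, so treat this ROI formula as a placeholder until the client approves one. All figures are invented.

```python
def roas(attributed_revenue: float, ad_spend: float) -> float:
    """Return on ad spend: revenue per unit of media spend."""
    return attributed_revenue / ad_spend


def roi(revenue: float, total_cost: float) -> float:
    """Assumed ROI definition: net return per unit of total cost."""
    return (revenue - total_cost) / total_cost


revenue = 12_000.0
ad_spend = 3_000.0
fees = 2_000.0  # hypothetical agency and tooling fees

print(roas(revenue, ad_spend))        # 4.0
print(roi(revenue, ad_spend + fees))  # 1.4
```

The same month reads as 4.0 on one scorecard and 1.4 on the other, which is exactly the gap that invites argument if the two are merged without an agreed formula.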

Before publishing any client-facing scorecard, verify:

Raw numbers from GA4, Search Console, and other platforms do not explain themselves. If you skip context checks, you can react to noise and weaken trust.

Step 3. Choose the connector path by reporting job, not tool preference. Pick the path that gives you the field coverage your KPI definitions require and a reporting process you can maintain.

| Setup path | Connector path | Typical use case | Maintenance effort | Reporting reliability |
|---|---|---|---|---|
| Native Looker Studio source | Source to Looker Studio | One primary platform or common Google sources with straightforward scorecards | Lower | Reliable when source fields already match required KPIs |
| Connector tool such as Supermetrics | Source to connector to Looker Studio | Broader source coverage or more consistent pulls across client accounts | Medium | Reliable when field mapping is documented and reviewed |
| Blended scorecard | Native and connector-fed sources blended in Looker Studio | Executive summary combining spend, site behavior, and conversion outcomes | Higher | Strong for decisions only when join keys and definitions reconcile |

Automate delivery only after manual QA passes. Scheduled emails and dashboard delivery improve consistency, but they will also repeat bad logic if your scorecard is wrong.
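Before trusting a blended scorecard, it helps to see which join keys fail to match. The Python sketch below mimics an inner join on date between a spend source and a revenue source and lists the dates that appear on only one side. The row shapes and field names are assumptions, and this is not how Looker Studio implements blends internally.

```python
from typing import Dict, List, Optional, Tuple


def blend_on_date(
    spend_rows: List[Dict], revenue_rows: List[Dict]
) -> Tuple[List[Dict], List[str]]:
    """Inner-join spend and revenue by date; also return dates found on only one side."""
    spend = {r["date"]: r["cost"] for r in spend_rows}
    revenue = {r["date"]: r["revenue"] for r in revenue_rows}
    matched = sorted(spend.keys() & revenue.keys())
    unmatched = sorted(spend.keys() ^ revenue.keys())
    blended = []
    for d in matched:
        ratio: Optional[float] = revenue[d] / spend[d] if spend[d] else None
        blended.append({"date": d, "cost": spend[d], "revenue": revenue[d], "roas": ratio})
    return blended, unmatched


# Hypothetical rows from an ad platform and a store backend.
ads = [{"date": "2024-05-01", "cost": 100.0},
       {"date": "2024-05-02", "cost": 200.0},
       {"date": "2024-05-03", "cost": 150.0}]
shop = [{"date": "2024-05-01", "revenue": 400.0},
        {"date": "2024-05-02", "revenue": 300.0},
        {"date": "2024-05-04", "revenue": 250.0}]

rows, gaps = blend_on_date(ads, shop)
if gaps:
    print("Treat blended ROAS as directional; unmatched dates:", gaps)
```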

Step 4. Make every major chart follow Problem-Action-Result. Use one annotation workflow across the report:

Then use a short monthly narrative: "We saw [problem/opportunity]. We changed [action] on [date]. The early result is [movement] against [outcome KPI]. Next month we recommend [decision]."
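If you generate commentary drafts programmatically, one option is to treat that narrative as a template that refuses to render with empty slots. This is a minimal sketch; the `monthly_narrative` helper and the sample values are hypothetical.

```python
NARRATIVE = (
    "We saw {problem}. We changed {action} on {date}. "
    "The early result is {movement} against {kpi}. "
    "Next month we recommend {decision}."
)
FIELDS = ("problem", "action", "date", "movement", "kpi", "decision")


def monthly_narrative(**values: str) -> str:
    """Fill the narrative template, refusing to render if any slot is empty."""
    missing = [f for f in FIELDS if not values.get(f)]
    if missing:
        raise ValueError("Narrative incomplete; missing: " + ", ".join(missing))
    return NARRATIVE.format(**values)


# Sample values are made up for illustration.
print(monthly_narrative(
    problem="rising cost per lead on branded search",
    action="bid caps",
    date="March 4",
    movement="a lower cost per lead",
    kpi="the cost-per-lead target",
    decision="extending the same caps to non-branded campaigns",
))
```

Failing loudly on a missing field keeps a half-written narrative from going out as if it were finished.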

This keeps multi-source reporting decision-ready instead of chart-heavy. You might also find this useful: SEO Client Reporting That Drives Better Client Decisions.

Use your reporting system as the operating record for scope decisions, not just a place to display results. You protect margin when the dashboard, your agreement, and client communication all reference the same source of truth.

Choose one named dashboard and repeat that exact name and link in every working document: proposal/SOW, kickoff notes, review agenda, and any scope-change request. If you are inconsistent here, side exports and one-off screenshots start competing with your core record.

Use this implementation checklist:

Quick validation:

If either answer is unclear, decision quality drops and reporting debates replace planning.

Define boundaries in the report so scope changes are handled as decisions, not surprises.

| Engagement setup | Boundary metric to show | Alert trigger | Follow-up action |
|---|---|---|---|
| Retainer | Active priorities and work queue status | A new request would displace agreed priorities or require new tracking inputs | Ask the client to swap priorities or approve added scope |
| Fixed-scope project | Deliverables, milestone status, and revision status | A request adds deliverables, changes success criteria, or expands measurement needs | Route the request through a scope-change workflow before execution |
| Audit or sprint | Agreed outputs and review rounds | The client requests implementation, ongoing monitoring, or custom reporting outside the original output | Convert it into a follow-on engagement with a separate plan |
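
If you tag incoming requests, the boundary table can double as a simple rule set. The Python sketch below paraphrases the alert triggers as tags; the tag names and the `scope_check` helper are hypothetical, and the tagging itself still depends on your judgment.

```python
from typing import Iterable, List, Optional, Tuple

# Alert triggers per engagement type, paraphrasing the boundary table (tag names are hypothetical).
TRIGGERS = {
    "retainer": {"displaces_priorities", "needs_new_tracking"},
    "fixed_scope": {"adds_deliverables", "changes_success_criteria", "expands_measurement"},
    "audit_sprint": {"requests_implementation", "requests_monitoring", "requests_custom_reporting"},
}
FOLLOW_UP = {
    "retainer": "Ask the client to swap priorities or approve added scope",
    "fixed_scope": "Route the request through a scope-change workflow before execution",
    "audit_sprint": "Convert it into a follow-on engagement with a separate plan",
}


def scope_check(engagement: str, request_tags: Iterable[str]) -> Tuple[Optional[str], List[str]]:
    """Return (follow-up action, triggered tags), or (None, []) if the request fits scope."""
    hits = sorted(TRIGGERS[engagement] & set(request_tags))
    return (FOLLOW_UP[engagement], hits) if hits else (None, [])


print(scope_check("fixed_scope", ["adds_deliverables", "copy_fix"]))
```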

Reusable script framework:

Treat access governance as a repeatable operating habit. Tool choice will vary by stack, budget, and growth stage, so keep your process explicit and simple.

If your reporting depends on sources like GA4 and GSC, maintain a lightweight access log:

For client offboarding, complete the same checklist each time:

Keep a short report footer to reinforce trust through process:

Close each review with a prioritized action list so the dashboard stays operational instead of archival.

If you want a deeper dive, read The Best Analytics Tools for Your Freelance Website.

Use your monthly report to drive the next decision, not just recap performance. In each review, move in order: what happened, why it happened, what should happen next. Your dashboard can automate compilation, but you still need to interpret the signal and recommend the action.

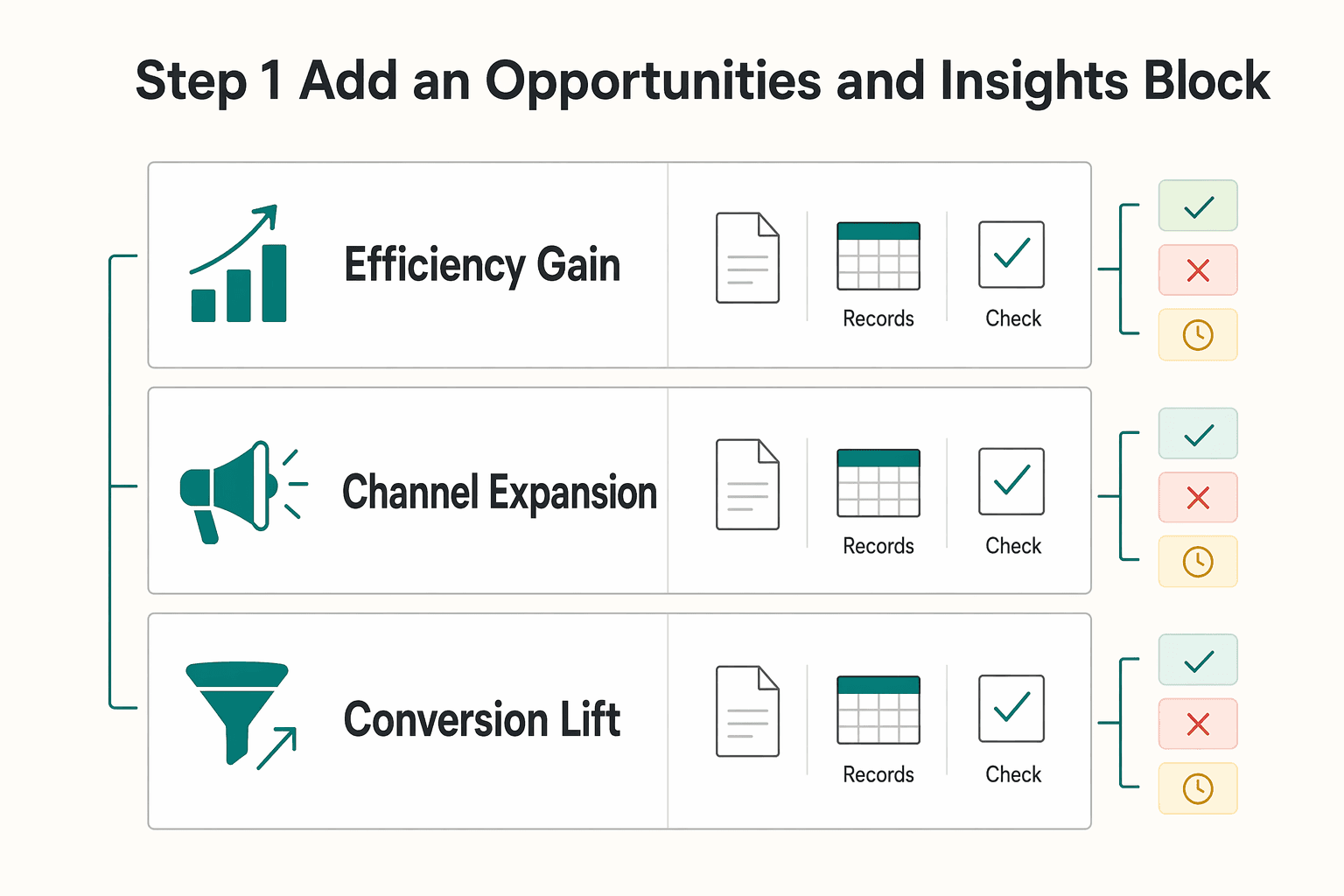

Add one fixed summary block called Opportunities and Insights and complete it every cycle. Keep the same four fields so each recommendation is decision-ready:

Write it so the client can approve, reject, or defer without extra clarification. Focus on outcomes and business impact, not just activity metrics.

| Opportunity type | Required inputs | Confidence level | Expected outcome |
|---|---|---|---|
| Efficiency gain | Current spend, waste indicators, baseline CPA/CPL, current benchmark (verified against its source) | Set after data-quality and stability check | Better budget use or lower waste |
| Channel expansion | Current channel mix, assisted-conversion view, source/segment trend, current benchmark (verified against its source) | Set after enough history is confirmed | Qualified demand from an underused channel |
| Conversion lift | Funnel or landing-page data, current conversion rate, friction points, current benchmark (verified against its source) | Set after tracking reliability is confirmed | More conversions from existing traffic |

Use a simple quality check before presenting any recommendation: include the source, date range, and benchmark note. If those are missing, treat it as a draft, not a proposal.
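That draft-versus-proposal rule is easy to encode if recommendations live in a structured doc or sheet. A minimal sketch, assuming each recommendation is a dictionary with the hypothetical keys shown:

```python
from typing import Dict

REQUIRED_FIELDS = ("source", "date_range", "benchmark_note")


def recommendation_status(rec: Dict[str, str]) -> str:
    """Label a recommendation 'proposal' only if source, date range, and benchmark note are present."""
    missing = [f for f in REQUIRED_FIELDS if not rec.get(f)]
    if missing:
        return "draft (missing: " + ", ".join(missing) + ")"
    return "proposal"


print(recommendation_status({"source": "GA4", "date_range": "2024-04-01..2024-04-30"}))
# draft (missing: benchmark_note)
```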

Trend analysis is useful only when it triggers a concrete test plan. Use this workflow each month:

| Trigger | Response |
|---|---|
| Plateau in a core metric | Propose one new growth input (for example: creative refresh, landing-page variant, or nurture sequence test) |
| Demand shift by segment, geography, or source | Propose a targeted budget or messaging adjustment for that segment |
| Mix changes without outcome gains | Pause expansion and diagnose quality before recommending more spend |

For every response, define owner, timeline, and success measure. If those are not clear, hold the upsell recommendation until they are.
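The trigger table plus the owner, timeline, and success-measure rule can be sketched as a single lookup with a hold condition. The trigger keys, the `ActionPlan` class, and the sample values are hypothetical.

```python
from dataclasses import dataclass

RESPONSES = {
    "plateau": "Propose one new growth input",
    "demand_shift": "Propose a targeted budget or messaging adjustment for that segment",
    "mix_change_without_gain": "Pause expansion and diagnose quality before recommending more spend",
}


@dataclass
class ActionPlan:
    trigger: str
    owner: str = ""
    timeline: str = ""
    success_measure: str = ""


def next_step(plan: ActionPlan) -> str:
    """Return the mapped response, or hold it if owner, timeline, or success measure is missing."""
    if not (plan.owner and plan.timeline and plan.success_measure):
        return "Hold: define owner, timeline, and success measure first"
    return RESPONSES[plan.trigger]


print(next_step(ActionPlan("plateau", "Dana", "6 weeks", "CPL below baseline")))
```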

Build a private scenario version of your report before you pitch additional scope. This keeps the recommendation risk-aware and easier to approve.

Include four parts:

Present scenario ranges instead of a single-point forecast so your recommendation stays grounded in uncertainty and execution reality. If you want your commercial model to match this approach, read Value-Based Pricing: A Freelancer's Guide.
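A scenario range can be as simple as applying low, base, and high lift assumptions to one baseline. The sketch below does exactly that; the baseline and the lift percentages are invented placeholders, and real ranges should come from the client's history.

```python
from typing import Dict


def scenario_range(baseline: float, low: float, base: float, high: float) -> Dict[str, float]:
    """Apply assumed fractional lifts to a baseline and return a low/base/high range."""
    if not low <= base <= high:
        raise ValueError("Expected low <= base <= high")
    return {name: round(baseline * (1 + lift), 2)
            for name, lift in (("low", low), ("base", base), ("high", high))}


# Hypothetical: 200 qualified leads per month, with assumed lifts of -5%, +10%, +25%.
print(scenario_range(200, -0.05, 0.10, 0.25))
# {'low': 190.0, 'base': 220.0, 'high': 250.0}
```

Letting the low case go below the baseline keeps the downside visible instead of implying every scenario is a win.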

This pairs well with our guide on How to Create a Social Media Report for a Client.

To scale reporting without quality drift, run one standard Looker Studio system: a master template, a phased client handoff, and a recurring quality-control loop.

Keep one master report for your global defaults, then document what can vary by client.

Before rolling out template changes, document your approval path and test each change on a copy first. Name who approves changes, and confirm that role against your current approval records before anyone follows the process.

| Update method | Typical risk to existing reports | Typical effort | When to use |
|---|---|---|---|
| Shared data source update | Higher (one change can affect many reports) | Lower after setup | Standard metric logic you want consistent across clients |
| Per-report edit | Lower blast radius | Higher over time | Client exceptions or one-off presentation needs |
| Connector-level change | Medium to high (upstream schema/connection changes can alter data) | Medium | Reconnects, broken fields, or source-structure changes |

Use the same handoff sequence for every client so setup errors do not turn into reporting errors.

Before each send, run pre-send checks: date controls, connector status, and headline metric alignment with source platforms. Then keep a weekly 30-minute anomaly review to catch bottlenecks or breaks early.
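The three pre-send checks can be sketched as one function that returns a list of problems. This assumes you already have connector status and source-platform totals in hand; the 2% tolerance is an assumption to agree with the client, and all names and numbers are hypothetical.

```python
from datetime import date
from typing import Dict, List, Tuple

DateRange = Tuple[date, date]


def pre_send_checks(
    report_range: DateRange,
    expected_range: DateRange,
    connectors_ok: Dict[str, bool],
    headline: Dict[str, float],
    source_totals: Dict[str, float],
    tolerance: float = 0.02,  # assumed allowable relative gap vs. the source platform
) -> List[str]:
    """Return a list of problems; an empty list means the report is clear to send."""
    issues = []
    if report_range != expected_range:
        issues.append("date range mismatch")
    issues += [f"connector down: {name}" for name, ok in connectors_ok.items() if not ok]
    for metric, value in headline.items():
        source = source_totals.get(metric)
        if source is None:
            issues.append(f"no source total for {metric}")
            continue
        gap = abs(value - source) / abs(source) if source else (0.0 if value == 0 else float("inf"))
        if gap > tolerance:
            issues.append(f"{metric} differs from source by {gap:.1%}")
    return issues


april = (date(2024, 4, 1), date(2024, 4, 30))
print(pre_send_checks(
    april, april,
    {"GA4": True, "Google Ads": False},
    headline={"spend": 1000.0, "conversions": 50.0},
    source_totals={"spend": 1010.0, "conversions": 60.0},
))
```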

If data fails, use a simple escalation path: pause commentary on affected metrics, log the issue, assign an owner, notify the client about impact, and set the next recheck time. This keeps reporting decision-ready instead of reactive.
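One way to keep that escalation path consistent is a structured log entry that carries the paused-commentary flag, the owner, the client notice, and the next recheck time. The `DataIssue` class, the 24-hour default, and the sample values are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class DataIssue:
    """One entry in a hypothetical data-incident log."""
    metric: str
    description: str
    owner: str
    client_notice: str  # what you told the client about impact
    logged_at: datetime
    recheck_after: timedelta = timedelta(hours=24)  # assumed default interval
    commentary_paused: bool = True

    @property
    def next_recheck(self) -> datetime:
        return self.logged_at + self.recheck_after


# Sample entry; all values are made up.
issue = DataIssue(
    metric="Conversions",
    description="Connector returned no rows after re-authentication",
    owner="Sam",
    client_notice="Conversion commentary paused until the connector is restored",
    logged_at=datetime(2024, 5, 1, 9, 0),
)
print(issue.next_recheck)  # 2024-05-02 09:00:00
```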

Done consistently, this gives you fewer reporting errors, clearer expectations, and easier scaling as your client load grows.


Once you have chosen the lightest setup that still holds up, the job is straightforward: repeat the same client-facing sequence every cycle. Automation can compile the data, but your reputation is built on how you interpret it, define the reporting record, and set the agenda for what happens next.

Prove outcomes first. Start from one standard template in Looker Studio. Make sure every delivery answers the same three questions: what happened, why it happened, and what should happen next. The checkpoint is simple. If a client can only see scorecards and charts, the report is not finished. Automated dashboard reports save time, but they do not explain performance on their own.

Clarify scope with the report record. Use the report as the written reference for the review period, and annotate decisions inside the report or in the meeting notes you send with it. That keeps performance conversations tied to documented results instead of memory or opinion. If you send only a dashboard link, the client may fill in the story themselves, and you can end up defending work that was never framed clearly.

Surface the next opportunity. Every cycle should end with one recommended next action, even if the answer is to stay the course for another period. That is the part of data storytelling that stays human and cannot be fully automated.

| Provider model | Partner model | Why it matters |
|---|---|---|
| Sends a dashboard | Sends an annotated reporting record | Fewer gaps in interpretation |
| Recaps metrics | Links results to causes | Better review conversations |
| Waits for requests | Proposes the next test or action | Clearer path for future work |

Implement this now: standardize one template, one insight annotation routine, and one recommendation handoff in [your PM tool or client notes doc]. Looker Studio (formerly Google Data Studio) can automate the build, but you still need to own the story.


Start with native sources when you have a simple setup such as one GA4 property, one Google Ads account, or a straightforward BigQuery table. Use a Google Sheets import or file upload when the data is occasional and you can tolerate manual updates, but expect repeat effort and potential version drift. Move to a third-party connector when you need recurring data from non-Google channels. If your report depends on heavy transformations across many sources, remember that Looker Studio is a visualization layer, not a full data integration platform.
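Those rules of thumb can be written down as a small decision function. This is a sketch of the guidance above, not a product rule: the `NATIVE` set lists only sources named in this article, so confirm it against the connector gallery before relying on it, and treat the function name and return strings as hypothetical.

```python
from typing import Iterable

# Native sources named in this article; confirm against Looker Studio's connector list.
NATIVE = {"google analytics", "google ads", "google sheets", "youtube",
          "google search console", "bigquery", "mysql", "file upload"}


def choose_path(sources: Iterable[str], recurring: bool, heavy_transforms: bool = False) -> str:
    """Suggest a connection path following the rules of thumb in the paragraph above."""
    if heavy_transforms:
        return "integrate upstream first; use Looker Studio only to visualize"
    non_native = [s for s in sources if s.lower() not in NATIVE]
    if not non_native:
        return "native connectors"
    if recurring:
        return "third-party connector"
    return "Sheets import or file upload"


print(choose_path(["Google Ads", "Meta Ads"], recurring=True))  # third-party connector
```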

Add the source only when it answers a reporting question you already care about. Click Add data and search for the source you need before you build charts around assumptions. Native options include Google Ads, Google Analytics, Google Sheets, YouTube, Google Search Console, file uploads, and MySQL. If the source is not native, that is your decision point: use a connector for recurring pulls or keep it manual if the volume is low and the reporting cadence is infrequent.

Use a blend only when you need spend and outcome metrics in the same chart or scorecard, such as ROAS or CAC. Validate your blend setup and metric definitions before you trust the result. If source definitions differ, treat the blended view as directional until you reconcile those definitions with the client.

Put the business outcome first, then pair it with one diagnostic KPI that explains movement. That keeps the report useful for decisions instead of turning it into a traffic summary.

| Business model | Primary outcome KPI | Diagnostic KPI | Reporting caveat |
|---|---|---|---|
| E-commerce | ROAS | CAC | Keep revenue and spend sources consistent or the headline ratio will drift |
| B2B/SaaS | MQL volume or cost per MQL | Lead-to-customer rate | Define what counts as an MQL in writing before you automate anything |
| Subscription or SaaS growth | CAC | Trial-to-paid rate or pipeline created | Label whether the number comes from ad platforms, analytics, or CRM to avoid false comparisons |

Create one master report, then make one copy per client account instead of trying to force many clients into one live report. Use a naming convention you can scan quickly, such as Client Name plus channel plus reporting cadence. After each copy is connected, check the date range filter and a few anchor metrics before you hand it over.
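If you create report copies in bulk, a tiny helper keeps the naming convention consistent. The separator and the allowed cadence values are assumptions; adjust them to whatever convention you settle on.

```python
import re

CADENCES = {"Weekly", "Monthly", "Quarterly"}  # assumed allowed values


def report_name(client: str, channel: str, cadence: str, sep: str = " | ") -> str:
    """Build a scannable report name: Client Name + channel + reporting cadence."""
    if cadence not in CADENCES:
        raise ValueError(f"Unknown cadence: {cadence}")
    # Collapse stray whitespace so names sort and search cleanly.
    parts = [re.sub(r"\s+", " ", p).strip() for p in (client, channel, cadence)]
    return sep.join(parts)


print(report_name("Acme  Co", "Paid Search", "Monthly"))  # Acme Co | Paid Search | Monthly
```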

Use the Share menu and keep a simple permission checklist that fits your workflow. Confirm viewer and editor access before delivery. The same menu also gives you shareable links, scheduled email delivery, and PDF downloads. Before you send the link, open it from a non-editor account so you can verify the client view and catch accidental edit access.

Looker Studio refreshes report data automatically, but the interval follows each data source's freshness setting and can differ by connector. Plan routine commentary around that cadence, and confirm it in your connector setup rather than assuming a fixed daily update. You can schedule regular email delivery from the Share menu, but a scheduled send does not prove the underlying data is fully reconciled. Do a quick pre-send check for stale fields, broken connectors, and obvious total mismatches.

Start with freshness and schema checks: confirm each source has updated and that no platform schema changes have broken fields. If the report blends multiple sources, validate the blend setup and metric definitions before interpreting gaps. If those checks do not explain the mismatch, pause commentary and inspect the upstream source or connector setup before you rewrite the story.
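The freshness and schema checks can be sketched against metadata you record yourself, such as when each source last refreshed and which fields it currently exposes. The `last_refreshed` and `fields` keys are assumptions, not a Looker Studio API, and the one-day threshold is a placeholder.

```python
from datetime import datetime, timedelta
from typing import Dict, List, Set


def source_health(
    sources: Dict[str, dict],
    expected_fields: Dict[str, Set[str]],
    now: datetime,
    max_age: timedelta = timedelta(days=1),  # assumed freshness threshold
) -> Dict[str, List[str]]:
    """Flag sources that look stale or are missing fields the report depends on."""
    problems: Dict[str, List[str]] = {}
    for name, info in sources.items():
        issues = []
        if now - info["last_refreshed"] > max_age:
            issues.append("stale")
        missing = sorted(expected_fields.get(name, set()) - set(info["fields"]))
        if missing:
            issues.append("missing fields: " + ", ".join(missing))
        if issues:
            problems[name] = issues
    return problems


now = datetime(2024, 5, 10, 9, 0)
sources = {
    "GA4": {"last_refreshed": now - timedelta(hours=2), "fields": ["date", "sessions", "conversions"]},
    "Google Ads": {"last_refreshed": now - timedelta(days=3), "fields": ["date", "clicks"]},
}
expected = {"GA4": {"date", "sessions"}, "Google Ads": {"date", "clicks", "cost"}}
print(source_health(sources, expected, now))
# {'Google Ads': ['stale', 'missing fields: cost']}
```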

A former tech COO turned 'Business-of-One' consultant, Marcus is obsessed with efficiency. He writes about optimizing workflows, leveraging technology, and building resilient systems for solo entrepreneurs.


Educational content only. Not legal, tax, or financial advice.
