Terraform gives multi-client cloud work a reviewable, repeatable way to propose, approve, apply, and hand over infrastructure changes. It reduces surprises by tying reviewed plans, readable Git history, isolated state, and thin client configurations to a clear approval trail. That makes delivery easier to scale and leaves clients with an asset they can keep operating after handoff.

--- Terraform for infrastructure as code gives cloud consultants a practical operating model. It lets you propose changes, review them, approve them, apply them, and hand them over cleanly.

That matters because cloud infrastructure consulting runs on trust, predictability, and work the client can keep operating after you leave. Surprises hurt twice: they create delivery risk and they cost money.

Seen that way, IaC is not just a tool choice. It is a way to run your practice. It helps you manage complexity, enforce standards, and build repeatable assets instead of one-off environments.

This framework rests on three pillars: Systematic Risk Mitigation, Scalable Delivery and Asset Creation, and Professional Differentiation and Client Value.

If you want fewer client surprises, every infrastructure change should be reviewable before it is applied. That is where Terraform earns its keep. The value is not convenience. It is control over desired state, approvals, and change evidence.

terraform plan as a change approval record#The habit that matters most is simple: no apply without a reviewed plan. Generate terraform plan from the exact branch and environment you intend to change. Then show the client a short approval pack instead of raw terminal output.

| Approval pack item | Detail |

|---|---|

| Environment name | The environment name |

| Commit SHA | The commit SHA |

| Summary of intent | A plain-English summary of intent |

| Plan counts | The plan's add, change, and destroy counts |

| Destructive actions | Any destructive actions, called out separately |

In most cases, that is enough.

You do not need to treat the plan as a contract. Treat it as a proposed change record and store it with your approval trail. Capture sign-off in the place the client already uses for approvals, such as a ticket, email thread, or pull request comment. Link that approval to the exact commit and plan artifact you reviewed. If a client later asks why a change happened, you have a clean chain: requested change, reviewed plan, approval, apply.

Two verification steps should be non-negotiable. First, confirm the plan was generated against the correct workspace or environment before review. Second, right before apply, re-check for unexpected destroy actions and drift, because validation is most useful during the change itself, not after deployment. A failure mode to watch for is approving one plan, then applying after extra commits land or after someone changes resources manually in the console.

Git only becomes an audit trail if you keep it readable. A commit message like "updates" or "fix stuff" is not a record. It is a memory hole. A lightweight standard works well here: every commit or PR should include three fields in plain English: intent, scope, and rollback note.

That can be as small as: "Intent: restrict public ingress to app ALB. Scope: prod networking only. Rollback: revert SG rule change in prior module version." Make review a real checkpoint, not a courtesy, and run terraform validate on every change. Those two habits alone give you a usable history instead of a junk drawer.

This matters beyond engineering neatness. As environments grow, the pressure usually shows up in the same places: audit trails, standardized deployment processes, cost visibility, and drift. If your history is vague, every one of those conversations gets slower and more personal than it should be.

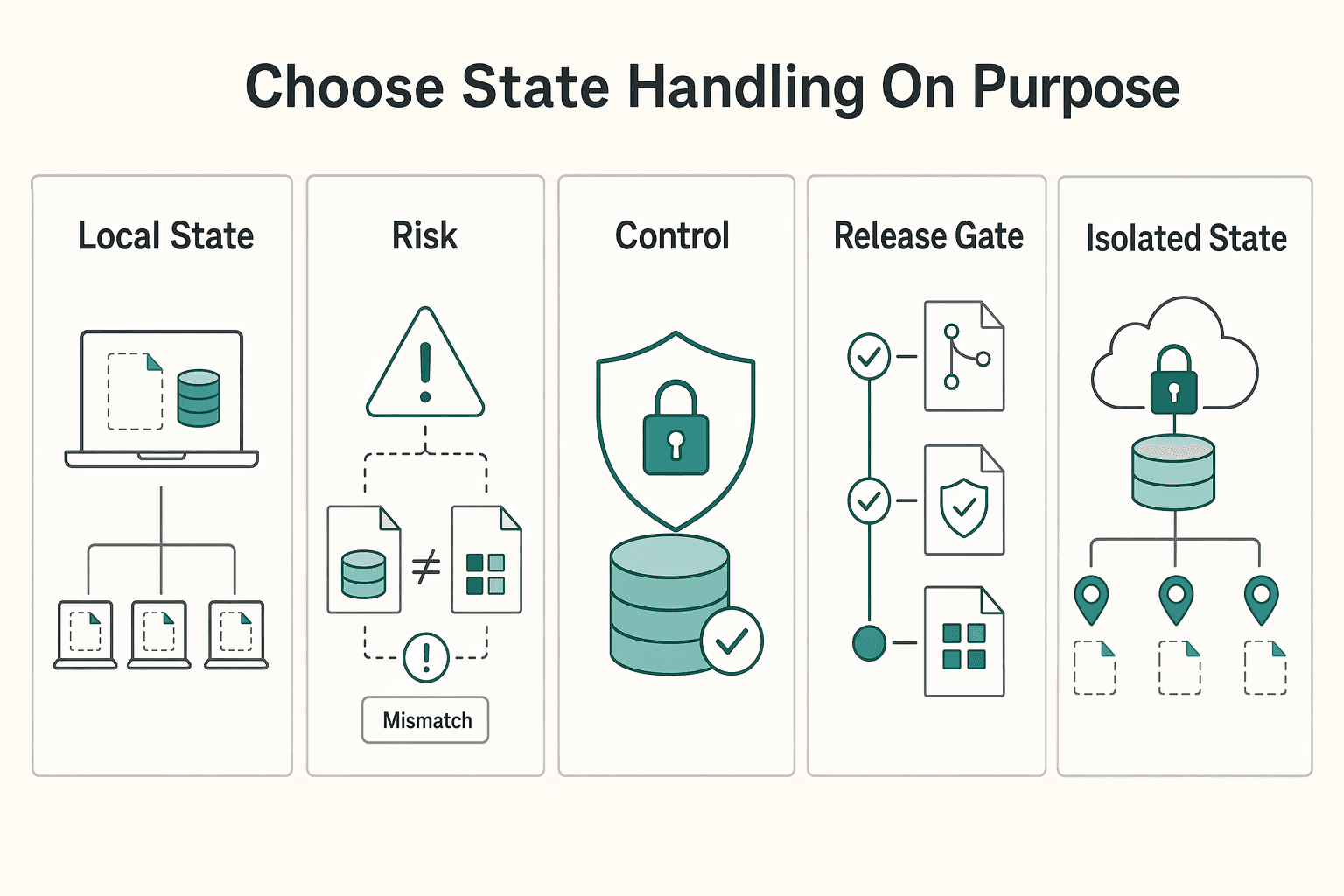

State choices affect reliability and how easy it is to explain what happened. Lock behavior can vary by backend and configuration, so verify your setup before you rely on it.

| State decision | What to verify before rollout | Why it matters |

|---|---|---|

| Local state | Backup, handoff, and recovery process | Reduces ambiguity during reviews and incident follow-up |

| Remote state without confirmed locking | How concurrent changes are prevented in practice | Avoids hidden race-condition risk from assumptions |

| Remote state with confirmed locking | Lock behavior and failure/recovery flow in your configuration | Prevents false confidence in untested controls |

| Shared state across clients or environments | Boundary and ownership model | Keeps audit and approval boundaries clear |

| Isolated state per client environment | Naming and operational overhead | Improves change ownership clarity at the cost of more setup |

The same discipline helps with compliance work. It gets stronger when you treat controls as code instead of cleanup after the fact. Put Policy-as-Code checks in the PR stage as a quality gate. Then review drift on a schedule and after urgent manual changes.

Your control mapping should be verified per client, but the operating pattern is stable. Define required checks for your organization's policies, review them before merge, and keep evidence in version control alongside approval records. Post-deployment checks still help, but on their own they are reactive.

For each client environment, use this short checklist:

terraform validate passed before mergeRelated: How to Calculate ROI on Your Freelance Marketing Efforts.

To scale delivery, separate reusable Terraform logic from client-specific configuration. Treat modules as maintained building blocks, and keep each client implementation thin, isolated, and reviewable.

Reusable modules should capture decisions you expect to repeat, not every client exception. Keep stable design choices in shared modules, and pass client-specific details in as inputs.

A practical boundary is:

That boundary protects maintainability. If client exceptions keep getting pushed into shared modules, testing gets harder, and one change can break multiple environments. If too much logic stays in each client repo, delivery drifts back to ad hoc builds, where portal changes, manual scripts, and fragmented configs raise operational risk.

Before you release a module, review its interface: keep inputs few and clearly named, keep outputs useful for downstream steps, and avoid hidden behavior that requires workaround flags.

| Delivery model | Onboarding speed | Change risk | QA effort | Handoff clarity |

|---|---|---|---|---|

| Ad hoc per-client build | Slower because each environment starts from scratch | Higher because patterns vary and manual differences creep in | Repeated from scratch for each client | Often weak because logic is spread across many places |

| Module-based delivery with thin client configs | Faster after core pieces are proven | Lower when shared code is reviewed, versioned, and reused carefully | Focused on shared modules plus targeted client checks | Stronger because shared logic and client choices are clearly separated |

Use a simple operating split: one shared module repository for common building blocks, plus one per-client configuration repository that calls those modules. The label matters less than clear ownership.

| Item | Repository |

|---|---|

| Module code | Shared module repository |

| Examples | Shared module repository |

| Release notes | Shared module repository |

| Compatibility notes | Shared module repository |

| Environment values | Per-client configuration repository |

| Provider configuration | Per-client configuration repository |

| Backend settings | Per-client configuration repository |

| Policy checks | Per-client configuration repository |

| Minimal composition for that client stack | Per-client configuration repository |

Your shared repo should hold module code, examples, release notes, and compatibility notes. Each client repo should hold environment values, provider configuration, backend settings, policy checks, and minimal composition for that client stack. Standardized module inputs and outputs keep client repos predictable.

Document ownership so changes do not stall:

This structure also helps prevent workspace sprawl and ownership gaps. If you cannot identify the owning repo, state, and CI job for an environment quickly, enforcement will slip.

Treat release discipline as an operating choice, not an afterthought. Use semantic versions, maintain a changelog, note compatibility impact, and document upgrade paths when behavior changes. Pin client environments to explicit module versions so current environments stay stable while shared modules evolve.

For multi-client work, keep isolation as a standing rule: separate repository, separate state location, and separate CI workflow or job boundary per client. That makes review and rollback clearer and limits blast radius when something fails.

Use that same discipline for secrets. Do not hardcode sensitive values in modules or client repos. Integrate CI/CD with your secrets manager, pass sensitive values at runtime, and verify how those values appear in plans, logs, and state for the resources you use. You might also find this useful: The Best Cloud Hosting Providers for SaaS Startups (AWS).

Your differentiator is the asset you leave behind: an operated, reviewable setup the client can keep using after handoff. Once Pillar 2 makes delivery repeatable, this pillar makes it trustworthy and manageable.

Define the deliverable before build starts. You are handing over a version-controlled repository with documented structure, clear module boundaries, a written environment promotion workflow, and ownership notes for shared modules, client configuration, state, and secrets.

| Repository type | Description |

|---|---|

| Private Terraform registry or local repository | Can store approved modules and providers |

| Remote repositories | Can proxy and cache external resources locally |

| Backend repository | Is specifically for Terraform state files; in Artifactory, this repository type has no remote or virtual variants |

If you use a controlled artifact store, say that plainly. A private Terraform registry or local repository can store approved modules and providers; remote repositories can proxy and cache external resources locally; and a backend repository is specifically for Terraform state files. That distinction matters because "it's in Git" does not mean everything is recoverable there, and in Artifactory the backend repository type has no remote or virtual variants.

Use a simple kickoff check: can the client name the repo, the state location, the module source, and the production approver? If not, your asset is still too dependent on one operator's laptop, tokens, or memory.

Skip dramatic promises and show operational readiness early: how scope is set, where approved modules come from, and how the first environment is created and reviewed.

| Setup model | Trust signals | Scope clarity | Time to first delivery |

|---|---|---|---|

| Ad hoc setup | Mostly your verbal confidence and past screenshots | Scope shifts as hidden decisions surface | Unclear until discovery and manual setup finish |

| Documented IaC onboarding | Repo, review flow, and planned inputs are visible early | Inputs, outputs, and change points are easier to define | More predictable because the environment path is written down |

| Controlled IaC onboarding with private modules and managed state | Client can see where modules, providers, and state are controlled | Boundaries are clearer because approved sources and ownership are explicit | More reliable because fewer external dependencies are left to chance |

Treat handoff as part of delivery, not an end-of-project courtesy. Your minimum handoff pack should include:

README with setup steps, environment notes, and command expectationsThen package follow-on services around the Terraform lifecycle rather than vague "maintenance," for example:

Keep SLA, response-time, and pricing details as explicit items to verify before publishing them. If you want a deeper dive, read Value-Based Pricing: A Freelancer's Guide.

Used well, Terraform changes your role from "set this up for me" to "make this change predictable, reviewable, and easy to hand over." That shift matters. It changes what the client is buying from you: fewer surprise changes, faster reviews, and a cleaner ownership trail as work moves from build to maintenance.

The three pillars are practical, not philosophical. Risk mitigation means changes move through pull requests, reviewers can comment on code diffs, and CI can post plan and policy check results before merge. Scalable delivery means you keep changes versioned and repeatable through the same pull-request and merge flow, with a remote state backend that uses locking. Professional differentiation means your final deliverable is not just running infrastructure, but a versioned repo, a defined state location, and a documented approval path.

In your next proposal, spell that out. Promise the operating model, not just the build:

Define success in terms the client can verify. A good checklist is simple: every production change has an approved plan, merges trigger repeatable deployment, drift is monitored, and the client can identify who reviews and who applies. One red flag to call out early is platform dependence. If your delivery depends on hosted features, verify current plan limits before you commit.

Before you send the proposal, check these four items:

That gives you a clean bridge to implementation: choose the backend, confirm the approval path, wire CI plan and policy checks, and verify any plan-tier limits before you promise hosted capabilities.

For a step-by-step walkthrough, see A Guide to Continuous Integration and Continuous Deployment (CI/CD) for SaaS.

Use Terraform when you need infrastructure changes to be reviewable before they are applied and repeatable across environments. terraform plan lets you review the exact diff, and version-controlled configuration makes handoff cleaner. That means you leave the client with an asset they can keep operating after you leave.

It helps when controls are turned into reviewed configuration and checked before merge, rather than treated as cleanup after deployment. In practice, that means versioned changes, reviewed plans before terraform apply, policy checks in the PR stage, and drift review after manual changes. It does not make you compliant on its own, and unmanaged legacy resources can weaken auditability and drift detection.

This article's operating model is Terraform-specific. It focuses on Terraform workflow checkpoints, provider-based API integrations, and context across AWS, Azure, and Google clouds. It does not provide equivalent CloudFormation evidence for a detailed side-by-side comparison, so the choice should be based on your team's operating requirements.

Use rules that prevent cross-client mistakes, unclear ownership, and state corruption. Keep a separate repository, state location, and CI boundary per client, use a stable backend with locking for shared work, and review terraform plan before terraform apply. Also avoid managing only net-new infrastructure while older manual resources stay unmanaged, because that weakens auditability and drift detection.

A career software developer and AI consultant, Kenji writes about the cutting edge of technology for freelancers. He explores new tools, in-demand skills, and the future of independent work in tech.

Includes 7 external sources outside the trusted-domain allowlist.

Educational content only. Not legal, tax, or financial advice.

Value-based pricing works when you and the client can name the business result before kickoff and agree on how progress will be judged. If that link is weak, use a tighter model first. This is not about defending one pricing philosophy over another. It is about avoiding surprises by keeping pricing, scope, delivery, and payment aligned from day one.

If you want ROI to help you decide what to keep, fix, or pause, stop treating it like a one-off formula. You need a repeatable habit you trust because the stakes are practical. Cash flow, calendar capacity, and client quality all sit downstream of these numbers.

**Pick your cloud hosting by minimizing risk first, then tuning for features.**