Use remote pair programming as a delivery method clients can evaluate, not as a coding ritual. Define Driver and Navigator accountability, select the pairing mode based on task type, and lock expectations in a statement of work covering ownership, security boundaries, acceptance, and liability language. Execute with a standard rhythm: pre-call brief, visible in-session control loop, and a concise written recap with decisions and owners. That structure makes value easier to verify through cleaner handoffs, clearer scope control, and fewer avoidable rework cycles.

If you want a budget-conscious client to say yes, don't sell remote pair programming as a coding ritual. Sell it as a way to make delivery more predictable, catch issues earlier, and avoid having one person walk away with all the context.

That framing works because the value is not "two people on one keyboard." It shows up as four outcomes a client can judge during the project: steadier delivery through real-time collaboration, fewer defects from continuous review and faster feedback, stronger knowledge continuity because context is shared while the work happens, and cleaner handoffs because both people stay close to decisions as they are made. Those claims are easier to defend than vague promises about "better collaboration."

The simplest way to position pairing is the Driver/Navigator model. One person writes code. The other reviews and guides in real time. Most clients understand the value as soon as you explain the accountability on each side.

Driver owns: writing the code.

Navigator owns: reviewing and guiding in real time while keeping the broader context in view.

Use one practical checkpoint on kickoff calls: agree on the session goal, then confirm timezone and normal daily schedule before pairing starts. Remote sessions break down for boring reasons, especially weak overlap and noisy environments. If you do not control those basics, the client can experience the method as slow and distracting instead of focused.
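The overlap check on the kickoff call can be turned into a quick calculation. The following is a minimal sketch, not a prescribed tool: the function name `overlap_minutes`, the fixed UTC offsets, and the 9-to-5 schedules are all assumptions chosen for illustration.

```python
from datetime import date, datetime, time, timedelta, timezone


def overlap_minutes(day, tz_a, hours_a, tz_b, hours_b):
    """Return the minutes both people are at work on one calendar day.

    hours_a and hours_b are (start, end) local times such as (time(9), time(17)).
    Simplification: both schedules are anchored to the same calendar date, so
    overlaps that only exist across a date boundary are not counted.
    """
    def window(tz, hours):
        start = datetime.combine(day, hours[0], tzinfo=tz)
        end = datetime.combine(day, hours[1], tzinfo=tz)
        return start, end

    a_start, a_end = window(tz_a, hours_a)
    b_start, b_end = window(tz_b, hours_b)

    # Aware datetimes compare and subtract in absolute time.
    latest_start = max(a_start, b_start)
    earliest_end = min(a_end, b_end)
    overlap = earliest_end - latest_start
    return max(0, int(overlap.total_seconds() // 60))


# Hypothetical partners: one at UTC+1, one at UTC-5, both working 9:00 to 17:00.
utc_plus_1 = timezone(timedelta(hours=1))
utc_minus_5 = timezone(timedelta(hours=-5))
shared = overlap_minutes(
    date(2024, 1, 15),
    utc_plus_1, (time(9), time(17)),
    utc_minus_5, (time(9), time(17)),
)
print(f"Shared working time: {shared} minutes")
```

Fixed offsets keep the sketch dependency-free. Passing `zoneinfo.ZoneInfo` objects instead would account for daylight saving changes.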

Pick the mode that fits the work and the people involved, not a one-size-fits-all rule.

| Mode | Best fit | Expected benefit | Main tradeoff |
|---|---|---|---|
| Driver/Navigator | Complex feature work, tricky refactors, architecture-sensitive changes | Continuous review, faster feedback loops, shared context during delivery | Benefits drop when roles or expectations are unclear |
| Ping-Pong | Test-led work, bug-prone areas, modules where checkpoints matter | Each handoff produces a concrete test artifact and rotates context across both people | Requires comfort with test-led handoffs |
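To make the Ping-Pong row concrete, here is a small sketch of two rounds as the pattern is commonly practiced: one person writes a failing test, the other makes it pass and writes the next test. The `slugify` function and both test names are hypothetical and exist only to illustrate the handoff.

```python
import re


# Round 1: person A writes this test first. It fails until B implements slugify().
def test_lowercases_and_hyphenates():
    assert slugify("Hello World") == "hello-world"


# Round 2: B makes round 1 pass, then writes the next test and hands control back to A.
def test_strips_punctuation():
    assert slugify("Ready, set, go!") == "ready-set-go"


# The implementation as it stands after both rounds.
def slugify(title):
    """Lowercase the title and join its alphanumeric runs with hyphens."""
    words = re.findall(r"[a-z0-9]+", title.lower())
    return "-".join(words)


if __name__ == "__main__":
    test_lowercases_and_hyphenates()
    test_strips_punctuation()
    print("both rounds pass")
```

Each test is the artifact of one handoff, which is what lets a client see checkpoints rather than just session hours.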

Client objections usually make more sense once you translate them into the risk behind the words.

| Objection | Underlying concern | Grounded response |
|---|---|---|
| "Sounds expensive" | "Prove this reduces downstream risk" | Real-time review, shared context, and faster feedback; value goes beyond the lines of code written during the session |
| "This looks duplicated" | "I only see hours, not avoided rework" | Point to cleaner handoffs, test artifacts in ping-pong sessions, and fewer decisions lost in private notes or one person's head |
| "Can't you just code solo and review later?" | "I think delay is cheaper than prevention" | That can be true for low-risk tasks, but on critical paths deferring review delays feedback until more assumptions are already embedded |

When a client says "sounds expensive," hear "prove this reduces downstream risk." Your answer is real-time review, shared context, and faster feedback. The value is not just the lines of code written during the session.

When they say "this looks duplicated," hear "I only see hours, not avoided rework." Point to what you can verify in the project: cleaner handoffs, test artifacts in ping-pong sessions, and fewer decisions lost in private notes or one person's head.

When they say "can't you just code solo and review later?" hear "I think delay is cheaper than prevention." That can be true for low-risk tasks. On critical paths, deferring review delays feedback until more assumptions are already embedded in the code.


Price and bill paired work by client context, not habit. Choose one path based on what the client is buying right now: recurring execution, a first engagement with open questions, or a defined outcome with clear scope.

Use a model the client can approve and you can defend: transparent, adaptable, and aligned with the value and expectations of the engagement.

| Pricing path | Use it when | Objection it helps you answer | Delivery risk coverage | Billing complexity |
|---|---|---|---|---|
| Premium blended rate or retainer | You are delivering recurring execution work, ongoing advice, or regular paired sessions | "Why not just bill solo time?" | Covers ongoing coordination, updates, and shifting priorities in one commercial structure | Moderate |
| Paid discovery | This is the first engagement and key assumptions are still untested | "I am not ready to commit to a larger scope yet." | Covers early ambiguity by forcing assumptions and decisions into a scoped artifact | Low to moderate |
| Outcome-based project fee | Scope boundaries and success criteria are clear enough to price a result | "I need budget predictability, not open-ended time billing." | Covers estimate drift better when scope control is strong | Higher |

If the client needs regular paired delivery, use a recurring structure instead of re-quoting every week. A retainer or monthly budget with a clearly labeled paired-session rate is usually easier to approve and easier to track.

Keep your value logic explicit: paired work is a different service unit, not hidden double billing. Before you set or defend the number, verify your inputs. Attendance, session goals, outcome logs, time entries, and invoice labels should all line up. Keep any multiplier range as an internal pricing note until you have verified it against the project's records or current benchmark evidence.

For a first engagement, use paid discovery to reduce scope risk without giving away free consulting. Keep it narrow, decision-oriented, and documented.

| Discovery element | What to document |
|---|---|
| Objective | Define the decision this phase must answer: feasibility, architecture direction, or implementation approach |
| Artifact produced | Commit to one concrete output: technical memo, prototype notes, backlog slice, or estimate range |
| Assumption log | Record unknowns, dependencies, and client assumptions that could change scope or pricing |
| Conversion criteria | State what must be true to proceed: scope boundaries agreed, required access in place, and next-step proposal accepted |

Keep those elements in writing so discovery does not quietly expand into unpaid implementation planning.

Make invoice lines match the way the work was delivered. Accurate, on-time invoices matter, but billing quality also depends on accurate tracking and plain descriptions that procurement and accounting can verify.

| Invoice line | What it covers | What you should be able to show |
|---|---|---|
| Solo tasks | Documentation, setup, independent implementation, follow-up changes | Time entry, task reference, deliverable or commit summary |
| Paired sessions | Driver/Navigator session time, live debugging, implementation-time review | Session date, attendees, stated objective, short outcome summary |
| Strategic oversight | Planning, architecture review, backlog shaping, risk review, async decisions | Notes, recommendation summary, linked milestone or decision record |
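One way to keep invoice lines verifiable is to check each line against the evidence listed in the table before the invoice goes out. This is a rough sketch under stated assumptions: the `InvoiceLine` type, the `kind` keys, and the evidence field names are invented for illustration and do not come from any billing tool.

```python
from dataclasses import dataclass, field

# Evidence each line type should carry, mirroring the table above.
# The key names are hypothetical labels, not fields from a real system.
REQUIRED_EVIDENCE = {
    "solo": {"time_entry", "task_reference", "deliverable_summary"},
    "paired": {"session_date", "attendees", "objective", "outcome_summary"},
    "oversight": {"notes", "recommendation_summary", "decision_record"},
}


@dataclass
class InvoiceLine:
    kind: str    # "solo", "paired", or "oversight"
    hours: float
    evidence: dict = field(default_factory=dict)


def missing_evidence(line):
    """Return the sorted evidence fields that are absent or empty on a line."""
    required = REQUIRED_EVIDENCE[line.kind]
    provided = {key for key, value in line.evidence.items() if value}
    return sorted(required - provided)


# Example: a paired session logged without its objective or outcome summary.
paired_line = InvoiceLine(
    kind="paired",
    hours=2.0,
    evidence={"session_date": "2024-03-04", "attendees": ["driver", "navigator"]},
)
print(missing_evidence(paired_line))
```

Running a check like this before invoicing surfaces gaps while the session is still fresh enough to document.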

Review this framework regularly as tools, requirements, and client expectations change. For deeper proposal design around outcomes, read Value-Based Pricing: A Freelancer's Guide. For adjacent operating guidance, see How to Manage a Global Team of Freelancers.

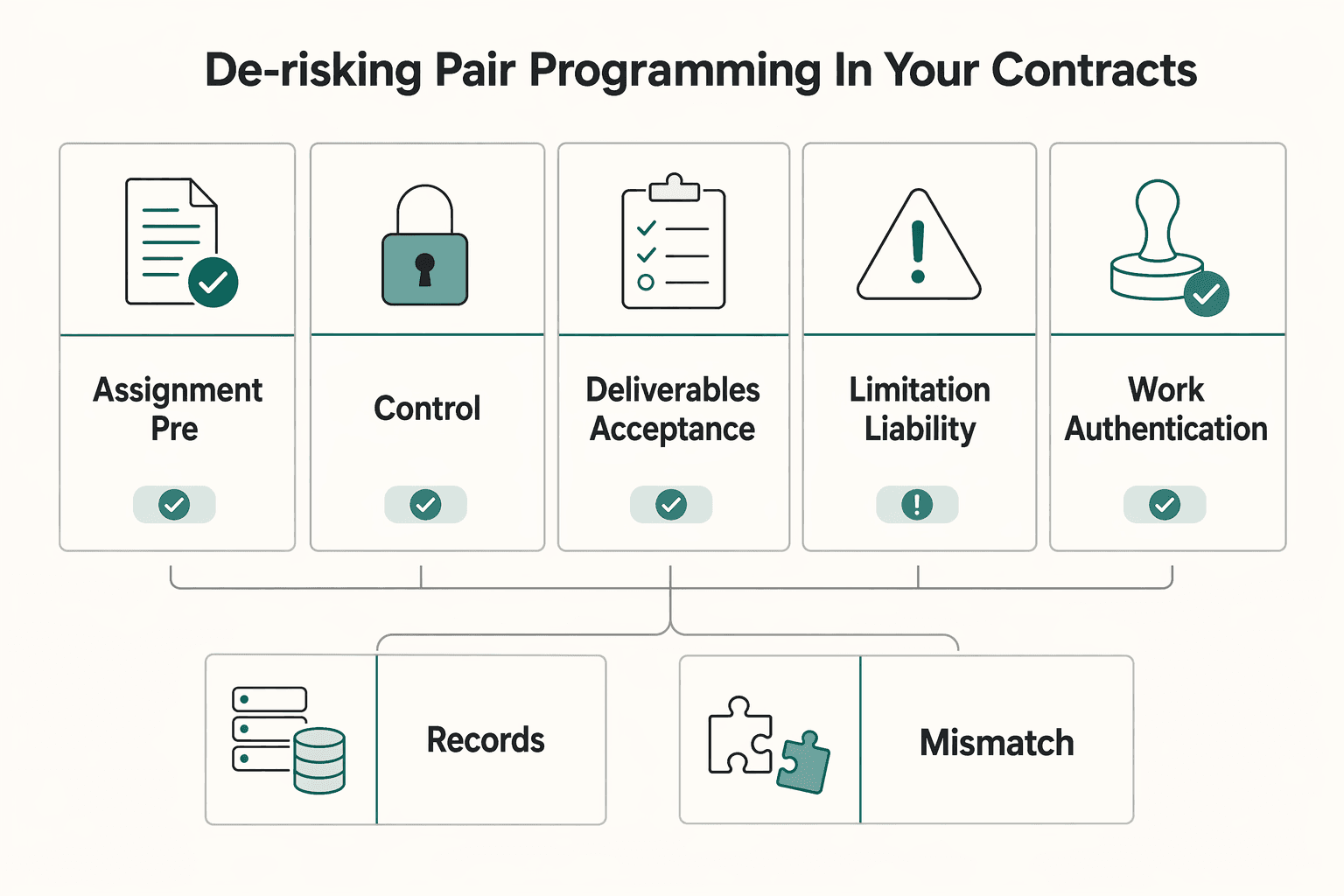

Write your SOW for disagreement, not goodwill. In remote pair programming, co-created code and decisions move quickly, and vague terms can become ownership, security, acceptance, or liability disputes.

| Clause | What to define | Risk reduced |
|---|---|---|
| IP assignment and pre-existing materials | Who owns co-created outputs and which pre-existing materials each party keeps | Ownership disputes after delivery |
| Confidentiality, environment, and access controls | Whose environment is used, which access method is approved, which collaboration tools are allowed, and who grants or revokes permissions | Unauthorized access and unclear responsibility for security boundaries |
| Deliverables and acceptance criteria | Acceptance tied to explicit outcomes and evidence, plus how change requests are documented, approved, and priced | Scope conflict and acceptance friction |
| Limitation of liability | A liability-limits clause and enforceability details confirmed with local counsel | Open-ended exposure from a defect or access incident |

Use these four clauses as your contract backbone, and have local counsel confirm final legal wording for your jurisdiction:

- IP assignment and pre-existing materials: Define who owns co-created outputs (code, tests, docs, and session artifacts), and separately list any pre-existing materials each party keeps.
- Confidentiality, environment, and access controls: State whose environment is used, which access method is approved, which collaboration tools are allowed, and who grants or revokes permissions.
- Deliverables and acceptance criteria: Tie acceptance to explicit outcomes and evidence, not effort. Because software requirements can change mid-build, define how change requests are documented, approved, and priced instead of assuming a fixed spec.
- Limitation of liability: Include a liability-limits clause and confirm enforceability details with local counsel.

Before kickoff, confirm that the security language in the contract is scope-bound. It should state:

- whether work happens in the client environment
- whether direct write access is allowed or gated behind client-side sync or approval conditions
- whether recording or logging is expected
- who receives incident escalation first

| Ambiguous SOW phrase | Clearer acceptance-based wording you can adapt |
|---|---|
| "Work on authentication" | "Authentication changes are accepted when the named branch or PR is submitted, required test evidence is attached, and behavior matches the client's written acceptance notes." |
| "Pair on the API" | "Paired sessions produce the specified endpoint change, documented in the repo and review thread, with acceptance tied to the stated response format and agreed verification step." |
| "Done when tested" | "Acceptance requires listed test evidence and any additional validation named for critical functions, since testing alone can miss bugs." |


Your SOW sets boundaries, but your operating rhythm proves value. To make a paired session worth the cost, run the same cycle every time: written pre-brief, visible control loop during the call, and outcome-first summary after it ends.

This matters even more in remote work because the value comes from live problem-solving, continuous review, and earlier error catching, not just two people on a call. You only get those outcomes when expectations, access, and decisions are explicit.

Start with a required pre-brief so the session can produce code, clarity, or a clean handoff. It should cover:

- Objective: one sentence defining what "done" means for this call.
- Scope: what is in scope, what is out of scope, and what triggers a follow-up session.
- Roles: who starts as driver, who starts as navigator, and whether knowledge transfer is a session goal.
- Environment and access: which environment will be used, who grants access, and whether permissions are already verified.
- Risks: access gaps, unresolved questions, unstable environments, missing stakeholders, or dependencies that could block progress.

Before the call, get written confirmation that repo access, environment access, and required approvals are ready. If scope is still fuzzy, put the approach, clarifications needed, assumptions, and a timeline estimate in writing. For ambiguous work, an async clarity check can expose weak instructions early; one practical pattern is a 48-hour no-questions window to test whether the brief stands on its own.
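The pre-brief can also be treated as a small readiness check that runs before the call is confirmed. The sketch below assumes a hypothetical `PreBrief` record and `readiness_gaps` helper. The field names and gap messages are illustrative, not a standard.

```python
from dataclasses import dataclass, field


@dataclass
class PreBrief:
    done_definition: str            # one sentence: what "done" means for this call
    in_scope: list
    out_of_scope: list
    first_driver: str
    first_navigator: str
    environment: str                # e.g. "client VDI" or "shared IDE session"
    access_confirmed_in_writing: bool = False
    risks: list = field(default_factory=list)


def readiness_gaps(brief):
    """Return human-readable gaps that should be closed before the session starts."""
    gaps = []
    if not brief.done_definition.strip():
        gaps.append("missing definition of done")
    if not brief.in_scope:
        gaps.append("nothing listed as in scope")
    if not brief.first_driver or not brief.first_navigator:
        gaps.append("starting roles not assigned")
    if not brief.environment:
        gaps.append("environment not named")
    if not brief.access_confirmed_in_writing:
        gaps.append("access not confirmed in writing")
    return gaps


# Example: everything is filled in except written access confirmation.
brief = PreBrief(
    done_definition="Failing checkout test passes on the feature branch.",
    in_scope=["checkout total calculation"],
    out_of_scope=["payment provider changes"],
    first_driver="contractor",
    first_navigator="client engineer",
    environment="client-managed remote desktop",
)
print(readiness_gaps(brief))
```

An empty result means the call can go ahead. Anything else becomes the agenda for an async follow-up first.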

| Setup approach | Access control model | Security posture | Latency and collaboration fit | Enterprise compatibility |
|---|---|---|---|---|
| IDE co-editing | Host grants session-level access to a shared coding session | Depends on pre-approved access, endpoint hygiene, and setup discipline before work starts | Strong when both people need to inspect and change code quickly | Varies by client policy and approved tooling |
| Screen sharing with one person typing | One side keeps direct control while the other reviews and guides | Narrower exposure because code stays with the sharer, but tool approval still matters | Good for debugging, review, and guided implementation; less fluid for shared editing | Often easier to align with standard meeting-tool policies |
| Client-managed remote desktop or VDI | Work runs inside a client-controlled environment | Useful when the client requires tighter control of code and credentials | Can feel slower for rapid co-editing if connection quality is weak | Often a practical fit when internal security rules drive tool choice |

Run a simple control loop and keep it visible. Restate the objective, agree on the first two or three agenda items, assign driver and navigator, and switch control at clear handoff points: subtask complete, blocker hit, or a deliberate knowledge-transfer moment.

Log decisions as you go: what was decided, what assumptions were made, and what still needs confirmation. When new issues appear, decide immediately whether they belong in this session. If not, move them to a parking lot with an owner and next step.

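Treat the summary as part of delivery, not admin. Send it quickly and structure it for decisions, not storytelling: state whether the objective was met, then list the decisions made, their owners, and the next steps.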

This makes value legible in the same terms engineering work is judged: what shipped, how reliably work moved, and how well others were enabled. If the objective was not met, state that directly, explain the blocker, and record what was clarified.
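The in-session decision log and the written recap can share one structure, so the summary is mostly generated from what was logged during the call. This is a minimal sketch, assuming hypothetical `SessionLog` and `ParkedItem` types. The recap layout is one possible format, not a required one.

```python
from dataclasses import dataclass, field


@dataclass
class ParkedItem:
    issue: str
    owner: str
    next_step: str


@dataclass
class SessionLog:
    objective: str
    objective_met: bool = False
    blocker: str = ""
    decisions: list = field(default_factory=list)
    open_questions: list = field(default_factory=list)
    parking_lot: list = field(default_factory=list)

    def recap(self):
        """Render an outcome-first summary: status, blocker, decisions, open items."""
        status = "met" if self.objective_met else "not met"
        lines = [f"Objective: {self.objective} ({status})"]
        if not self.objective_met and self.blocker:
            lines.append(f"Blocker: {self.blocker}")
        lines += [f"Decision: {d}" for d in self.decisions]
        lines += [f"Needs confirmation: {q}" for q in self.open_questions]
        lines += [
            f"Parked: {p.issue} (owner: {p.owner}, next: {p.next_step})"
            for p in self.parking_lot
        ]
        return "\n".join(lines)


# Example session in which the objective was not met.
log = SessionLog(objective="Add rate limiting", blocker="staging access pending")
log.decisions.append("Use a token bucket per API key")
log.parking_lot.append(ParkedItem("Flaky login test", "Sam", "open a ticket"))
print(log.recap())
```

Because an unmet objective and its blocker appear on the first lines, the recap stays direct even when the session did not go to plan.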


Done well, you are not selling coding time. You are selling a risk-reduced way of working: clearer collaboration, fewer avoidable rework loops, and a better record of why decisions were made.

That position only holds if the basics show up in client-facing behavior. Set clear scope and session boundaries up front, then follow a repeatable session discipline: prep before the call, explicit roles during it, and a short written summary after it with decisions and next steps.

In practice, this often means fewer surprises. Clients can see what happened in a session and what remains in scope. They also see that you check basics before work starts, including timezone and normal working schedule with a new partner, because remote sessions can break down quickly when overlap is poor, internet is unreliable, or one side is working in a noisy environment.

One common failure mode is early rejection when pairing feels awkward at first, so your documentation and follow-through matter more than a polished sales pitch.

Your next move is simple. Tighten your positioning statement so it focuses on better collaboration and clearer decisions, not just "pair programming." Frame proposals around outcomes and boundaries, then reinforce the work after every session with a brief recap, a decision log, and a clear handoff. Pair programming benefits are often clearer over the medium to long term, not in the first few sessions.


This guide does not offer a single pricing benchmark or multiplier for paired work. The practical requirement is to scope paired time explicitly so it is tracked, reviewed, and not treated as invisible collaboration.

Keep this simple and explicit: document how pairing will run. A useful kickoff baseline is a pairing agreement that covers duration, pair setup, story distribution, and when to switch driver and navigator roles.

Pair programming can be worth the cost, but there is no universal ROI figure to cite. Treat it as an operational decision: define scope, track outcomes, and review after a trial period.

Use pairing when the work is ambiguous, high risk, or needs live knowledge transfer; use solo execution when the work is mostly straightforward implementation. A practical start is to pair for about 50% of the work for one sprint, then review results before scaling up.

Common failure modes include partner dominance, fatigue, and too much parallel work outside the session. In remote work, implicit cues disappear, so handoffs should be explicit and verbal. If schedules barely overlap, pairing is usually ineffective because context fragments.

Use the two-role model clearly: one driver codes while the navigator keeps broader context and directs next steps. The goal is shared understanding and earlier course correction, not duplicate effort.

Do not assume one tool is universally best. Choose the setup based on your team’s access model and confirm that permissions and workflow handoffs are clear before kickoff.

This guide does not provide jurisdiction-specific legal guidance. Keep ownership and deliverables explicit in your agreement before the first session so co-created code and notes are not ambiguous.

Decide the pairing agreement up front: how long to pair, how pairs are organized, how stories are distributed, and when driver/navigator roles switch. Also agree on explicit handoff norms and overlapping collaboration time so remote sessions stay effective.

A career software developer and AI consultant, Kenji writes about the cutting edge of technology for freelancers. He explores new tools, in-demand skills, and the future of independent work in tech.


Educational content only. Not legal, tax, or financial advice.
