Yes: if you process client personal data on their instructions, you need a DPA that reflects your real data science delivery work. Lock in the Article 28(3) essentials first: role allocation, processing details, documented instructions, sub-processor authorization, and return or deletion at contract end. Then check your security commitments under Articles 28 and 32 against your actual access, storage, and vendor setup. Keep a compact evidence file so you can show what was agreed and how you operate it.

If you need a DPA as a data scientist, the goal is not just a signed document. You need a signed Data Processing Agreement (DPA) that matches the work you actually do, plus a short internal checklist you follow during delivery. The agreement sets your obligations; the checklist keeps day-to-day work inside them.

This guide is for freelancers and small consulting teams handling personal data in analytics, modeling, reporting, dashboards, or data preparation. Under GDPR, personal data is information relating to an identified or identifiable natural person, so treat the engagement as in scope whenever the dataset can point back to a person.

A usable end state is not "we have a template." It is a DPA that clearly sets:

| DPA area | What it should set |
|---|---|
| Roles | who is controller and who is processor |
| Data coverage | what personal data is covered and which data-subject categories are involved |
| Processing scope | the processing subject matter, duration, nature, and purpose |
| Responsibilities and measures | the responsibilities, liabilities, and applicable technical and organisational measures |

Article 28(3) is the anchor for this structure. If scope expands mid-project, specific terms are usually easier to apply than broad language like "data services."

If you are a freelancer receiving client extracts, working in a client warehouse, using your own analysis tools, or producing reports in shared environments, start with role clarity. Ask a simple question first: are you processing personal data on the client's behalf?

When a controller uses a processor to handle personal data on its behalf, there needs to be a written contract that sets out responsibilities and liabilities. Before you sign, map every point where the data will touch your workflow and vendors. If you cannot map it clearly, pause before accepting data.

A signed DPA is often required, but it is not the same as full compliance. Keep evidence of the steps you take to comply, such as the signed version, a scoped processing summary, and notes on your technical and organisational measures.

Watch for role mismatch early. If the contract labels you as a processor but your actual behavior sets the purposes or means of processing, the label may not match your real role. Roles should reflect what each party actually does, because every clause that follows depends on that foundation.

Get the roles right first, because role classification sets the scope of the clauses you need.

A data controller determines the purposes and means of processing personal data. A data processor processes personal data on the controller's behalf and on documented controller instructions. Labels in a template can help, but they do not settle the issue on their own.

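Use this quick role check before redlining:

- If the client decides the purposes and means of processing and you process on its behalf, you are generally the processor.
- If you decide the purposes and means, you are a controller for that processing.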

If both parties jointly determine purposes and means, treat it as a joint controller scenario and use an Article 26 arrangement instead of forcing it into a standard controller-processor DPA.

Apply the same discipline to vendors. A provider helping you process client data on the client's behalf may be a sub-processor, not just a generic third party. That means you need controller authorisation before appointing it and a written processor-to-sub-processor contract. Keep a short role map for the client, your firm, and each downstream provider. If you cannot explain that map clearly, pause before signing or taking data.
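If it helps to keep that role map in a checkable form, here is a minimal Python sketch. The `Party` class, the role strings, and the party names are hypothetical; the code only flags missing records against the rule above (a mapped role for every party, plus controller authorisation and a written contract for each sub-processor). It does not decide anyone's legal role for you.

```python
from dataclasses import dataclass

# Hypothetical role vocabulary; align it with the definitions in your contract.
VALID_ROLES = {"controller", "processor", "sub-processor", "joint controller"}


@dataclass
class Party:
    name: str
    role: str
    authorised_by_controller: bool = False  # only checked for sub-processors
    written_contract: bool = False          # processor-to-sub-processor contract


def role_map_gaps(parties: list[Party]) -> list[str]:
    """Return gaps that should pause signing or data intake."""
    gaps = []
    for party in parties:
        if party.role not in VALID_ROLES:
            gaps.append(f"{party.name}: role '{party.role}' is not mapped")
        elif party.role == "sub-processor":
            if not party.authorised_by_controller:
                gaps.append(f"{party.name}: no controller authorisation recorded")
            if not party.written_contract:
                gaps.append(f"{party.name}: no written sub-processor contract")
    return gaps


# Hypothetical engagement: names are illustrative only.
role_map = [
    Party("Client Ltd", "controller"),
    Party("Your consultancy", "processor"),
    Party("Notebook SaaS", "sub-processor", authorised_by_controller=True),
    Party("Transcription tool", "unclear"),
]
for gap in role_map_gaps(role_map):
    print(gap)
```

An empty result means every party has a mapped role and every sub-processor has both records; it does not mean the roles themselves are right.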

Use a simple default. First map roles for this project: the same organization can be a controller, a processor, or both depending on context. If you will process client personal data through your tools or any SaaS service on the client's behalf, route the project to DPA review. Under Article 28(3) GDPR / UK GDPR, processing by a processor must be governed by a contract or other legal act.

The trigger is practical. Will identifiable person-related information move through your stack while you act on client instructions? If yes, treat it as DPA scope first, then narrow only if the facts support that.

Review for a DPA when you will receive, access, store, transform, analyze, export, or share client personal data in your environment or via SaaS tools while acting for the client.

Do not assume a DPA is required when the work does not involve processing personal data on the client's behalf. If you take that position, record why.

Keep internal methodology work separate from delivery work that touches client records. If client records are uploaded, queried, cleaned, joined, or visualized in your environment while you are processing on the client's behalf, that points to Article 28 contracting.

If you conclude a project is internal-only, write a short note recording that conclusion and the facts it rests on.

Put this checkpoint into proposals and scoping rather than after-the-fact cleanup:

| Pre-sales check | Question |
|---|---|
| Role mapping | Have you mapped whether you are a controller, processor, or both for this scope? |
| Client personal data | Will you process client personal data on the client's behalf? |
| SaaS or cloud service | Will any SaaS vendor or cloud service process that personal data? |
| Documented instructions | Are you acting only on client documented instructions? |
| Subcontractors | If subcontractors are involved, is controller authorization required before engaging them? |

If personal data will be processed on the client's behalf, flag DPA review before the proposal is sent. If facts are unclear, treat it as DPA scope and narrow later.
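The pre-sales rule above can be encoded as a small function, which is handy if you track proposals in a script. This is a sketch under stated assumptions: the answer keys are hypothetical names for the table's questions, `None` means the facts are unclear, and unclear answers are treated as in scope, matching the "treat it as DPA scope and narrow later" default.

```python
from typing import Optional

# Answers to the pre-sales table: True = yes, False = no, None = unclear.
# The key names are hypothetical labels for the table's questions.
Answers = dict[str, Optional[bool]]


def dpa_review_reasons(answers: Answers) -> list[str]:
    """Return reasons to flag DPA review before sending a proposal.

    An empty list means no trigger fired; record why in that case.
    Unclear (None) or unanswered questions count as in scope.
    """
    on_behalf = answers.get("client_personal_data_on_behalf")
    if on_behalf is False:
        return []
    reasons = [
        "client personal data processed on client's behalf"
        if on_behalf
        else "personal data question unclear: treat as DPA scope"
    ]
    if answers.get("roles_mapped") is not True:
        reasons.append("roles not yet mapped for this scope")
    if answers.get("saas_or_cloud_processes_data") is not False:
        reasons.append("SaaS or cloud service may process the data")
    if answers.get("subcontractors_involved") is not False:
        reasons.append("subcontractors may need controller authorization")
    return reasons


print(dpa_review_reasons({
    "client_personal_data_on_behalf": None,
    "roles_mapped": True,
    "saas_or_cloud_processes_data": True,
    "subcontractors_involved": False,
}))
```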


Before signing, make sure your DPA package includes a clear responsibility matrix for the controller, processor, and each sub-processor. Keep it operational, not abstract. It should match how data is actually handled in delivery.

Build the matrix next to the DPA and main services agreement, and make role terms match the EU or UK data protection law definitions used in your contract. Also confirm contract order. If the DPA is supplemental and has conflict priority, align your role map there first.

Include at least:

| Setup | What to check first | Confusion to test for | What to lock in writing |
|---|---|---|---|
| Client-controlled environment | Who controls the account/environment and who gives processing instructions | Legal role is inferred from hosting setup instead of contract definitions | Access authority, export authority, approval path, deletion-request handling |
| Consultant-managed environment | Whether client personal data enters the consultant's cloud or SaaS stack | Downstream providers are not clearly mapped in the role matrix | Current provider list and role boundaries for each party |
| Hybrid shared environment | How personal data moves between client and consultant environments | Control boundaries are assumed rather than documented | Handoff points, admin boundaries, and event ownership by incident type |

Do not leave sub-processor changes to vague language. If your contract addresses those changes, state the process in clear terms.

Keep DPA and NDA roles separate in drafting. An NDA can cover confidentiality, but it does not replace DPA role and processing governance.

Document who owns common events: access approvals, export requests, deletion requests, and each type of security incident.

Define breach language clearly in the same section. A breach should track the contract definition, for example accidental or unlawful destruction, loss, alteration, or unauthorized disclosure of or access to personal data. If your DPA defines breaches this way, you can separate unsuccessful incidents with no unauthorized access from true breaches.


Once roles are mapped, scope is often where DPAs become too generic. The contract should describe the real engagement, not generic "business purposes" language. Article 28(3) requires the contract to set out subject matter, duration, nature and purpose, type of personal data, and categories of data subjects.

Make the scope schedule match your services agreement or project brief. If you are cleaning and modeling churn data, say that. If you are analyzing compensation patterns from payroll software exports, say that. "Data science support" is too vague to control later requests.

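A workable scope schedule should name:

- the subject matter and duration of the processing
- the nature and purpose of the processing
- the types of personal data involved
- the categories of data subjects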

Use concrete descriptions such as CRM exports with lead contact details for pipeline forecasting, or payroll datasets with compensation and department fields for cost analysis. You are not legally required to list every software product, but naming real sources makes scope auditable and easier to enforce.

Scope needs to be narrow enough to enforce data minimisation and storage limitation under Article 5(1)(c) and Article 5(1)(e). In practice, that means stating what data enters your environment, why it is processed, and what happens at contract end.

Run one pre-sign check. Compare the clause to the access request. If the scope says compensation bands but the export includes extra fields like home addresses or bank details, pause and update the paperwork before ingestion. Do the same for retention. If the clause is silent, deletion and return decisions can become harder to defend later.
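The field comparison in this check is simple set arithmetic. A minimal sketch, assuming you can read the export's column names before ingestion (for example, a pandas DataFrame's `columns` or a CSV header row); the field names and scope below are hypothetical:

```python
from collections.abc import Iterable


def fields_outside_scope(export_columns: Iterable[str], scoped_fields: Iterable[str]) -> list[str]:
    """Return export columns the DPA scope schedule does not cover, sorted."""
    return sorted(set(export_columns) - set(scoped_fields))


# Hypothetical scope schedule and export header for a compensation analysis.
scoped = {"employee_id", "department", "compensation_band"}
export_header = ["employee_id", "department", "compensation_band", "home_address", "bank_account"]

extra = fields_outside_scope(export_header, scoped)
if extra:
    print("Pause ingestion; update the scope schedule or drop:", extra)
```

Any non-empty result is the signal to fix the paperwork or the export before data lands in your environment.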

Keep a processor-side record of processing categories per controller, and make sure it matches the DPA schedule.

Put reuse terms in writing to avoid ambiguity. Article 5(1)(b) requires specified purposes and blocks incompatible further processing, so the contract should explicitly state whether client data may be reused for model training, internal testing, benchmarking, or later engagements.

Do not leave any of these uses implied: state for each one whether it is permitted or prohibited.

If the client wants broader reuse, require documented instructions that clearly authorize it and tie it to the stated purpose.

Treat "just one more data source" as a contract change, not a chat request. A processor should act only on documented controller instructions, so any new source, purpose, or processing step should trigger a written update.

Your change-control gate should require an updated scope schedule covering the new source, revised purpose, any new data-subject categories, retention, and any new vendor. If expansion adds a sub-processor, obtain controller authorization under Article 28 before data moves.
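A change-control gate can be written as a list of blocking conditions. The sketch below assumes a hypothetical `ScopeChange` record with one field per item in the paragraph above; the source, vendor name, and retention text are illustrative only. An empty result means the written update is complete enough for data to move.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ScopeChange:
    """Hypothetical record of one requested change to processing scope."""
    documented_instruction: bool = False   # written controller instruction on file
    new_source: Optional[str] = None
    revised_purpose: Optional[str] = None
    data_subject_categories_reviewed: bool = False
    retention: Optional[str] = None
    new_vendor: Optional[str] = None
    vendor_authorised: bool = False        # controller authorisation under Article 28


def change_blockers(change: ScopeChange) -> list[str]:
    """Return reasons the change cannot go live yet; empty means the gate passes."""
    blockers = []
    if not change.documented_instruction:
        blockers.append("no documented controller instruction")
    if change.new_source:
        if not change.revised_purpose:
            blockers.append("new source has no stated purpose")
        if not change.data_subject_categories_reviewed:
            blockers.append("data-subject categories not reviewed")
        if not change.retention:
            blockers.append("retention not set")
    if change.new_vendor and not change.vendor_authorised:
        blockers.append(f"sub-processor '{change.new_vendor}' not authorised")
    return blockers


request = ScopeChange(
    documented_instruction=True,
    new_source="support ticket export",
    revised_purpose="churn model features",
    data_subject_categories_reviewed=True,
    retention="delete 30 days after project end",  # illustrative value only
    new_vendor="Labeling SaaS",
)
print(change_blockers(request))
```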


Once scope is fixed, turn technical and organisational measures, or TOMs, into controls you can run and evidence under Articles 32 and 28, not vague "reasonable security" language. If you promise access limits, protection, monitoring, and escalation, those controls should map to every place data sits: your device, your cloud tools, client environments, and each sub-processor.

Start with four control areas: access, protection, logging, and incident response. Article 32 is risk-based, so the control level should match the data and setup, but the obligations still need to be explicit and workable.

State who can access personal data, use individual accounts, and define how access is removed when work ends or people roll off. Include regular monitoring for inappropriate or unauthorised access, not just "authorised personnel only."

For protection, Article 32 explicitly includes encryption and pseudonymisation as TOM options. Use wording you can consistently meet, such as encryption in transit where supported, encryption at rest where available in the environment, and masked or pseudonymised working data when full identifiers are not needed. Keep data minimisation practical: process only the fields required for the agreed purpose.
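One common way to produce pseudonymised working data is keyed hashing: replace each direct identifier with an HMAC of it, and keep the key separate from the working data. This is a sketch, not a prescribed method; the normalisation rule, token length, and field names are assumptions. Pseudonymised data is still personal data under GDPR, so it remains in DPA scope; the technique reduces exposure in working copies rather than taking them out of scope.

```python
import hashlib
import hmac
import secrets


def pseudonymise(identifier: str, key: bytes) -> str:
    """Map a direct identifier to a keyed HMAC-SHA256 token.

    The same identifier and key always give the same token, so joins still
    work. Without the key, nobody can recompute tokens from candidate
    identifiers to match them back.
    """
    normalised = identifier.strip().lower()  # assumption: identifiers are case-insensitive, e.g. emails
    digest = hmac.new(key, normalised.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]  # shortened for readability; keep the full digest if collisions matter


# Generate the key once and store it apart from the working data
# (for example in the client's secret store), never in the notebook or repo.
key = secrets.token_bytes(32)

rows = [
    {"email": "Ana@example.com", "plan": "pro"},
    {"email": "ana@example.com ", "plan": "pro"},
]
working = [{"customer_token": pseudonymise(r["email"], key), "plan": r["plan"]} for r in rows]
print(working[0]["customer_token"] == working[1]["customer_token"])  # True: same person, same token
```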

Use a compact TOM table to test whether your promises are true across each environment and sub-processor.

| Data location or party | Typical personal data exposure | TOM controls to state in contract or schedule | Evidence you should be able to produce |
|---|---|---|---|
| Your local workstation | Temporary extracts, cached files, notebooks, screenshots | Restricted device access, encryption where available, minimal local storage, data removal at end of engagement | Device security status record if used, local handling note, deletion checklist |
| Client-controlled environment | Query results, model outputs, dashboards | Access only under client-granted permissions, no export unless instructed, activity tied to named accounts | Client access approval record, user list, dated access review |
| Your cloud workspace or storage tool | Uploaded datasets, transformed tables, model artifacts | Role-based or equivalent access controls, logging where available, minimisation and retention limits aligned to purpose, restore capability defined | Access list, logging settings record, retention configuration |
| Sub-processor SaaS | Files, backups, service-side access depending on service | Controller authorisation under Article 28, configured vendor security settings, defined incident route | Current sub-processor list, vendor TOM summary, controller authorisation record |

If you cannot complete this table honestly for a tool, fix the setup or narrow the contract before using that tool.

Your evidence pack should be small but audit-ready. Article 28 requires processors to provide information needed to demonstrate compliance and support audits, so keep records you can share without exposing sensitive internal security details.

A practical pack can include a current sub-processor list, a TOM summary, a recent access review record, and a central log of breaches and near misses. Because Article 32 expects regular testing and evaluation, keep at least one dated record showing controls are being checked over time.

If a client asks for controls you cannot operate consistently, narrow the clause before signing. Article 33 requires notifying the controller without undue delay after becoming aware of a personal data breach, but your contract wording still has to match your real operating capability.

Use one rule. If a control depends on tools, staffing, or habits you do not have in place, rewrite it into specific obligations you can actually perform. In daily work, that means minimizing payloads, masking direct identifiers in shared samples, keeping pseudonymisation lookup data separate where used, and maintaining a clear record of exports, storage locations, and access removal.
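Minimising payloads and masking direct identifiers in shared samples can be one small helper. A minimal sketch with hypothetical field names; the placeholder string and default sample size are arbitrary choices, not a standard:

```python
def shared_sample(rows: list[dict], keep: list[str], mask: set[str], n: int = 5) -> list[dict]:
    """Build a small sample for sharing: keep only listed fields, mask direct identifiers.

    Fields not in `keep` are dropped entirely (minimised payload); fields in
    both `keep` and `mask` are replaced with a fixed placeholder.
    """
    sample = []
    for row in rows[:n]:
        sample.append({f: "[masked]" if f in mask else row.get(f) for f in keep})
    return sample


# Hypothetical extract; field names are illustrative.
rows = [
    {"customer_id": "C-1001", "email": "ana@example.com", "plan": "pro", "churned": False},
    {"customer_id": "C-1002", "email": "ben@example.com", "plan": "basic", "churned": True},
]
print(shared_sample(rows, keep=["customer_id", "plan", "churned"], mask={"customer_id"}))
```

Dropping unneeded fields first means the masking list only has to cover identifiers the recipient genuinely needs to see a column for.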


A signed Data Processing Agreement (DPA) does not, by itself, satisfy international transfer requirements. If client data will cross regions, run the transfer check before kickoff, not after procurement signs.

EU GDPR and United Kingdom (UK) transfer rules are related, but not interchangeable. UK guidance starts with "Are we making a restricted transfer?", while GDPR Chapter V allows transfers only under specific conditions. If your project touches EU and UK data, test both regimes explicitly.

Use a short pre-project check whenever data may move outside the client's home region or into a vendor environment in another country. Before work starts, confirm:

| Transfer check | What to confirm |
|---|---|
| Applicable regime | which regime applies: EU GDPR, UK GDPR, or both |
| Access location | where you will access the data from |
| Vendor or sub-processor location | where each vendor or sub-processor stores data or can access it |
| Transfer treatment | whether the move is being treated as a transfer, including a UK restricted transfer |
| Transfer basis | what transfer basis the parties are relying on, including whether adequacy is part of that assumption |

Adequacy assumptions need maintenance, not autopilot. They can be amended or withdrawn, and UK adequacy status was amended in December 2025. If your contract assumes coverage without naming the assumption and change process, expect rework later.

Transfer mistakes can become regulator-facing quickly if your records do not match reality. In the EEA, complaints can be brought to a national Data Protection Authority, or supervisory authority. In the UK, unresolved complaints can escalate to the Information Commissioner's Office (ICO).

This does not mean every complaint leads to enforcement or fines. The practical risk is documentation failure: the client says data stayed in-region, vendor logs show access elsewhere, and neither side recorded the transfer assumption.

Require both controller and processor to notify each other when transfer assumptions change. Keep the triggers operational: a new hosting region, a new sub-processor, access from a different country, or a change to the adequacy basis.

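Keep a lean evidence pack for this section: the completed transfer check, the transfer basis the parties rely on, and dated notes of any change to hosting region, sub-processors, access location, or adequacy basis.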

Decision rule for data science work: if data crosses borders between the client, you, or any vendor, pause and document the transfer basis before live data moves.
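The decision rule can be backed by a simple touchpoint list. This sketch assumes you label each place data is stored or accessed with a coarse region, grouping EEA countries as "EEA" because moves within the EEA are not third-country transfers under GDPR. The party names and recorded bases are hypothetical, and whether a move is a restricted transfer, and which basis applies, remains a legal judgment the code does not make.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DataTouchpoint:
    party: str
    region: str                           # where the party stores or accesses the data
    transfer_basis: Optional[str] = None  # what the parties recorded, e.g. "adequacy" or "SCCs"


def undocumented_transfers(client_region: str, touchpoints: list[DataTouchpoint]) -> list[str]:
    """Flag touchpoints outside the client's home region with no recorded transfer basis."""
    return [
        f"{t.party} ({t.region})"
        for t in touchpoints
        if t.region != client_region and not t.transfer_basis
    ]


# Hypothetical project setup.
touchpoints = [
    DataTouchpoint("Your laptop", "EEA"),
    DataTouchpoint("Notebook vendor", "US"),
    DataTouchpoint("Backup vendor", "UK", transfer_basis="adequacy"),
]
print(undocumented_transfers("EEA", touchpoints))  # ['Notebook vendor (US)']
```

Re-run the check whenever one of the change triggers above fires, so the recorded basis and the real setup stay in step.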

Redline liability, indemnity, and termination first, because those clauses set your real downside. Treat them as the commercial center of the DPA, not boilerplate. If they stay vague, routine mistakes can turn into exposure that has little connection to project value.

Use one practical default. If an MSA or services agreement already governs the deal, align liability there and have the DPA point to it. GDPR does not require every risk-allocation term to sit inside the DPA, and a second liability regime can create conflicts.

Read the DPA, MSA, order form, and security terms together. One drafting risk is a cap in one document, then a broad carve-out elsewhere for "any data protection breach," "any confidentiality breach," or "any breach of law," which can reopen uncapped liability.

Keep the statutory baseline in view. GDPR Article 82 ties processor liability to processor-specific failures under the Regulation or acting outside lawful controller instructions. For controllers, contract wording does not remove regulatory exposure.

| Clause area | Acceptable | Risky | Reject |
|---|---|---|---|
| Limitation of Liability | DPA follows the liability clause in the MSA or main contract, with any privacy carve-outs named and narrow | DPA has separate cap language but does not say how it interacts with the MSA, security terms, or confidentiality clauses | "Unlimited liability for any data protection, security, confidentiality, or regulatory issue" without tight definitions |
| Indemnification | Indemnity is limited to specific third-party claims tied to a defined breach, with clear triggers | Indemnity covers "losses arising from any breach" but does not define third-party versus direct claims, fault, or scope | Indemnity for any claim, loss, fine, cost, or liability connected to personal data, regardless of fault or governing law |
| Termination | Controller choice of return or deletion is explicit, with a wind-down timeline, access shutoff, and confirmation steps | Return or deletion is required but timing, revocation steps, and proof are missing | Immediate return or deletion duties that ignore practical handover, access revocation, or audit cooperation |

Define indemnity triggers narrowly. Indemnity is usually aimed at third-party claim allocation, not direct disputes between the contracting parties.

If a draft says you indemnify for "all losses arising from this agreement," check whether it is quietly replacing the liability framework. Keep the trigger, claim type, and causation explicit. Tie the wording to governing law, because broad language can be interpreted differently across jurisdictions.

Make termination operational, not symbolic. ICO guidance states that the processor must return or delete personal data at the controller's choice when the contract ends, and must submit to audits and inspections. That guidance is under review, so rely on clear contract mechanics rather than assumptions.

Spell out who decides return versus deletion, the completion timeline, when access is revoked, and how audit cooperation works in wind-down. Keep a short end-of-contract evidence pack: controller instruction, return or deletion confirmation, and a final access-revocation check.

Do not accept the template's law and forum by inertia. In a Data Processing Agreement (DPA), these terms should make enforcement predictable and practical enough that you would actually use them.

UK GDPR guidance treats Article 28 terms as minimums, which means parties can add their own terms. So while many controller and processor duties are set by GDPR, governing law, jurisdiction, and dispute process still shape how a contract dispute works in practice.

Choose governing law for predictability, not home advantage. The goal is fewer surprises when liability, termination, audit, or deletion clauses are tested.

Keep governing law aligned across the DPA, MSA, order form, and security schedule. If the MSA is under one law and the privacy addendum under another, interpretation can get harder when the contract is already under stress.

If disputes go to court, make the choice explicit and exclusive on purpose. Exclusive forum clauses are used for certainty and enforceability, not just drafting style.

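For a solo consultant, litigation cost and working language are practical constraints. Before signing, confirm in the final PDF that governing law is the same across the DPA, MSA, order form, and security schedule, that the chosen forum is stated and whether it is exclusive, and that any proceedings would run in a language you can work in.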

If either side wants arbitration, define the sequence clearly. A staged path like mediation first, then arbitration after a stated period, for example 60 or 90 days, can create a clearer escalation path.

Avoid vague "good faith discussion" wording with no timing or escalation step. If you use mediation or arbitration, say whether urgent interim measures can still be requested from a competent court where the agreed rules and applicable law permit it. This can reduce the risk that disputes delay immediate GDPR actions like access shutdown or deletion steps.

Move fast by deciding your red lines before the first redline pass. Keep one list for protections you will not trade, and one list for items you can flex on.

When legal asks for broader data-handling duties, counter with risk-based scope instead of a blanket no. Narrow duties to what you actually do in the engagement, and remove language that expands obligations beyond that scope. If the paper no longer matches the real scope, pause and fix the structure before signing.

Keep fallback language ready for clauses that usually stall procurement. For audits, a practical fallback is staged review: start with evidence review, then allow deeper review only when the processing presents significant privacy or security risk. Tie the audit's scope to your business's size and complexity and to the nature and scope of the processing.

Protect confidentiality in the same pass. Do not accept audit or risk-assessment disclosure terms that could expose sensitive detail that malicious actors could misuse. Ask for confidentiality and privilege protections over those materials, including recognition for risk assessments already done under other laws or regulations.

Before you sign, check one thing above all: your contract terms, tool setup, and day-to-day process need to match. When a controller uses a processor, a written contract is required, and it works best when you can show what was agreed, who approved it, and how you will execute it.

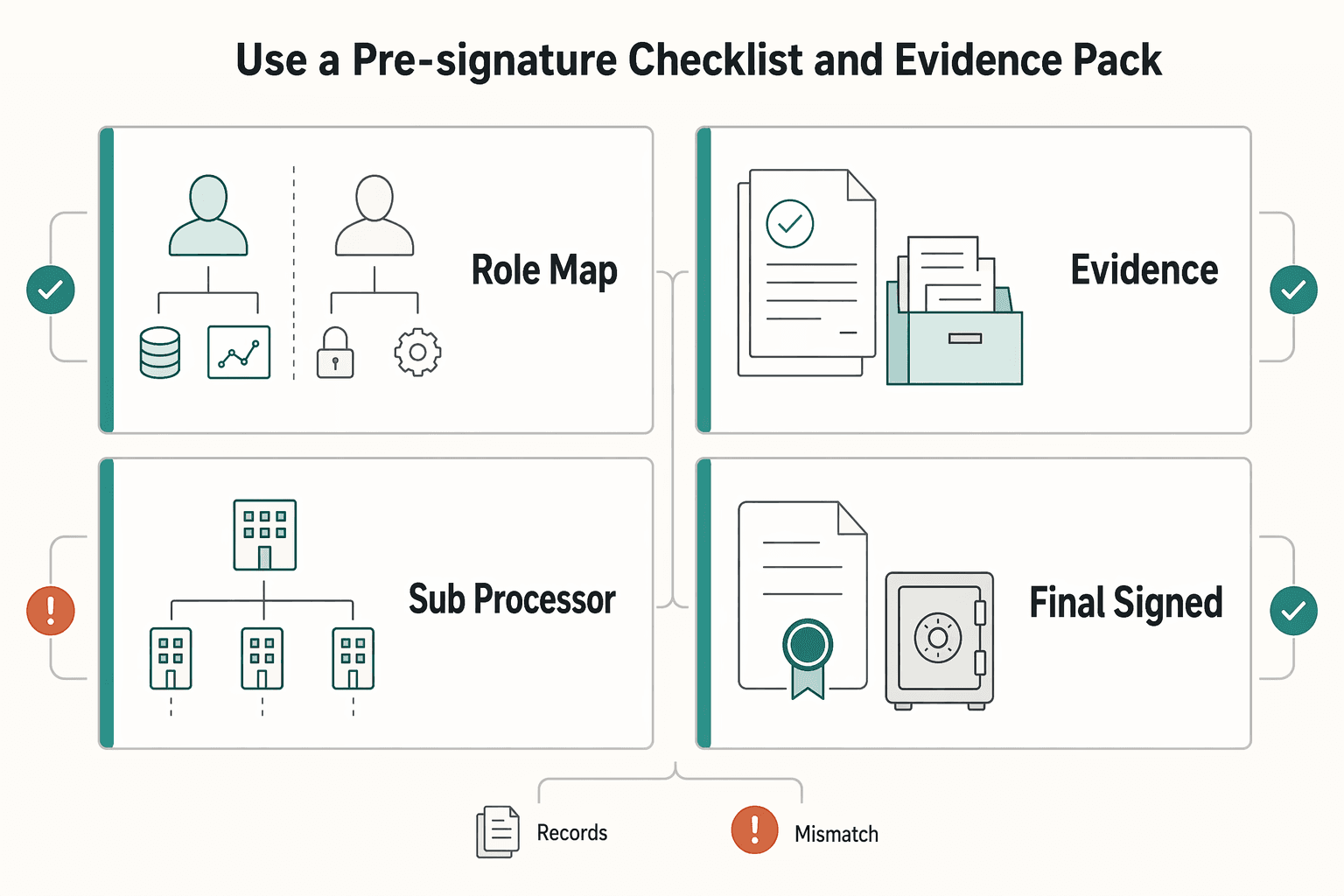

Your pre-signature evidence pack should cover the core Article 28(3) contract points in a retrievable format: role split, processing scope, security measures, and sub-processors. Keep the executed version easy to identify.

| Item | What to verify before signing | Common failure mode |
|---|---|---|
| Role map | Client is the data controller and you are the data processor, unless the facts clearly say otherwise | Controller decisions drift to you, and you take on obligations you cannot control |
| Scope record | Subject matter, duration, purpose, data types, and data subject categories are specific enough to audit | Boilerplate quietly expands the work beyond the real engagement |
| TOM evidence | Stated controls match your real access, storage, transfer, and logging setup | Contract promises controls your stack does not actually support |
| Sub-processor list | Every downstream vendor is listed, with written authorization where required | A vendor is added informally and contract compliance breaks |
| Final signed version | Executed DPA version and date are immediately identifiable | Different drafts circulate and teams follow conflicting terms |

Then pressure-test operational readiness before signature. If the DPA says you will delete or return data at contract end, notify the controller of breaches without undue delay, and support audits or inspections, make sure you can actually do that across your storage, notebooks, backups, and sub-processors.

Run three quick traces: access removal, deletion request, and incident escalation. The controller may need to notify a supervisory authority within 72 hours of becoming aware of a breach, where feasible, so your internal escalation path usually needs to move faster than vague contract language.
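The incident-escalation trace is mostly date arithmetic. A minimal sketch, assuming (1) a hypothetical internal target of 24 hours for notifying the controller after you become aware, and (2) that the controller's 72-hour window starts when your notification reaches it; check both assumptions against your DPA and the controller's own procedure.

```python
from datetime import datetime, timedelta, timezone

# Article 33(1): the controller notifies the supervisory authority within
# 72 hours of becoming aware of a breach, where feasible.
ARTICLE_33_WINDOW = timedelta(hours=72)


def breach_timeline(processor_aware: datetime, notify_target: timedelta) -> dict[str, datetime]:
    """Return the latest time for each escalation step.

    Assumes the controller's 72-hour clock starts when your notification
    reaches it. `notify_target` is your own internal or contractual target.
    """
    notify_controller_by = processor_aware + notify_target
    return {
        "notify_controller_by": notify_controller_by,
        "controller_authority_deadline": notify_controller_by + ARTICLE_33_WINDOW,
    }


aware = datetime(2025, 3, 3, 9, 0, tzinfo=timezone.utc)
timeline = breach_timeline(aware, notify_target=timedelta(hours=24))  # hypothetical 24h target
for step, when in timeline.items():
    print(step, when.isoformat())
```

The notification target is a ceiling, not a goal: "without undue delay" still applies, so a dry run that cannot reliably beat the target is a reason to tighten the path or the contract wording before signing.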

Keep the evidence pack simple and retrievable: signed DPA, saved written instructions including key emails, TOM summary, sub-processor list, deletion or return process, and audit-response materials you are prepared to share. The goal is accountability. You want to produce records quickly during renewals, audits, or dispute checks instead of rebuilding history from memory.
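One way to keep the pack retrievable is a small per-client index that records where each item lives. A sketch assuming a plain dictionary index; the item names come from the list above, and the paths are hypothetical:

```python
# Items named in the evidence pack above; each value is wherever you keep it
# (file path, document link, or ticket reference).
REQUIRED_ITEMS = (
    "signed DPA",
    "written instructions",
    "TOM summary",
    "sub-processor list",
    "deletion or return process",
    "audit-response materials",
)


def missing_evidence(pack: dict[str, str]) -> list[str]:
    """Return required items that have no recorded location yet."""
    return [item for item in REQUIRED_ITEMS if not pack.get(item)]


# Hypothetical pack index for one client engagement.
pack = {
    "signed DPA": "contracts/client-a/dpa-signed.pdf",
    "TOM summary": "evidence/client-a/tom-summary.md",
    "sub-processor list": "evidence/client-a/subprocessors.csv",
}
print(missing_evidence(pack))
```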

After signature, send a short kickoff note that converts legal language into delivery rules. Cover where data can live, approved tools, who approves new sub-processors, how instructions are recorded, what triggers a scope update, and who gets contacted first for a security issue. That is what keeps the DPA usable in delivery, not just filed away.

Related reading: How to Vet Transcription Tools for User Interviews: DPA, SOC 2 Type II, and Data Use Checks.


Before your next signature, run one live client contract through a role-and-redline check. Start with the real engagement, not a new template, and confirm that the contract matches how you actually process data.

| Check | What to confirm now | Redline if missing |
|---|---|---|
| Role map | The contract identifies who decides processing activities as controller and who acts on instructions as processor | Tighten role language so you are not given open discretion over purpose or use |
| Legal spine | If you process personal data on the client's behalf, the contract includes required processor terms | Add or fix processor terms, starting with Article 28(3)(a): processing only on documented instructions |
| Scope | Subject matter, duration, nature, and purpose are stated clearly | Replace vague service-only wording with concrete processing scope |
| Operations fit | The written obligations match your real tools, access, and delivery workflow | Edit clauses you cannot actually perform as written |

Once one contract is solid, keep the strongest clauses as modular blocks you can reuse. That approach is defensible because minimum processor terms are required, and parties can add supplemental terms. Focus your reusable blocks on scope, documented instructions, sub-processor authorization, and other required processor terms. Then review those blocks periodically so they stay current.

Reopen the DPA whenever work location or sub-processor stack changes. For UK GDPR, if the transfer test indicates a restricted transfer, each transfer needs a valid mechanism: adequacy, safeguards, or an exception. In EU framing, where no adequacy decision applies, appropriate safeguards are the key check, and SCCs can be part of that safeguard path. If you are using general written authorization for sub-processors, keep a clear notice path for intended additions or replacements.


A DPA is the written contract (or other legal act) that sets how personal data is processed when you act on a client's behalf as a processor. For data science work, it should define the processing scope (subject matter, duration, nature and purpose, data types, and data-subject categories) plus documented instructions, confidentiality, security, sub-processor controls, audit support, and end-of-contract data handling. A practical check is whether those terms match how you actually work across tools, storage, and access.

If a client uses you as a processor, a written contract or other legal act is required. A services agreement can work only if it includes the required Article 28(3) processor terms (or other legally binding arrangements that bind the processor appropriately). If required terms are missing, the arrangement may not meet UK GDPR requirements.

Use a role test first: who decides the purposes and means of processing. If the client decides and you process on their behalf, you are generally the processor. If you decide purposes and means, you are a controller. If both sides jointly decide, joint-controller rules can apply.

Include the core scope items: subject matter, duration, nature and purpose, data types, data-subject categories, and controller rights and obligations. Include minimum Article 28 terms: documented instructions, confidentiality, appropriate security, sub-processor controls, rights assistance, end-of-contract deletion or return, and audit support. If any of these are vague or missing, tighten before signing.

There is no single automatic fine outcome. But if required processor terms are missing, the controller may still be subject to corrective measures and sanctions regardless of contract terms with the processor. Individuals can complain to the ICO, and the ICO can investigate misuse of personal data and take action. If you go beyond instructions and decide purposes or means, you can be treated as a controller for that processing, with controller-level liability.

Liability caps, indemnities, and termination terms are commercial terms set by the parties, not universally mandated GDPR clauses, provided the contract as a whole complies with UK GDPR. Keep them consistent with required processor obligations, including clear end-of-contract data handling.

There is no universal best choice of governing law or forum. These are commercial terms for the parties to negotiate, provided GDPR contract requirements are still met. If EU personal data is transferred to a third country, SCCs are one available transfer mechanism; confirm which mechanism applies before signature.

Maya writes about data privacy in plain English—what to do, what to avoid, and how to build trust with clients handling sensitive data.

Priya specializes in international contract law for independent contractors. She ensures that the legal advice provided is accurate, actionable, and up-to-date with current regulations.

Educational content only. Not legal, tax, or financial advice.


